Gradient Descent For Machine Learning Machinelearningmastery

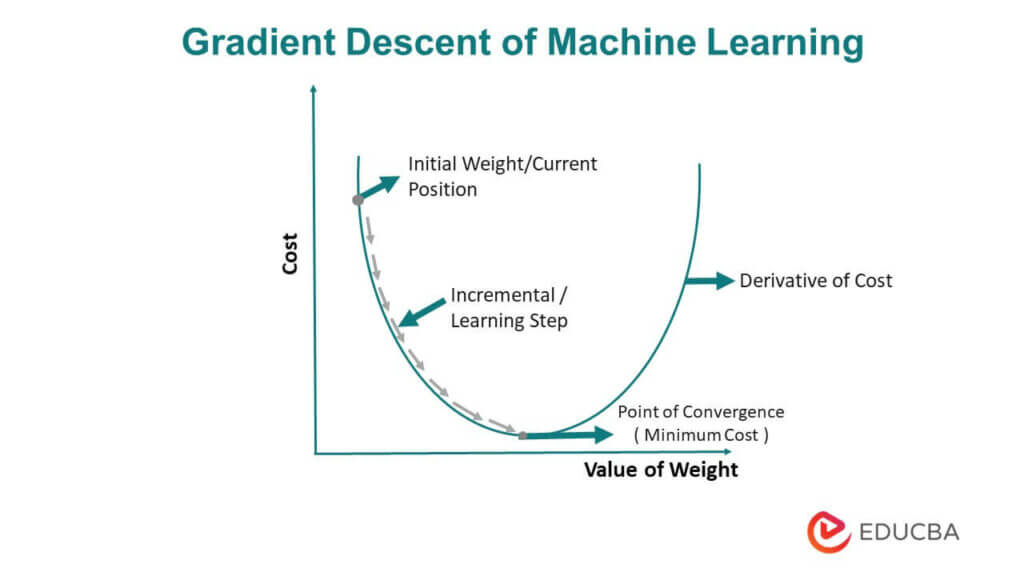

Gradient descent is a simple optimization procedure that you can use with many machine learning algorithms. It reduces the error of a model by repeatedly adjusting the model's parameters in the direction in which the error decreases fastest, which helps the model fit the training data and make more accurate predictions. Batch gradient descent refers to calculating the derivative from all of the training data before calculating an update.
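As a minimal sketch of the batch variant described above, the example below fits a straight line `y = b0 + b1 * x` by minimizing mean squared error. The function name `batch_gradient_descent`, the toy data, the learning rate, and the epoch count are all assumptions chosen for illustration; they do not come from the original article.

```python
def batch_gradient_descent(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = b0 + b1 * x by batch gradient descent on mean squared error."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Accumulate the gradient over ALL training examples first.
        grad_b0 = 0.0
        grad_b1 = 0.0
        for x, y in zip(xs, ys):
            error = (b0 + b1 * x) - y
            grad_b0 += error
            grad_b1 += error * x
        # Only then apply a single update, using the averaged gradient.
        b0 -= learning_rate * (2.0 / n) * grad_b0
        b1 -= learning_rate * (2.0 / n) * grad_b1
    return b0, b1


# Hypothetical toy data generated from y = 1 + 2x (no noise).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
b0, b1 = batch_gradient_descent(xs, ys)
print(round(b0, 4), round(b1, 4))
```

Note that the coefficients change once per pass over the data, which is what distinguishes the batch variant from the stochastic one.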

This beginner-level article provides a practical introduction to gradient descent, explaining its fundamental procedure and variations such as stochastic gradient descent, to help learners optimize machine learning model coefficients effectively.

Gradient is a commonly used term in optimization and machine learning. For example, deep learning neural networks are fit using stochastic gradient descent, and many standard optimization algorithms used to fit machine learning models use gradient information.

Stochastic gradient descent is an important and widely used algorithm in machine learning. In this post you will discover how to use stochastic gradient descent to learn the coefficients for a simple linear regression model by minimizing the error on a training dataset. Gradient descent is the optimization algorithm that enables neural networks to learn from data. Think of it as a systematic method for finding the minimum point of a function.
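The sketch below illustrates one way stochastic gradient descent could learn the two coefficients of a simple linear regression: the coefficients are updated after every single training example rather than after a full pass. The function name `sgd_linear_regression`, the toy data, the learning rate, the epoch count, and the fixed random seed are illustrative assumptions, not values from the original post.

```python
import random


def sgd_linear_regression(xs, ys, learning_rate=0.02, epochs=1000, seed=0):
    """Learn b0 and b1 for y = b0 + b1 * x with per-example updates."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    b0, b1 = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the examples in a random order
        for i in indices:
            error = (b0 + b1 * xs[i]) - ys[i]
            # Update immediately, using the error of this one example.
            b0 -= learning_rate * error
            b1 -= learning_rate * error * xs[i]
    return b0, b1


# Hypothetical toy data generated from y = 1 + 2x (no noise).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
sgd_b0, sgd_b1 = sgd_linear_regression(xs, ys)
print(round(sgd_b0, 3), round(sgd_b1, 3))
```

On noisy data the coefficients would keep fluctuating around the best fit, so in practice a smaller or decaying learning rate is often used.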

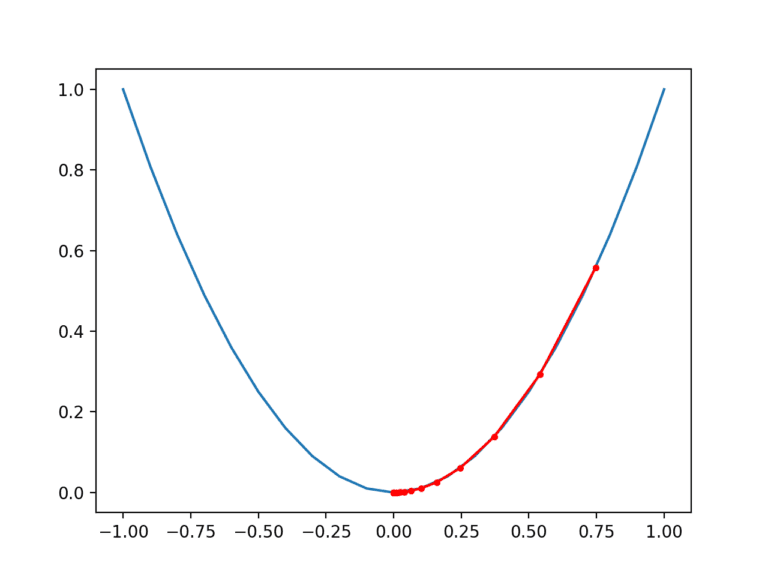

This is a quick and easy-to-follow tutorial on the gradient descent procedure, illustrated using a simple example. The algorithm also provides the basis for the widely used extension called stochastic gradient descent, used to train deep learning neural networks. In this tutorial, you will discover how to implement gradient descent optimization from scratch.

Gradient descent is an iterative optimization method used to find the minimum of an objective function. On each iteration it takes a small step in the direction of the negative gradient, and it repeats until convergence or until a stopping criterion is met. In other words, it uses the gradient of the objective function to navigate the search space. Gradient descent can be extended to use an automatically adapted step size for each input variable of the objective function; this extension is called adaptive gradients, or AdaGrad.
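To make the iterative procedure and the AdaGrad extension concrete, here is a small from-scratch sketch. It assumes a hypothetical two-variable objective, `f(x, y) = x**2 + 10 * y**2`, and uses the gradient norm falling below a tolerance as the stopping criterion. The function names, step sizes, tolerance, and starting point are illustrative choices rather than anything specified in the tutorial.

```python
import math


def gradient(x, y):
    """Gradient of the assumed objective f(x, y) = x**2 + 10 * y**2."""
    return [2.0 * x, 20.0 * y]


def norm(vector):
    return math.sqrt(sum(v * v for v in vector))


def gradient_descent(start, step_size=0.05, tol=1e-8, max_iter=10_000):
    """Plain gradient descent with one fixed step size for every variable."""
    point = list(start)
    for _ in range(max_iter):
        grad = gradient(*point)
        if norm(grad) < tol:  # stopping criterion: gradient is nearly zero
            break
        point = [p - step_size * g for p, g in zip(point, grad)]
    return point


def adagrad(start, step_size=0.5, eps=1e-8, tol=1e-8, max_iter=10_000):
    """AdaGrad: each variable's step is scaled by its own gradient history."""
    point = list(start)
    squared_sum = [0.0] * len(point)  # running sum of squared gradients
    for _ in range(max_iter):
        grad = gradient(*point)
        if norm(grad) < tol:
            break
        squared_sum = [s + g * g for s, g in zip(squared_sum, grad)]
        point = [
            p - step_size * g / (math.sqrt(s) + eps)
            for p, g, s in zip(point, grad, squared_sum)
        ]
    return point


gd_point = gradient_descent([3.0, -2.0])
ada_point = adagrad([3.0, -2.0])
print(gd_point, ada_point)
```

Both routines should end near the minimum at the origin. The difference is that AdaGrad divides each variable's step by the square root of that variable's accumulated squared gradients, so a steep direction such as `y` here automatically receives a smaller effective step than a shallow one.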
