Gradient Descent Explained

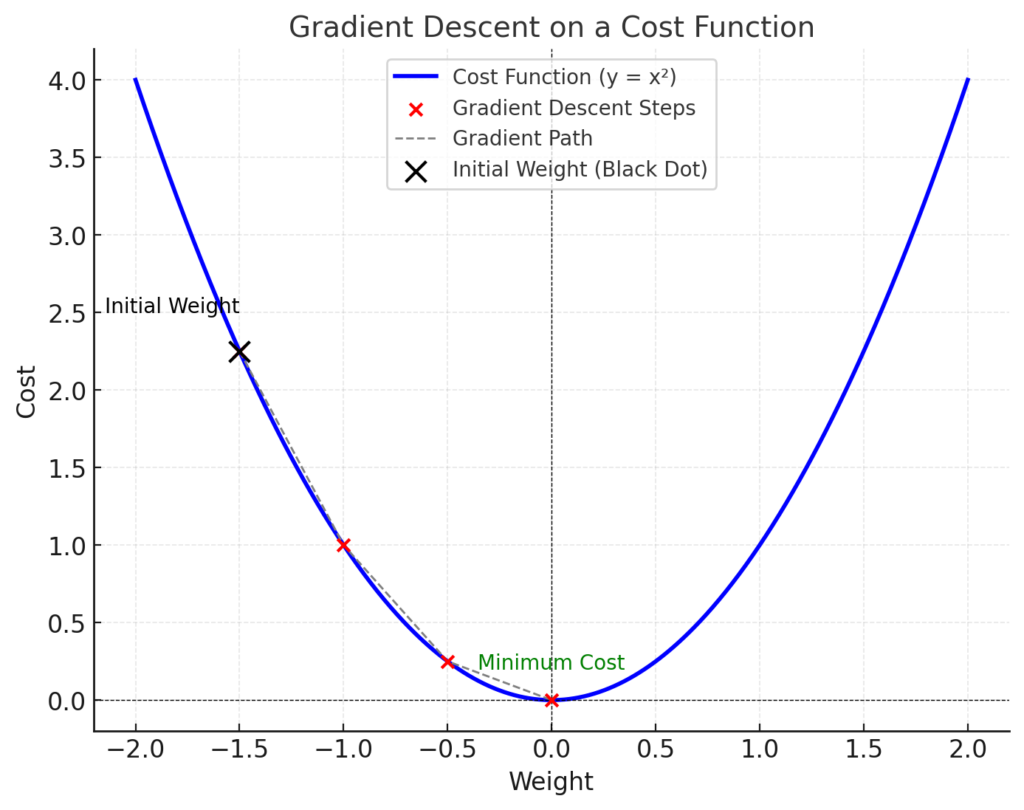

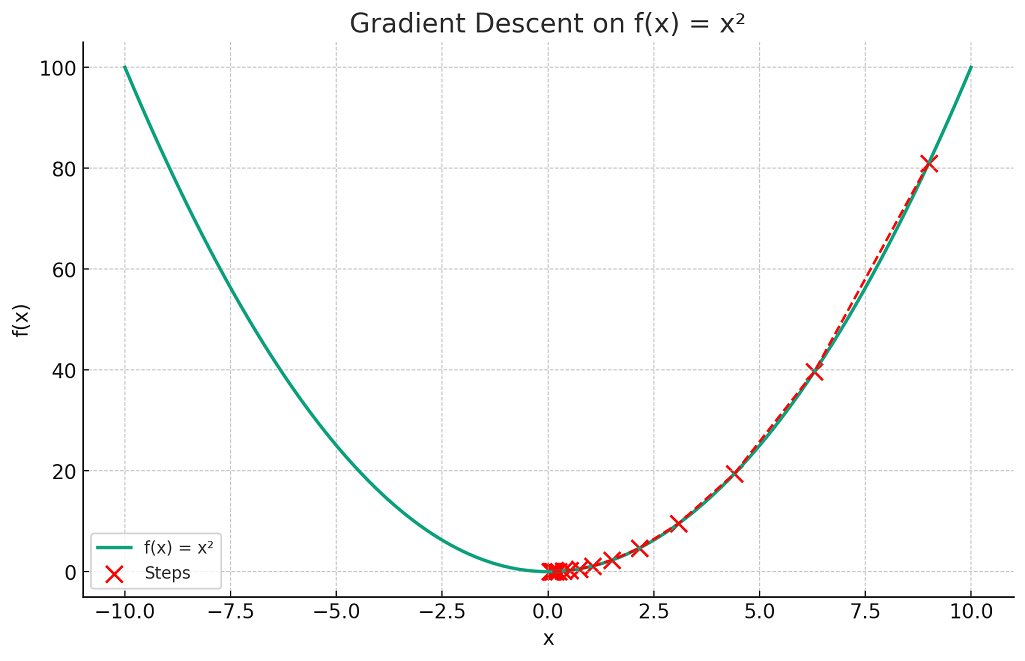

Gradient descent is an iterative optimization algorithm used to minimize a cost function by adjusting model parameters in the direction of steepest descent, that is, along the negative of the function’s gradient. More formally, it is a first-order iterative method for unconstrained mathematical optimization: it minimizes a differentiable multivariate function.
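As a sketch of the update this definition describes, one step can be written as follows. The notation is an assumption made here for illustration and does not come from the text: $\theta$ stands for the parameters, $J$ for the cost function, and $\eta > 0$ for the step size (often called the learning rate).

```latex
$$
\theta_{t+1} = \theta_t - \eta \, \nabla J(\theta_t)
$$
```

The gradient $\nabla J(\theta_t)$ points in the direction of steepest ascent, so the minus sign is what moves the parameters downhill. "First-order" means that only first derivatives of $J$ are used.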

At first glance, it might sound like scary math jargon, but don’t worry. At its core, gradient descent is just a simple idea dressed up in mathematical clothing. Let’s break it down together.

One way to think about gradient descent is to start at some arbitrary point on the surface, see which direction the “hill” slopes downward most steeply, take a small step in that direction, determine the next steepest descent direction, take another small step, and so on.

Yet, despite its simplicity, the different flavours of gradient descent (batch, mini-batch, and stochastic) can behave very differently in practice. In this article, we’ll look at what gradient descent is, how it optimizes machine learning models, how these three variants work, and when to use each.
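The hill-walking picture can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the bowl-shaped `surface`, the starting point, `step_size`, and `n_steps` are all made up for the example.

```python
def surface(x, y):
    """Hypothetical bowl-shaped surface whose lowest point is at (0, 0)."""
    return x**2 + 3 * y**2


def gradient(x, y):
    """Partial derivatives of surface() with respect to x and y."""
    return 2 * x, 6 * y


def descend(x, y, step_size=0.1, n_steps=100):
    """Repeatedly take a small step in the steepest downhill direction."""
    for _ in range(n_steps):
        gx, gy = gradient(x, y)
        # The gradient points uphill, so step against it.
        x -= step_size * gx
        y -= step_size * gy
    return x, y


start = (4.0, -3.0)  # arbitrary starting point (assumed for illustration)
end = descend(*start)
print("start height:", surface(*start))
print("end height:  ", surface(*end))
```

With a step size this small the height shrinks at every step. On this particular surface a much larger step size (above roughly 0.33) would overshoot the bottom of the bowl and diverge, which is why the steps need to be small.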

What is gradient descent? Gradient descent is an optimization algorithm commonly used to train machine learning models and neural networks. It trains a model by minimizing the error between predicted and actual results, as measured by a cost (or loss) function: the model’s parameters (weights and biases) are adjusted step by step to reduce that error. Beyond the batch, stochastic, and mini-batch variants, there are also momentum-based and adaptive methods.

In summary, gradient descent is an optimization method that finds a (local) minimum of an objective function by incrementally updating its parameters in the negative direction of the function’s gradient, which is the direction of steepest descent.
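To make the weights-and-biases description and the batch, mini-batch, and stochastic variants concrete, here is a small sketch that fits a line `y = w * x + b` by gradient descent on mean squared error. The function name `train`, the data, and the hyperparameter values are assumptions chosen for illustration. The only thing that changes between the three variants is `batch_size`, the number of examples used to estimate the gradient for each update.

```python
import random


def train(xs, ys, batch_size, learning_rate=0.05, epochs=500, seed=0):
    """Fit y = w * x + b by gradient descent on mean squared error.

    batch_size == len(xs)     -> batch gradient descent
    1 < batch_size < len(xs)  -> mini-batch gradient descent
    batch_size == 1           -> stochastic gradient descent
    """
    rng = random.Random(seed)
    w, b = 0.0, 0.0  # weight and bias start at zero
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the examples in a new order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Gradient of the mean squared error over this batch only.
            grad_w = grad_b = 0.0
            for i in batch:
                error = (w * xs[i] + b) - ys[i]
                grad_w += 2 * error * xs[i] / len(batch)
                grad_b += 2 * error / len(batch)
            # Step against the gradient to reduce the error.
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


# Made-up, noise-free data drawn from y = 2x + 1.
xs = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
ys = [2 * x + 1 for x in xs]

for name, size in [("batch", len(xs)), ("mini-batch", 4), ("stochastic", 1)]:
    w, b = train(xs, ys, batch_size=size)
    print(f"{name:>10}: w = {w:.3f}, b = {b:.3f}")
```

On this tiny, noise-free data set all three variants recover roughly the same line. On real, noisy data the trade-off is that smaller batches give cheaper but noisier updates, while full-batch updates are smoother but require a pass over all the data for every step.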

