Gradient Descent Algorithm Explained With Linear Regression Example

Gradient descent is an optimization algorithm used in linear regression to find the best-fit line for the data. It works by gradually adjusting the line’s slope and intercept to reduce the difference between the actual and predicted values. Learn how gradient descent iteratively finds the weight and bias that minimize a model's loss. This page explains how the gradient descent algorithm works, and how to determine that a …
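To make "adjusting the slope and intercept" concrete, here is one common formulation. It assumes the loss is the mean squared error (MSE), which the text above does not name explicitly. Here $w$ is the weight (slope), $b$ the bias (intercept), $n$ the number of training examples, and $\alpha$ a learning rate; both $\alpha$ and the choice of MSE are assumptions introduced for illustration.

$$
\begin{aligned}
\hat{y}_i &= w x_i + b, \qquad
J(w, b) = \frac{1}{n} \sum_{i=1}^{n} \left( \hat{y}_i - y_i \right)^2 \\
\frac{\partial J}{\partial w} &= \frac{2}{n} \sum_{i=1}^{n} \left( \hat{y}_i - y_i \right) x_i, \qquad
\frac{\partial J}{\partial b} = \frac{2}{n} \sum_{i=1}^{n} \left( \hat{y}_i - y_i \right) \\
w &\leftarrow w - \alpha \frac{\partial J}{\partial w}, \qquad
b \leftarrow b - \alpha \frac{\partial J}{\partial b}
\end{aligned}
$$

Each update moves $(w, b)$ a small step against the gradient, and repeating it drives the loss toward its minimum as long as $\alpha$ is small enough.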

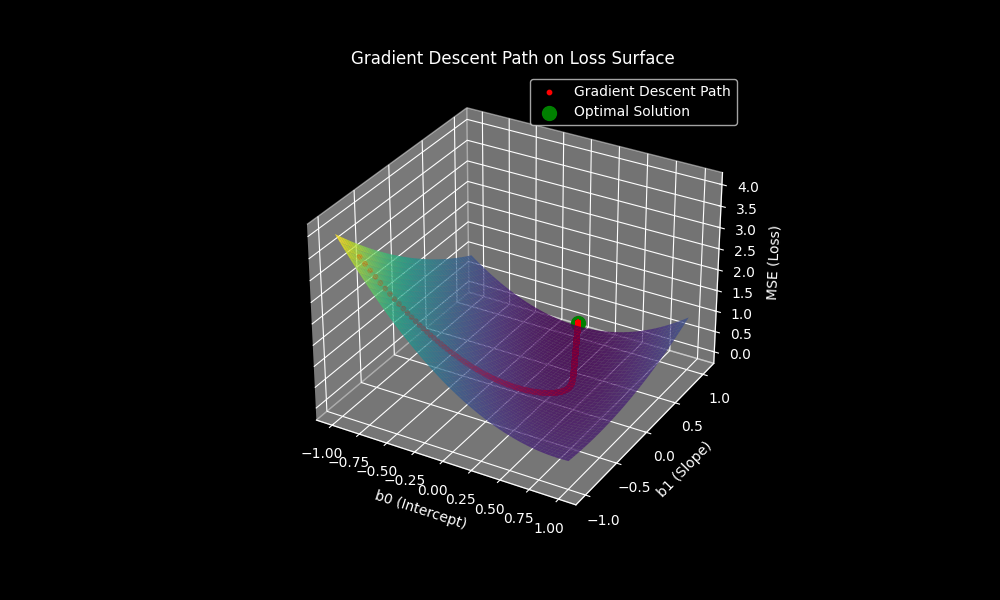

We found the best line that fits the data for our regression model using the gradient descent algorithm. This is the overall intuitive explanation of how gradient descent works.

Gradient descent (GD) is a fundamental optimization algorithm for exactly this goal: finding the model parameters that minimize the loss. In this article, we will explore gradient descent in detail, covering its types, working mechanism, and applications, and implement a simple example. In the following sections, we are going to implement linear regression step by step using just Python and NumPy. We will also learn about gradient descent, one of the most common optimization algorithms in machine learning, by deriving it from the ground up. There is an enormous and fascinating literature on the mathematical and algorithmic foundations of optimization, but for this class we will consider one of the simplest methods: gradient descent.
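A minimal sketch of the step-by-step Python and NumPy implementation described above, for a single explanatory variable. It assumes the mean squared error as the loss, starts from w = b = 0, and uses a fixed learning rate; the function name `gradient_descent`, the hyperparameter values, and the toy data are hypothetical choices for illustration, not taken from the original article.

```python
import numpy as np


def gradient_descent(x, y, learning_rate=0.01, n_iters=1000):
    """Fit y = w * x + b with batch gradient descent on the mean squared error.

    Returns the learned weight (slope) w, bias (intercept) b, and the loss
    recorded before each update.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    w, b = 0.0, 0.0  # assumption: start from a flat line through the origin
    losses = []
    for _ in range(n_iters):
        y_pred = w * x + b            # current predictions
        error = y_pred - y            # predicted minus actual
        losses.append(float(np.mean(error ** 2)))
        dw = (2.0 / n) * np.dot(error, x)  # dJ/dw for the MSE
        db = (2.0 / n) * np.sum(error)     # dJ/db for the MSE
        w -= learning_rate * dw       # step against the gradient
        b -= learning_rate * db
    return float(w), float(b), losses


# Hypothetical toy data lying exactly on y = 2x + 1.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = 2.0 * x + 1.0
w, b, losses = gradient_descent(x, y, learning_rate=0.05, n_iters=2000)
print(round(w, 3), round(b, 3))  # prints 2.0 1.0
```

The learning rate matters: for this toy data, values above roughly 0.08 make the updates overshoot and diverge. Plotting `losses` against the iteration number is a simple way to check that the loss is actually decreasing.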

Gradient descent finds the best-fit line for a training dataset iteratively, improving the slope and intercept a little with each step.

With this, you have now learned how to use gradient descent to iteratively solve linear regression. We've looked at the problem from both a mathematical and a visual perspective, and we've translated our understanding of it into working code, once using just Python and once with the help of scikit-learn.

Gradient descent is one of the most important algorithms in all of machine learning and deep learning. It is a powerful optimization algorithm that can train linear regression, logistic regression, and neural network models. Linear regression is a linear approach for modeling the relationship between a scalar dependent variable y and one or more explanatory (independent) variables denoted x.
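The definition of linear regression allows one or more explanatory variables. Below is a minimal sketch of the multi-variable case, assuming the MSE loss and stacking the explanatory variables as columns of a matrix `X`. It stops once the loss improves by less than a tolerance, one simple way to decide that gradient descent has converged, and compares the result with NumPy's closed-form least-squares solver `np.linalg.lstsq`. The function name `fit_linear_regression_gd`, the learning rate, the tolerance, and the synthetic data are illustrative assumptions, not from the original.

```python
import numpy as np


def fit_linear_regression_gd(X, y, learning_rate=0.1, max_iters=10_000, tol=1e-12):
    """Fit y = X @ w + b with batch gradient descent on the mean squared error.

    Stops early once the loss improves by less than `tol` between iterations.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    b = 0.0
    losses = []
    for _ in range(max_iters):
        error = X @ w + b - y  # residuals of the current model
        losses.append(float(np.mean(error ** 2)))
        # Convergence check: stop when the loss has essentially stopped falling.
        if len(losses) > 1 and losses[-2] - losses[-1] < tol:
            break
        grad_w = (2.0 / n_samples) * (X.T @ error)  # one partial derivative per feature
        grad_b = (2.0 / n_samples) * error.sum()
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, float(b), losses


# Hypothetical synthetic data: 3 explanatory variables plus a little noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
true_w = np.array([1.5, -2.0, 0.5])
y = X @ true_w + 3.0 + rng.normal(scale=0.1, size=200)

w, b, losses = fit_linear_regression_gd(X, y)

# Closed-form least squares for comparison (intercept as a column of ones).
X1 = np.column_stack([X, np.ones(len(X))])
coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
print(np.round(w, 3), round(b, 3))
print(np.round(coef, 3))
```

Both approaches should agree closely here. For small problems the closed-form solver is usually the simpler choice; the appeal of gradient descent is that the same update loop carries over to models without a closed-form solution.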
