GPT Parser

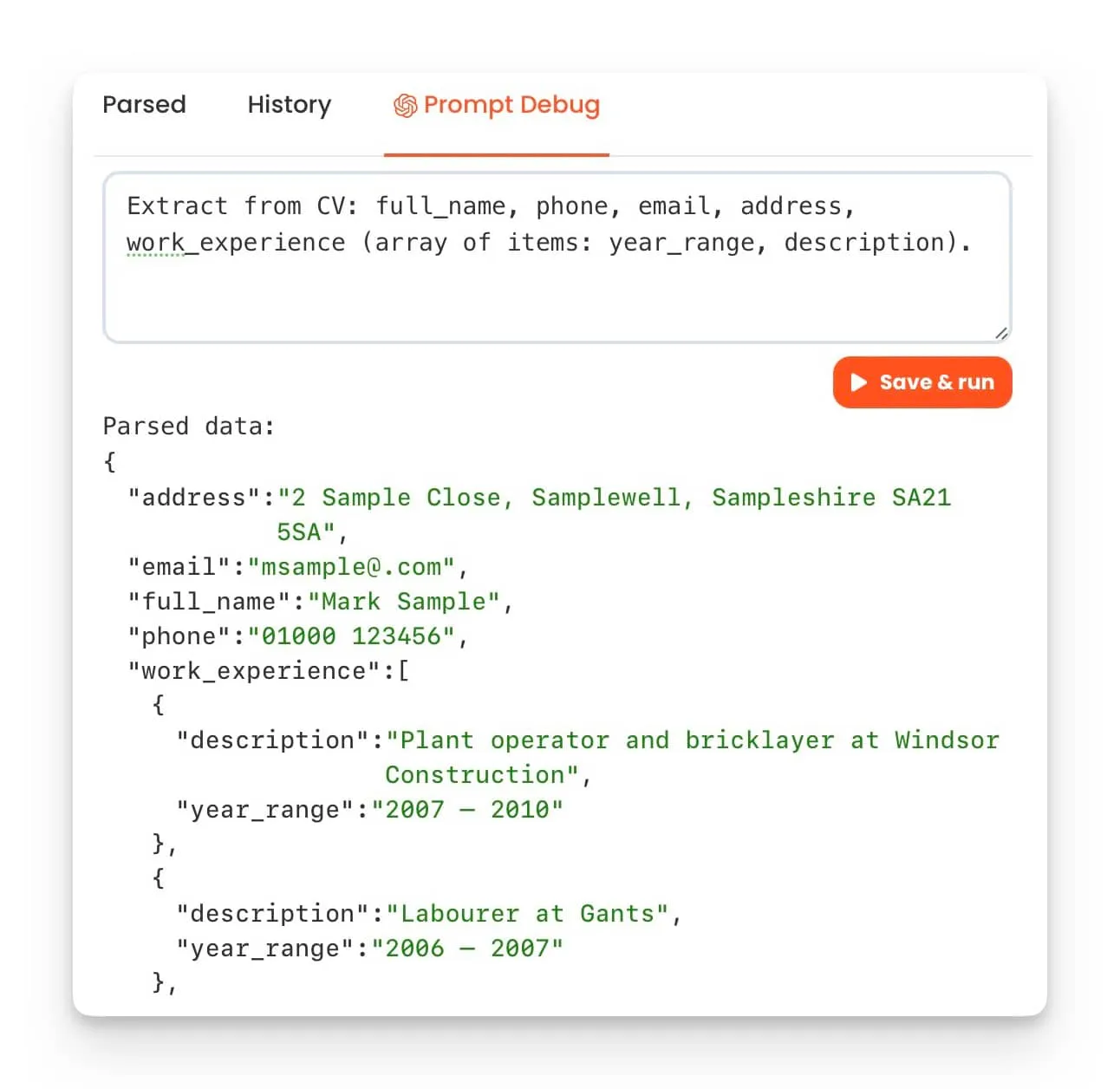

GPT Parser | Parsio

Describe what you need, and Parsio's GPT parser does the rest. Use plain-English prompts to extract structured data from emails, PDFs, CVs, and other unstructured documents, with no templates or coding needed, then export the results to Google Sheets and 6,000 apps.

GPTParser is a separate AI parsing tool for creating datasets for OpenAI fine-tuning: it scrapes webpage text and parses it into individual JSON files in the Chat Completions format.
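The Chat Completions dataset format that GPTParser targets can be illustrated with a minimal Python sketch. Each example follows OpenAI's chat fine-tuning layout: a `messages` list of `system`, `user`, and `assistant` turns. Several details are assumptions for illustration and are not GPTParser's actual code:

- the system prompt;
- pairing one question with each page;
- all function names.

OpenAI's fine-tuning upload expects a single JSONL file, so the sketch also merges the per-page files.

```python
import json
import re
import tempfile
from pathlib import Path

# Assumption: a generic system prompt; GPTParser's real defaults are not documented here.
DEFAULT_SYSTEM_PROMPT = "You are a helpful assistant."

def clean_text(raw):
    """Collapse runs of whitespace left over from HTML scraping."""
    return re.sub(r"\s+", " ", raw).strip()

def to_chat_example(question, page_text, system_prompt=DEFAULT_SYSTEM_PROMPT):
    """Wrap one scraped page as a chat-format training example (hypothetical layout)."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
            {"role": "assistant", "content": clean_text(page_text)},
        ]
    }

def write_examples(pages, out_dir):
    """Write one JSON file per page; `pages` maps a slug to (question, page_text)."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for slug, (question, text) in pages.items():
        path = out / f"{slug}.json"
        example = to_chat_example(question, text)
        path.write_text(json.dumps(example, ensure_ascii=False, indent=2), encoding="utf-8")
        paths.append(path)
    return paths

def combine_to_jsonl(paths, jsonl_path):
    """Merge the per-page JSON files into one JSONL file, one example per line."""
    jsonl_path = Path(jsonl_path)
    with jsonl_path.open("w", encoding="utf-8") as f:
        for path in paths:
            example = json.loads(Path(path).read_text(encoding="utf-8"))
            f.write(json.dumps(example, ensure_ascii=False) + "\n")
    return jsonl_path

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        files = write_examples({"pricing": ("What does the pricing page say?", "Plans  start\n at $10.")}, d)
        print(combine_to_jsonl(files, Path(d) / "train.jsonl").read_text(encoding="utf-8"))
```

Keeping one file per page makes individual examples easy to review or discard before the final JSONL is assembled.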

Revolutionize data extraction with the LLM parser: convert emails, PDFs, and documents into structured data, and export the parsed data in real time to any app. SGLang supports reasoning and tool-call parsing through its OpenAI-compatible API as well as through the SGLang native API; refer to the Reasoning Parser and Tool Parser documentation for more details. A related notebook shows how to use GPT-4o to turn rich PDF documents, such as slide decks or exports from web pages, into usable content for a RAG application. OmniParser is a comprehensive method for parsing user interface screenshots into structured elements. It significantly improves the ability of multimodal models like GPT-4 to generate actions that are accurately grounded in the corresponding regions of the interface.
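A minimal Python sketch shows what a reasoning parser and a tool-call parser do. It splits raw model output into reasoning text, tool calls, and the final answer. It assumes reasoning is wrapped in `<think>...</think>` and tool calls are emitted as `<tool_call>{...}</tool_call>` JSON. These tag conventions, the output keys, and `parse_output` itself are illustrative assumptions, not SGLang's implementation, which handles many model-specific formats.

```python
import json
import re

# Assumed conventions: <think>...</think> for reasoning and
# <tool_call>{"name": ..., "arguments": {...}}</tool_call> for tool calls.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)
TOOL_RE = re.compile(r"<tool_call>\s*(\{.*?\})\s*</tool_call>", re.DOTALL)

def parse_output(text):
    """Split raw model text into reasoning, structured tool calls, and the visible answer."""
    reasoning = "\n".join(block.strip() for block in THINK_RE.findall(text))
    tool_calls = []
    for raw in TOOL_RE.findall(text):
        call = json.loads(raw)  # raises json.JSONDecodeError on malformed tool calls
        tool_calls.append({"name": call["name"], "arguments": call.get("arguments", {})})
    # Whatever remains after removing both kinds of tagged spans is the final answer.
    content = TOOL_RE.sub("", THINK_RE.sub("", text)).strip()
    return {"reasoning_content": reasoning or None, "tool_calls": tool_calls, "content": content}

raw = ('<think>User wants the weather.</think>Checking.'
       '<tool_call>{"name": "get_weather", "arguments": {"city": "Paris"}}</tool_call>')
print(parse_output(raw))
```

The lazy `\{.*?\}` still captures nested JSON here, because the match must be followed by `</tool_call>`.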

The name also covers parsers for the GUID Partition Table (GPT) disk format. The following GPL-licensed Python script (Python 2.5 or later) reads a GPT header from a disk image and computes its CRC32:

```python
#
# You should have received a copy of the GNU General Public License
# along with this program; if not, write to the Free Software
# Foundation, Inc., 59 Temple Place, Suite 330, Boston, MA 02111-1307 USA
#
# Using structures defined in (' en. .org wiki guid partition table')

import sys, os, getopt
import random, math
import struct
import hashlib
import uuid
import zlib

if sys.version_info < (2, 5):
    sys.stdout.write("\n\nERROR: This script needs Python version 2.5 or greater, detected as ")
    print(sys.version_info)
    sys.exit(1)  # error

LBA_SIZE = 512
PRIMARY_GPT_LBA = 1

OFFSET_CRC32_OF_HEADER = 16
# GPT fields are unsigned little-endian integers (92-byte header padded to one 512-byte LBA)
GPT_HEADER_FORMAT = '<8sIIIIQQQQ16sQIII420x'
GUID_PARTITION_ENTRY_FORMAT = '<16s16sQQQ72s'

## GPT parser code start

def get_lba(fhandle, entry_number, count):
    """Read `count` logical blocks starting at block `entry_number`."""
    fhandle.seek(LBA_SIZE * entry_number)
    fbuf = fhandle.read(LBA_SIZE * count)
    return fbuf

def unsigned32(n):
    return n & 0xffffffff

def get_gpt_header(fhandle, fbuf, lba):
    """Read and unpack the GPT header at `lba` (the `fbuf` argument is overwritten)."""
    fbuf = get_lba(fhandle, lba, 1)
    gpt_header = struct.unpack(GPT_HEADER_FORMAT, fbuf)
    crc32_header_value = calc_header_crc32(fbuf, gpt_header[2])
    return gpt_header, crc32_header_value, fbuf

def make_nop(byte):
    """Return `byte` zero bytes."""
    nop_code = 0x00
    pk_nop_code = struct.pack('=b', nop_code)
    nop = pk_nop_code * byte
    return nop

def calc_header_crc32(fbuf, header_size):
    """CRC32 over the first header_size bytes with the CRC field (bytes 16-19) zeroed."""
    nop = make_nop(4)
    clean_header = fbuf[:OFFSET_CRC32_OF_HEADER] + nop + fbuf[OFFSET_CRC32_OF_HEADER + 4:header_size]
    crc32_header_value = unsigned32(zlib.crc32(clean_header))
    return crc32_header_value

def an_gpt_header(gpt_header, crc32_header_value, gpt_buf):
    md5 = hashlib.md5()
    md5.update(gpt_buf)
    md5 = md5.hexdigest()

    signature = gpt_header[0]
    revision = gpt_header[1]
    headersize = gpt_header[2]
    crc32_header = gpt_header[3]
    reserved = gpt_header[4]
    currentlba = gpt_header[5]
    backuplba = gpt_header[6]
    first_use_lba_for_partitions = gpt_header[7]
    last_use_lba_for_partitions = gpt_header[8]
    disk_guid = uuid.UUID(bytes_le=gpt_header[9])
    part_entry_start_lba = gpt_header[10]
    num_of_part_entry = gpt_header[11]
    size_of_part_entry = gpt_header[12]
    crc32_of_partition_array = gpt_header[13]

    print('')
    # ... (listing truncated in the source)
```
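The GPT header CRC rule can be checked in isolation with a short Python 3 sketch. It builds a synthetic 512-byte header in which all field values are made-up test data. It stores the header's CRC32 at offset 16, then verifies it by recomputing the CRC over `HeaderSize` bytes with that field zeroed. The helper names `header_crc32`, `check_gpt_header`, and `build_test_header` are hypothetical.

```python
import struct
import uuid
import zlib

# 92 bytes of unsigned little-endian GPT header fields, padded to one 512-byte LBA
GPT_HEADER_FORMAT = "<8sIIIIQQQQ16sQIII420x"
CRC_OFFSET = 16  # the header CRC32 occupies bytes 16..19

def header_crc32(sector, header_size):
    """CRC32 over the first header_size bytes, with the CRC field itself zeroed."""
    clean = sector[:CRC_OFFSET] + b"\x00" * 4 + sector[CRC_OFFSET + 4:header_size]
    return zlib.crc32(clean) & 0xFFFFFFFF

def check_gpt_header(sector):
    """Return True if the stored header CRC32 matches the recomputed one."""
    signature, _revision, header_size, stored_crc = struct.unpack(GPT_HEADER_FORMAT, sector)[:4]
    if signature != b"EFI PART":
        raise ValueError("missing 'EFI PART' signature")
    return stored_crc == header_crc32(sector, header_size)

def build_test_header():
    """Hypothetical header for a 2048-sector disk with 128 partition entries of 128 bytes."""
    disk_guid = uuid.UUID("12345678-1234-5678-1234-567812345678").bytes_le
    fields = (b"EFI PART", 0x00010000, 92, 0, 0,  # signature, revision, header size, CRC (0 for now), reserved
              1, 2047, 34, 2014,                   # current, backup, first and last usable LBA
              disk_guid, 2, 128, 128, 0)           # disk GUID, entry array LBA, entry count, entry size, array CRC
    sector = struct.pack(GPT_HEADER_FORMAT, *fields)
    crc = header_crc32(sector, 92)
    return sector[:CRC_OFFSET] + struct.pack("<I", crc) + sector[CRC_OFFSET + 4:]

sector = build_test_header()
print(check_gpt_header(sector))  # True
```

Flipping any byte inside the first 92 bytes (outside the CRC field) makes the check return `False`, which is how a corrupted primary header is detected.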


In the fast-paced world of recruitment, the ability to parse large numbers of resumes efficiently is invaluable. A GPT parser is a tool that uses generative pre-trained transformer (GPT) models to extract key data from resumes. Similarly, Sensible uses GPT-4 to extract structured data from natural-language, free-text documents such as leases and legal contracts.
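A minimal Python sketch shows the validation step such a resume parser needs. The prompt asks the model for a JSON object, and the reply is parsed and type-checked before it is stored or exported. The field names, prompt wording, and functions are hypothetical, and the actual model call is left out.

```python
import json

# Hypothetical schema: fields a recruiter might ask a GPT model to extract.
RESUME_FIELDS = {"name": str, "email": str, "skills": list, "years_experience": (int, float)}

def build_prompt(resume_text):
    """Ask for a JSON object with exactly the schema's keys."""
    keys = ", ".join(RESUME_FIELDS)
    return (f"Extract the following fields from the resume as a JSON object with keys {keys}. "
            "Use null for anything missing.\n\nResume:\n" + resume_text)

def parse_resume_reply(reply):
    """Parse the model's reply, tolerating surrounding prose, and type-check each field."""
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object in reply")
    data = json.loads(reply[start:end + 1])
    record = {}
    for key, expected in RESUME_FIELDS.items():
        value = data.get(key)  # missing keys become None
        if value is not None and not isinstance(value, expected):
            raise ValueError(f"{key!r} has unexpected type {type(value).__name__}")
        record[key] = value
    return record

reply = ('Here is the data:\n{"name": "Ada Lovelace", "email": "ada@example.com", '
         '"skills": ["Python", "SQL"], "years_experience": 5}')
print(parse_resume_reply(reply))
```

Rejecting a reply with a wrong type is safer than silently storing it; the caller can retry the prompt or flag the resume for manual review.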
