Google Explores Diffusion Models to Replace Transformer LLMs

As Google DeepMind puts it: "Large language models are the foundation of generative AI today. We're using a technique called diffusion to explore a new kind of language model that gives users greater control, creativity, and speed in text generation." Google researchers propose a diffusion-based architecture as an alternative to autoregressive transformer models for language tasks. Early results show comparable performance with 40–60% lower inference costs and enhanced scalability.

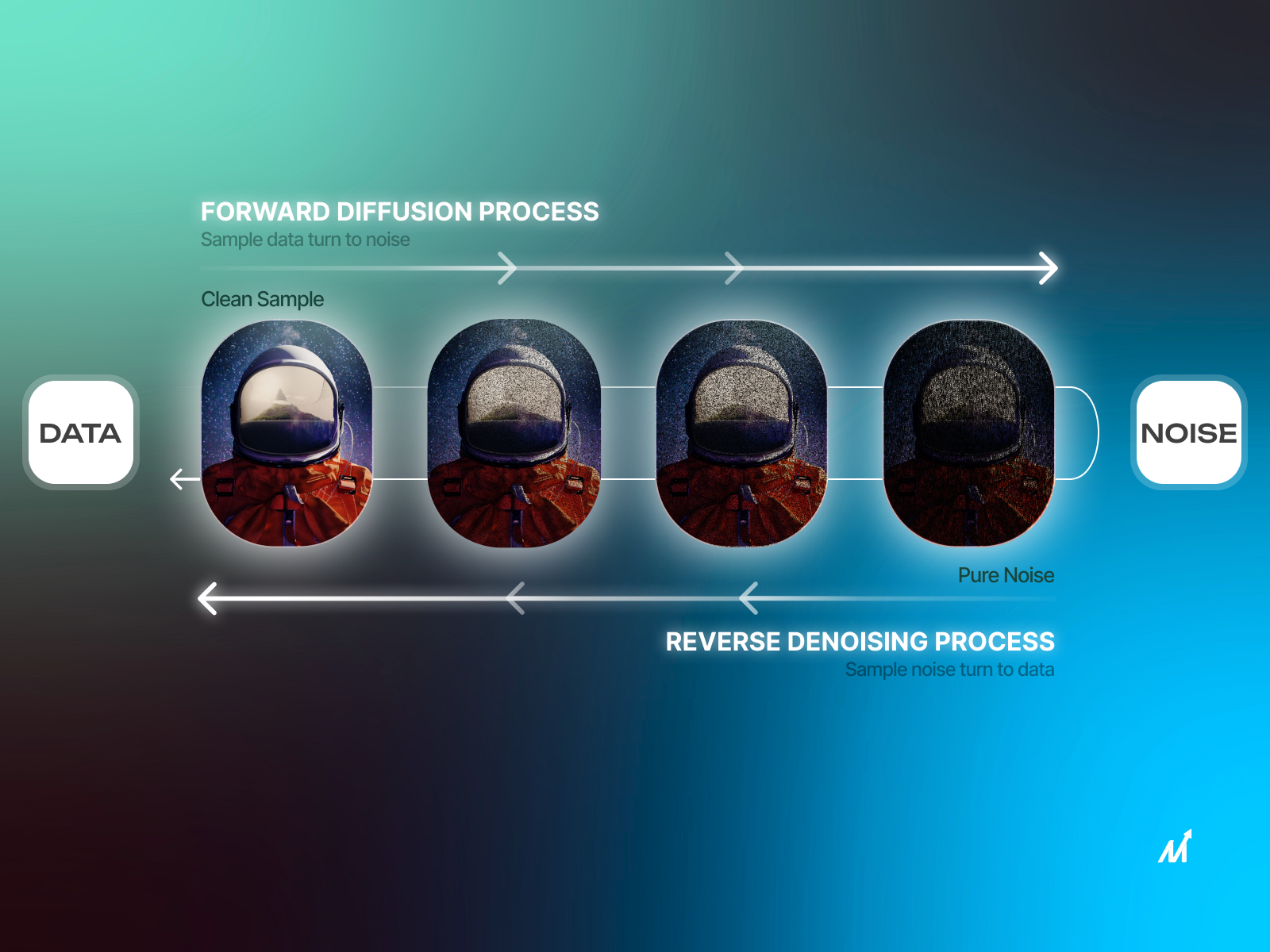

Dissecting the core mechanisms of diffusion and transformer models clarifies what each does well and what combining them could offer. Diffusion text models introduce promising new capabilities, but they must close practical and theoretical gaps before they match the robustness and ease of use of autoregressive transformer LLMs. Google DeepMind has published results for Gemini Diffusion, an experimental language model that generates text by iteratively refining noise rather than predicting tokens one at a time. It is reported to be roughly 5x faster, a speedup that matters for real-world use. Beyond pure text generation, LLMs are also being combined with diffusion models in specialized applications, which shows how broadly these approaches can be applied.
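The practical difference between "refining noise" and "predicting tokens one at a time" is how many sequential model calls are needed. The sketch below is a toy under stated assumptions: `make_toy_predictor` is a hypothetical stand-in for a trained denoiser, and the unmasking rule (commit the most confident predictions, leave the rest masked for a later step) is borrowed from LLaDA-style masked diffusion samplers. It is not Gemini Diffusion's actual sampler.

```python
from typing import Callable, List, Optional, Tuple

MASK = None  # placeholder for a position that has not been decoded yet

# Hypothetical model interface: given the current (partially masked) sequence,
# return a (token, confidence) guess for every position.
Predictor = Callable[[List[Optional[str]]], List[Tuple[str, float]]]


def make_toy_predictor(target: List[str]) -> Predictor:
    """Toy 'model' that always guesses the target token. Its confidence rises
    when neighbouring positions are already decoded. Purely illustrative."""
    def predict(seq: List[Optional[str]]) -> List[Tuple[str, float]]:
        out = []
        for i, tok in enumerate(target):
            neighbours = sum(
                1 for j in (i - 1, i + 1)
                if 0 <= j < len(seq) and seq[j] is not MASK
            )
            out.append((tok, 0.5 + 0.2 * neighbours - 0.01 * i))
        return out
    return predict


def autoregressive_decode(predict: Predictor, length: int) -> Tuple[List[Optional[str]], int]:
    """One model call per token, strictly left to right."""
    seq: List[Optional[str]] = [MASK] * length
    calls = 0
    for i in range(length):
        guesses = predict(seq)
        calls += 1
        seq[i] = guesses[i][0]
    return seq, calls


def diffusion_decode(predict: Predictor, length: int, steps: int) -> Tuple[List[Optional[str]], int]:
    """Start fully masked; each step predicts every masked position in one
    call, commits the most confident ones, and leaves the rest masked."""
    seq: List[Optional[str]] = [MASK] * length
    calls = 0
    for step in range(steps):
        masked = [i for i, t in enumerate(seq) if t is MASK]
        if not masked:
            break
        guesses = predict(seq)
        calls += 1
        # Spread the remaining masked positions evenly over the remaining steps.
        k = -(-len(masked) // (steps - step))  # ceiling division
        best = sorted(masked, key=lambda i: guesses[i][1], reverse=True)[:k]
        for i in best:
            seq[i] = guesses[i][0]
    return seq, calls


target = "the cat sat on the mat today .".split()
predict = make_toy_predictor(target)
print(autoregressive_decode(predict, len(target))[1])      # model calls: 8
print(diffusion_decode(predict, len(target), steps=2)[1])  # model calls: 2
```

For 8 tokens, the autoregressive loop needs 8 sequential calls, while the diffusion loop with `steps=2` needs 2. Fewer, wider calls are where the speed advantage comes from. The trade-off is that tokens committed in the same step are predicted without seeing each other.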

Diffusion LLMs: A New Era of Large Language Models

The capabilities of large language models (LLMs) are widely regarded as depending on autoregressive models (ARMs). The authors of LLaDA challenge this view: LLaDA is a diffusion model trained from scratch under the standard pre-training and supervised fine-tuning (SFT) paradigm. The lineage of diffusion-based LLMs runs from DDPM to LLaDA 2.1 and covers masked diffusion, token editing, and mixture-of-experts (MoE) scaling. Because each denoising step predicts many positions at once instead of one token per forward pass, diffusion-based models offer a scalable, parallelizable alternative to traditional autoregressive architectures and could reshape how language models are developed.
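LLaDA's training objective is what replaces next-token prediction. The following minimal sketch, assuming a hypothetical `log_prob(noisy, i, tok)` model interface and a hypothetical `MASK_ID`, estimates the masked-diffusion loss for one sequence. It samples a masking ratio t, masks each token independently with probability t, and scores the model's cross-entropy on the masked positions only, weighted by 1/t and divided by the sequence length. It follows the objective described for LLaDA but is not the LLaDA codebase; the small `eps` floor on t is an assumption that avoids dividing by zero.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

MASK_ID = -1  # hypothetical id reserved for the [MASK] token


def forward_mask(tokens: Sequence[int], t: float, rng: random.Random) -> Tuple[List[int], List[bool]]:
    """Forward (noising) process: replace each token by MASK_ID with probability t."""
    masked = [rng.random() < t for _ in tokens]
    noisy = [MASK_ID if m else tok for tok, m in zip(tokens, masked)]
    return noisy, masked


def masked_diffusion_loss(
    tokens: Sequence[int],
    log_prob: Callable[[List[int], int, int], float],
    rng: random.Random,
    eps: float = 1e-3,
) -> float:
    """Single-sample Monte Carlo estimate of the masked-diffusion loss."""
    t = (1 - eps) * rng.random() + eps  # masking ratio t in [eps, 1)
    noisy, masked = forward_mask(tokens, t, rng)
    total = 0.0
    for i, (tok, m) in enumerate(zip(tokens, masked)):
        if m:
            # log_prob(noisy, i, tok) stands for log p_theta(x0_i = tok | x_t);
            # only masked positions contribute, each weighted by 1/t.
            total -= log_prob(noisy, i, tok) / t
    return total / len(tokens)


# Usage: a model that is uniform over V tokens.
V = 100
uniform = lambda noisy, i, tok: -math.log(V)
rng = random.Random(0)
tokens = list(range(64))
estimate = sum(masked_diffusion_loss(tokens, uniform, rng) for _ in range(20000)) / 20000
print(round(estimate, 2), round(math.log(V), 2))  # the two values should be close
```

The 1/t weight cancels the expected fraction t of masked tokens, so a model that predicts uniformly over V tokens has an expected loss of log V at every masking ratio. That property makes the uniform model a convenient sanity check for the sketch.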