Google Launches TensorFlow Lite Developer Preview for Mobile Machine Learning

With the introduction of TensorFlow Lite, Google has taken a significant step toward optimizing machine learning on mobile platforms, enabling developers to deploy intelligent applications that run directly on the device. The runtime's newer name, LiteRT, captures a multi-framework vision for the future: developers can start with any popular framework and run their model on device with high performance. LiteRT, part of the Google AI Edge suite of tools, is the runtime that lets you deploy ML and AI models on Android, iOS, and embedded devices.

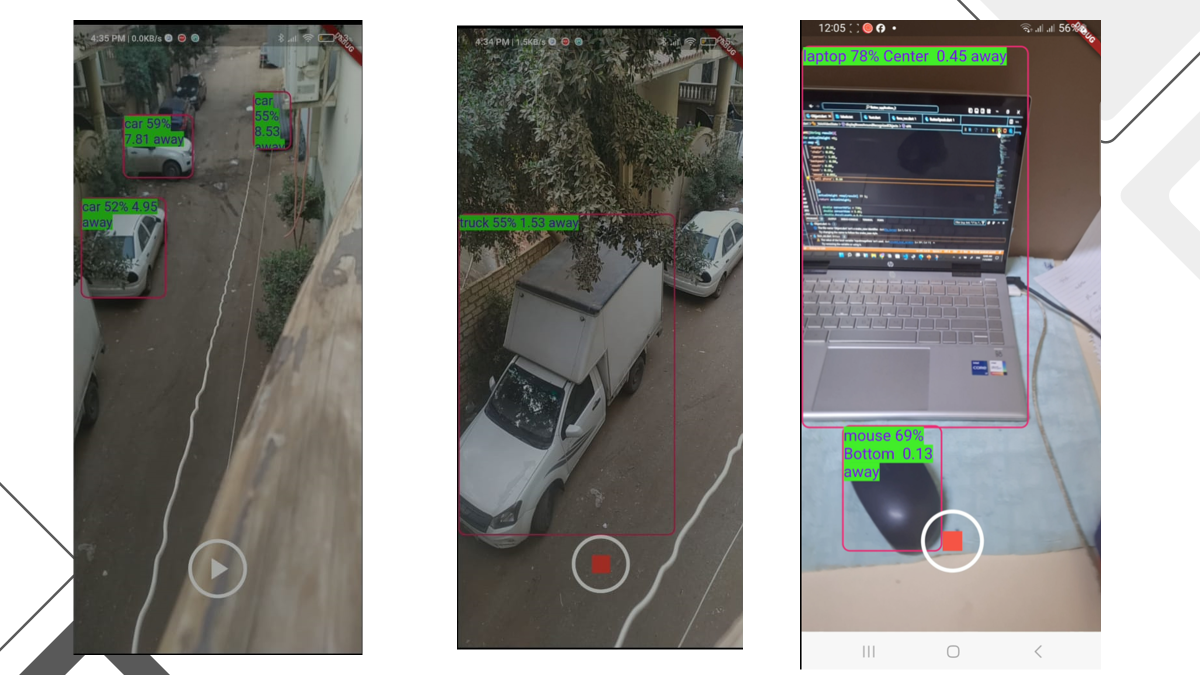

Today, we're happy to announce the developer preview of TensorFlow Lite, TensorFlow's lightweight solution for mobile and embedded devices. As developers, we're increasingly looking toward on-device machine learning, and that's where TensorFlow Lite (TFLite) enters the picture. It enables low-latency inference of on-device machine learning models with a small binary size and fast performance, and it supports hardware acceleration. In the announcement, Google presents the TensorFlow Lite framework and some of its features.

The TensorFlow team also introduces the features of TensorFlow Lite and shows a lightweight model that is available for it. Moving forward, LiteRT serves as the universal on-device inference framework, officially replacing TensorFlow Lite (TFLite). This update streamlines the deployment of machine learning models to mobile and edge devices while expanding hardware and framework compatibility. LiteRT is still the same high-performance runtime for on-device AI, but with an expanded vision to support models authored in PyTorch, JAX, and Keras.

It is also possible to train and deploy your own large language model (LLM) on Android using Keras and TensorFlow Lite. LLMs have revolutionized tasks such as text generation.
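To make the on-device inference workflow more concrete, here is a minimal sketch of converting a small model to the TensorFlow Lite format and running it with the Python interpreter. It assumes the `tensorflow` package is installed and that the `tf.lite` module is available in that version. The `Doubler` module, its `double` method, and the `[1, 4]` input shape are hypothetical and chosen only for illustration. On a phone, the same converted model would be loaded through the Android or iOS runtime rather than from Python.

```python
import numpy as np
import tensorflow as tf


class Doubler(tf.Module):
    """Hypothetical toy model: multiplies a [1, 4] float tensor by 2."""

    @tf.function(input_signature=[tf.TensorSpec([1, 4], tf.float32)])
    def double(self, x):
        return 2.0 * x


def convert_to_tflite(module):
    """Convert the module's traced function into a TFLite flatbuffer."""
    concrete_fn = module.double.get_concrete_function()
    converter = tf.lite.TFLiteConverter.from_concrete_functions(
        [concrete_fn], module
    )
    return converter.convert()


def run_tflite(model_bytes, x):
    """Run one inference pass with the TFLite interpreter."""
    interpreter = tf.lite.Interpreter(model_content=model_bytes)
    interpreter.allocate_tensors()
    input_info = interpreter.get_input_details()[0]
    output_info = interpreter.get_output_details()[0]
    interpreter.set_tensor(input_info["index"], x)
    interpreter.invoke()
    return interpreter.get_tensor(output_info["index"])


model_bytes = convert_to_tflite(Doubler())
sample = np.array([[1.0, 2.0, 3.0, 4.0]], dtype=np.float32)
result = run_tflite(model_bytes, sample)
```

The converted `model_bytes` can be written to a `.tflite` file and bundled with a mobile app. A real model would replace the toy `Doubler` module, and the conversion step is where size and latency optimizations would typically be configured.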

