Why Diffusion Models Don't Memorize

Giulio Biroli, Tony Bonnaire

A key challenge is understanding the mechanisms that keep diffusion models from memorizing their training data and allow them to generalize. In this work, we investigate the role of the training dynamics in the transition from generalization to memorization. The work received a Best Paper Award at NeurIPS 2025.

Why it matters: it resolves the paradox of why overparameterized diffusion models generalize even though they have the capacity to memorize their training data perfectly.
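The training objective shows why perfect memorization is possible at all. As a sketch, assume a variance-exploding noising $x = x^{(i)} + \sigma z$ with $z \sim \mathcal{N}(0, I)$ and a training set $\{x^{(1)}, \dots, x^{(n)}\}$. This notation is ours and not necessarily the paper's. Under these assumptions, the denoising score-matching loss for a score model $s_\theta$ at noise level $\sigma$ is minimized by the exact score of the noised empirical distribution, which is a Gaussian mixture centered on the training points:

$$
\mathcal{L}_\sigma(\theta) = \frac{1}{n}\sum_{i=1}^{n}\mathbb{E}_{z}\left\lVert s_\theta\!\left(x^{(i)} + \sigma z, \sigma\right) + \frac{z}{\sigma}\right\rVert^2,
\qquad
s^\star(x,\sigma) = \nabla_x \log \frac{1}{n}\sum_{i=1}^{n}\mathcal{N}\!\left(x;\, x^{(i)}, \sigma^2 I\right) = \sum_{i=1}^{n} w_i(x,\sigma)\,\frac{x^{(i)} - x}{\sigma^2},
$$

$$
w_i(x,\sigma) = \frac{\exp\!\left(-\lVert x - x^{(i)}\rVert^2 / 2\sigma^2\right)}{\sum_{j=1}^{n}\exp\!\left(-\lVert x - x^{(j)}\rVert^2 / 2\sigma^2\right)}.
$$

As $\sigma \to 0$, the weights $w_i$ concentrate on the nearest training point, so a reverse process driven by $s^\star$ ends on one of the $x^{(i)}$. A network with enough parameters can in principle represent $s^\star$. This is why generalization in overparameterized models calls for an explanation.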

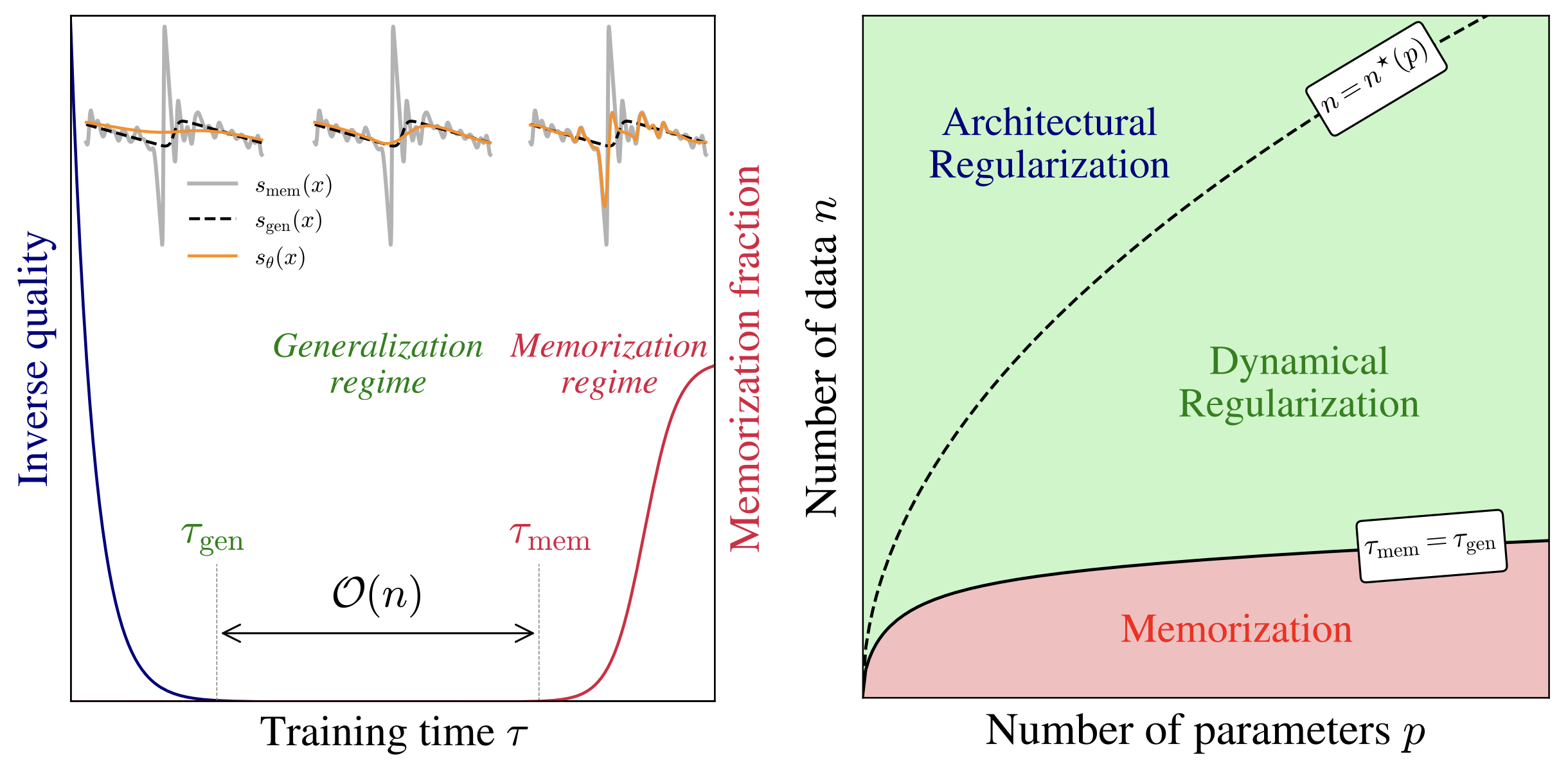

The research identifies a dynamical regularization effect in the training dynamics of diffusion models that prevents memorization and promotes generalization, and supports it with both experiments and theoretical analysis. Diffusion models have achieved remarkable success across a wide range of generative tasks. We have shown that the training dynamics of neural-network-based score functions display a form of implicit regularization that prevents memorization even in highly overparameterized diffusion models. This document provides detailed derivations and additional experiments supporting our paper, "Why Diffusion Models Don’t Memorize: The Role of Implicit Dynamical Regularization in Training."
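A small numerical sketch makes the memorization endpoint concrete. It rests on several assumptions: variance-exploding noise x = x0 + sigma * z, plain Euler steps on the probability-flow ODE dx/dsigma = -sigma * score(x, sigma), and a tiny 2D toy training set. The function names and data are hypothetical and are not taken from the paper. Instead of a trained network, the code samples with the exact score of the noised training set and then measures how far each sample lands from its nearest training point.

```python
import numpy as np


def empirical_denoiser(x, data, sigma):
    """E[x0 | x] when x0 is uniform over `data` and x = x0 + sigma * z (assumed VE noise)."""
    # Squared distances between queries (m, d) and training points (n, d): shape (m, n).
    d2 = ((x[:, None, :] - data[None, :, :]) ** 2).sum(axis=-1)
    logits = -d2 / (2.0 * sigma**2)
    logits -= logits.max(axis=1, keepdims=True)  # numerically stable softmax
    w = np.exp(logits)
    w /= w.sum(axis=1, keepdims=True)
    return w @ data  # softmax-weighted average of training points, shape (m, d)


def empirical_score(x, data, sigma):
    """Exact score of the noised training set: (denoiser(x) - x) / sigma^2."""
    return (empirical_denoiser(x, data, sigma) - x) / sigma**2


def sample_empirical(data, num_samples, sigma_max=10.0, sigma_min=1e-3, steps=200, seed=0):
    """Euler steps on dx/dsigma = -sigma * score(x, sigma), from sigma_max down to sigma_min."""
    rng = np.random.default_rng(seed)
    sigmas = np.geomspace(sigma_max, sigma_min, steps)  # decreasing noise levels
    x = sigma_max * rng.standard_normal((num_samples, data.shape[1]))
    for s, s_next in zip(sigmas[:-1], sigmas[1:]):
        x = x - (s_next - s) * s * empirical_score(x, data, s)
    return x


# Hypothetical toy training set: 8 points in 2D.
train = np.random.default_rng(1).standard_normal((8, 2))
samples = sample_empirical(train, num_samples=100)
# Distance from each generated sample to its nearest training point.
nearest = np.sqrt(((samples[:, None, :] - train[None, :, :]) ** 2).sum(axis=-1)).min(axis=1)
print(f"largest distance to a training point: {nearest.max():.2e}")
```

Every sample ends up essentially on top of a training point. A score network would show the same behavior if training drove it all the way to this exact empirical score. The implicit dynamical regularization described here is what keeps the network away from that endpoint for a long stretch of training.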

Giulio Biroli: Generative AI, Diffusion Models and Statistical Physics

In this talk, I will focus on the role of the training dynamics in the transition from generalization to memorization. I will identify two sharply separated timescales in training. The first is an early time at which the model starts generating high-quality samples. The second is a later time beyond which the model starts memorizing the training data.

In a recent study, Giulio Biroli, Tony Bonnaire and Raphaël Urfin of the LPENS laboratory, in collaboration with Marc Mézard (Bocconi University), uncovered the fundamental mechanism that enables generative models to escape memorization and acquire true creative capability. Implicit dynamical regularization during training gives diffusion models a generalization window that widens with the training-set size. As the dataset grows, the onset of memorization is pushed to later training times, while the time needed to generate good samples stays essentially fixed. Stopping training within this window therefore prevents memorization.
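The generalization window can be illustrated with a toy model. The sketch makes two simplifying assumptions: the generalization time `tau_gen` does not depend on the training-set size n, and the memorization time grows linearly, `tau_mem = mem_rate * n`. The class `TrainingWindow` and all constants below are hypothetical and are not values from the paper. Under these assumptions, the window [tau_gen, tau_mem] widens with n. As a result, the same training budget falls in the memorization regime for a small dataset but in the generalization regime for a larger one.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingWindow:
    """Toy model of the two training timescales; all constants are hypothetical.

    tau_gen  -- training time after which samples are of good quality,
                assumed here not to depend on the training-set size n.
    mem_rate -- assumed slope in tau_mem(n) = mem_rate * n.
    """
    tau_gen: float
    mem_rate: float

    def tau_mem(self, n: int) -> float:
        # Assumption: memorization sets in at a training time proportional to n.
        return self.mem_rate * n

    def width(self, n: int) -> float:
        """Length of the generalization window (0 if it is empty)."""
        return max(0.0, self.tau_mem(n) - self.tau_gen)

    def regime(self, tau: float, n: int) -> str:
        """Classify a training time tau for a dataset of size n."""
        if tau > self.tau_mem(n):
            return "memorizes"
        if tau < self.tau_gen:
            return "undertrained"
        return "generalizes"

    def stop_time(self, n: int) -> float:
        """An early-stopping time inside the window (geometric midpoint)."""
        if self.width(n) == 0.0:
            raise ValueError("no generalization window for this n")
        return math.sqrt(self.tau_gen * self.tau_mem(n))


model = TrainingWindow(tau_gen=1_000.0, mem_rate=50.0)  # hypothetical constants
for n in (10, 100, 1_000):
    print(n, model.width(n), model.regime(20_000.0, n))
```

The geometric midpoint in `stop_time` is only one illustrative choice of a point inside the window. Any training time between `tau_gen` and `tau_mem(n)` would fit the stopping rule described above.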
