GitHub Utkartist Multi Model RAG Multimodal Retrieval Augmented

Exploring the Future of Multimodal Retrieval Augmented Generation (RAG)

This project implements a multimodal retrieval-augmented generation (RAG) model, which integrates information retrieval with text generation to produce more accurate and contextually relevant outputs. It demonstrates how to use multimodal RAG for electric vehicles, focusing on Tesla cars. It uses a vector database to store and index images, enabling efficient retrieval and generation of information based on visual data.
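To make the idea of storing and indexing image embeddings concrete, here is a minimal in-memory sketch of what a vector database does at query time. It is not the project's code: the `TinyImageIndex` class, the file names, and the three-dimensional embeddings are all hypothetical, and a real system would get its vectors from an image encoder and use an approximate nearest-neighbor index rather than a linear scan.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyImageIndex:
    """Hypothetical stand-in for a vector database collection."""

    def __init__(self):
        self._items = []  # list of (image_id, embedding, caption)

    def add(self, image_id, embedding, caption=""):
        self._items.append((image_id, list(embedding), caption))

    def search(self, query_embedding, k=3):
        """Return the k most similar items as (score, image_id, caption)."""
        scored = [
            (cosine(query_embedding, emb), image_id, caption)
            for image_id, emb, caption in self._items
        ]
        scored.sort(key=lambda t: t[0], reverse=True)
        return scored[:k]


# Toy embeddings (assumed values; a real encoder produces hundreds of dimensions).
index = TinyImageIndex()
index.add("model_s.jpg", [0.9, 0.1, 0.0], "Tesla Model S exterior")
index.add("model_3_interior.jpg", [0.1, 0.9, 0.1], "Tesla Model 3 interior")
index.add("supercharger.jpg", [0.0, 0.2, 0.9], "Supercharger station")

results = index.search([0.8, 0.2, 0.1], k=2)
for score, image_id, caption in results:
    print(f"{score:.3f}  {image_id}  {caption}")
```

The retrieved image IDs and captions are what would then be passed to the generation step as context.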

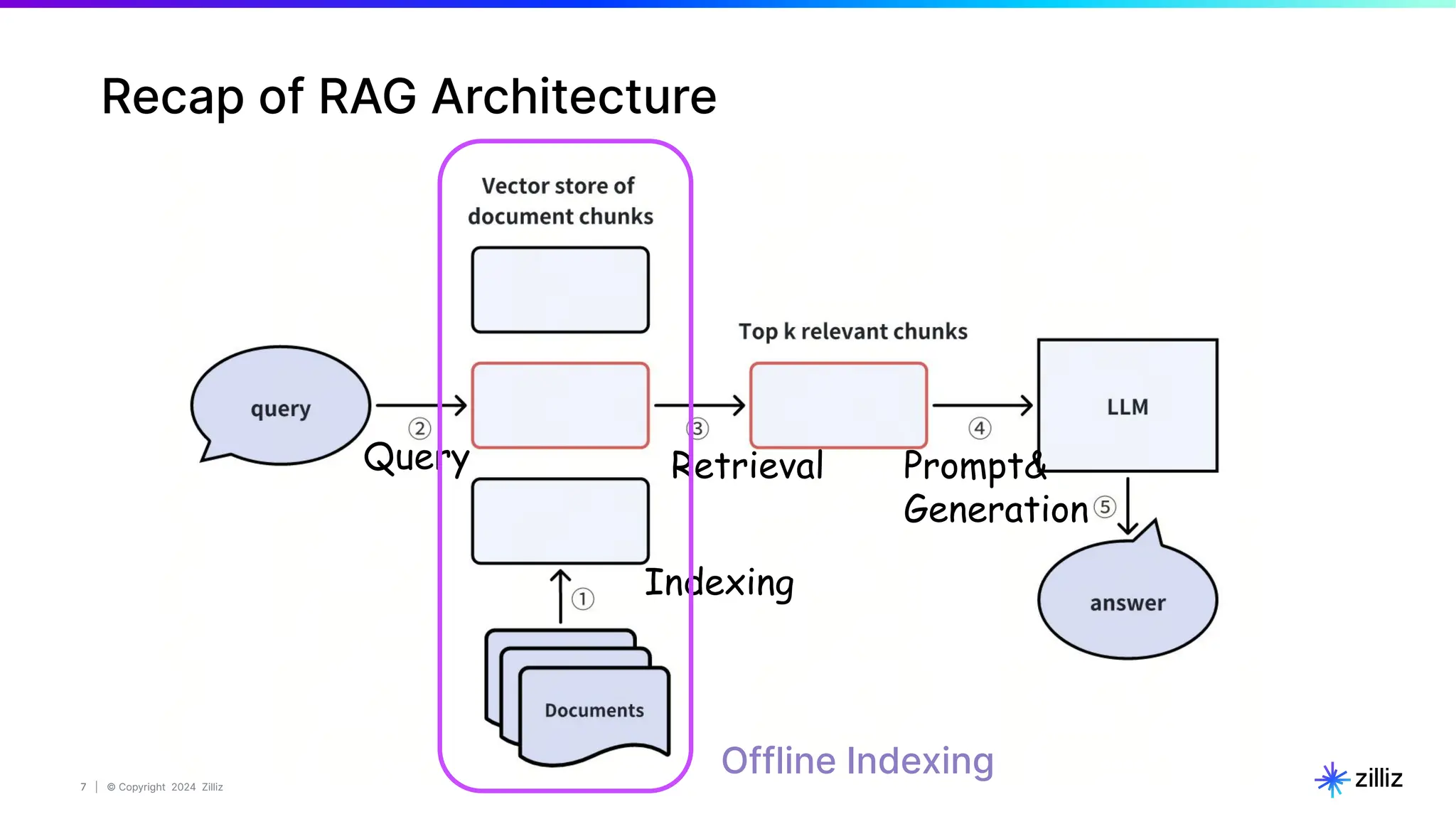

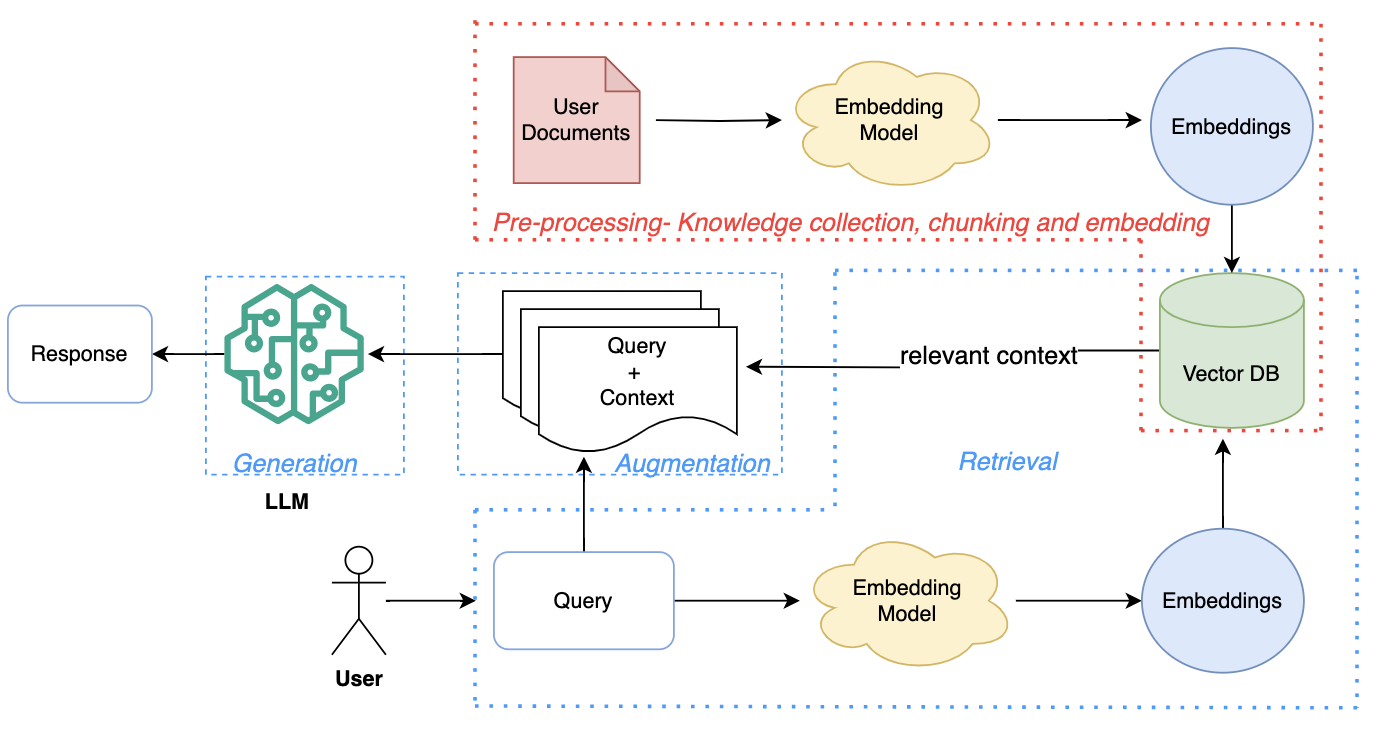

Multimodal Retrieval Augmented Generation (RAG) With Milvus (PDF)

Recent studies show that MRAG outperforms traditional RAG, especially in scenarios requiring both visual and textual understanding. This survey reviews MRAG's essential components, datasets, evaluation methods, and limitations, providing insights into its construction and improvement.

Multimodal retrieval-augmented generation (RAG) is an advanced technique that combines text and image data to enhance the capabilities of large language models (LLMs) like GPT-4.

We showed how to combine adaptive loading, multimodal prompting, controlled reasoning, tool-augmented interaction, schema-constrained outputs, lightweight RAG, and session save/resume patterns into one integrated system. We also inspected expert routing behavior and measured throughput to understand the model's usability and performance.

Multimodal retrieval-augmented generation combines text, images, audio, and video with retrieval to enhance generative models, enabling more accurate, context-aware, and informative responses beyond single-modality systems.
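As a rough illustration of how retrieved text and images can be combined with a question before generation, the sketch below assembles a mixed-modality prompt. The `Retrieved` dataclass, the `build_prompt` function, and the dictionary layout are assumptions made for this example; they do not correspond to any specific model API, and a real client library will expect its own message format.

```python
from dataclasses import dataclass


@dataclass
class Retrieved:
    kind: str      # "text" or "image" (hypothetical labels)
    content: str   # passage text, or a path/URL for an image
    score: float   # retrieval similarity, higher is better


def build_prompt(question, retrieved, max_items=4):
    """Keep the best-scoring items and interleave them with the question."""
    top = sorted(retrieved, key=lambda r: r.score, reverse=True)[:max_items]
    parts = [{"type": "text", "text": "Answer using only the context below."}]
    for i, item in enumerate(top, start=1):
        if item.kind == "image":
            # Images are passed by reference; the label lets the answer cite them.
            parts.append({"type": "image", "ref": item.content, "label": f"[{i}]"})
        else:
            parts.append({"type": "text", "text": f"[{i}] {item.content}"})
    parts.append({"type": "text", "text": f"Question: {question}"})
    return parts


# Hypothetical retrieval results for a toy query.
hits = [
    Retrieved("text", "The Model 3 has a 15-inch center touchscreen.", 0.71),
    Retrieved("image", "model_3_interior.jpg", 0.88),
    Retrieved("text", "Superchargers are DC fast chargers.", 0.35),
]
prompt = build_prompt("What does the Model 3 dashboard look like?", hits, max_items=2)
for part in prompt:
    print(part)
```

Capping the context with `max_items` is one simple way to keep low-scoring results from crowding the prompt; the right cutoff depends on the model's context window.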

Multimodal Retrieval Augmented Generation (RAG) With Vector Database (PDF)

This survey offers a structured and comprehensive analysis of multimodal RAG systems, covering datasets, metrics, benchmarks, evaluation, methodologies, and innovations in retrieval, fusion, augmentation, and generation.

In this notebook, we demonstrate how to build a multimodal retrieval-augmented generation (RAG) system by combining the ColPali retriever for document retrieval with the Qwen2-VL vision language model (VLM). Together, these models form a powerful RAG system capable of enhancing query responses with both text-based documents and visual data. This system will allow queries to return relevant images and text, serving as a retrieval mechanism for a multimodal RAG application.

Learn how to build multimodal retrieval-augmented generation (MM-RAG) systems that combine text, images, audio, and video. Discover contrastive learning, any-to-any search with vector databases, and practical code examples using Weaviate and OpenAI GPT-4V.
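ColPali-style retrievers represent each document page as many patch-level vectors and score a query against them with late interaction: every query token vector takes its best match on the page, and those maxima are summed. The sketch below shows only that scoring step in plain Python. The function names, page IDs, and two-dimensional vectors are hypothetical; it does not call the ColPali or Qwen2-VL libraries, which would supply the real embeddings and the answer generation.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_vecs, page_vecs):
    """Late-interaction score: sum over query vectors of the best page match."""
    return sum(max(dot(q, p) for p in page_vecs) for q in query_vecs)


def rank_pages(query_vecs, pages):
    """Return (score, page_id) pairs sorted from most to least relevant."""
    scored = [(maxsim_score(query_vecs, vecs), page_id) for page_id, vecs in pages.items()]
    return sorted(scored, reverse=True)


# Toy multi-vector embeddings (assumed values for illustration only).
query_vecs = [[1.0, 0.0], [0.0, 1.0]]
pages = {
    "page_1": [[1.0, 0.0], [0.5, 0.5]],
    "page_2": [[0.0, 1.0], [0.1, 0.9]],
}

ranking = rank_pages(query_vecs, pages)
for score, page_id in ranking:
    print(f"{page_id}: {score:.2f}")
```

In a full pipeline, the top-ranked page images would be handed to the vision language model together with the user's question.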




