GitHub - trainyolo/YOLO-ONNX: YOLO Inference with ONNX Runtime

GitHub - kvnptl/yolo-inference-onnx: Run YOLO Inference in C++ or …

Welcome to the YOLOv8 ONNX inference library, a lightweight and efficient solution for performing object detection with YOLOv8 using ONNX Runtime. The library is designed for cloud deployment and embedded devices, keeps dependencies to a minimum, and installs easily via pip. Documentation is also available for yolo onnx, a Python library for running YOLO models in ONNX format with Ultralytics.
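The sketch below shows the per-frame work a library like this typically performs. It makes several assumptions: a single-input detection model with a fixed 640x640 input, RGB channels scaled to [0, 1], and the centered gray (114) letterbox padding that Ultralytics uses. The helper names `letterbox_params`, `to_blob`, and `run_onnx` are hypothetical and are not the API of any library mentioned here. `onnxruntime`, `numpy`, and `cv2` are imported lazily, so the pure geometry helper runs without them.

```python
from typing import Tuple


def letterbox_params(orig_w: int, orig_h: int, target: int = 640) -> Tuple[float, int, int, int, int]:
    """Scale and padding that fit an image into a target x target square without distortion."""
    scale = min(target / orig_w, target / orig_h)
    new_w, new_h = round(orig_w * scale), round(orig_h * scale)
    # Split the leftover space evenly so the resized image sits in the center.
    pad_x, pad_y = (target - new_w) // 2, (target - new_h) // 2
    return scale, new_w, new_h, pad_x, pad_y


def to_blob(image_bgr, target: int = 640):
    """Letterbox a BGR uint8 image (OpenCV layout) into a float32 NCHW tensor (hypothetical helper)."""
    import cv2
    import numpy as np

    h, w = image_bgr.shape[:2]
    scale, new_w, new_h, pad_x, pad_y = letterbox_params(w, h, target)
    resized = cv2.resize(image_bgr, (new_w, new_h), interpolation=cv2.INTER_LINEAR)
    canvas = np.full((target, target, 3), 114, dtype=np.uint8)  # gray padding
    canvas[pad_y:pad_y + new_h, pad_x:pad_x + new_w] = resized
    rgb = cv2.cvtColor(canvas, cv2.COLOR_BGR2RGB)
    # HWC -> 1xCxHxW, values scaled to [0, 1]
    blob = rgb.transpose(2, 0, 1)[np.newaxis].astype(np.float32) / 255.0
    return np.ascontiguousarray(blob), scale, (pad_x, pad_y)


def run_onnx(model_path: str, blob):
    """Run one CPU forward pass and return the list of raw output arrays (hypothetical helper)."""
    import onnxruntime as ort

    session = ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])
    input_name = session.get_inputs()[0].name
    return session.run(None, {input_name: blob})
```

In a real application you would create the session once and reuse it across frames. It is built inside `run_onnx` here only to keep the sketch short. The returned `scale` and padding are what a decoder needs to map boxes back to original-image pixels.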

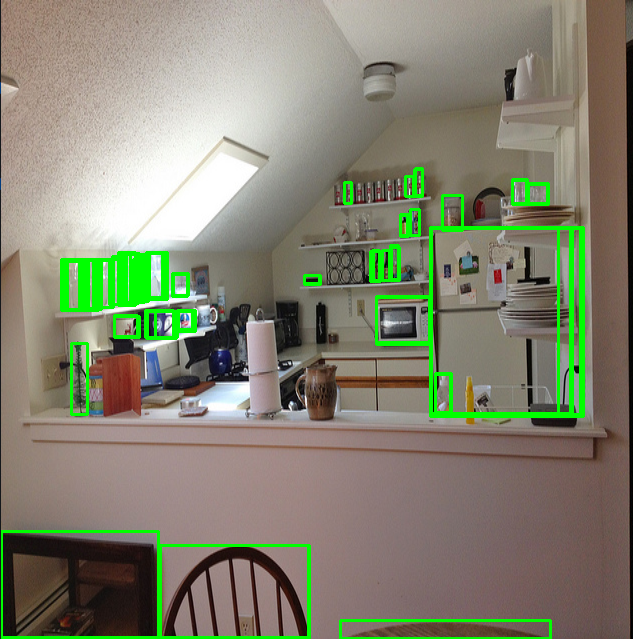

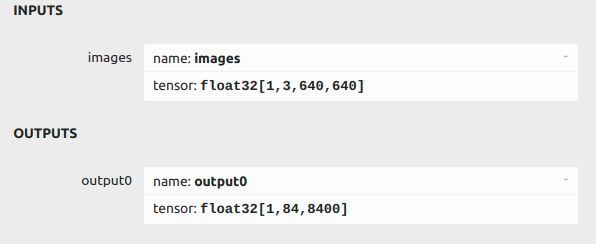

Learn how to export YOLO26 models to ONNX format for flexible deployment across various platforms with enhanced performance.

In this tutorial, you'll learn how to run YOLO object detection models directly in your browser using ONNX and WebAssembly (WASM). Instead of relying on Python scripts or GPU-powered servers, this approach uses the modern browser itself for real-time inference, with no backend needed.

TL;DR: YOLO performs object detection in real time, and ONNX Runtime is a cross-platform model accelerator, but I couldn't find a tutorial for C++, so I made this.

A Pipeless example runs inference with ONNX Runtime and YOLO to detect objects in a video stream.
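The export step might look like the minimal sketch below, assuming the `ultralytics` package is installed. `YOLO(...).export(format="onnx", ...)` is the Ultralytics export call. The default weights name `yolov8n.pt` is only a stand-in; substitute the YOLO26 checkpoint you actually use. `expected_raw_output_shape` is a hypothetical helper that encodes an assumption: for YOLOv8-style detection heads exported without embedded NMS, there is one prediction per grid cell at strides 8, 16, and 32, and each prediction carries 4 box values plus one score per class. End-to-end exports produce a different layout.

```python
from typing import Optional, Tuple


def export_to_onnx(weights: str = "yolov8n.pt", imgsz: int = 640,
                   opset: Optional[int] = None) -> str:
    """Export Ultralytics weights to ONNX and return the exported file path."""
    from ultralytics import YOLO  # lazy import: only needed when actually exporting

    model = YOLO(weights)
    return model.export(format="onnx", imgsz=imgsz, opset=opset)


def expected_raw_output_shape(num_classes: int = 80, imgsz: int = 640,
                              strides: Tuple[int, ...] = (8, 16, 32)) -> Tuple[int, int, int]:
    """Assumed raw YOLOv8-style detection output shape: (batch, 4 + classes, anchors)."""
    # One anchor per cell of each feature map: (imgsz / stride) ** 2 cells per level.
    anchors = sum((imgsz // s) ** 2 for s in strides)
    return (1, 4 + num_classes, anchors)
```

At the default 640-pixel input with the 80 COCO classes, this predicts (1, 84, 8400), since 80² + 40² + 20² = 8400. After loading the exported file, you can compare that against `session.get_outputs()[0].shape` as a quick sanity check.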

Deployment of YOLOv8 on ONNX Runtime (C++) - Xinhao Liu

This short tutorial explores three different ways to export and run inference with YOLOv11 models using ONNX, from raw decoding to ready-to-deploy end-to-end models.
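The "raw decoding" end of that spectrum can be sketched in pure Python. The sketch assumes the YOLOv8-style layout: 4 box values (center x, center y, width, height) followed by one score per class, with no separate objectness score, and with the batch axis already removed (for example, the first ONNX Runtime output converted with `.tolist()` after indexing away the batch). `decode_yolov8` and `iou` are hypothetical names. The thresholds are illustrative rather than taken from the tutorial, and greedy per-class NMS is used as the suppression step.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # x1, y1, x2, y2
Detection = Tuple[Box, float, int]       # box, score, class id


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two corner-format boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def decode_yolov8(preds: Sequence[Sequence[float]], conf_thres: float = 0.25,
                  iou_thres: float = 0.45, scale: float = 1.0,
                  pad: Tuple[float, float] = (0, 0)) -> List[Detection]:
    """Decode a (4 + num_classes, num_anchors) output into boxes in original-image pixels."""
    num_classes = len(preds) - 4
    candidates: List[Detection] = []
    for i in range(len(preds[0])):
        # Best class for this anchor; the class score doubles as the confidence.
        cls = max(range(num_classes), key=lambda c: preds[4 + c][i])
        score = preds[4 + cls][i]
        if score < conf_thres:
            continue
        cx, cy, w, h = preds[0][i], preds[1][i], preds[2][i], preds[3][i]
        # Center/size -> corners, then undo letterbox padding and scaling (no clipping).
        box = ((cx - w / 2 - pad[0]) / scale, (cy - h / 2 - pad[1]) / scale,
               (cx + w / 2 - pad[0]) / scale, (cy + h / 2 - pad[1]) / scale)
        candidates.append((box, score, cls))
    # Greedy NMS per class: keep the highest score, drop same-class boxes that overlap it.
    candidates.sort(key=lambda d: d[1], reverse=True)
    kept: List[Detection] = []
    for box, score, cls in candidates:
        if all(k_cls != cls or iou(box, k_box) < iou_thres for k_box, _, k_cls in kept):
            kept.append((box, score, cls))
    return kept
```

The loops cost O(anchors × classes) in plain Python, which is slow at 8400 anchors; a NumPy version would vectorize the same steps. With an end-to-end export that already applies NMS inside the graph, this function would presumably reduce to filtering the model's output rows by score.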

