Github Sungmo96 Image Interpolation Using Stable Diffusion

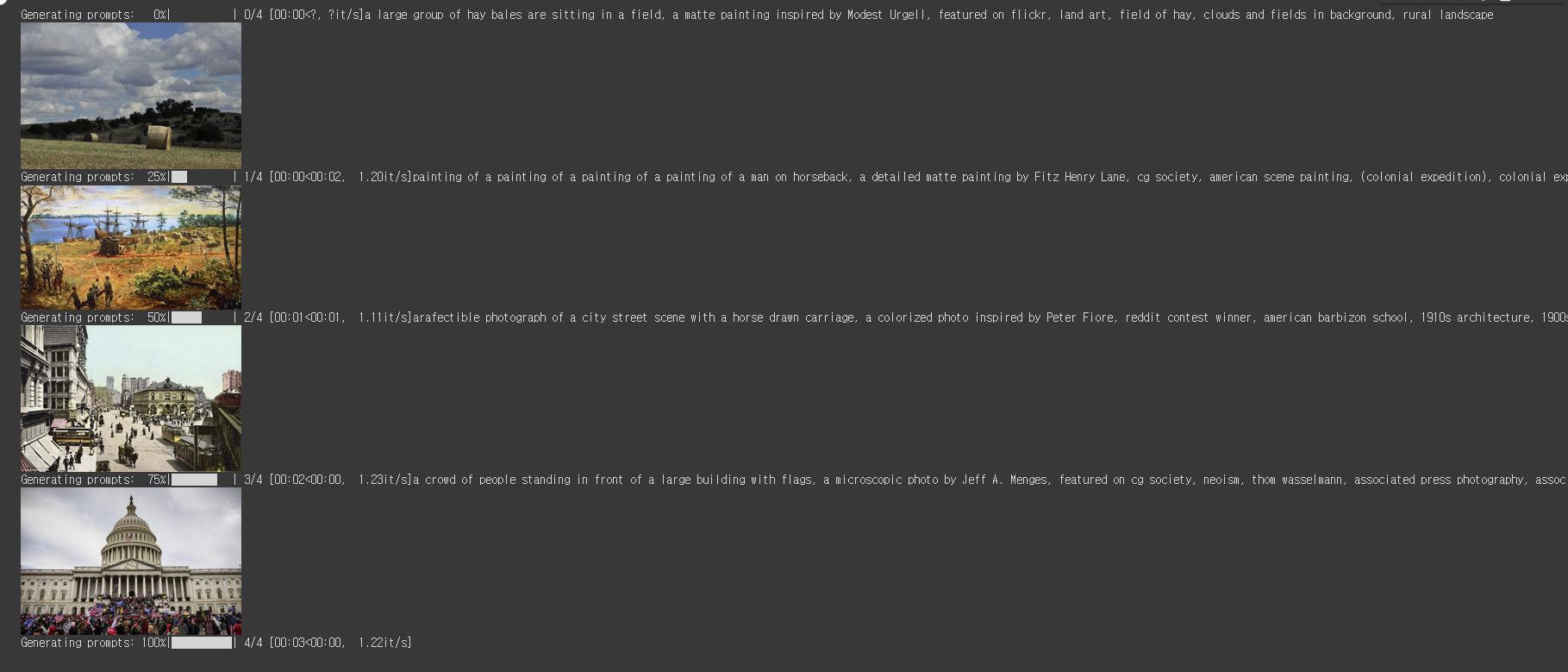

The prompts are used to generate multiple images, which are later assembled into a clip or a GIF. We use slerp (spherical linear interpolation) as the morphing interpolation method; it gives better results because it prevents sudden jumps from one image to the next.
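As a rough illustration of the slerp step mentioned above, here is a minimal pure-Python sketch. It is not taken from the repository: the function name `slerp`, the `dot_threshold` parameter, and the use of plain lists in place of real latent tensors are all assumptions made for the example.

```python
import math


def slerp(t, v0, v1, dot_threshold=0.9995):
    """Spherical linear interpolation between two equal-length vectors.

    Hypothetical helper for illustration; real latents would be tensors.
    t = 0 returns v0, t = 1 returns v1.
    """
    if len(v0) != len(v1):
        raise ValueError("vectors must have the same length")

    norm0 = math.sqrt(sum(a * a for a in v0))
    norm1 = math.sqrt(sum(b * b for b in v1))

    def lerp():
        # Plain linear interpolation, used as a fallback.
        return [(1.0 - t) * a + t * b for a, b in zip(v0, v1)]

    if norm0 == 0.0 or norm1 == 0.0:
        return lerp()

    # Cosine of the angle between the two vectors, clamped for acos.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    dot = max(-1.0, min(1.0, dot))

    # Nearly parallel (or anti-parallel) vectors make sin(theta) tiny,
    # so fall back to lerp to avoid dividing by a value close to zero.
    if abs(dot) > dot_threshold:
        return lerp()

    theta = math.acos(dot)
    sin_theta = math.sin(theta)
    w0 = math.sin((1.0 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


# Halfway between two orthogonal unit vectors stays on the unit circle.
midpoint = slerp(0.5, [1.0, 0.0], [0.0, 1.0])
```

Unlike linear interpolation, the midpoint here keeps unit length, which is the usual argument for slerp when walking between latents that are roughly Gaussian noise.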

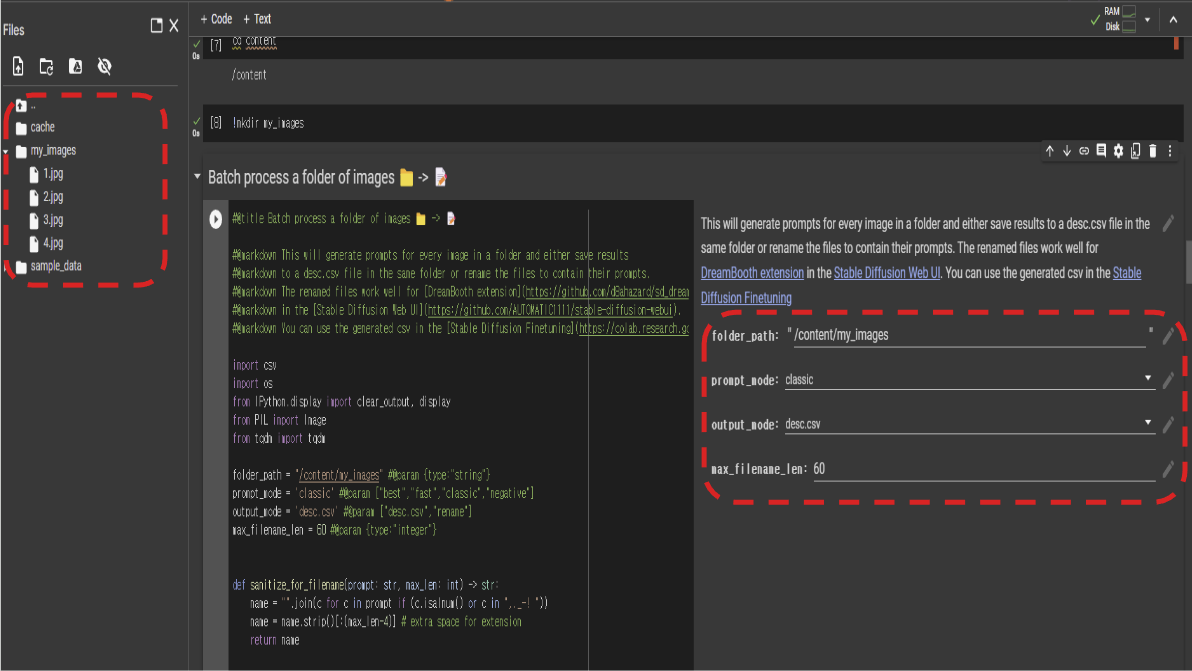

In this notebook, we explore examples of image interpolation using Stable Diffusion and demonstrate how latent space walking can be implemented and used to create smooth transitions between images. By leveraging the powerful conditioning abilities of pre-trained diffusion models, we can generate controllable and creative interpolations between images with diverse styles, layouts, and subjects. I am releasing my interpolate.py script ( github diceowl stablediffusionstuff), which can interpolate between two input images and two or more prompts. This blog post provides a comprehensive tutorial on creating morphing animations using the AnimateDiff extension and frame interpolation. Follow our step-by-step guide to achieve seamless animations from image sequences.

We recommend around 25 inference steps, since image quality does not improve much with further inference. For interpolation steps, more is better, but somewhere around 25 to 40 is usually sufficient to create good-quality clips.

1. Using the CLIP Interrogator, we first obtain adequate prompts that match the input images.
2. These prompts are then used to generate multiple images that are later used to create a clip or a GIF.
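To make the interpolation-step recommendation concrete, the sketch below builds the list of interpolation weights for a walk through two or more keyframes (for example, one per prompt or input image). The function name `interpolation_schedule` and its parameters are hypothetical and not part of the repository; the default of 30 steps per segment is simply a value inside the 25 to 40 range mentioned above.

```python
def interpolation_schedule(num_keyframes, steps_per_segment=30):
    """Return (segment_index, t) pairs for a walk through the keyframes.

    Hypothetical helper for illustration. Segment i runs from keyframe i
    to keyframe i + 1, and t moves from 0.0 toward 1.0 within it. Each
    pair corresponds to one generated frame.
    """
    if num_keyframes < 2:
        raise ValueError("need at least two keyframes to interpolate")
    if steps_per_segment < 1:
        raise ValueError("steps_per_segment must be at least 1")

    schedule = []
    for segment in range(num_keyframes - 1):
        for i in range(steps_per_segment):
            schedule.append((segment, i / steps_per_segment))
    # Close the walk exactly on the final keyframe.
    schedule.append((num_keyframes - 2, 1.0))
    return schedule


# Three keyframes with 30 steps per segment give 61 frames in total.
frames = interpolation_schedule(3, steps_per_segment=30)
```

Each `(segment, t)` pair would then be passed, together with the two latents or prompt embeddings of that segment, to whatever interpolation function is in use, and the resulting frames joined into a clip or GIF.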
