GitHub smlbansal/Visual-Navigation-Release: Combining Optimal Control

In this codebase we explore "Combining Optimal Control and Learning for Visual Navigation in Novel Environments". We provide code to run our pretrained model-based method as well as a comparable end-to-end method on geometric, point-navigation tasks in the Stanford Building Parser Dataset. We deploy our simulation-trained algorithm on a TurtleBot 2 to test it in real-world navigation scenarios. Each experiment is visualized from three different viewpoints; however, the robot only sees the "first person view" (also labeled "robot view").

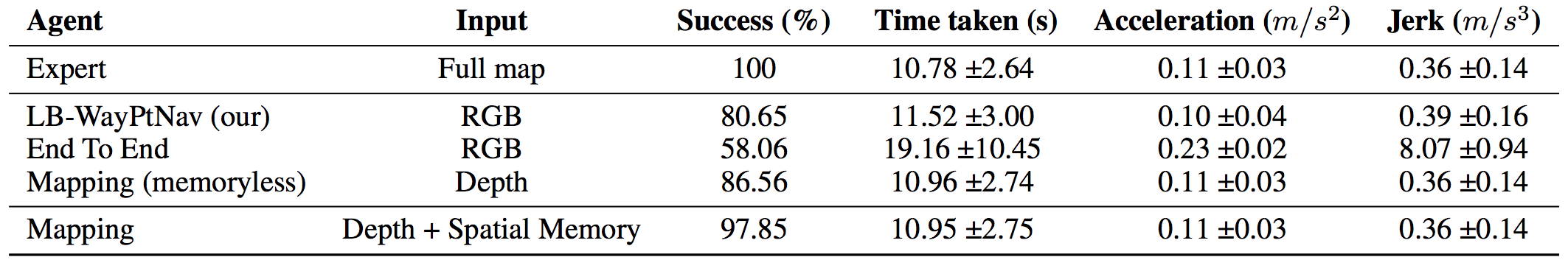

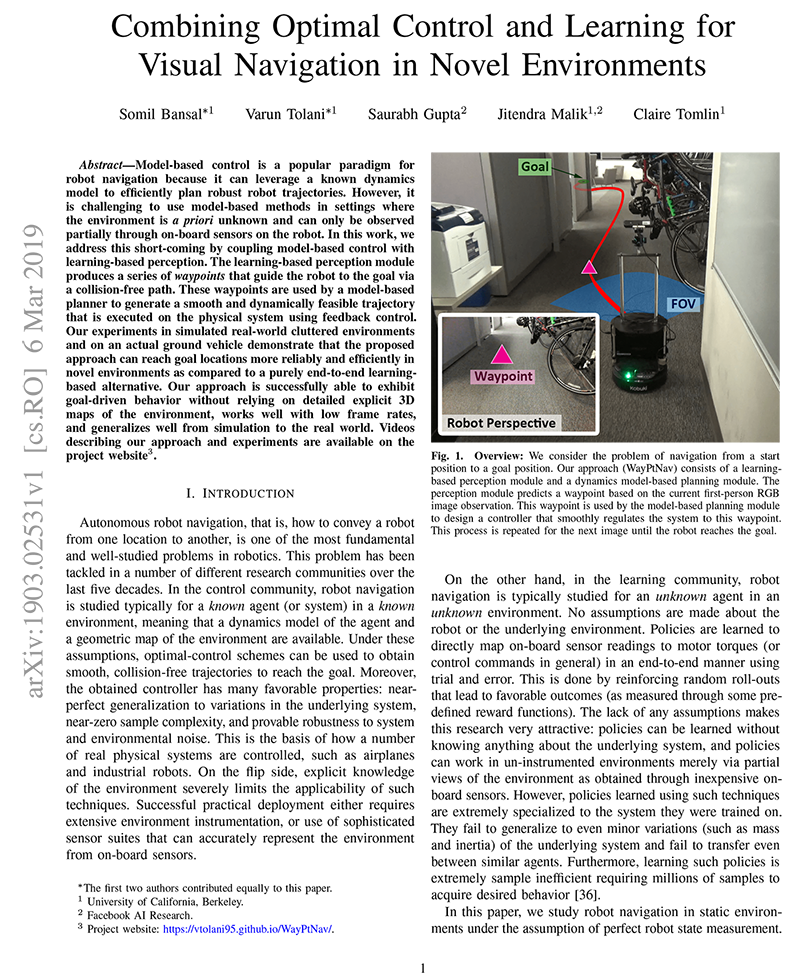

Optimal Control Learned Perception

In this work, we couple model-based control with learning-based perception. The learning-based perception module produces a series of waypoints that guide the robot to the goal via a collision-free path. The hope is to combine the two approaches to leverage the robust performance of optimal control while navigating an unknown environment. Our approach, learning-based waypoint for navigation around dynamic humans (LB-WayPtNav-DH), uses two modules for navigation around humans: perception, and planning and control (see Appendix VIII-A for more details).
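The two-module structure described above can be illustrated with a minimal sketch of a replanning loop. Everything here is a hypothetical stand-in rather than code from the repository: `predict_waypoint` replaces the learned perception module with a simple step toward the goal, and `plan_and_track` replaces the spline planner and tracking controller with linear interpolation.

```python
import math


def predict_waypoint(state, goal, step=0.5):
    """Hypothetical stand-in for the learned perception module.

    A real module would map an RGB image to a waypoint; this stub
    just steps toward the goal so the loop is runnable.
    """
    x, y = state
    gx, gy = goal
    dist = math.hypot(gx - x, gy - y)
    if dist <= step:
        return goal
    return (x + step * (gx - x) / dist, y + step * (gy - y) / dist)


def plan_and_track(state, waypoint, n_steps=5):
    """Hypothetical stand-in for planning and control.

    Linearly interpolates from the current state to the waypoint
    (the method described in the text uses spline trajectories).
    """
    x, y = state
    wx, wy = waypoint
    return [
        (x + (wx - x) * k / n_steps, y + (wy - y) * k / n_steps)
        for k in range(1, n_steps + 1)
    ]


def navigate(start, goal, tol=1e-6, max_iters=100):
    """Alternate perception and planning until the goal is reached."""
    state = start
    path = [start]
    for _ in range(max_iters):
        if math.hypot(goal[0] - state[0], goal[1] - state[1]) <= tol:
            break
        waypoint = predict_waypoint(state, goal)
        segment = plan_and_track(state, waypoint)
        path.extend(segment)
        state = segment[-1]
    return path
```

Calling `navigate((0.0, 0.0), (2.0, 0.0))` returns the list of visited positions, ending at the goal. The point of the sketch is only the division of labor: one function chooses where to go next, the other decides how to get there.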

We take a factorized approach to navigation that uses learning to make high-level navigation decisions in unknown environments and leverages optimal control to produce smooth trajectories and a robust tracking controller. The output is a sequence of dynamically feasible waypoints and corresponding spline trajectories. Optimal trajectories can be found in the training phase because this process is done in simulation with perfect knowledge of the environment. The cost function for a trajectory is $J(z, u) = \sum_{i=0}^{T} J_i(z_i, u_i)$, where $z_i$ and $u_i$ are the state and control at time step $i$.

We propose an approach that combines learning-based perception with model-based optimal control to navigate among humans based only on monocular, first-person RGB images. The paper "Combining Optimal Control and Learning for Visual Navigation in Novel Environments" by Somil Bansal et al. presents a framework for autonomous navigation that integrates model-based control with learning-based perception.
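The trajectory cost mentioned above is a sum of per-step costs over the states and controls of a trajectory. A minimal sketch of that summation follows. The per-step cost used here (squared distance to the goal, a penalty for coming within `safe_dist` of an obstacle, and a small control-effort term) is an assumption chosen for illustration, as are all function and parameter names; the source text does not specify the form of the per-step cost.

```python
import math


def step_cost(z, u, goal, obstacles, safe_dist=0.5, effort_weight=0.1):
    """Hypothetical per-step cost J_i(z_i, u_i).

    z is a position (x, y); u is a control (v, w).
    The three terms below are illustrative assumptions.
    """
    x, y = z
    v, w = u
    goal_term = (x - goal[0]) ** 2 + (y - goal[1]) ** 2
    obstacle_term = sum(
        max(0.0, safe_dist - math.hypot(x - ox, y - oy)) ** 2
        for ox, oy in obstacles
    )
    effort_term = effort_weight * (v ** 2 + w ** 2)
    return goal_term + obstacle_term + effort_term


def trajectory_cost(states, controls, goal, obstacles):
    """Total cost J(z, u): the sum of J_i(z_i, u_i) over all steps."""
    if len(states) != len(controls):
        raise ValueError("states and controls must have the same length")
    return sum(
        step_cost(z, u, goal, obstacles)
        for z, u in zip(states, controls)
    )
```

With perfect knowledge of obstacle positions, as in simulation, a cost of this shape can be evaluated for any candidate trajectory, which is what makes it possible to search for low-cost trajectories during training.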
