GitHub: reasoning-grasping/reasoning-grasping.github.io

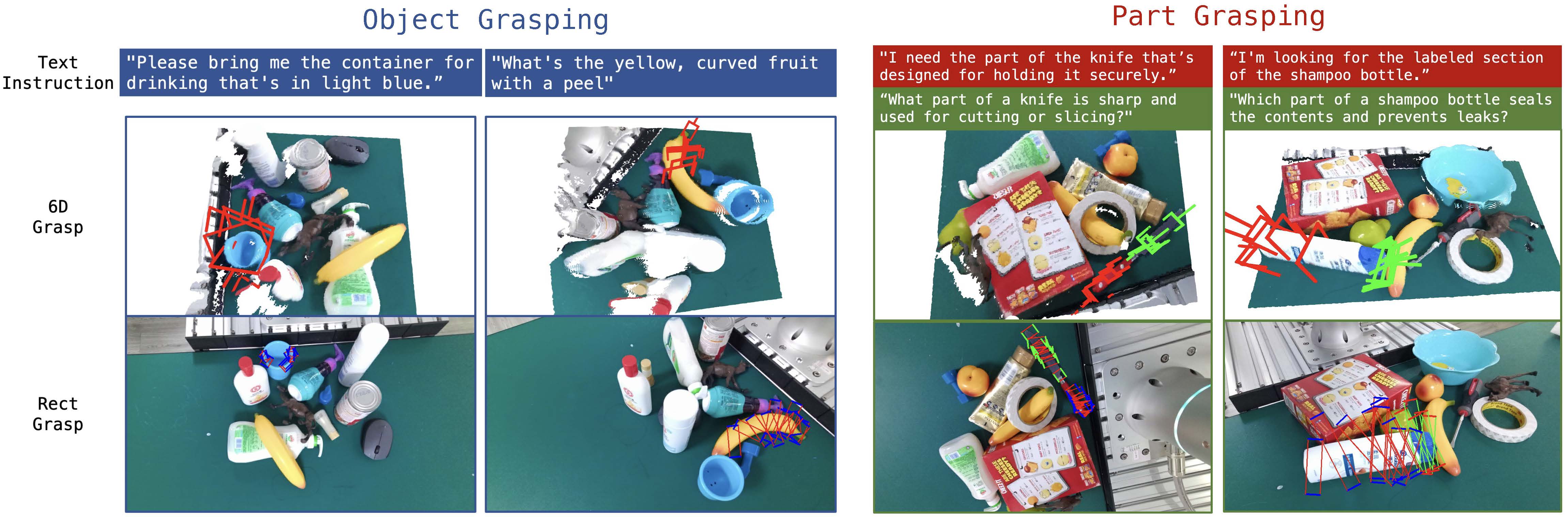

We propose an end-to-end reasoning grasping model that integrates a multimodal large language model (LLM) with a vision-based robotic grasping framework. The resulting model interprets complex and implicit instructions, accurately predicts robotic grasping poses for target objects or specific parts within cluttered environments, and supports multi-round conversations with users.
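The data flow described above (instruction and image in, a textual answer and a grasp pose out, with dialogue history kept across rounds) can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation: `GraspPose`, `GraspingSession`, and both stub functions are hypothetical names, the planar pose layout is an assumption, and the sketch passes the answer text to the grasp predictor where the real model would pass learned features.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional, Tuple


@dataclass
class GraspPose:
    # Hypothetical planar grasp: center, rotation, and gripper opening.
    x: float
    y: float
    angle_rad: float
    width: float


@dataclass
class GraspingSession:
    # Both callables stand in for learned components (assumptions).
    language_model: Callable[[List[str], Any], str]
    grasp_head: Callable[[str, Any], Optional[GraspPose]]
    history: List[str] = field(default_factory=list)

    def step(self, instruction: str, image: Any) -> Tuple[str, Optional[GraspPose]]:
        # Record the user turn so later rounds can refer back to it.
        self.history.append("user: " + instruction)
        answer = self.language_model(self.history, image)
        self.history.append("assistant: " + answer)
        # The grasp predictor is conditioned on the language model's output.
        return answer, self.grasp_head(answer, image)


def stub_language_model(history: List[str], image: Any) -> str:
    # Placeholder: counts user turns instead of running a real model.
    turns = sum(1 for line in history if line.startswith("user: "))
    return f"turn {turns}: grasp the mug handle"


def stub_grasp_head(answer: str, image: Any) -> Optional[GraspPose]:
    # Placeholder: returns a fixed pose whenever the answer names a grasp.
    if "grasp" not in answer:
        return None
    return GraspPose(x=0.5, y=0.5, angle_rad=0.0, width=0.08)
```

Calling `GraspingSession(stub_language_model, stub_grasp_head).step("I need a drink", image=None)` returns the stub answer together with the fixed pose. A second call on the same session sees the earlier turns in `history`, which is what makes multi-round conversation possible.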

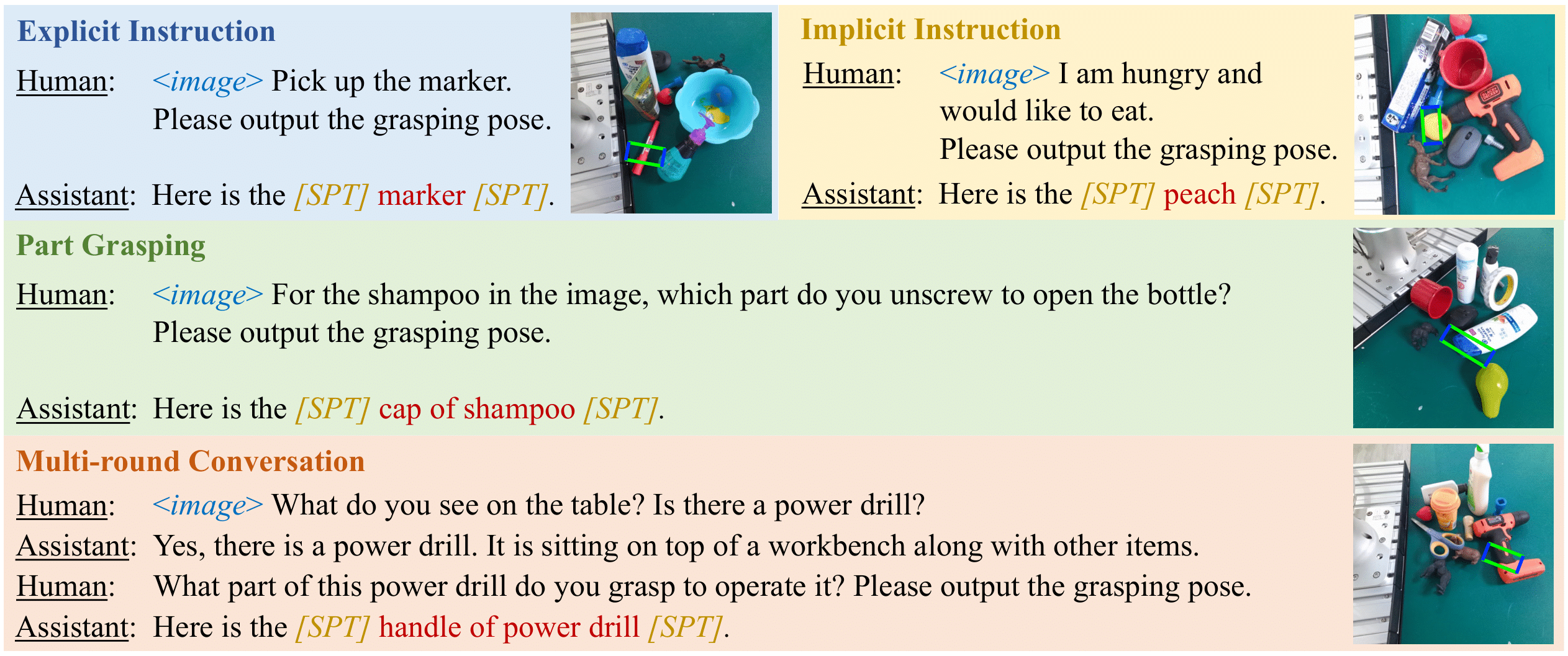

Reasoning Grasping Via Multimodal Large Language Model

The project page is hosted in the public repository reasoning-grasping.github.io (JavaScript, updated Oct 14, 2024).

The reasoning phase analyzes the object's shape and structure based on its category and generates corresponding grasping strategies. The figure illustrates examples of reasoning templates.
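One way to picture a category-based reasoning template is a lookup from object category to a sentence that describes the object's structure and a matching grasp strategy. The sketch below is an assumption for illustration only: the categories, the template wording, and the names `REASONING_TEMPLATES`, `DEFAULT_TEMPLATE`, and `build_reasoning` are hypothetical and are not taken from the paper.

```python
# Hypothetical templates: category names and wording are illustrative only.
REASONING_TEMPLATES = {
    "mug": "A {name} has a body and a handle; grasp it by the handle.",
    "knife": "A {name} has a blade and a handle; grasp the handle, not the blade.",
}

# Fallback used when the category has no dedicated template.
DEFAULT_TEMPLATE = "A {name} has no known part structure; grasp it near its center."


def build_reasoning(category: str) -> str:
    """Return a reasoning sentence for the given object category."""
    name = category.lower()
    template = REASONING_TEMPLATES.get(name, DEFAULT_TEMPLATE)
    return template.format(name=name)
```

For example, `build_reasoning("Mug")` yields the handle-based strategy, while an unlisted category such as `"sponge"` falls back to the default sentence.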

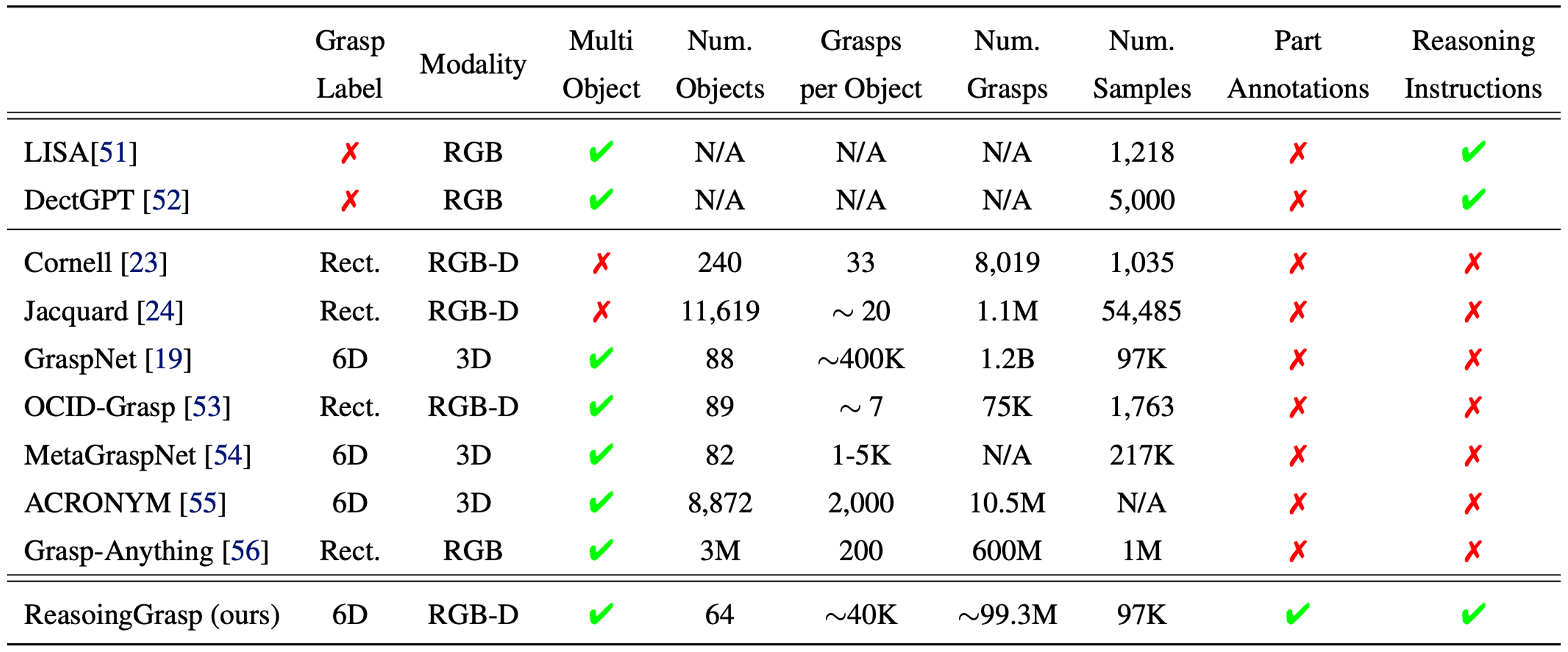

In this section, we present our model for reasoning grasping, which uses the reasoning ability of multimodal LLMs by integrating features extracted from their textual outputs into the robotic grasping prediction process.

Reasoning Grasping via Multimodal Large Language Model. Shiyu Jin, Jinxuan Xu, Yutian Lei, Liangjun Zhang. Under review. [paper]

Reasoning Tuning Grasp: Adapting Multimodal Large Language Models for Robotic Grasping. Jinxuan Xu, Shiyu Jin, Yutian Lei, Yuqian Zhang, Liangjun Zhang. Workshop in CoRL 2023. Under review. [paper] [website]

Experiments demonstrate state-of-the-art performance in grasp reasoning and execution, highlighting the potential of VLMs for instruction-based reasoning and grasping.

Performing robotic grasping from a cluttered bin based on human instructions is a challenging task, as it requires understanding both the nuances of free-form language and the spatial relationships between objects.

