GitHub: oshapio Intermediate Layer Generalization: Using Intermediate Layers to Improve OOD Generalization

We provide notebooks to reproduce the main experiments using a ResNet-18 model, showing the utility of intermediate layers for out-of-distribution (OOD) generalization. The notebooks contain detailed instructions on how to run the experiments and visualize the results.

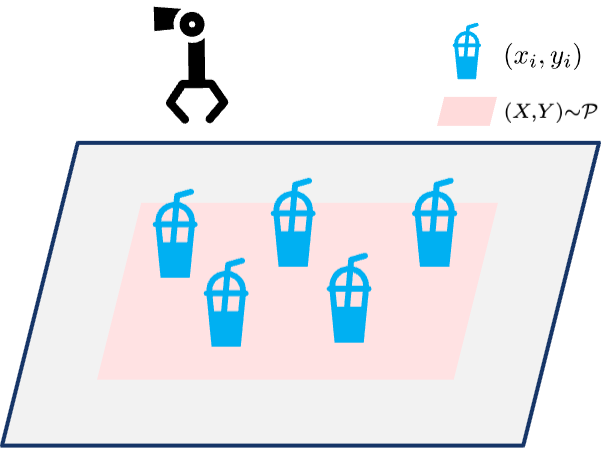

Yixiao Feng

Using intermediate layers to improve OOD generalization for vision tasks.

It has been suggested that classifiers trained on a network's last-layer representations can still find useful features that hold up under distribution shifts. In this work, we question the use of last-layer representations for out-of-distribution (OOD) generalization and explore the utility of intermediate layers. To this end, we introduce intermediate layer classifiers (ILCs). We observe that (1) when OOD data is available ("few-shot"), the best location to train the linear probe is at an intermediate layer (layer 6 for the ResNet and layer 7 for the ViT), and relying on the last layer gives suboptimal results.
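The idea of training a linear probe at several depths and keeping the best one can be illustrated with a small sketch. It assumes features have already been extracted from each candidate layer (the keys `"layer6"` and `"layer8"` are hypothetical labels, not the repository's naming) and, as a simplification, swaps in a closed-form ridge-regression probe on one-hot targets rather than whatever probe training the notebooks actually use. A probe is fit per layer on a few labelled OOD samples, scored on held-out OOD samples, and the best-scoring layer is kept. The data is synthetic, built so that only one layer carries class signal; it demonstrates the selection procedure, not the reported results.

```python
import numpy as np


def fit_linear_probe(features, labels, num_classes, l2=1e-2):
    """Closed-form ridge-regression probe on one-hot targets (a stand-in
    for a logistic-regression probe). Returns weights incl. a bias row."""
    n, d = features.shape
    X = np.hstack([features, np.ones((n, 1))])  # append a bias column
    Y = np.eye(num_classes)[labels]             # one-hot targets, shape (n, C)
    reg = l2 * np.eye(d + 1)
    reg[-1, -1] = 0.0                           # do not penalize the bias
    return np.linalg.solve(X.T @ X + reg, X.T @ Y)


def probe_accuracy(W, features, labels):
    """Fraction of samples whose highest-scoring class matches the label."""
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    preds = np.argmax(X @ W, axis=1)
    return float(np.mean(preds == labels))


def select_probe_layer(train_feats, train_labels, val_feats, val_labels, num_classes):
    """train_feats / val_feats map a layer name to an (n, d) feature array.
    Fits one probe per layer and returns the best layer plus all scores."""
    scores = {}
    for layer in train_feats:
        W = fit_linear_probe(train_feats[layer], train_labels, num_classes)
        scores[layer] = probe_accuracy(W, val_feats[layer], val_labels)
    best = max(scores, key=scores.get)
    return best, scores


# --- Usage on synthetic data (hypothetical layer names, not real features) ---
rng = np.random.default_rng(0)
num_classes = 3


def make_split(n):
    labels = rng.integers(0, num_classes, size=n)
    class_means = 3.0 * np.eye(num_classes, 8)  # separable class centers
    feats = {
        # "layer6": class-dependent features plus small noise
        "layer6": class_means[labels] + 0.5 * rng.standard_normal((n, 8)),
        # "layer8": features that carry no class information at all
        "layer8": rng.standard_normal((n, 8)),
    }
    return feats, labels


train_feats, train_labels = make_split(60)   # "few-shot" OOD training set
val_feats, val_labels = make_split(300)      # held-out OOD evaluation set
best, scores = select_probe_layer(train_feats, train_labels,
                                  val_feats, val_labels, num_classes)
print(best, scores)
```

Selecting the layer on held-out OOD data, rather than fixing it to the last layer, is what lets an intermediate layer win when its features transfer better under the shift.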

