GitHub Nidhi 93: SVM, Decision Trees and Boosting Algorithm

This project implements three supervised learning methods, namely support vector machine (SVM), decision tree, and boosting, on two distinct data sets, including the SGEMM GPU kernel performance data set.

Now that we have an understanding of how this can be used in our generated examples, let's use a decision tree to predict the classes on the breast cancer dataset introduced earlier.
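A minimal sketch of that decision tree experiment might look like the following. It assumes scikit-learn and uses the copy of the breast cancer dataset bundled with it, which may not be the exact data the original text refers to. The depth limit, split ratio, and random seeds are illustrative choices, not values from the source.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Load scikit-learn's bundled breast cancer dataset (assumed stand-in
# for the dataset mentioned in the text).
X, y = load_breast_cancer(return_X_y=True)

# Hold out a quarter of the samples for evaluation, keeping class balance.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# A shallow tree; max_depth=3 is an illustrative choice to limit overfitting.
tree = DecisionTreeClassifier(max_depth=3, random_state=0)
tree.fit(X_train, y_train)

train_acc = tree.score(X_train, y_train)
test_acc = tree.score(X_test, y_test)
print(f"train accuracy: {train_acc:.3f}")
print(f"test accuracy:  {test_acc:.3f}")
```

Comparing the training and test accuracy gives a quick indication of whether the chosen depth overfits. An unrestricted tree typically reaches perfect training accuracy while generalizing worse.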

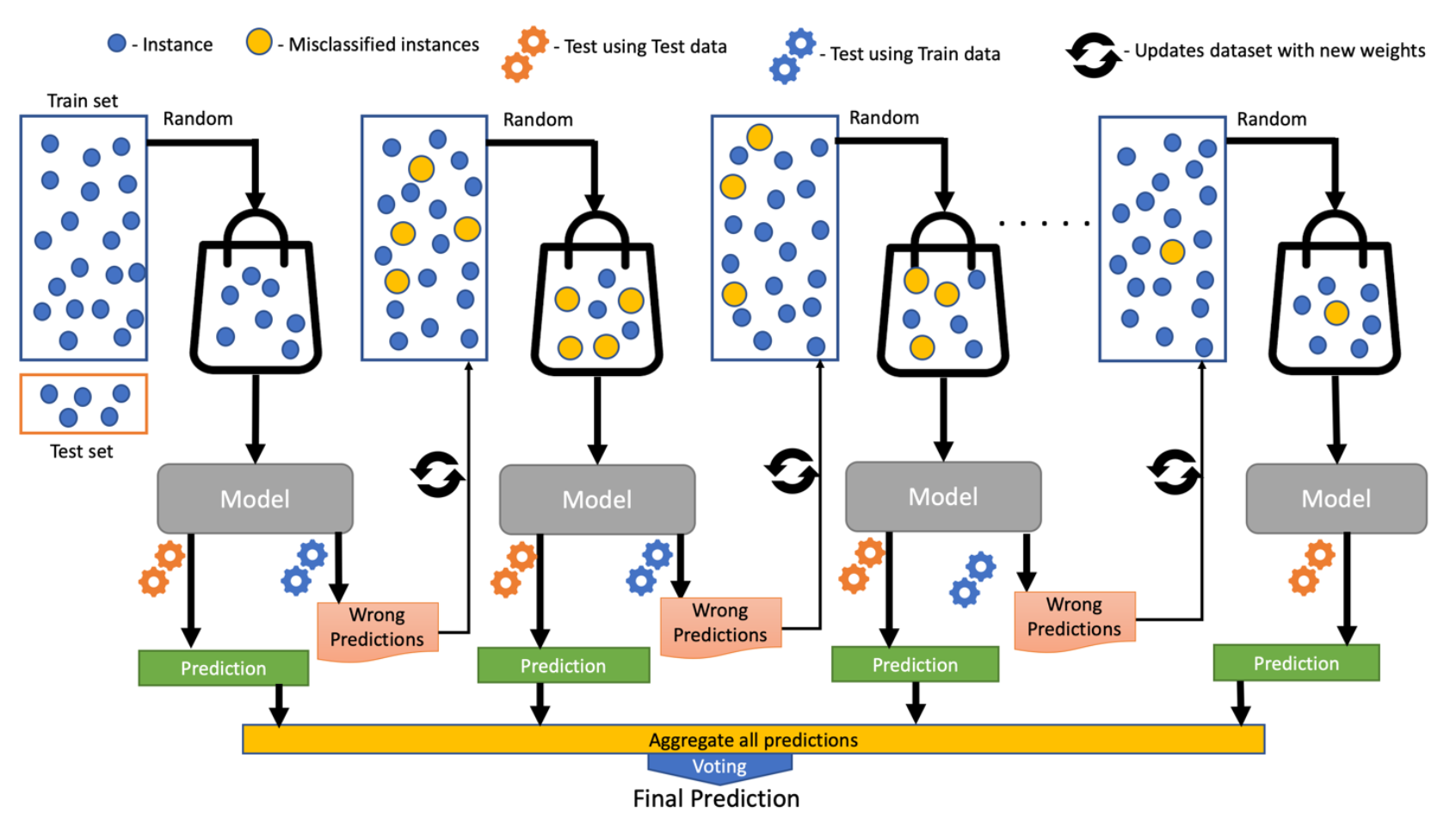

In this post, we dive into the world of supervised learning, comparing the performance of four popular algorithms: k-nearest neighbors (KNN), support vector machines (SVM), neural networks (NN), and decision trees with boosting (specifically, AdaBoost).

AdaBoost uses simple decision trees with a single split, known as decision stumps, as its weak learners. Gradient boosting can use a wide range of base learners, such as decision trees and linear models. AdaBoost is more sensitive to noisy data and outliers because it aggressively increases the weights of misclassified examples.

This tutorial will explain boosted trees in a self-contained and principled way using the elements of supervised learning. We think this explanation is cleaner, more formal, and motivates the model formulation used in XGBoost.

Binary classification for a Kaggle competition: SVM, LightGBM, decision tree, gradient boosting, feature engineering, and CatBoost. In this article, I will code for the Kaggle coding.
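As a rough illustration of the contrast between AdaBoost and gradient boosting described above, the sketch below trains both on scikit-learn's bundled breast cancer dataset. The dataset, the number of estimators, and the tree depths are assumptions made for the example and do not come from the posts quoted here. Note that scikit-learn's `GradientBoostingClassifier` uses regression trees only, so the linear base learners mentioned above are not shown.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import AdaBoostClassifier, GradientBoostingClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# AdaBoost with decision stumps (trees with a single split) as weak learners.
# The base learner is passed positionally because its keyword name has
# changed between scikit-learn releases.
stump = DecisionTreeClassifier(max_depth=1)
ada = AdaBoostClassifier(stump, n_estimators=100, random_state=0)
ada.fit(X_train, y_train)

# Gradient boosting with slightly deeper trees; each tree is fit to the
# gradient of the loss with respect to the current ensemble's predictions.
gbm = GradientBoostingClassifier(n_estimators=100, max_depth=3, random_state=0)
gbm.fit(X_train, y_train)

ada_acc = ada.score(X_test, y_test)
gbm_acc = gbm.score(X_test, y_test)
print(f"AdaBoost (stumps) test accuracy:  {ada_acc:.3f}")
print(f"Gradient boosting test accuracy:  {gbm_acc:.3f}")
```

On a clean dataset like this one the two methods usually score similarly. The sensitivity of AdaBoost to noise would only show up after deliberately corrupting some training labels, which this sketch does not do.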

