Github Jprellberg Learned Weight Sharing Deep Multi Task Learning By

This is the code release for the paper: Prellberg, J., Kramer, O. (2020). Learned Weight Sharing for Deep Multi-Task Learning by Natural Evolution Strategy and Stochastic Gradient Descent.

Adaptive Weight Assignment Scheme For Multi Task Learning Pdf Deep

The repository is described as deep multi-task learning by optimizing weight sharing between networks using NES and SGD (page title: "learned weight sharing run training.py at master · jprellberg learned weight sharing"). In deep multi-task learning, weights of task-specific networks are shared between tasks to improve performance on each individual task. Because it is difficult to decide which weights to share between layers, human-designed architectures often share everything except a final task-specific layer. The accompanying paper appeared in the 2020 International Joint Conference on Neural Networks, IJCNN 2020, Glasgow, UK, July 19-24, 2020 (IEEE, 2020, in print) and is available at arxiv.org/abs/2003.10159.
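The hand-designed baseline mentioned above, where every layer is shared except a last task-specific one, can be sketched in a few lines. This is only an illustrative sketch in plain Python, not code from the repository: the class name `HardSharingModel`, the layer sizes, and the task names `task_a` and `task_b` are all assumptions made for the example.

```python
import random


def make_layer(n_in, n_out, rng):
    """Create a dense layer with small random weights and zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return {"weights": weights, "bias": [0.0] * n_out}


def linear(layer, x):
    """Apply a dense layer: one output value per weight row."""
    return [
        sum(w * xi for w, xi in zip(row, x)) + b
        for row, b in zip(layer["weights"], layer["bias"])
    ]


def relu(values):
    return [max(0.0, v) for v in values]


class HardSharingModel:
    """Hypothetical model: one shared hidden layer, one output head per task."""

    def __init__(self, n_in, n_hidden, task_outputs, seed=0):
        rng = random.Random(seed)
        # Shared by every task: all tasks read and would update these weights.
        self.shared = make_layer(n_in, n_hidden, rng)
        # Task specific: each task owns its final layer.
        self.heads = {
            task: make_layer(n_hidden, n_out, rng)
            for task, n_out in task_outputs.items()
        }

    def forward(self, task, x):
        hidden = relu(linear(self.shared, x))
        return linear(self.heads[task], hidden)


model = HardSharingModel(n_in=3, n_hidden=4, task_outputs={"task_a": 2, "task_b": 1})
example_input = [1.0, 0.5, -0.2]
out_a = model.forward("task_a", example_input)
out_b = model.forward("task_b", example_input)
```

Both calls pass through the same `shared` layer and differ only in the head that is applied, which is the fixed sharing pattern that learned approaches try to improve on.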

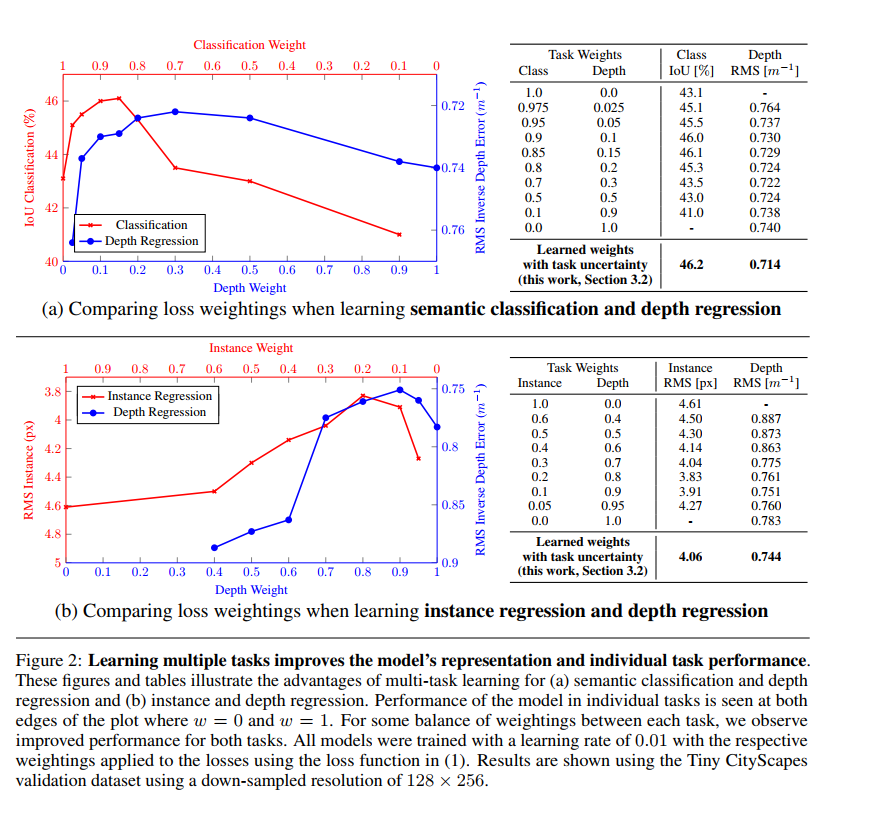

Multi Task Learning Using Uncertainty To Weigh Losses For Scene

To demonstrate the effectiveness of the proposed DST algorithm, we perform multi-task learning on three applications and two architectures. This work develops a multi-task DNN for learning representations across multiple tasks, not only leveraging large amounts of cross-task data, but also benefiting from a regularization effect that leads to more general representations to help tasks in new domains. Bibliographic details on Learned Weight Sharing for Deep Multi-Task Learning by Natural Evolution Strategy and Stochastic Gradient Descent.
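The paper title pairs a natural evolution strategy (NES) with stochastic gradient descent, but the text here does not spell out the algorithm. The following is therefore only a toy sketch of the general idea of learning a sharing decision with an NES-style search gradient, not the authors' implementation. Everything in it is an assumption made for illustration: a second task chooses per layer between "shared" and "own" weights, `toy_fitness` is a made-up stand-in for validation performance, and the SGD updates of the actual network weights are left out.

```python
import math
import random

# Hypothetical setup: per layer, task B either reuses task A's weights
# ("shared", index 0) or uses private weights ("own", index 1).
CHOICES = ["shared", "own"]
NUM_LAYERS = 2


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_fitness(assignment):
    """Made-up score (an assumption, not a measured result): rewards sharing
    the first layer and keeping the last layer task specific."""
    return float(assignment[0] == 0) + float(assignment[1] == 1)


def nes_step(logits, rng, population=20, learning_rate=0.5):
    """One search-gradient update of the categorical sharing distribution."""
    probs = [softmax(row) for row in logits]
    samples = [
        [rng.choices(range(len(CHOICES)), weights=p)[0] for p in probs]
        for _ in range(population)
    ]
    scores = [toy_fitness(s) for s in samples]
    baseline = sum(scores) / population  # reduces the variance of the estimate
    for layer in range(NUM_LAYERS):
        for choice in range(len(CHOICES)):
            # Gradient of log-probability w.r.t. a logit: one-hot minus prob.
            grad = sum(
                (score - baseline)
                * ((1.0 if s[layer] == choice else 0.0) - probs[layer][choice])
                for s, score in zip(samples, scores)
            ) / population
            logits[layer][choice] += learning_rate * grad


rng = random.Random(0)
logits = [[0.0, 0.0] for _ in range(NUM_LAYERS)]
for _ in range(200):
    nes_step(logits, rng)
    # In a full training loop, SGD steps on the network weights would go here.

final_probs = [softmax(row) for row in logits]
```

The sharing decision is discrete, so it cannot be trained by backpropagation directly; sampling assignments and following a score-based search gradient is one way to optimize it while the differentiable weights are handled separately.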

Github Hosseinshn Basic Multi Task Learning This Is A Repository For

Github Jessiyang0 Multi Task Learning Model This Work Proposes A

Github Znsngk Distributed Deep Learning Task Management System Based On
