GitHub: Jed Z Ngram Text Prediction (Predicting News Text Content with an N-Gram Language Model)

This project applies an n-gram language model to predicting the content of news text. It is divided into four stages:

1. Crawl news articles from the technology channels of major news websites.
2. Preprocess the data.
3. Implement an n-gram language model.
4. Evaluate the model on a test set.

Blog post: jeddd article python ngram language prediction.
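Stages 2 to 4 can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the project's own code. The crawling step is omitted and the tiny corpus is made up. The regex tokenizer, the `<s>`/`</s>` boundary markers, the bigram order and add-one (Laplace) smoothing are all choices made for brevity. Evaluation uses perplexity on held-out sentences, which is one common way to check a language model on a test set.

```python
import math
import re
from collections import Counter

def preprocess(text):
    """Stage 2 (assumed scheme): lowercase, split into sentences, tokenize, add boundary markers.
    The \\w+ tokenizer assumes whitespace-separated text; Chinese news would need a word segmenter."""
    sentences = [s for s in re.split(r"[.!?]+", text.lower()) if s.strip()]
    return [["<s>"] + re.findall(r"\w+", s) + ["</s>"] for s in sentences]

def train_bigram(sentences):
    """Stage 3: count bigram contexts and bigrams, and collect the training vocabulary."""
    contexts, bigrams = Counter(), Counter()
    for sent in sentences:
        contexts.update(sent[:-1])            # every token that starts a bigram
        bigrams.update(zip(sent, sent[1:]))
    vocab = {w for sent in sentences for w in sent}
    return contexts, bigrams, vocab

def perplexity(sentences, contexts, bigrams, vocab):
    """Stage 4: add-one smoothed bigram perplexity on held-out sentences."""
    log_prob, n_tokens = 0.0, 0
    V = len(vocab)
    for sent in sentences:
        for prev, word in zip(sent, sent[1:]):
            p = (bigrams[(prev, word)] + 1) / (contexts[prev] + V)
            log_prob += math.log(p)
            n_tokens += 1
    return math.exp(-log_prob / n_tokens)

train = preprocess("The model predicts the next word. The model reads news.")
test = preprocess("The model reads the news.")
contexts, bigrams, vocab = train_bigram(train)
print(perplexity(test, contexts, bigrams, vocab))
```

A lower perplexity means the model finds the test text less surprising. Smoothing keeps unseen bigrams in the test set from producing a zero probability, which would make the logarithm undefined.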

GitHub: Rfdharma Ngram Textgenerator Story

A framework for developing n-gram models for text prediction. It provides data cleaning, data sampling, token extraction from text, model generation, model evaluation and word prediction.

Traditionally, n-grams are used to build language models that predict which word comes next given a history of words. We'll use the `lm` module in NLTK to get a sense of how non-neural language modelling is done. This article covers a step-by-step Python implementation of an n-gram model that predicts the probability of a given sentence, using a dataset for training.
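NLTK's `nltk.lm` module (for example `MLE` together with `nltk.lm.preprocessing.padded_everygram_pipeline`) handles this kind of modelling. The sketch below shows the underlying idea of next-word prediction in plain Python, so it runs without NLTK. The class `NextWordPredictor` is hypothetical, as is its rule of using the longest context seen in training and falling back to shorter ones. That rule is a simplification for illustration, not a proper backoff probability model such as Katz backoff.

```python
from collections import Counter, defaultdict

class NextWordPredictor:
    """Hypothetical helper: counts n-gram continuations and predicts the next word."""

    def __init__(self, n=3):
        self.n = n
        # continuations[context_tuple] -> Counter of words that followed that context
        self.continuations = defaultdict(Counter)

    def fit(self, sentences):
        for tokens in sentences:
            for i, word in enumerate(tokens):
                # record `word` after every preceding context of length 0 .. n-1
                for k in range(self.n):
                    if i - k < 0:
                        break
                    context = tuple(tokens[i - k:i])
                    self.continuations[context][word] += 1
        return self

    def predict(self, history):
        """Most frequent next word, using the longest context that was seen in training."""
        history = tuple(history)
        for k in range(min(self.n - 1, len(history)), -1, -1):
            context = history[len(history) - k:]   # last k words (empty tuple when k == 0)
            if self.continuations.get(context):
                return self.continuations[context].most_common(1)[0][0]
        return None  # nothing was trained

corpus = [s.split() for s in [
    "the model predicts the next word",
    "the model reads the news",
    "the news covers technology",
]]
predictor = NextWordPredictor(n=3).fit(corpus)
print(predictor.predict(["reads", "the"]))   # trigram context seen in training
print(predictor.predict(["unseen", "the"]))  # falls back to the bigram context ("the",)
```

Predicting the most frequent continuation is the simplest decision rule. A text generator could instead sample from the continuation counts to produce more varied output.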

GitHub: Bragdond Naive Bayes Ngram Text Classifier Nlp Basic Naive

To train a bigram model, we first need to turn the text into bigrams, that is, pairs of adjacent words. NLTK's `ngrams` function performs this conversion; applied to the first sentence of our text, it yields one tuple for each pair of neighbouring words.

N-gram language modelling with NLTK in Python is an accessible approach to natural language processing tasks. The NLTK library makes it straightforward to build and analyse n-gram models, which capture and predict local patterns in language.

Language modelling involves determining the probability of a sequence of words. It is fundamental to many natural language processing (NLP) applications, such as speech recognition, machine translation and spam filtering, where predicting or ranking the likelihood of phrases and sentences is crucial.

Predicting Next Words Using N-Gram Model, by Ayako Nagao
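The bigram conversion and the sequence probability described above can be sketched without NLTK. NLTK's `nltk.util.ngrams(sequence, n, pad_left=..., pad_right=..., left_pad_symbol=..., right_pad_symbol=...)` does the conversion. The helper below reimplements only the padded bigram case, assuming `<s>` and `</s>` as the pad symbols. It then scores a sentence with the chain rule, multiplying the maximum-likelihood estimates P(w_i | w_(i-1)) = count(w_(i-1), w_i) / count(w_(i-1)). The function names and the toy corpus are illustrative assumptions.

```python
from collections import Counter

def bigrams(tokens, pad=True):
    """Turn a token list into bigrams, optionally padded with <s> and </s> (assumed symbols)."""
    if pad:
        tokens = ["<s>"] + list(tokens) + ["</s>"]
    return list(zip(tokens, tokens[1:]))

def sentence_probability(sentence, corpus):
    """Unsmoothed MLE bigram probability of `sentence`, computed with the chain rule."""
    bigram_counts, context_counts = Counter(), Counter()
    for sent in corpus:
        pairs = bigrams(sent)
        bigram_counts.update(pairs)
        context_counts.update(prev for prev, _ in pairs)
    prob = 1.0
    for prev, word in bigrams(sentence):
        if context_counts[prev] == 0:
            return 0.0  # unseen context: MLE assigns no probability
        prob *= bigram_counts[(prev, word)] / context_counts[prev]
    return prob

corpus = [["the", "model", "reads", "news"], ["the", "model", "predicts", "words"]]
print(bigrams(corpus[0]))
# [('<s>', 'the'), ('the', 'model'), ('model', 'reads'), ('reads', 'news'), ('news', '</s>')]
print(sentence_probability(["the", "model", "reads", "news"], corpus))
# 0.5  ("model" is followed by "reads" in one of its two occurrences)
```

Without smoothing, any sentence containing an unseen bigram gets probability zero. Practical models therefore add smoothing or backoff before ranking sentences.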

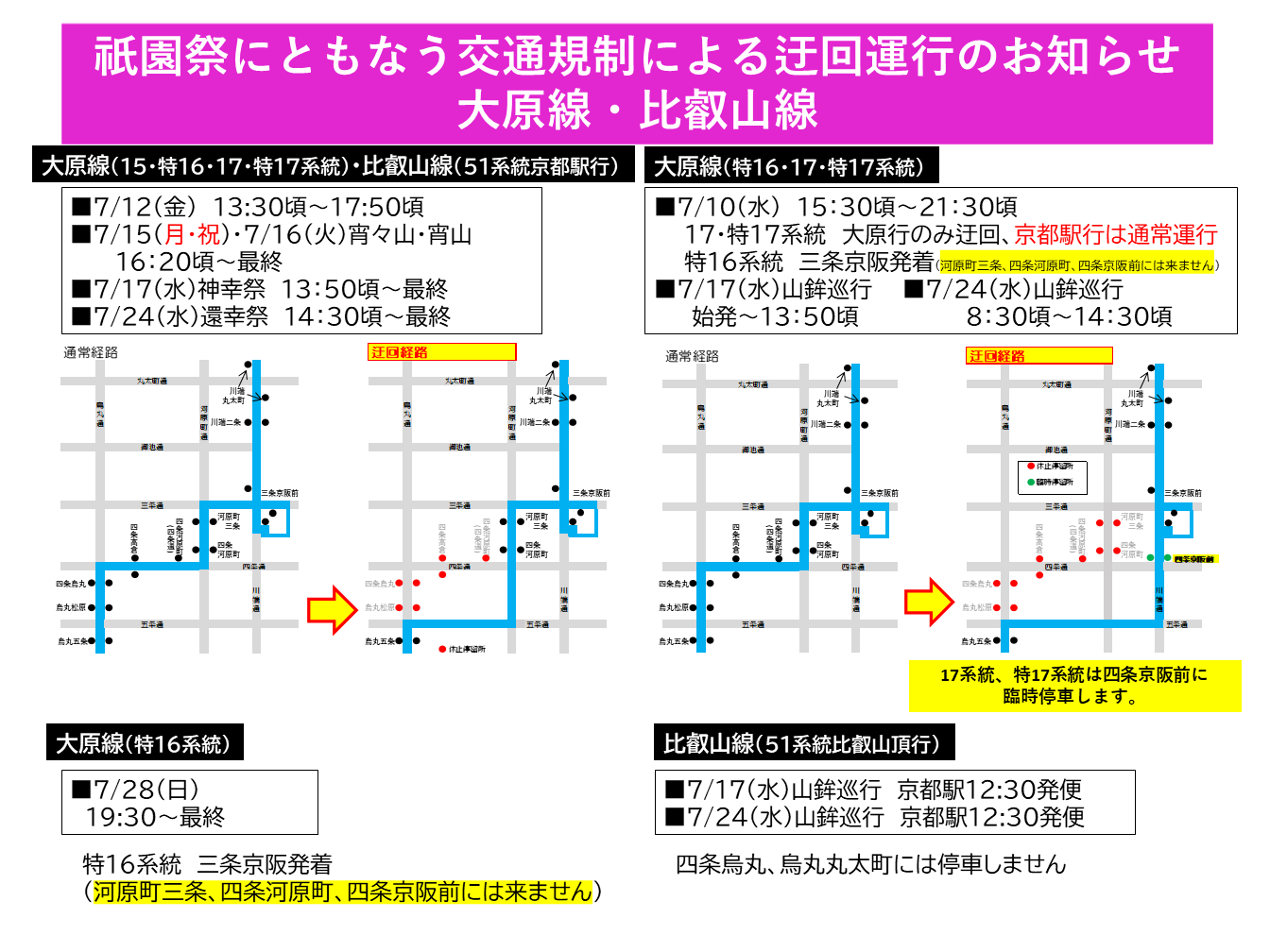
