GitHub infinitusposs: Multi-Agent Path Finding (MAPF) with Heuristics

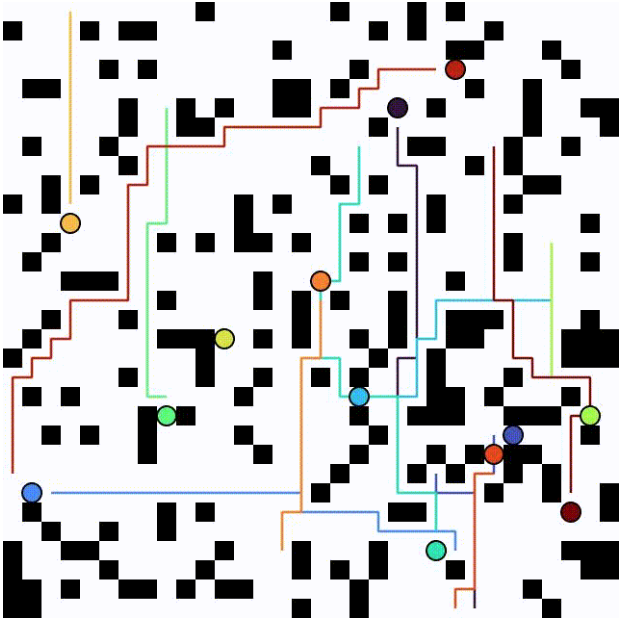

GitHub acforvs: Multi-Agent Pathfinding, Heuristic Search vs. Learning

Anonymous multi-agent path finding (MAPF) with Conflict-Based Search (CBS) and space-time A*. An algorithm for prioritized MAPF in grid worlds in which moves in arbitrary directions are allowed (each agent may follow an any-angle path on the grid). A multi-agent pickup and delivery (MAPD) solver with a FIFO constraint, based on a windowed Priority-Based Search (PBS) method. In one-shot MAPF, the goal is to compute collision-free paths that take agents from their start positions to their target locations while minimizing a predefined objective, such as makespan or total path length.
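The prioritized approach and the space-time A* search mentioned above can be illustrated with a small sketch. Agents are planned one at a time in a fixed priority order. Each agent runs A* over (cell, timestep) states and avoids the cells and edge swaps already reserved by higher-priority agents. The sketch also reports the two objectives named above. It assumes a 4-connected grid with unit-cost moves and waits, so it does not cover any-angle movement, and it is prioritized planning, not CBS. All function names and the `max_t` horizon are hypothetical and are not taken from any repository listed here.

```python
import heapq
from typing import List, Optional, Set, Tuple

# Illustrative sketch: helper names and the 4-connected grid model are assumptions.
Cell = Tuple[int, int]


def neighbors(grid: List[str], cell: Cell) -> List[Cell]:
    """Wait in place or step to a free 4-connected neighbour ('#' marks an obstacle)."""
    r, c = cell
    out = [cell]
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < len(grid) and 0 <= nc < len(grid[0]) and grid[nr][nc] != "#":
            out.append((nr, nc))
    return out


def space_time_astar(grid: List[str], start: Cell, goal: Cell,
                     vertex_res: Set[Tuple[Cell, int]],
                     edge_res: Set[Tuple[Cell, Cell, int]],
                     max_t: int = 100) -> Optional[List[Cell]]:
    """A* over (cell, time) states that avoids reserved vertices and edge swaps."""
    def h(cell: Cell) -> int:
        # Manhattan distance: consistent for unit-cost moves and waits.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    # The agent may only stop at its goal after the last reservation of that cell.
    last_goal_res = max((t for (c, t) in vertex_res if c == goal), default=-1)
    open_heap = [(h(start), 0, start)]
    parent = {(start, 0): None}  # g equals t, so the first visit to a state is optimal
    while open_heap:
        _, t, cell = heapq.heappop(open_heap)
        if cell == goal and t > last_goal_res:
            path, state = [], (cell, t)
            while state is not None:
                path.append(state[0])
                state = parent[state]
            return path[::-1]
        if t >= max_t:
            continue
        for nxt in neighbors(grid, cell):
            state = (nxt, t + 1)
            if state in parent or state in vertex_res:
                continue  # already generated, or occupied by a higher-priority agent
            if (nxt, cell, t) in edge_res:
                continue  # a higher-priority agent moves nxt -> cell at the same step
            parent[state] = (cell, t)
            heapq.heappush(open_heap, (t + 1 + h(nxt), t + 1, nxt))
    return None


def prioritized_plan(grid: List[str], starts: List[Cell], goals: List[Cell],
                     max_t: int = 100) -> Optional[List[List[Cell]]]:
    """Plan agents in list order; earlier agents have higher priority."""
    vertex_res: Set[Tuple[Cell, int]] = set()
    edge_res: Set[Tuple[Cell, Cell, int]] = set()
    paths: List[List[Cell]] = []
    for start, goal in zip(starts, goals):
        path = space_time_astar(grid, start, goal, vertex_res, edge_res, max_t)
        if path is None:
            return None  # a fixed priority order can fail even when a solution exists
        for t, cell in enumerate(path):
            vertex_res.add((cell, t))
        for t in range(len(path), max_t + 1):
            vertex_res.add((path[-1], t))  # the agent stays parked on its goal
        for t in range(len(path) - 1):
            edge_res.add((path[t], path[t + 1], t))
        paths.append(path)
    return paths


def makespan(paths: List[List[Cell]]) -> int:
    return max(len(p) - 1 for p in paths)


def sum_of_costs(paths: List[List[Cell]]) -> int:
    return sum(len(p) - 1 for p in paths)
```

Consider `grid = ["...", ".#.", "..."]` with two agents swapping the corners of the top row (`starts=[(0, 0), (0, 2)]`, `goals=[(0, 2), (0, 0)]`). The first agent takes the top row and parks on its goal. The second agent must detour around the obstacle, which gives a sum of costs of 8 and a makespan of 6. Planning in a fixed order keeps each search single-agent and fast, at the cost of completeness.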

Multi-Agent Path Finding: GitHub Topics

Multi-agent path finding (MAPF) formalises the problem of computing conflict-free trajectories for multiple agents from given start locations to goal locations on a graph representation of the environment [5]. MAPF has attracted significant research interest across artificial intelligence, operations research, robotics, and control theory.

This paper presents a karma-based negotiation mechanism that enhances fairness and scalability in decentralised multi-agent path finding for warehouse systems. Unlike established decentralised heuristics, the proposed karma mechanism regulates the temporal distribution of replanning effort across agents, improving fairness while maintaining efficiency. The mechanism is evaluated in a lifelong robotic-warehouse multi-agent pickup and delivery scenario with kinematic orientation constraints.

Our optimal, bounded-suboptimal, and suboptimal MAPF algorithms can find solutions for a few hundred, a thousand, and a few thousand agents, respectively, within a minute.
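A small validity check makes the notion of "conflict-free trajectories on a graph" concrete. This sketch assumes the two conflict types most commonly used in MAPF. A vertex conflict means two agents occupy the same vertex at the same timestep. An edge (swap) conflict means two agents traverse the same edge in opposite directions during one timestep. The sketch also assumes that agents wait at their goals after arriving. `position` and `first_conflict` are hypothetical helper names and do not come from any of the repositories or papers above.

```python
from itertools import combinations
from typing import Dict, Hashable, List, Optional, Set, Tuple

# Illustrative sketch: helper names and the conflict model are assumptions.


def position(path: List[Hashable], t: int) -> Hashable:
    """Location at time t; an agent is assumed to stay at its goal after its path ends."""
    return path[min(t, len(path) - 1)]


def first_conflict(graph: Dict[Hashable, Set[Hashable]],
                   paths: List[List[Hashable]]) -> Optional[Tuple]:
    """Return the first problem found, or None if the paths form a conflict-free solution.

    graph is an adjacency dict; at each timestep an agent either waits or follows an edge.
    """
    for i, p in enumerate(paths):
        for t in range(len(p) - 1):
            if p[t + 1] != p[t] and p[t + 1] not in graph[p[t]]:
                return ("invalid_move", i, t)
    horizon = max(len(p) for p in paths)
    for t in range(horizon):
        for i, j in combinations(range(len(paths)), 2):
            a0, b0 = position(paths[i], t), position(paths[j], t)
            if a0 == b0:
                return ("vertex", i, j, a0, t)  # two agents on one vertex at time t
            a1, b1 = position(paths[i], t + 1), position(paths[j], t + 1)
            if a0 == b1 and a1 == b0:
                return ("edge", i, j, (a0, a1), t)  # agents swap along one edge
    return None
```

Take the path graph `{0: {1}, 1: {0, 2}, 2: {1}}`. For the paths `[[0, 1], [1, 0]]`, the function reports an edge conflict at timestep 0. For `[[0, 1], [2]]`, it returns `None`.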

GitHub Anirvan Krishna: Multi-Agent Path Finding

Multi-Agent Path Finding: Jaein Lim

What Changes Are Required to Make It Feasible for 3D Space? (Issue)
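The issue title above does not say which codebase it refers to, so the following is only an illustrative assumption. In a grid-based planner, the most direct change is to the state space. 2D cells become 3D voxels, the 4-connected move set becomes a 6-connected one (plus waiting), and the 2D Manhattan heuristic becomes its 3D counterpart. Vertex and edge conflicts are defined on graph vertices and edges, so they carry over unchanged. `MOVES_3D`, `neighbors_3d`, and `manhattan_3d` are hypothetical names.

```python
from typing import List, Set, Tuple

# Hypothetical 3D helpers; a sketch of the state-space change only.
Voxel = Tuple[int, int, int]

# Wait in place, then the six axis-aligned unit moves.
MOVES_3D = [(0, 0, 0), (1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def neighbors_3d(shape: Tuple[int, int, int], obstacles: Set[Voxel], v: Voxel) -> List[Voxel]:
    """Successor voxels of v inside a box of the given shape that are not obstacles."""
    out = []
    for dx, dy, dz in MOVES_3D:
        n = (v[0] + dx, v[1] + dy, v[2] + dz)
        if all(0 <= n[k] < shape[k] for k in range(3)) and n not in obstacles:
            out.append(n)
    return out


def manhattan_3d(a: Voxel, b: Voxel) -> int:
    """Admissible heuristic for unit-cost 6-connected moves."""
    return sum(abs(x - y) for x, y in zip(a, b))
```

Under these assumptions, swapping these two functions into a space-time A* search in place of their 2D versions yields a 3D planner. The reservation and conflict logic stays the same.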