Github Eaglew Describing A Knowledge Base Code For Describing A

Code for describing a knowledge base. Contribute to eaglew describing a knowledge base development by creating an account on GitHub.

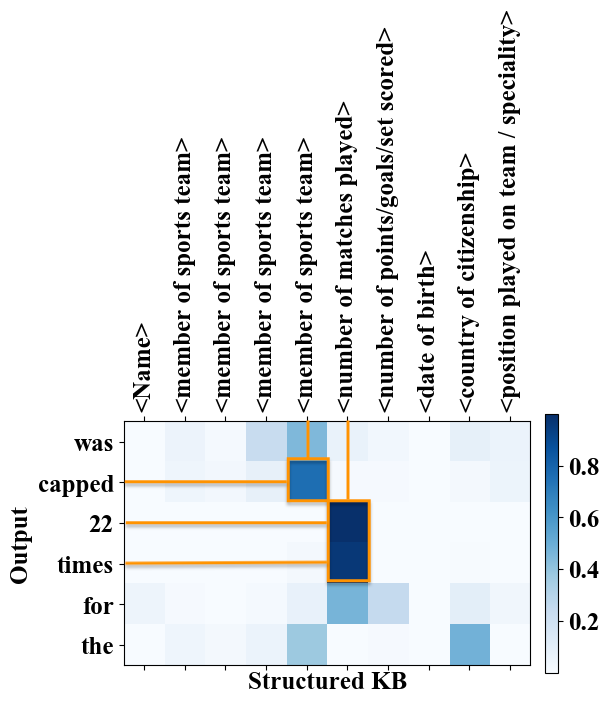

Describing a Knowledge Base: paper and code. We aim to automatically generate natural language descriptions about an input structured knowledge base (KB).

Accelerated Text is a no-code natural language generation platform. It will help you construct document plans which define how your data is converted to textual descriptions varying in wording and structure.

Karpathy's LLM knowledge base: on April 3, 2026, Andrej Karpathy posted something on X that resonated well beyond the usual AI news cycle. He wasn't announcing a new model or a benchmark result. He was describing a change in how he personally uses LLMs, a shift from generating code to generating knowledge structure. He called it the Karpathy LLM knowledge base, a form of AI-powered personal…

Quickstart preprocessing: put the person and animal dataset under the describing a knowledge base folder and unzip it. Randomly split the data into train, dev and test by running split.py under the utils folder.

In this paper, we propose a model, called "bi-directional block self-attention network (Bi-BloSAN)", for RNN/CNN-free sequence encoding. It requires as little memory as RNN but with all the merits.
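The random train/dev/test split mentioned in the quickstart can be illustrated with a minimal sketch. This is not the repository's split.py, whose contents are not shown here. The function name `split_dataset`, the fraction parameters, and the seed are all assumptions made for illustration.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(
    items: Sequence[T],
    dev_frac: float = 0.1,   # hypothetical default, not taken from the repo
    test_frac: float = 0.1,  # hypothetical default, not taken from the repo
    seed: int = 0,
) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle items with a fixed seed and cut them into train, dev and test."""
    if dev_frac < 0 or test_frac < 0 or dev_frac + test_frac >= 1:
        raise ValueError("dev_frac and test_frac must be >= 0 and sum to < 1")

    shuffled = list(items)
    # A private Random instance keeps the split reproducible without
    # touching the global random state.
    random.Random(seed).shuffle(shuffled)

    n_dev = int(len(shuffled) * dev_frac)
    n_test = int(len(shuffled) * test_frac)

    dev = shuffled[:n_dev]
    test = shuffled[n_dev:n_dev + n_test]
    train = shuffled[n_dev + n_test:]
    return train, dev, test


if __name__ == "__main__":
    records = [f"record_{i}" for i in range(8)]
    train, dev, test = split_dataset(records, dev_frac=0.25, test_frac=0.25)
    print(len(train), len(dev), len(test))
```

Fixing the seed means the same records land in the same split on every run, which keeps evaluation numbers comparable across experiments.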
