GitHub Dylanahola Chess Learning Model

GitHub Nikoskalogeropoulos Chess. Contribute to dylanahola chess learning model development by creating an account on GitHub. Chess with deep reinforcement learning: in the results, the chess engine learns faster and starts performing better earlier when the search space is smaller.

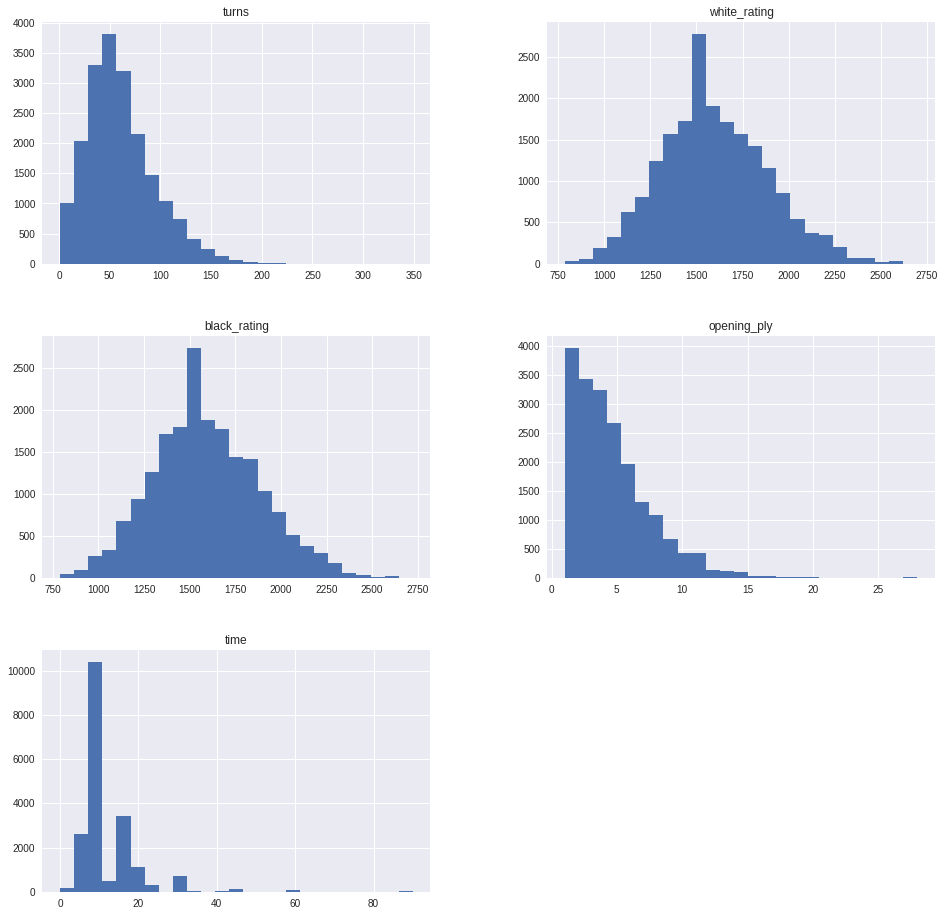

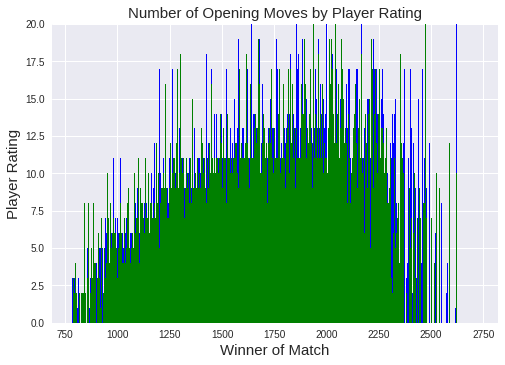

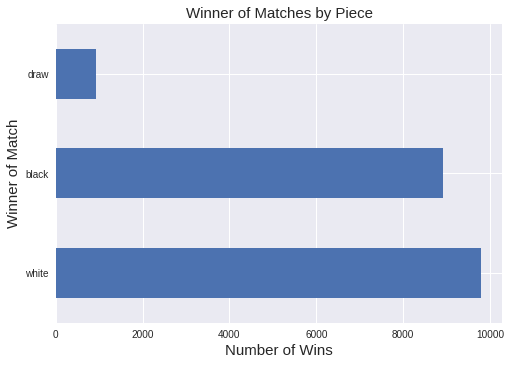

GitHub Gitsuki Deep Learning Chess, a Python chess engine. This study demonstrates the potential of data mining and machine learning techniques for uncovering patterns and insights in chess, contributing to a growing body of research.

I really enjoy chess and decided to build a project where I could combine that interest with my data science skills. I found this chess dataset on Kaggle (kaggle datasnaek chess) and decided to build a machine learning model to see how well it could predict the winner of matches. The author, dylanahola, is a data scientist in Minneapolis, MN, interested in using data science to solve problems.

Welcome to the documentation for the dlchess (Deep Learning for Chess) project. dlchess is a UCI-compatible chess engine and reinforcement learning system. It implements an AlphaZero-style model that combines Monte Carlo tree search (MCTS) with a neural network for policy and value prediction.
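To make the MCTS-plus-network idea more concrete, here is a minimal sketch of the selection and backup steps used in AlphaZero-style search. It is not taken from dlchess: the `Node` class, the `select_child` and `backup` helpers, and the exploration constant `c_puct = 1.5` are all hypothetical names and values chosen for illustration. The prior stands in for the policy output and the backed-up value stands in for the value output of the network.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    """One search-tree node (hypothetical structure, not dlchess's)."""
    prior: float             # policy probability of the move leading here
    visits: int = 0
    value_sum: float = 0.0   # values seen from the view of the player who moved here
    children: dict = field(default_factory=dict)

    def q(self) -> float:
        # Mean value of this node; 0.0 for unvisited nodes.
        return self.value_sum / self.visits if self.visits else 0.0


def select_child(node: Node, c_puct: float = 1.5):
    """Pick the (move, child) pair maximising Q + U (the PUCT rule)."""
    total = sum(child.visits for child in node.children.values())

    def score(item):
        _, child = item
        # Exploration bonus: large for high-prior, rarely visited moves.
        u = c_puct * child.prior * math.sqrt(total + 1) / (1 + child.visits)
        return child.q() + u

    return max(node.children.items(), key=score)


def backup(path, leaf_value: float) -> None:
    """Propagate a value estimate up the path, flipping sign each ply.

    leaf_value is the value for the side to move at the last node in path.
    """
    value = -leaf_value  # the leaf's stats belong to the player who moved into it
    for node in reversed(path):
        node.visits += 1
        node.value_sum += value
        value = -value


if __name__ == "__main__":
    root = Node(prior=1.0)
    root.children = {"e2e4": Node(prior=0.8), "d2d4": Node(prior=0.2)}
    move, child = select_child(root)
    backup([root, child], leaf_value=1.0)  # position is good for the opponent
    print(move, child.q())
```

With no visits yet, the choice is driven by the prior; once a move has been tried and scored badly, its mean value drops and the search shifts toward the alternatives.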

Normal chess engines work with the minimax algorithm: the engine searches for the best move by building a tree of all possible moves to a certain depth and cutting off paths that lead to bad positions (alpha-beta pruning). It evaluates a position based on which pieces are on the board.

The notebook in the dylanahola chess learning model repository opens with the author's comment: "I am a huge chess nerd and I found this cool data set and would like to try and make a model to predict the winner of games based on the data." A preview of the first 10 rows (16 columns in total) shows the `id` and `opening_ply` columns:

| | id | opening_ply |
|---|---|---|
| 0 | tzjhllje | 5 |
| 1 | l1nxvwae | 4 |
| 2 | miicvqhh | 3 |
| 3 | kwkvrqyl | 3 |
| 4 | 9txo1auz | 5 |
| 5 | msodv9wj | 4 |
| 6 | qwu9rasv | 10 |
| 7 | rvn0n3vk | 5 |
| 8 | dwf3djho | 6 |
| 9 | afomwnlg | 4 |

`df.info()` reports a RangeIndex of 20058 entries (0 to 20057), 16 data columns, and a memory usage of 2.3 MB:

| # | Column | Non-null count | Dtype |
|---|---|---|---|
| 0 | id | 20058 | object |
| 1 | rated | 20058 | bool |
| 2 | created_at | 20058 | float64 |
| 3 | last_move_at | 20058 | float64 |
| 4 | turns | 20058 | int64 |
| 5 | victory_status | 20058 | object |
| 6 | winner | 20058 | object |
| 7 | increment_code | 20058 | object |
| 8 | white_id | 20058 | object |
| 9 | white_rating | 20058 | int64 |
| 10 | black_id | 20058 | object |
| 11 | black_rating | 20058 | int64 |
| 12 | moves | 20058 | object |
| 13 | opening_eco | 20058 | object |
| 14 | opening_name | 20058 | object |
| 15 | opening_ply | 20058 | int64 |

The dtypes are bool (1), float64 (2), int64 (4), and object (9). No column has missing values. `df.duplicated().any()` returns `True`, so the data contains duplicate rows, and the next cell begins with `df.drop_duplicates` (the excerpt is cut off at that point).

Chess game is a model based on a deep reinforcement learning approach to mastering chess. You can train your own reinforcement learning agent, then deploy it online and play against it.
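The minimax search with alpha-beta pruning described earlier in this section can be sketched as follows. This is an illustration under stated assumptions, not code from any of the repositories mentioned: the toy `TREE` and `SCORES` tables, the `alphabeta` and `material` helpers, and the conventional piece values (pawn 1, knight 3, bishop 3, rook 5, queen 9) are all hypothetical choices. A real engine would generate children from legal moves and could use something like `material` as its evaluation function.

```python
from math import inf

# Conventional piece values; kings are not counted.
PIECE_VALUES = {"p": 1, "n": 3, "b": 3, "r": 5, "q": 9}


def material(fen_board: str) -> int:
    """Material balance (white minus black) from the board field of a FEN string."""
    score = 0
    for ch in fen_board:
        value = PIECE_VALUES.get(ch.lower(), 0)
        score += value if ch.isupper() else -value
    return score


def alphabeta(state, depth, alpha, beta, maximizing, children, evaluate):
    """Depth-limited minimax that skips branches which cannot change the result."""
    kids = children(state)
    if depth == 0 or not kids:
        return evaluate(state)
    if maximizing:
        best = -inf
        for kid in kids:
            best = max(best, alphabeta(kid, depth - 1, alpha, beta, False,
                                       children, evaluate))
            alpha = max(alpha, best)
            if alpha >= beta:   # the minimizer already has a better option elsewhere
                break
        return best
    best = inf
    for kid in kids:
        best = min(best, alphabeta(kid, depth - 1, alpha, beta, True,
                                   children, evaluate))
        beta = min(beta, best)
        if alpha >= beta:       # the maximizer already has a better option elsewhere
            break
    return best


# Toy game tree: two moves for the maximizer, two replies each for the minimizer.
TREE = {"root": ["a", "b"], "a": ["a1", "a2"], "b": ["b1", "b2"]}
SCORES = {"a1": 3, "a2": 5, "b1": 2, "b2": 9}
visited = []


def toy_children(state):
    return TREE.get(state, [])


def toy_evaluate(state):
    visited.append(state)       # record which leaves were actually evaluated
    return SCORES.get(state, 0)


if __name__ == "__main__":
    result = alphabeta("root", 2, -inf, inf, True, toy_children, toy_evaluate)
    print(result, visited)
```

In the toy tree, once the reply `b1` shows that move `b` is worse for the maximizer than move `a`, the remaining reply `b2` is never evaluated. That saving is what lets an engine search deeper in the same amount of time.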


