Github Dylanadlard Matrix Multiplications

Github Kaanolgu Matrix Multiplications Vitis 2020 1 Acceleration

Contribute to dylanadlard matrix multiplications development by creating an account on GitHub.

The multiplication of entire matrices is reformulated as a series of smaller block-wise multiplications, followed by summation of the intermediate results. This technique facilitates mapping large-scale matrix multiplications onto systolic arrays by decomposing the computation into independent blocks that align with the dimensions of …
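The block-wise reformulation described above can be illustrated with a small sketch. This is a plain-Python illustration under assumptions, not code from any of the listed repositories: the function names `matmul_naive` and `matmul_blocked` and the `block` parameter are hypothetical, and the block size stands in for whatever tile dimensions a hardware target would impose.

```python
def matmul_naive(A, B):
    """Reference triple-loop product of an n x k and a k x m matrix."""
    n, k, m = len(A), len(B), len(B[0])
    C = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            for p in range(k):
                C[i][j] += A[i][p] * B[p][j]
    return C


def matmul_blocked(A, B, block=2):
    """Same product, computed as small block products that are summed.

    `block` is a hypothetical tile size; edge tiles are clipped with min().
    """
    n, k, m = len(A), len(B), len(B[0])
    C = [[0.0] * m for _ in range(n)]
    for i0 in range(0, n, block):          # block row of C
        for j0 in range(0, m, block):      # block column of C
            for p0 in range(0, k, block):  # blocks along the shared dimension
                # Multiply one block of A by one block of B and
                # accumulate the intermediate result into C.
                for i in range(i0, min(i0 + block, n)):
                    for j in range(j0, min(j0 + block, m)):
                        acc = 0.0
                        for p in range(p0, min(p0 + block, k)):
                            acc += A[i][p] * B[p][j]
                        C[i][j] += acc
    return C


if __name__ == "__main__":
    A = [[1, 2, 3], [4, 5, 6]]
    B = [[7, 8], [9, 10], [11, 12]]
    print(matmul_blocked(A, B, block=2))
```

Each `(i0, j0, p0)` triple names one small multiplication; the partial products for a fixed `(i0, j0)` are summed over `p0`, which is the "summation of intermediate results" step.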

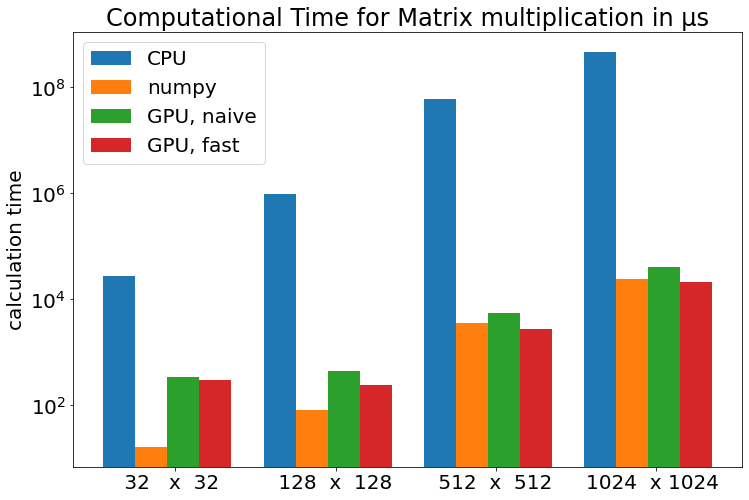

Github Dylanadlard Matrix Multiplications

Improvement with array slicing: how fast is matrix multiplication with array slicing in two nested loops compared to an element-wise product in three nested loops?

Dylanadlard has 8 repositories available. Follow their code on GitHub. GitHub is where people build software. More than 100 million people use GitHub to discover, fork, and contribute to over 420 million projects.
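The comparison between two nested loops with slicing and three nested loops with element-wise products can be tried with a sketch like the one below. It uses plain Python lists and the standard `timeit` module rather than the repository's own code, which is not shown here; the function names and the matrix size are assumptions chosen for illustration, and the timings will depend on the machine.

```python
import random
import timeit


def matmul_three_loops(A, B):
    """Element-wise product accumulated in three nested loops."""
    n, k, m = len(A), len(B), len(B[0])
    C = [[0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            for p in range(k):
                C[i][j] += A[i][p] * B[p][j]
    return C


def matmul_two_loops_sliced(A, B):
    """Two nested loops; the inner product works on a whole row and column."""
    n, m = len(A), len(B[0])
    # Extract each column of B once so it can be used like a slice.
    cols = [[row[j] for row in B] for j in range(m)]
    C = [[0] * m for _ in range(n)]
    for i in range(n):
        row = A[i][:]  # slice of row i
        for j in range(m):
            C[i][j] = sum(a * b for a, b in zip(row, cols[j]))
    return C


if __name__ == "__main__":
    size = 60  # hypothetical size, kept small so the script finishes quickly
    A = [[random.random() for _ in range(size)] for _ in range(size)]
    B = [[random.random() for _ in range(size)] for _ in range(size)]
    t3 = timeit.timeit(lambda: matmul_three_loops(A, B), number=3)
    t2 = timeit.timeit(lambda: matmul_two_loops_sliced(A, B), number=3)
    print(f"three loops: {t3:.4f} s, two loops with slices: {t2:.4f} s")
```

Both functions compute the same product; only the form of the innermost step differs, so any timing gap reflects loop and indexing overhead rather than a change in the arithmetic.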

Github Adinaamzarescu Optimization Of Matrix Multiplications C

Library for specialized dense and sparse matrix operations, and deep learning primitives.

Matrix multiplication optimization for neural networks: a comprehensive C implementation demonstrating progressive optimizations of matrix multiplication, from naive to highly optimized approaches, with a focus on neural network applications.

Running big LLMs is expensive because storing the weights and doing the multiplications dominate time and memory. Quantization shrinks weights to 1 or 2 bits, but when each weight is just one number, too many different weights collapse into the same tiny set of values, and accuracy drops fast.

These are libraries providing fast implementations of, e.g., matrix multiplications or dot products. They are sometimes tailored to one specific CPU (or family of CPUs), like Intel's MKL or Apple's Accelerate.
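The collapse of distinct weights under very low-bit quantization, mentioned above, can be seen in a toy sketch. The scheme here (keep the sign, replace the magnitude with the mean absolute value) is a generic 1-bit example chosen for illustration and is an assumption, not the method of any project listed on this page; `quantize_1bit` and the sample weights are hypothetical.

```python
def quantize_1bit(weights):
    """Map every weight to +scale or -scale (1 bit of information each).

    Assumption: scale is the mean absolute value of the weights.
    """
    scale = sum(abs(w) for w in weights) / len(weights)
    return [scale if w >= 0 else -scale for w in weights]


def mean_abs_error(original, quantized):
    """Average absolute difference between original and quantized weights."""
    return sum(abs(o - q) for o, q in zip(original, quantized)) / len(original)


weights = [0.9, 0.1, 0.05, -0.2, -0.8, 0.4]  # hypothetical example weights
quantized = quantize_1bit(weights)

if __name__ == "__main__":
    print("distinct values before:", len(set(weights)))
    print("distinct values after: ", len(set(quantized)))
    print("mean absolute error:   ", mean_abs_error(weights, quantized))
```

Six different weights end up as only two values, so weights as different as 0.9 and 0.05 become indistinguishable after quantization.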

Github Kyledukart Matrixmultiplication

Github Whehdwns Matrix Multiplication Computer Architecture Project

Github Muhammad Dah Matrix Multiplication
