GitHub: ardhendubehera CAP

We evaluate our approach using six state-of-the-art (SotA) backbone networks and eight benchmark datasets. Our method significantly outperforms the SotA approaches on six datasets and is very competitive with the remaining two. Our context-aware attentional pooling (CAP) is designed to encode spatial arrangements and visual appearance of the parts effectively.

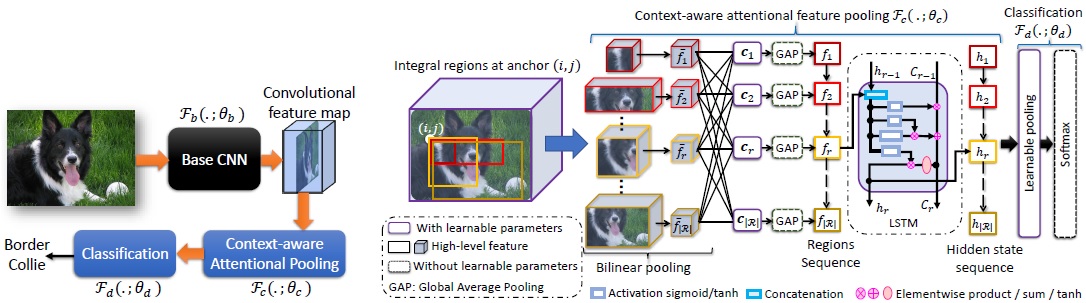

Our work: to describe objects in a conventional way as in CNNs as well as maintaining their visual appearance, we design a context-aware attentional pooling (CAP) to encode spatial arrangements and visual appearance of the parts effectively. Context-aware attentional pooling (CAP) for fine-grained visual classification: in this document, we have included the remaining quantitative and qualitative results, which we could not include in the main document. Remaining results of Table 2: the performance comparison (accuracy in %) using the remaining two datasets (Stanford Dogs and ...

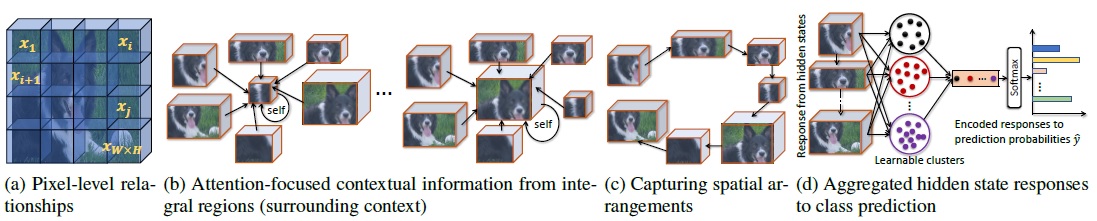

Ardhendu Behera, Zachary Wharton, Pradeep Hewage, and Asish Bera. The object scene is not straightforward. To address this, we propose a novel context-aware attentional pooling (CAP) that effectively captures subtle changes via sub-pixel gradients, and learns to attend informative integral regions and their importance in discriminating different subcategories without requiring the bounding box. Deep learning, computer vision and AI. ardhendubehera has 13 repositories available. Follow their code on GitHub. Main idea: context-aware attentional pooling (CAP) considers the intrinsic consistency between the informativeness of integral regions and their spatial structures to capture the semantic correlation among them.
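To make the idea of attending to informative regions more concrete, here is a minimal sketch of generic softmax attention pooling over region feature vectors. It is not the authors' CAP implementation: the function names `softmax` and `attention_pool`, the `query` vector standing in for context, and the toy feature values are all assumptions chosen for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(region_features, query):
    """Pool region features with scaled dot-product attention.

    region_features: list of equal-length feature vectors, one per region
                     (hypothetical stand-ins for integral-region features).
    query: a context vector of the same length (an assumption, not from the source).
    Returns (weights, pooled), where weights sum to 1.
    """
    d = len(query)
    # Score each region by its scaled similarity to the context query.
    scores = [
        sum(f_k * q_k for f_k, q_k in zip(f, query)) / math.sqrt(d)
        for f in region_features
    ]
    weights = softmax(scores)
    # The pooled vector is the attention-weighted sum of region features.
    pooled = [
        sum(w * f[k] for w, f in zip(weights, region_features))
        for k in range(d)
    ]
    return weights, pooled


# Toy example: three regions with 2-D features.
regions = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [1.0, 0.0]
weights, pooled = attention_pool(regions, query)
print(weights, pooled)
```

In this toy setup, regions whose features align with the query receive larger weights, so the pooled vector leans toward them. The real method described above also accounts for the spatial structure of regions, which this sketch leaves out.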

