GitHub: Adversarial Attacks on Deep Learning LLMs

2410.18215: Advancing NLP Security by Leveraging LLMs as Adversarial

One project presents what it describes as the first large-scale, unified empirical study of adversarial attacks and defenses across key computer vision and language modeling tasks, including image classification, segmentation, object detection, NLP, LLMs, and automatic speech recognition.

Another resource is a comprehensive database of large language model (LLM) attack vectors and security vulnerabilities, including the latest 2025 research on agentic exploits, RAG attacks, and advanced ML security threats.

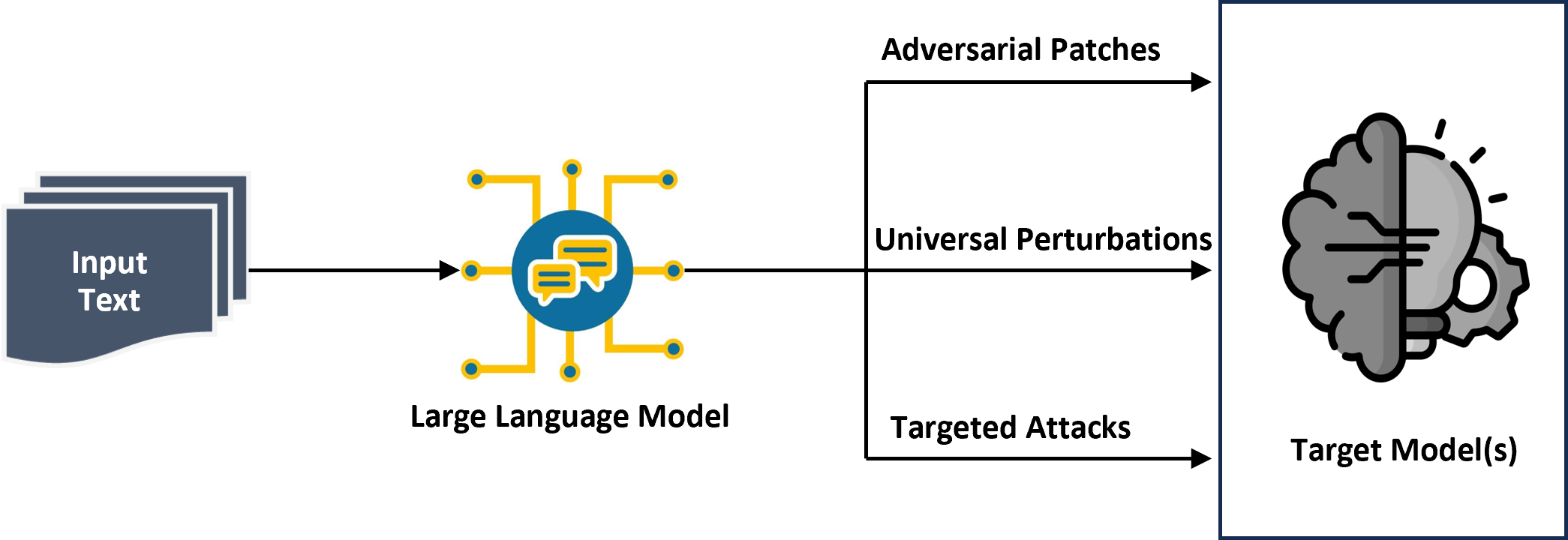

Best LLM Security Tools Open Source Frameworks in 2026

One work-in-progress repository searches for adversarial strings of tokens that influence large language models (LLMs) in a variety of ways, as part of investigating the generalization and robustness of LLM activation probes.

Adversarial attacks are techniques that craft intentionally perturbed inputs to mislead machine learning models into producing incorrect outputs. They are central to research in AI robustness, security, and trustworthiness.

One tutorial offers a comprehensive overview of vulnerabilities in LLMs that are exposed by adversarial attacks, an emerging interdisciplinary field in trustworthy ML that combines perspectives from natural language processing (NLP) and cybersecurity.
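To make the idea of an intentionally perturbed input concrete, here is a minimal sketch in the spirit of the fast gradient sign method, applied to a toy linear classifier. The weights, bias, input, and step size `eps` are all made-up values for illustration, and the helper names (`score`, `predict`, `perturb`) are hypothetical; none of this comes from the repositories described above.

```python
# Toy linear classifier: predicts class 1 when w.x + b > 0, else class 0.
# Weights, bias, and input are invented for illustration only.

def score(w, b, x):
    """Raw decision score of the linear model."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def predict(w, b, x):
    """Class label derived from the sign of the score."""
    return 1 if score(w, b, x) > 0 else 0

def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)

def perturb(w, x, label, eps):
    """Move each coordinate by at most eps, pushing the score away from `label`.

    For a linear model the gradient of the score with respect to x is w,
    so the sign of the gradient is simply sign(w).
    """
    direction = -1 if label == 1 else 1
    return [xi + direction * eps * sign(wi) for xi, wi in zip(x, w)]

w = [2.0, -1.0, 0.5]
b = -0.25
x = [0.5, 0.25, 0.5]

original_label = predict(w, b, x)
x_adv = perturb(w, x, original_label, eps=0.25)
adversarial_label = predict(w, b, x_adv)
```

In this toy setting, a change of at most 0.25 per coordinate is enough to flip the predicted label. Real models are nonlinear, so the gradient has to be computed by the framework rather than read off the weights.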

Re-Evaluating Deep Learning Attacks and Defenses in Cybersecurity Systems

Adversarial attacks are like jailbreaks, except that they are found using numerical optimization. In terms of complexity, adversarial attacks > jailbreaks > prompt injection.

One repository contains tools and data for conducting denial-of-service (DoS) attacks on LLMs by exploiting their safety rules. The objective is to design adversarial prompts that intentionally trigger safety mechanisms, causing models to deny service by rejecting user inputs as unsafe.

However, the safety measures of aligned LLMs are themselves vulnerable to adversarial attacks, which add maliciously designed token sequences to a prompt to make an LLM produce harmful content despite being well aligned.

More broadly, adversarial attacks are inputs that trigger the model to output something undesired. Much of the early literature focused on classification tasks, while recent work increasingly investigates the outputs of generative models.
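The description of adversarial attacks as an optimization over token sequences appended to a prompt can be sketched with a greedy coordinate search. This is only an illustration under strong assumptions: `toy_loss` is a hypothetical stand-in for a model's loss, and `VOCAB` and `WEIGHTS` are invented placeholder tokens and values. A real attack would score candidates with the target model's loss or logits, and published methods typically use gradients to shortlist candidate tokens.

```python
# Placeholder vocabulary and per-token weights, invented for illustration.
VOCAB = ["alpha", "beta", "gamma", "delta"]
WEIGHTS = {"alpha": 0.5, "beta": -1.0, "gamma": 0.25, "delta": -0.5}

def toy_loss(prompt, suffix):
    """Hypothetical attacker objective; lower means a 'better' suffix.

    A real attack would replace this with a quantity computed from the model.
    """
    base = 0.5 * len(prompt)
    return base + sum(WEIGHTS[tok] * (i + 1) for i, tok in enumerate(suffix))

def greedy_suffix_search(prompt, length, sweeps=2):
    """Greedy coordinate search: change one suffix position at a time."""
    suffix = [VOCAB[0]] * length
    best = toy_loss(prompt, suffix)
    for _ in range(sweeps):
        for pos in range(length):
            for tok in VOCAB:
                candidate = suffix[:pos] + [tok] + suffix[pos + 1:]
                loss = toy_loss(prompt, candidate)
                if loss < best:
                    # Keep the substitution only if it lowers the loss.
                    suffix, best = candidate, loss
    return suffix, best

prompt = ["tell", "me"]
initial_loss = toy_loss(prompt, [VOCAB[0]] * 3)
suffix, final_loss = greedy_suffix_search(prompt, length=3)
```

Because the toy objective is separable across positions, the search converges after a single sweep. With a real model the positions interact, which is why practical attacks run many iterations and evaluate batches of candidates.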
