GitHub: achoda3 Hadoop MapReduce

Contribute to achoda3 hadoop map reduce development by creating an account on GitHub. MapReduce is the processing engine of Hadoop: while HDFS is responsible for storing massive amounts of data, MapReduce handles the actual computation and analysis.
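
To make that division of labour concrete, here is a minimal in-memory sketch of the classic word-count job in Python, with the three phases MapReduce runs (map, shuffle/sort, reduce) written as plain functions. It illustrates the programming model only and is not Hadoop's API: the names `map_phase`, `shuffle_phase`, `reduce_phase` and `word_count` are hypothetical, and a real job would implement Hadoop's `Mapper` and `Reducer` classes in Java and read its input from HDFS.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Mapper: emit (word, 1) for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle/sort: group all intermediate values by key, keys in sorted order."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reducer: sum the counts collected for one word."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map -> shuffle -> reduce over an iterable of text lines."""
    intermediate = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(intermediate)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    docs = ["HDFS stores data", "MapReduce processes data"]
    print(word_count(docs))
    # → {'data': 2, 'hdfs': 1, 'mapreduce': 1, 'processes': 1, 'stores': 1}
```

Keeping the reducer a pure function of one key and its list of values is what allows a framework to run many reducers independently, each on its own partition of keys.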

Hadoop MapReduce is a software framework for easily writing applications that process vast amounts of data (multi-terabyte data sets) in parallel on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner.
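
The two properties in that definition, parallelism and fault tolerance, can be sketched as a toy Python simulation (again, not Hadoop code). The input is cut into contiguous splits, each split is counted by its own map task on a thread pool, a failed task attempt is simply re-run, loosely analogous to Hadoop rescheduling a failed task attempt, and the partial results are then merged. The names `map_split`, `run_with_retries` and `parallel_word_count` and the `flaky_splits` fault-injection parameter are hypothetical; the default of 4 attempts is chosen to match Hadoop's `mapreduce.map.maxattempts` default.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Collection, List


def map_split(split: List[str]) -> Counter:
    """Map task: count the words in one input split (a chunk of lines)."""
    counts: Counter = Counter()
    for line in split:
        counts.update(line.lower().split())
    return counts


def run_with_retries(task: Callable[[], Counter], max_attempts: int = 4) -> Counter:
    """Re-run a failed task attempt until it succeeds or max_attempts is reached."""
    last_error: Exception = RuntimeError("task was never attempted")
    for _ in range(max_attempts):
        try:
            return task()
        except Exception as err:
            last_error = err
    raise last_error


def parallel_word_count(lines: List[str], num_splits: int = 3,
                        flaky_splits: Collection[int] = ()) -> Counter:
    # Cut the input into contiguous splits of roughly equal size (ceiling division).
    size = max(1, -(-len(lines) // num_splits))
    splits = [lines[i:i + size] for i in range(0, len(lines), size)]
    failed_once = set()

    def make_task(idx: int) -> Callable[[], Counter]:
        def task() -> Counter:
            # Hypothetical fault injection: the first attempt on a "flaky" split fails.
            if idx in flaky_splits and idx not in failed_once:
                failed_once.add(idx)
                raise IOError(f"simulated node failure on split {idx}")
            return map_split(splits[idx])
        return task

    # Map phase: one task per split, run concurrently on a thread pool.
    with ThreadPoolExecutor(max_workers=max(1, len(splits))) as pool:
        partials = list(pool.map(lambda i: run_with_retries(make_task(i)),
                                 range(len(splits))))

    # Reduce phase: merge the per-split partial counts into a single result.
    total: Counter = Counter()
    for partial in partials:
        total.update(partial)
    return total
```

For example, `parallel_word_count(["a b", "b c", "c c", "a"], num_splits=2, flaky_splits={0})` still yields a=2, b=2, c=3: the first attempt on split 0 fails and the retry succeeds, so the failure is invisible in the final result.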

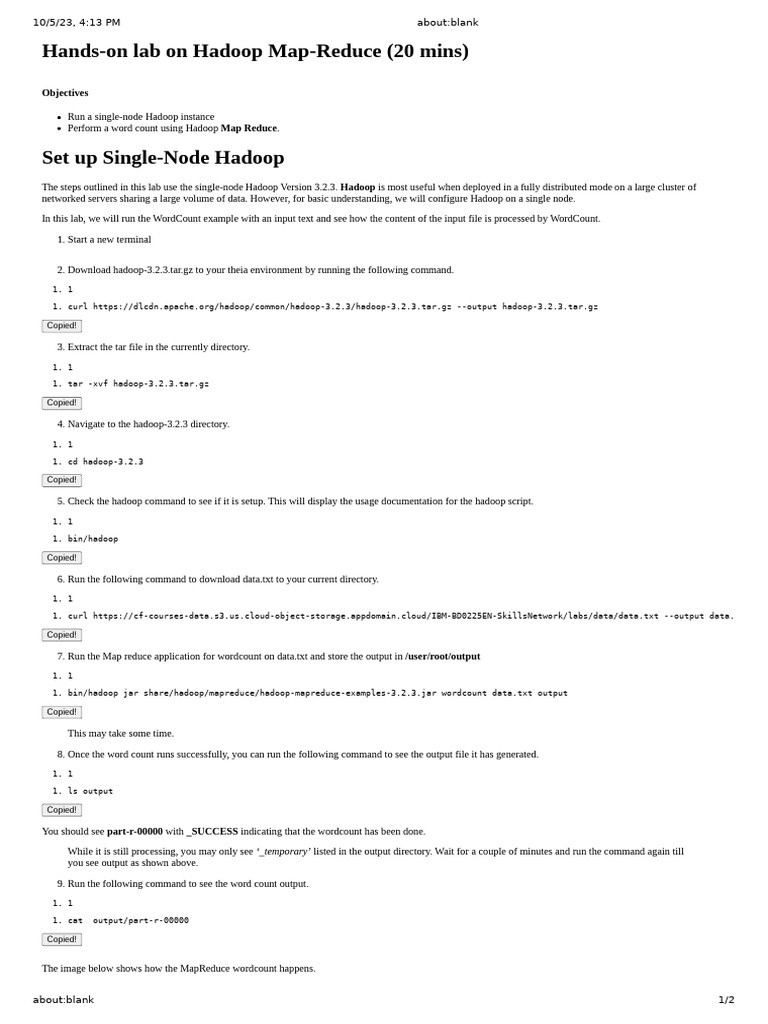

This repository covers how to install Hadoop on Google Colab and how to run MapReduce jobs in Hadoop. Contribute to garage education hadoop map reduce development by creating an account on GitHub.

Apache Hadoop is an open-source implementation of a distributed MapReduce system. A Hadoop cluster consists of a name node and a number of data nodes. The name node holds the distributed file system metadata and layout, while execution of jobs on the cluster is organized by a separate master service: the JobTracker in Hadoop 1, or the YARN ResourceManager in Hadoop 2 and later.
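
A toy model can show what "metadata and layout" means in practice: the name node does not store file contents, only which blocks make up each file and which data nodes hold each block. The Python sketch below assumes HDFS's documented defaults of a 128 MB block size (`dfs.blocksize`) and a replication factor of 3 (`dfs.replication`); the class `ToyNameNode` and its methods `add_file` and `locate` are hypothetical, and the round-robin placement is a simplification of HDFS's rack-aware placement policy.

```python
from dataclasses import dataclass, field
from typing import Dict, List

BLOCK_SIZE = 128 * 1024 * 1024  # HDFS default block size in Hadoop 2+ (dfs.blocksize)
REPLICATION = 3                  # HDFS default replication factor (dfs.replication)


@dataclass
class ToyNameNode:
    """In-memory stand-in for the name node's namespace: file -> blocks -> data nodes."""
    data_nodes: List[str]
    block_size: int = BLOCK_SIZE
    replication: int = REPLICATION
    files: Dict[str, List[int]] = field(default_factory=dict)
    block_locations: Dict[int, List[str]] = field(default_factory=dict)
    _next_block_id: int = 0
    _next_node: int = 0

    def add_file(self, path: str, size_bytes: int) -> List[int]:
        """Split a file into fixed-size blocks and assign each block to distinct data nodes."""
        if not self.data_nodes:
            raise ValueError("at least one data node is required")
        num_blocks = -(-size_bytes // self.block_size)  # ceiling division; empty file -> 0 blocks
        copies = min(self.replication, len(self.data_nodes))
        block_ids: List[int] = []
        for _ in range(num_blocks):
            block_id = self._next_block_id
            self._next_block_id += 1
            # Simplified round-robin placement; real HDFS uses a rack-aware policy.
            n = len(self.data_nodes)
            self.block_locations[block_id] = [
                self.data_nodes[(self._next_node + k) % n] for k in range(copies)
            ]
            self._next_node = (self._next_node + 1) % n
            block_ids.append(block_id)
        self.files[path] = block_ids
        return block_ids

    def locate(self, path: str) -> Dict[int, List[str]]:
        """Answer a client lookup: which data nodes hold each block of `path`."""
        return {b: self.block_locations[b] for b in self.files[path]}
```

For a hypothetical 300 MB file on four data nodes `dn1` to `dn4`, `add_file` creates three blocks (ceil(300 / 128) = 3), each stored on three different nodes. A MapReduce scheduler can use exactly this kind of lookup to run map tasks on nodes that already hold the data.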
