Generative AI Storage: Embeddings

Learn how language models represent and store the meaning of words with lists of numbers called embeddings. This video is part of the Exploring Generative A… This will help you get started with Google Generative AI embedding models using LangChain. For detailed documentation on `GoogleGenerativeAIEmbeddings` features and configuration options, refer to the API reference.
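
To make "lists of numbers" concrete, here is a minimal Python sketch. The three-word vocabulary and its 4-dimensional vectors are hypothetical values chosen by hand for illustration, not output from any real model. Cosine similarity is used as one common way to measure how close two embeddings are.

```python
import math

# Hypothetical 4-dimensional embeddings, hand-picked for illustration only.
# Real embedding models produce vectors with hundreds or thousands of dimensions.
TOY_EMBEDDINGS: dict[str, list[float]] = {
    "cat":    [0.90, 0.80, 0.10, 0.00],
    "kitten": [0.85, 0.75, 0.20, 0.05],
    "car":    [0.10, 0.00, 0.90, 0.80],
}

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two non-zero vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norms == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / norms

if __name__ == "__main__":
    cat = TOY_EMBEDDINGS["cat"]
    for word, vector in TOY_EMBEDDINGS.items():
        print(f"cat vs {word}: {cosine_similarity(cat, vector):.3f}")
```

With these toy values, "cat" scores about 0.99 against "kitten" and about 0.12 against "car": related meanings end up pointing in similar directions, which is the property embedding models aim for.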

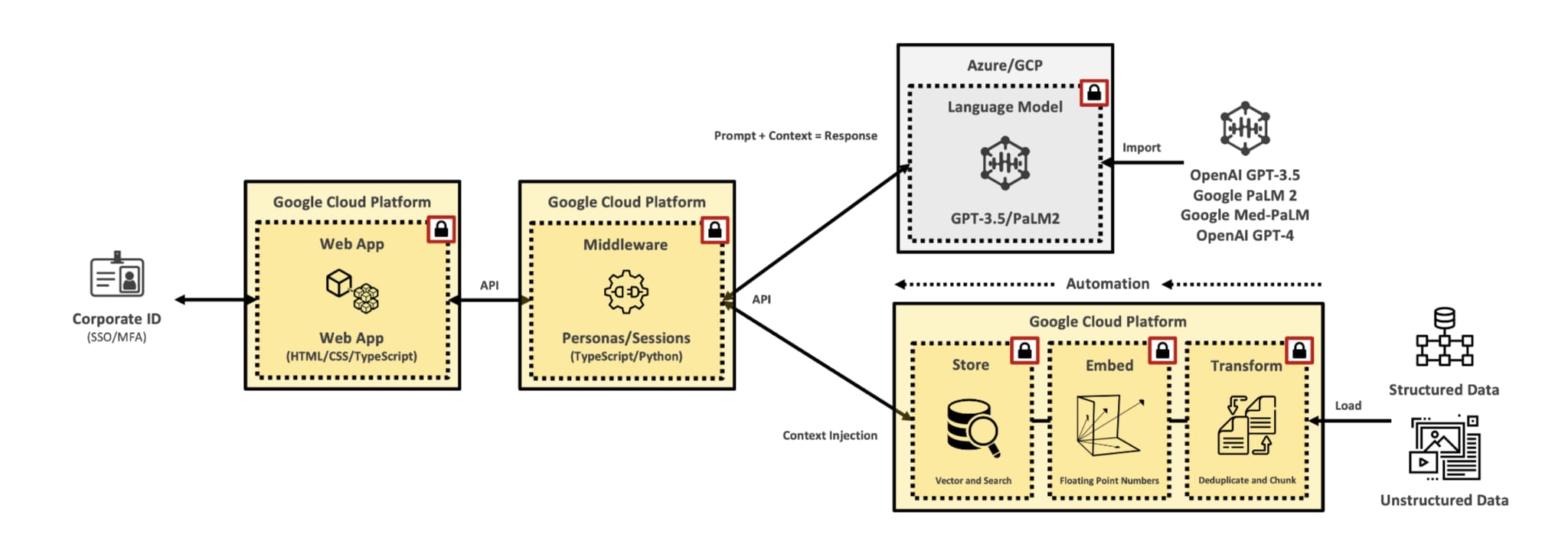

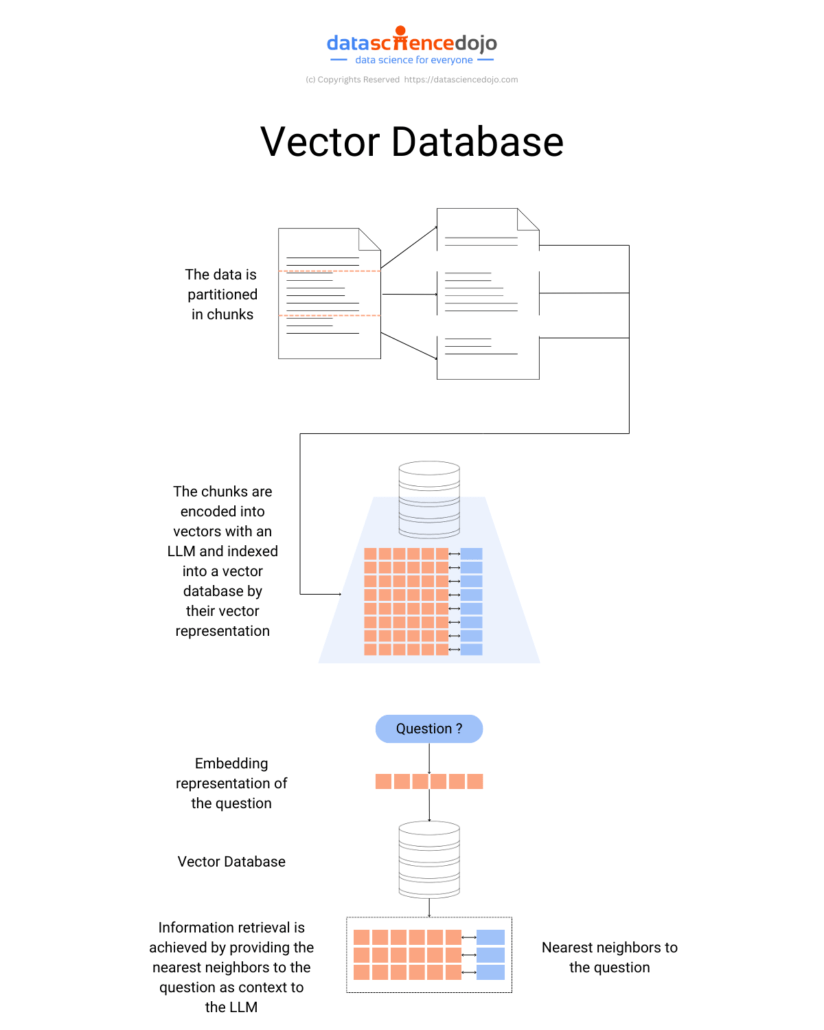

Generative AI Embeddings

Designed for developers, data enthusiasts, and AI explorers, this post builds on a technical overview of generative AI and sets the stage for practical applications, such as your first creative API project.

Embeddings serve as the bridge between raw data and machine understanding in generative AI. By translating diverse data types into meaningful numerical representations, embeddings empower…

The Gemini API offers embedding models that generate embeddings for text, images, video, and other content. The resulting embeddings can then be used for tasks such as semantic search, classification, and clustering, providing more accurate, context-aware results than keyword-based approaches. The document explores the role of vector stores in enabling generative AI systems to manage and retrieve embeddings from unstructured data such as text, images, and audio.
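
Semantic search over a vector store can be sketched with a brute-force, in-memory store. This is an illustrative sketch, not the Gemini API or any particular vector database: the `InMemoryVectorStore` class, its `add`/`search` methods, the document IDs, and the 3-dimensional vectors are all hypothetical. In a real pipeline the vectors would come from an embedding model (for example, LangChain's `GoogleGenerativeAIEmbeddings`, whose `embed_documents` and `embed_query` methods return lists of floats), and production stores typically replace the linear scan with an approximate nearest-neighbor index.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norms == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / norms

class InMemoryVectorStore:
    """Hypothetical minimal vector store: a list of (id, vector, text) rows
    searched by brute-force cosine similarity."""

    def __init__(self, dim: int) -> None:
        self.dim = dim
        self._rows: list[tuple[str, list[float], str]] = []

    def _check_dim(self, vector: list[float]) -> None:
        if len(vector) != self.dim:
            raise ValueError(f"expected {self.dim} dimensions, got {len(vector)}")

    def add(self, doc_id: str, vector: list[float], text: str) -> None:
        """Store a document's embedding alongside its original text."""
        self._check_dim(vector)
        self._rows.append((doc_id, vector, text))

    def search(self, query: list[float], k: int = 2) -> list[tuple[str, float, str]]:
        """Return the k most similar rows as (id, score, text), best first."""
        self._check_dim(query)
        if k < 1:
            raise ValueError("k must be at least 1")
        scored = [(doc_id, cosine_similarity(query, vec), text)
                  for doc_id, vec, text in self._rows]
        scored.sort(key=lambda row: row[1], reverse=True)
        return scored[:k]

if __name__ == "__main__":
    store = InMemoryVectorStore(dim=3)
    store.add("doc-pets", [0.9, 0.1, 0.0], "How to care for a new kitten")
    store.add("doc-cars", [0.0, 0.2, 0.9], "Changing the oil in your car")
    store.add("doc-dogs", [0.8, 0.3, 0.1], "Training a puppy to sit")
    # Hypothetical embedding of the query "looking after pets".
    query = [0.85, 0.2, 0.05]
    for doc_id, score, text in store.search(query, k=2):
        print(f"{doc_id} ({score:.3f}): {text}")
```

Here the query vector stands in for an embedding of "looking after pets", so the two pet-related documents outrank the car document even though neither shares a keyword with that phrase. That is the advantage over keyword matching described above.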

Revolutionizing Generative AI with AI Embeddings

Embeddings are vector representations of data used in generative AI to convert complex information into a format that machines can process. These vectors capture semantic relationships within the data, allowing AI models to understand context and generate relevant outputs.

Using a discriminative feature extractor (FE) to identify shifts between data sampled from different generative models, we find evidence of ‘generative DNA’ (gDNA) within the collected samples. This signature makes it easy to distinguish real data from synthetic data, and even to tell apart synthetic data created by different generative models.

This slide deck and article discuss creating, storing, and using embeddings for search, context retrieval, and various applications beyond RAG and LLMs. The storage aspect of language models involves saving the meanings of words as numerical representations called embeddings, which encode the strength of associations between words. This numerical approach allows chatbots to approximate meanings and generate new text.
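
Telling data sources apart by their feature vectors can be illustrated with a deliberately simple stand-in. This is not the method of the gDNA work: it swaps in a nearest-centroid classifier, and the 2-dimensional "features" for the hypothetical `real`, `model_a`, and `model_b` sources are made-up numbers standing in for a feature extractor's output. The same pattern also shows how embeddings support classification.

```python
import math

# Hypothetical 2-D feature vectors standing in for a feature extractor's output.
SAMPLES: dict[str, list[list[float]]] = {
    "real":    [[0.10, 0.20], [0.20, 0.10], [0.15, 0.15]],
    "model_a": [[0.90, 0.80], [0.80, 0.90], [0.85, 0.85]],
    "model_b": [[0.10, 0.90], [0.20, 0.80], [0.15, 0.85]],
}

def centroid(vectors: list[list[float]]) -> list[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    if not vectors:
        raise ValueError("need at least one vector")
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def euclidean(a: list[float], b: list[float]) -> float:
    """Straight-line distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def fit_centroids(samples: dict[str, list[list[float]]]) -> dict[str, list[float]]:
    """'Training' is just averaging each source's feature vectors."""
    return {label: centroid(vectors) for label, vectors in samples.items()}

def predict(centroids: dict[str, list[float]], features: list[float]) -> str:
    """Attribute a sample to the source whose centroid is nearest."""
    return min(centroids, key=lambda label: euclidean(centroids[label], features))

if __name__ == "__main__":
    centroids = fit_centroids(SAMPLES)
    for sample in ([0.80, 0.75], [0.20, 0.90], [0.10, 0.10]):
        print(sample, "->", predict(centroids, sample))
```

Running it attributes the three probe samples to `model_a`, `model_b`, and `real` respectively. Real detectors learn far richer features; the point is only that once sources leave distinct signatures in feature space, attribution reduces to measuring distances.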
