GameplayQA: Testing MLLM Reasoning in 3D Games

We introduce GameplayQA, a framework for evaluating agentic-centric perception and reasoning through video understanding. Specifically, we densely annotate multiplayer 3D gameplay videos at 1.22 labels/second, with time-synced, concurrent captions of states, actions, and events structured around a triadic system of self, other agents, and the ...

We present a novel post-training paradigm, Visual Game Learning (ViGaL), in which MLLMs develop out-of-domain generalization of multimodal reasoning through playing arcade-like games.
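
The GameplayQA annotation scheme described above (time-synced, concurrent captions of states, actions, and events, reported at 1.22 labels per second) can be pictured with a small sketch. This is not the benchmark's actual schema: the `Label` class, its field names, the helper functions, and the sample captions are all hypothetical and exist only to show how overlapping labels and a labels-per-second density might be represented. Only two of the three entity categories are used, because the third is cut off in the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Label:
    """One time-synced caption (hypothetical schema, not the dataset's)."""
    start_s: float   # start time in seconds
    end_s: float     # end time in seconds (exclusive)
    entity: str      # "self" or "other_agent"; third category is truncated in the source
    kind: str        # "state", "action", or "event"
    caption: str


def label_density(labels, video_duration_s):
    """Number of labels divided by video length in seconds."""
    if video_duration_s <= 0:
        raise ValueError("video_duration_s must be positive")
    return len(labels) / video_duration_s


def concurrent_at(labels, t):
    """All labels whose time span covers the timestamp t."""
    return [lab for lab in labels if lab.start_s <= t < lab.end_s]


# Made-up sample annotations for a 4-second clip.
labels = [
    Label(0.0, 2.0, "self", "state", "health is low"),
    Label(0.5, 1.5, "self", "action", "reloading"),
    Label(1.0, 3.0, "other_agent", "action", "teammate jumps"),
    Label(2.5, 3.5, "other_agent", "state", "opponent is crouched"),
    Label(3.0, 4.0, "other_agent", "event", "opponent is eliminated"),
]

density = label_density(labels, 4.0)   # 5 labels over 4 seconds
overlap = concurrent_at(labels, 1.0)   # labels active at t = 1.0 s
print(density, [lab.caption for lab in overlap])
```

Keeping each label as an interval rather than a single timestamp is what lets several captions be active at once, which is the "concurrent" property the description emphasizes.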

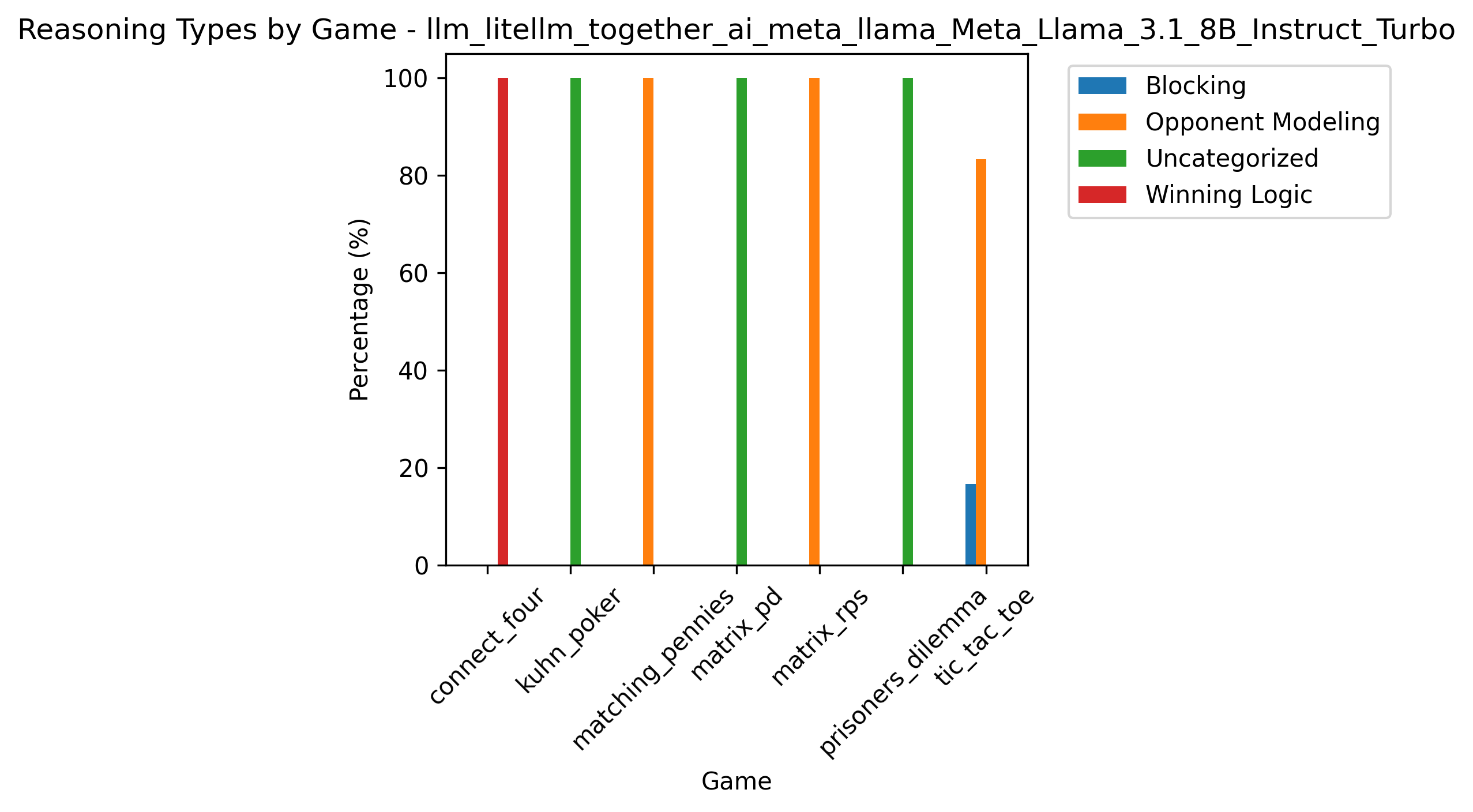

... interact with humans according to the game rules. Since these games are designed to require strong reasoning skills, the game performance of LLMs reflects their reasoning capabilities. We first explain the games and how they are associated with LLM reasoning capabilities, follow ...

This repo is a live list of papers on game playing and large multimodality models: "A Survey on Game Playing Agents and Large Models: Methods, Applications, and Challenges".

Motivated by literature suggesting that gameplay promotes transferable reasoning skills, we propose a novel post-training method, Visual Game Learning (ViGaL), where MLLMs develop generalizable reasoning skills through playing arcade-like games.

Another benchmark is dynamic: models play live computer games against human opponents to test deductive and inductive reasoning. The benchmark comprises three games, collects over 2,000 gameplay sessions, and analyses the resulting data to measure fine-grained reasoning capabilities.

Researchers from University College London's DARK Lab used NVIDIA NIM microservices to benchmark the DeepSeek-R1 model with their BALROG game-based benchmark suite, evaluating its agentic capabilities on challenging interactive tasks.

A training-free test-time reasoning framework transforms VLMs into active viewpoint reasoners through coarse-to-fine 3D exploration (11.56% on OpenEQA). Task: spatial reasoning.

Here is a curated list of papers about 3D-related tasks empowered by large language models (LLMs). It contains various tasks including 3D understanding, reasoning, generation, and embodied agents.

