Game Changer ControlNet: Control Any Stable Diffusion Model

ControlNet is a neural network that controls image generation in Stable Diffusion by adding extra conditions. Details can be found in the paper "Adding Conditional Control to Text-to-Image Diffusion Models" by Lvmin Zhang and coworkers. In this video I show how to install the AUTOMATIC1111 Web UI and the ControlNet extension from scratch. I also show how to make QR codes with ControlNet and how to use its inpainting and outpainting, which are very similar to Photoshop Generative Fill and Midjourney Zoom Out.
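To make "adding extra conditions" more concrete, here is a toy sketch of the connection pattern described in the paper: a locked block keeps its original behavior, while a trainable copy receives the condition and feeds back through zero-initialized layers. Everything here is a simplification for illustration. The names `frozen_block`, `trainable_copy`, and `controlled_block` are hypothetical, and the scalar weights `z1` and `z2` stand in for the paper's zero convolutions. It is not the actual ControlNet implementation.

```python
from typing import List

Vector = List[float]


def frozen_block(x: Vector) -> Vector:
    """Stand-in for a locked Stable Diffusion block (its weights never change)."""
    return [2.0 * v + 1.0 for v in x]


def trainable_copy(x: Vector) -> Vector:
    """Trainable copy of the block; it starts out identical to the frozen one."""
    return [2.0 * v + 1.0 for v in x]


def controlled_block(x: Vector, cond: Vector, z1: float, z2: float) -> Vector:
    """Combine the frozen block with a conditioned branch.

    z1 scales the condition before it enters the copy, and z2 scales the
    copy's output before it is added back. Both are assumed to start at 0.
    """
    base = frozen_block(x)
    conditioned_input = [xi + z1 * ci for xi, ci in zip(x, cond)]
    branch = trainable_copy(conditioned_input)
    return [b + z2 * h for b, h in zip(base, branch)]


x = [1.0, 2.0]
cond = [0.5, -0.5]

# With both zero-initialized weights at 0, the condition has no effect yet.
print(controlled_block(x, cond, z1=0.0, z2=0.0) == frozen_block(x))

# Once the weights move away from 0, the condition changes the output.
print(controlled_block(x, cond, z1=1.0, z2=0.5))
```

The point of the zero initialization is that adding the control branch does not disturb the pretrained model at the start of training; the influence of the condition grows only as those weights are learned.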

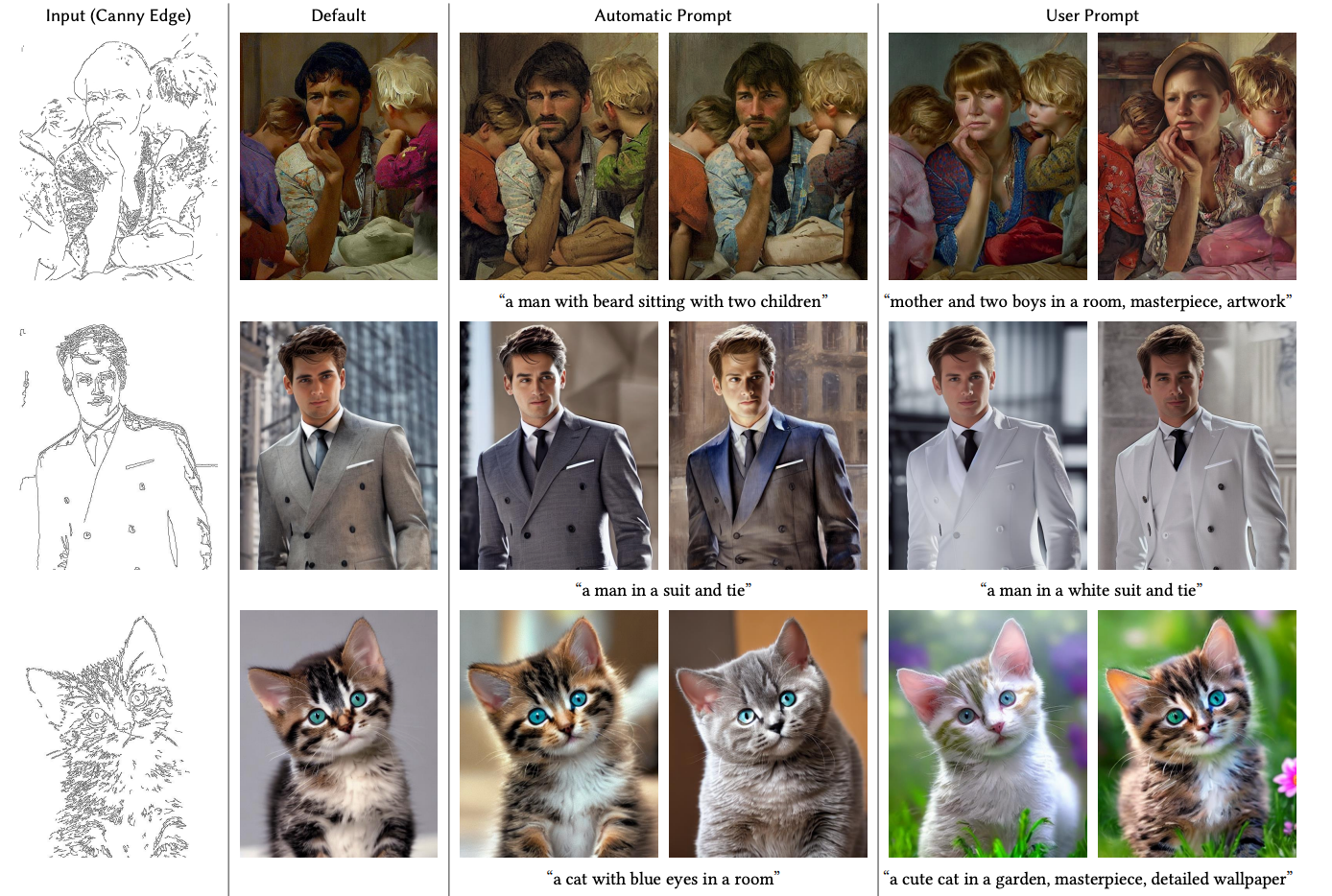

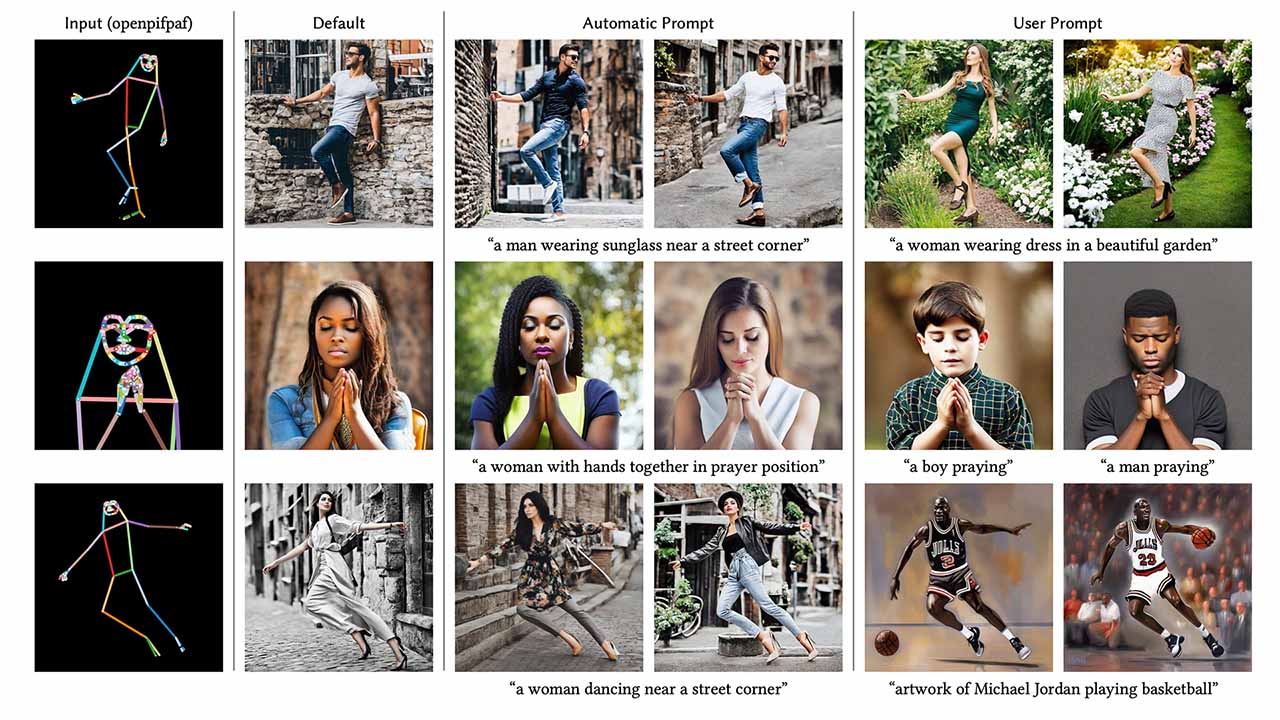

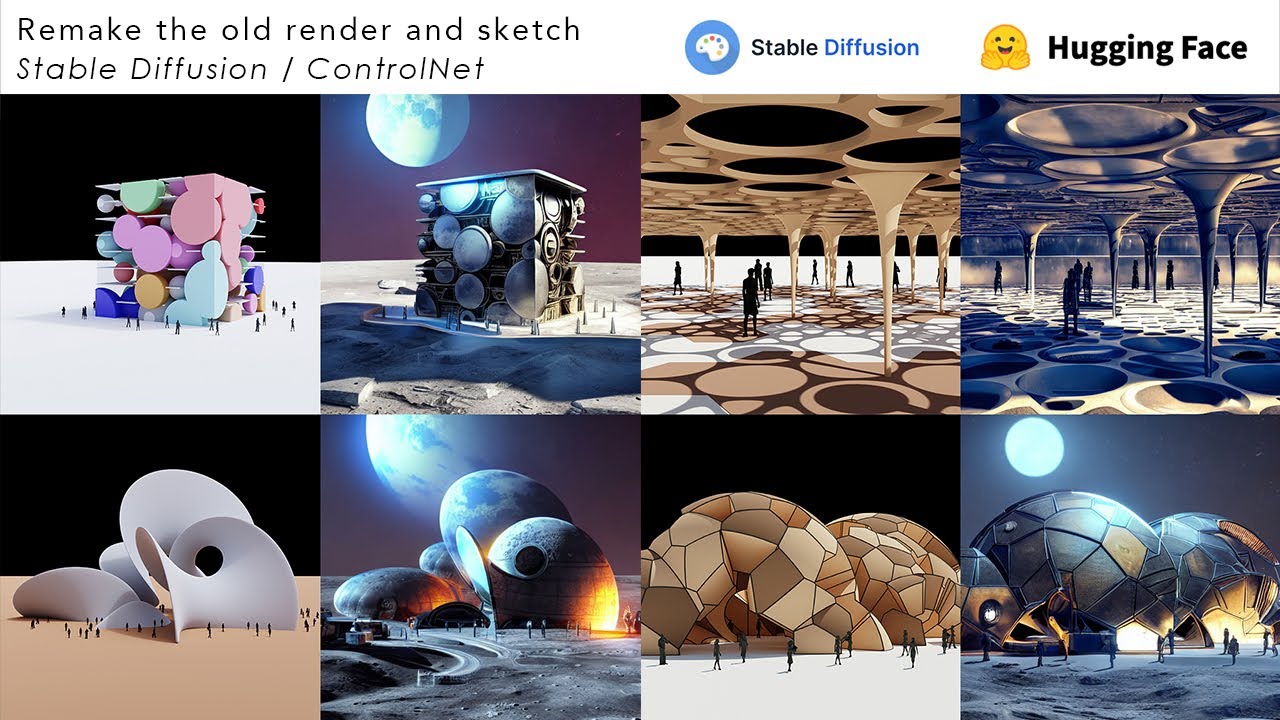

ControlNet is a neural network structure that controls diffusion models by adding extra conditions, and it brings a much finer level of control to Stable Diffusion image generation. Its main contribution is a solution to the problem of spatial consistency: the layout of the generated image follows the layout of the control input. One demo (platform: AMD 6700 XT on Ubuntu Linux) claims it allows 99% control of the subject drawn by Stable Diffusion. Whether you want to control pose, expression, placement, style, coloring, depth, orientation, or another characteristic, there is a ControlNet for it. With a ControlNet model, you provide an additional control image to condition Stable Diffusion generation. For example, if you provide a depth map, the generated image preserves the spatial information from the depth map.
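The depth-map case can be sketched with the Hugging Face `diffusers` library, which is one way to run ControlNet outside a web UI. This is a minimal sketch under several assumptions: the model ids below are examples rather than anything named in this article, the helper name `generate_from_depth` is hypothetical, and `torch`, `diffusers`, and `Pillow` must be installed. The heavy imports live inside the function so that the definition itself loads without them.

```python
from pathlib import Path

# Assumed Hugging Face model ids; swap in whichever checkpoints you use.
CONTROLNET_ID = "lllyasviel/sd-controlnet-depth"
BASE_MODEL_ID = "stable-diffusion-v1-5/stable-diffusion-v1-5"


def generate_from_depth(
    prompt: str,
    depth_map_path: str,
    out_path: str = "out.png",
    controlnet_id: str = CONTROLNET_ID,
    base_model_id: str = BASE_MODEL_ID,
    conditioning_scale: float = 1.0,
    steps: int = 20,
) -> Path:
    """Generate one image whose layout follows the given depth map."""
    # Imported lazily: these packages are large and optional.
    import torch
    from diffusers import ControlNetModel, StableDiffusionControlNetPipeline
    from PIL import Image

    # ROCm builds of PyTorch also report their GPU through torch.cuda.
    use_gpu = torch.cuda.is_available()
    device = "cuda" if use_gpu else "cpu"
    dtype = torch.float16 if use_gpu else torch.float32

    controlnet = ControlNetModel.from_pretrained(controlnet_id, torch_dtype=dtype)
    pipe = StableDiffusionControlNetPipeline.from_pretrained(
        base_model_id, controlnet=controlnet, torch_dtype=dtype
    ).to(device)

    # The control image: a depth map saved as an ordinary image file.
    depth_map = Image.open(depth_map_path).convert("RGB")

    result = pipe(
        prompt,
        image=depth_map,
        num_inference_steps=steps,
        controlnet_conditioning_scale=conditioning_scale,
    )
    out = Path(out_path)
    result.images[0].save(out)
    return out
```

A call such as `generate_from_depth("a cozy reading room", "depth.png")` would download the checkpoints on first use. Lowering `conditioning_scale` loosens how strictly the output follows the depth map.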

ControlNet is a powerful model for Stable Diffusion that you can install and run in a web UI such as AUTOMATIC1111 or ComfyUI. With it you can generate images in multiple passes and generate images by combining frames of different image poses. When running in a hosted notebook, make sure that you select Runtime > Change runtime type > GPU. Installing the requirements and setting up the conda environment currently takes around 10 minutes. This cell needs to run first. This blog post provides a step-by-step guide to installing ControlNet for Stable Diffusion, covering its features, installation process, and usage. Unlock the power of Stable Diffusion with local image generation and ControlNet, and master advanced AI art techniques for creative control and high-quality output.
