Fine-Tune LLMs to Teach Them Anything with Hugging Face and PyTorch: Step-by-Step Tutorial

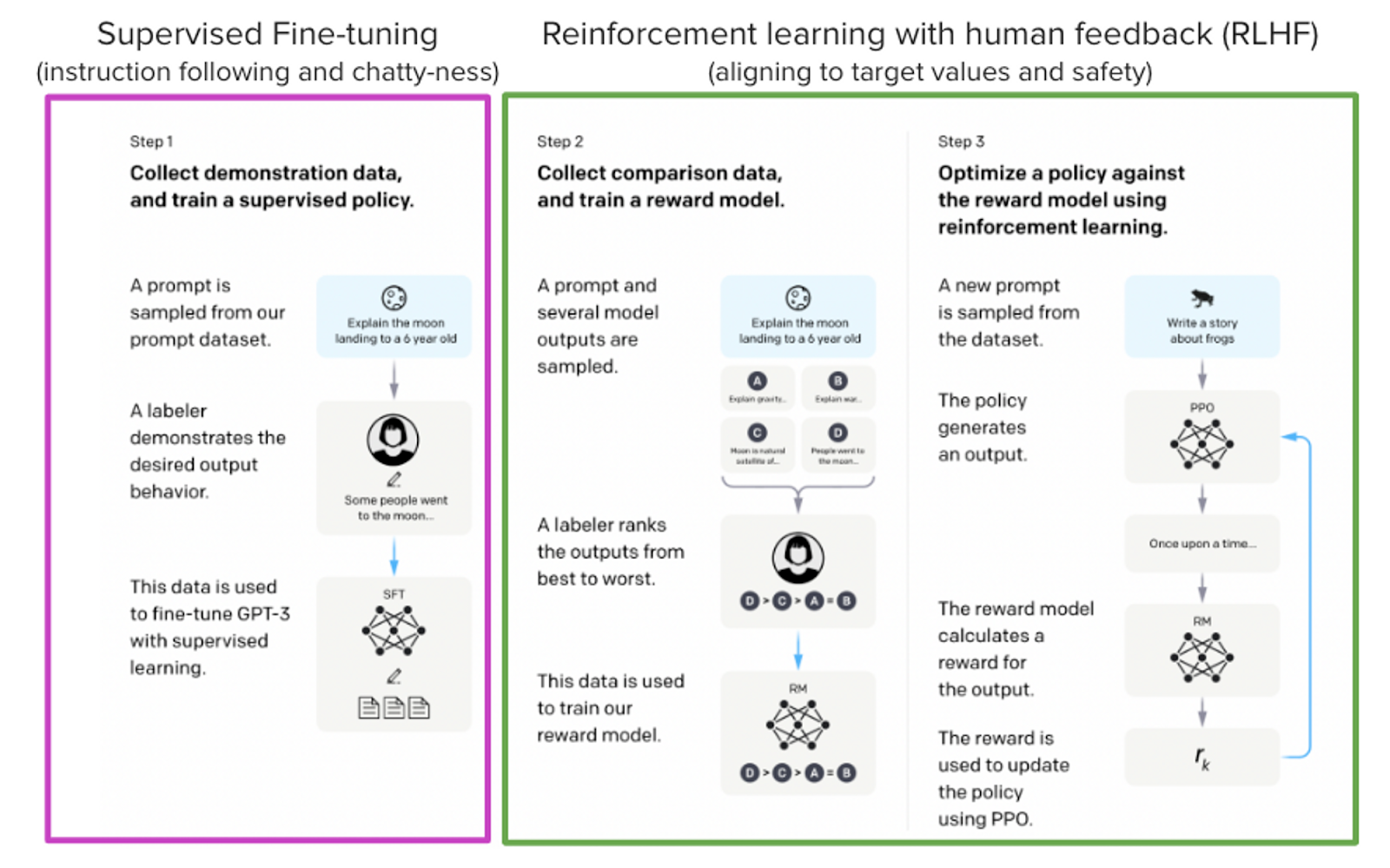

Free Video: Fine-Tuning LLMs with Hugging Face and PyTorch, A Step By

That's what you'll do in Chapter 0, the "TL;DR" chapter that will guide you through the entire journey of fine-tuning an LLM: quantization, low-rank adapters, dataset formatting, training, and querying the model. Our first step is to install the Hugging Face libraries and PyTorch, including trl, transformers, and datasets. If you haven't heard of trl yet, don't worry. It is a new library on top of...
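To make the dataset formatting step a little more concrete, here is a minimal sketch in plain Python. It assumes the raw data is a list of prompt/completion pairs and converts each pair into the conversational "messages" layout (a list of role/content dictionaries) that chat-style fine-tuning tools commonly expect. The column names, the placeholder rows, and the `to_messages` helper are hypothetical and are not taken from the tutorial itself.

```python
# Hypothetical helper: turn one prompt/completion pair into a chat-style record.
# The "prompt"/"completion" column names are assumptions for illustration.
def to_messages(example, prompt_key="prompt", completion_key="completion"):
    return {
        "messages": [
            {"role": "user", "content": example[prompt_key].strip()},
            {"role": "assistant", "content": example[completion_key].strip()},
        ]
    }


# Placeholder rows standing in for a real dataset (not real training data).
rows = [
    {"prompt": " <source sentence 1> ", "completion": "<target sentence 1>"},
    {"prompt": "<source sentence 2>", "completion": " <target sentence 2> "},
]

# One conversational record per original row, with whitespace trimmed.
formatted = [to_messages(row) for row in rows]

for record in formatted:
    print(record)
```

Keeping the conversion in a small function like this makes it easy to apply row by row to a larger dataset, and to swap in different column names if your data uses them.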

Fine-Tune LLMs on Your Own Consumer Hardware Using Tools from PyTorch

This blog post contains "Chapter 0: TL;DR" of my latest book, A Hands-On Guide to Fine-Tuning Large Language Models with PyTorch and Hugging Face. In this blog post, we'll get right to it and fine-tune a small language model, Microsoft's Phi-3 Mini 4K Instruct, to translate English into Yoda-speak.

Today, we're going to delve into fine-tuning large language models (LLMs) locally. This guide is designed for AI enthusiasts familiar with Python and common AI tools.

Fine-tuning large language models (LLMs) doesn’t have to be intimidating. In this article, you’ll learn how to fine-tune a transformer model from scratch using Hugging Face.

Learn to fine-tune large language models (LLMs) locally through a comprehensive step-by-step tutorial that demonstrates using Meta's Llama 3.2 1B Instruct model with Hugging Face Transformers and PyTorch.

We demonstrate how to fine-tune a 7B-parameter model on a typical consumer GPU (NVIDIA T4 16GB) with LoRA and tools from the PyTorch and Hugging Face ecosystem, with a complete, reproducible Google Colab notebook.

This in-depth tutorial is about fine-tuning LLMs locally with Hugging Face Transformers and PyTorch.

The only guide you need to fine-tune open LLMs in 2025, including QLoRA, Spectrum, Flash Attention, Liger Kernels, and more.

This article will examine how to fine-tune an LLM from Hugging Face, covering model selection, the fine-tuning process, and an example implementation. The initial step before fine-tuning is choosing an appropriate pre-trained LLM.
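A rough back-of-the-envelope calculation helps show why quantization and LoRA matter on a 16GB card. The sketch below is plain Python and rests on stated assumptions: it counts weight storage only (ignoring activations, optimizer state, and quantization overhead), and it uses an illustrative 4096 x 4096 weight matrix with LoRA rank 8. Those dimensions are not taken from any specific model mentioned above, and the helper names are hypothetical.

```python
# Weights-only memory estimate; real usage is higher (activations, optimizer
# state, framework overhead), so treat these numbers as a lower bound.
def weight_memory_gib(n_params, bits_per_param):
    return n_params * bits_per_param / 8 / 2**30


# LoRA replaces the update to a d_out x d_in matrix with two low-rank factors:
# B (d_out x rank) and A (rank x d_in), so it trains rank * (d_in + d_out) values.
def lora_trainable_params(d_out, d_in, rank):
    return rank * (d_in + d_out)


n_params = 7_000_000_000  # "7B" model, as in the text

fp16_gib = weight_memory_gib(n_params, 16)  # 16-bit weights
int4_gib = weight_memory_gib(n_params, 4)   # 4-bit quantized weights

# Illustrative layer size and rank (assumptions, not from a specific model).
d_out, d_in, rank = 4096, 4096, 8
full_params = d_out * d_in
lora_params = lora_trainable_params(d_out, d_in, rank)

print(f"16-bit weights: {fp16_gib:.2f} GiB")
print(f"4-bit weights:  {int4_gib:.2f} GiB")
print(f"Full matrix: {full_params:,} params; LoRA (r={rank}): {lora_params:,} params")
```

Under these assumptions, the 16-bit weights alone take up most of a 16GB GPU, while 4-bit weights leave headroom for training, and the LoRA factors for the example matrix hold 256 times fewer trainable values than the full matrix.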
