Fine-Tuning Models: The Art of Fine-Tuning Models, by Saba

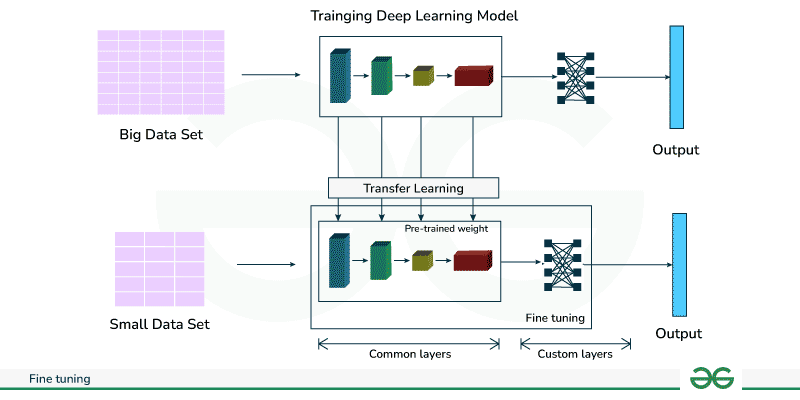

In a world where data and tasks are diverse, fine-tuning offers a path toward harnessing the latent potential of pre-trained architectures for a multitude of applications. Unlike pre-training, where a model learns to predict the next word from general text, fine-tuning trains the model on a smaller dataset tailored specifically to instruction following.

Transformer-based models have consistently demonstrated superior accuracy compared with traditional models across a range of downstream tasks. However, because of their size, training or fine-tuning them for specific tasks has heavy computational and memory demands. The analysis differentiates between fine-tuning methodologies, including supervised, unsupervised, and instruction-based approaches, and underscores their respective implications for specific tasks. It also introduces a structured seven-stage pipeline for LLM fine-tuning that covers the complete lifecycle from data preparation to model deployment.

Fine-tuning large language models such as GPT-3, BERT, and Llama 2 customizes them for a given application, improving model performance and application specificity in data analysis and AI integration.

Unlike conventional methods, this paradigm enables efficient fine-tuning even with limited labeled data, making it particularly valuable for social science research, where annotated samples are scarce.
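To put the computational and memory demands mentioned above in rough numbers, here is a back-of-the-envelope sketch. It assumes full fine-tuning with the Adam optimizer in mixed precision, a common rule of thumb of about 16 bytes per parameter (half-precision weights and gradients plus single-precision master weights and two Adam moment buffers). That figure, the function name, and the 1.5-billion-parameter example are illustrative assumptions, not numbers from the text, and activation memory is ignored entirely.

```python
# Rough memory estimate for model states only (activations are NOT included).
# Assumption: mixed-precision training with Adam, per-parameter cost in bytes:
#   2 (fp16 weights) + 2 (fp16 gradients)
# + 4 (fp32 master weights) + 4 (Adam first moment) + 4 (Adam second moment)
BYTES_PER_PARAM_TRAINING = 2 + 2 + 4 + 4 + 4  # 16 bytes
BYTES_PER_PARAM_INFERENCE = 2                 # fp16 weights only


def estimate_memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Return the estimated memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Hypothetical example: a model with 1.5 billion parameters.
num_params = 1_500_000_000
training_gb = estimate_memory_gb(num_params, BYTES_PER_PARAM_TRAINING)
inference_gb = estimate_memory_gb(num_params, BYTES_PER_PARAM_INFERENCE)
print(f"full fine-tuning: ~{training_gb:.0f} GB, inference: ~{inference_gb:.0f} GB")
```

Under these assumptions, full fine-tuning needs roughly eight times the memory of simply loading the half-precision weights, before any activations are counted.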

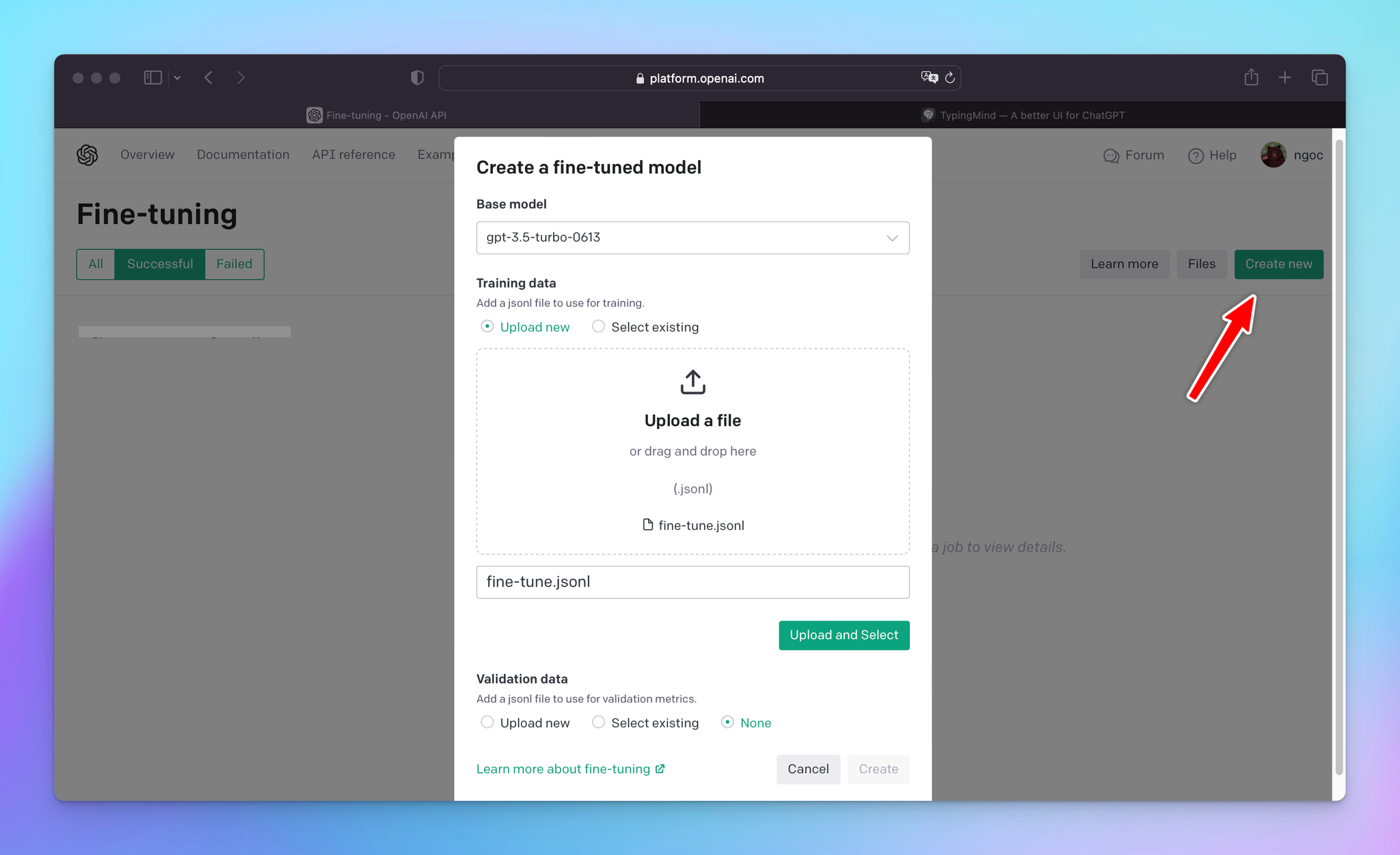

Supervised fine-tuning (SFT) is the dominant paradigm for adapting large pre-trained models, spanning diffusion models, LLMs, and vision-language architectures, to downstream tasks or domain-specific behaviors. This page provides a step-by-step guide to fine-tuning the deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B model using the SFTTrainer. By following these steps, you can adapt the model to perform specific tasks more effectively. Before diving into implementation, it's important to understand when SFT is the right choice for your project.

Fine-tuning isn't just theoretical; it's transforming industries. We've used it to create models that handle complex documents with tables, forms, and graphic elements that often confuse standard language models.
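A minimal sketch of what such an SFT run could look like, assuming the `SFTTrainer` mentioned above is the one from Hugging Face's `trl` library and that the model lives on the Hugging Face Hub under the ID `deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B`. The helper names, the toy question/answer pairs, and the hyperparameter values are hypothetical placeholders, not settings taken from this page.

```python
# Assumed Hub ID for the model named in the text.
MODEL_ID = "deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B"

# Toy placeholder data; a real run needs a task-specific dataset.
EXAMPLE_PAIRS = [
    ("What is fine-tuning?", "Further training a pre-trained model on a smaller, task-specific dataset."),
    ("What does SFT stand for?", "Supervised fine-tuning."),
]


def to_chat_example(question: str, answer: str) -> dict:
    """Wrap one question/answer pair in the conversational "messages" format."""
    return {
        "messages": [
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def run_sft(pairs, output_dir: str = "sft-output") -> None:
    """Fine-tune MODEL_ID on the given (question, answer) pairs and save the result."""
    # Third-party imports are deferred so the helper above works without them installed.
    from datasets import Dataset
    from trl import SFTConfig, SFTTrainer

    train_dataset = Dataset.from_list([to_chat_example(q, a) for q, a in pairs])

    # Hypothetical hyperparameters; tune them for your data and hardware.
    config = SFTConfig(
        output_dir=output_dir,
        num_train_epochs=1,
        per_device_train_batch_size=1,
        learning_rate=2e-5,
    )

    # Passing a model ID string lets the trainer load the model itself.
    trainer = SFTTrainer(model=MODEL_ID, args=config, train_dataset=train_dataset)
    trainer.train()
    trainer.save_model(output_dir)
```

Calling `run_sft(EXAMPLE_PAIRS)` would download the model and start training, so it needs `trl` and `datasets` installed and enough memory for a 1.5B-parameter model.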
