Fine-Tuning Large Language Models (LLMs) in 2024 | SuperAnnotate

While the foundational training of LLMs offers a broad understanding of language, it’s the fine-tuning process that molds these models into specialized tools capable of understanding niche topics and delivering more precise results. Why this works better: instead of letting LLMs do the work, this method leverages proven learning principles (spaced repetition, active recall, and elaborative rehearsal).

This report examines the fine-tuning of large language models (LLMs), integrating theoretical insights with practical applications. It outlines the historical evolution of LLMs from traditional natural language processing (NLP) models to their pivotal role in AI. In this review, we outline some of the major methodologic approaches and techniques that can be used to fine-tune LLMs for specialized use cases and enumerate the general steps required for carrying out LLM fine-tuning. Stay ahead of the curve in 2024 with our website on fine-tuning large language models (LLMs). Explore the latest techniques and advancements to optimize your language processing. Fine-tuning refers to the process of taking a pre-trained model and adapting it to a specific task by training it further on a smaller, domain-specific dataset.
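To make the definition concrete, here is a deliberately tiny sketch of the idea in plain Python. It is not an LLM and not a recipe from any of the sources quoted above: it assumes a hypothetical one-parameter model `y = w * x`, a made-up "pre-trained" weight, and a made-up three-example domain dataset. The only point is the shape of the process: start from existing weights rather than from scratch, then continue gradient descent on a small, task-specific dataset.

```python
# Toy illustration only: every name and number below is hypothetical.

def mse(w, data):
    """Mean squared error of the model y = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, data, lr=0.01, epochs=200):
    """Continue gradient descent starting from the pre-trained weight w."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

# Stands in for weights learned during broad pre-training.
pretrained_w = 2.0

# Small, domain-specific dataset; here the target relation is y = 2.5 * x.
domain_data = [(1.0, 2.5), (2.0, 5.0), (3.0, 7.5)]

tuned_w = fine_tune(pretrained_w, domain_data)
loss_before = mse(pretrained_w, domain_data)
loss_after = mse(tuned_w, domain_data)
```

In this toy setting the weight moves from its pre-trained value toward the value that fits the domain data, and the loss on that data drops. Real LLM fine-tuning follows the same pattern with far more parameters, a tokenized text dataset, and a training library in place of the hand-written loop.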

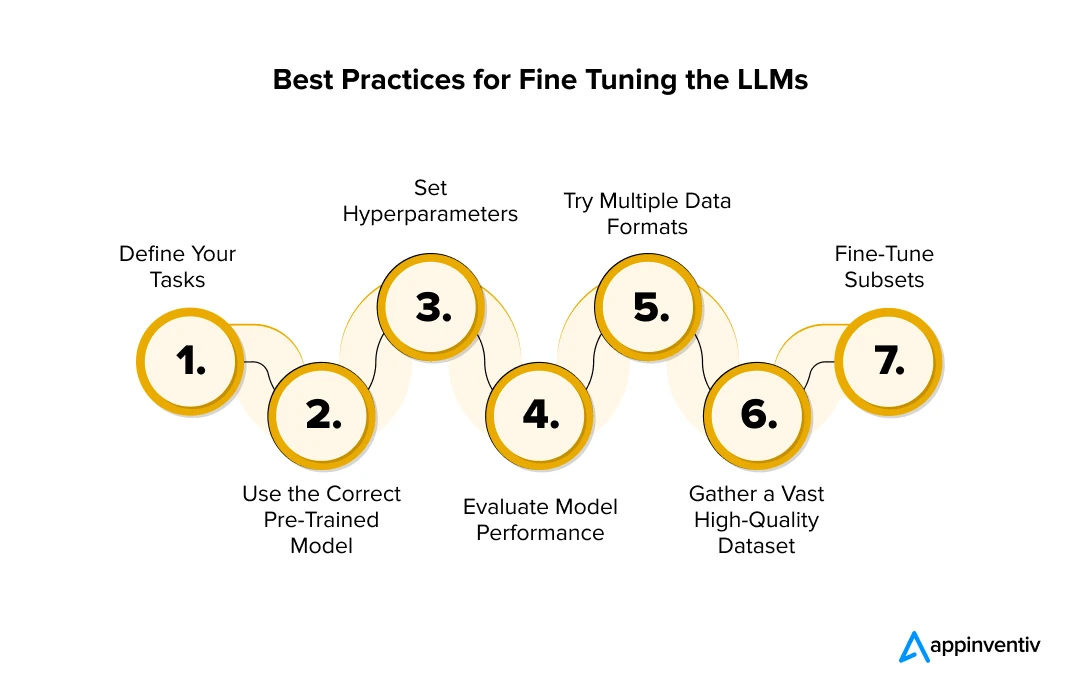

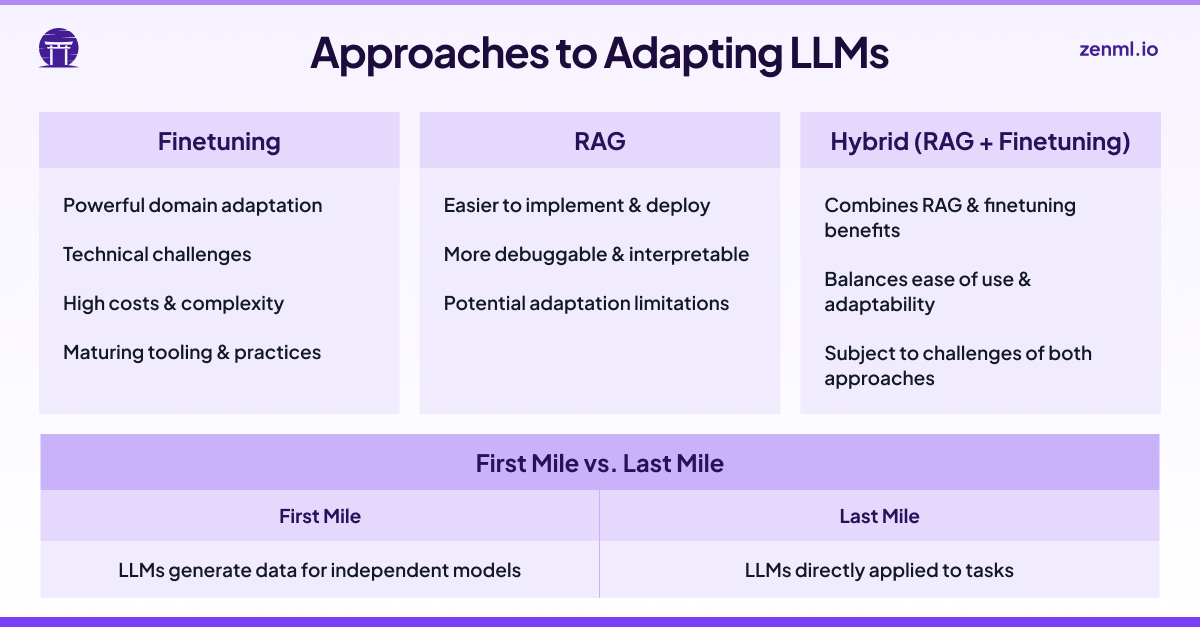

This is the 5th article in a series on using large language models (LLMs) in practice. In this post, we will discuss how to fine-tune (FT) a pre-trained LLM. In recent years, large language models (LLMs) have been a hot topic in artificial intelligence research, profoundly impacting many fields, including education. In this guide, we’ll cover the complete fine-tuning process, from defining goals to deployment. We’ll also highlight why dataset creation is the most crucial step and how using a larger LLM for filtering can make your smaller model much smarter. In essence, we can use pretrained large language models for new tasks in two main ways: in-context learning and fine-tuning. In this article, we will briefly go over what in-context learning means, and then we will go over the various ways we can fine-tune LLMs.
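As a rough illustration of the first of those two routes, in-context learning places a handful of worked examples directly in the prompt and leaves the model's weights untouched. The sketch below only builds such a few-shot prompt as a string; the helper name `build_few_shot_prompt`, the sentiment task, and the example reviews are all assumptions made for illustration, and no model is called.

```python
def build_few_shot_prompt(examples, query):
    """Assemble a few-shot prompt: instruction, labeled examples, then the new input."""
    lines = ["Classify the sentiment of each review as positive or negative.", ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final entry is left unlabeled for the model to complete.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)

# Hypothetical labeled examples supplied in the prompt instead of in training data.
examples = [
    ("Great battery life.", "positive"),
    ("Stopped working after a week.", "negative"),
]

prompt = build_few_shot_prompt(examples, "The screen is sharp and bright.")
print(prompt)
```

The resulting string would be sent to a pretrained model as-is. Fine-tuning, by contrast, would use labeled pairs like these as training data to update the model's weights.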

