Figure 4 From Memory Level And Thread Level Parallelism Aware GPU

To provide insights into the performance bottlenecks of parallel applications on GPU architectures, we propose a simple analytical model that estimates the execution time of massively parallel programs.

The model is presented in the paper "An Analytical Model for a GPU Architecture with Memory-level and Thread-level Parallelism Awareness" by Sunpyo Hong and Hyesoon Kim.

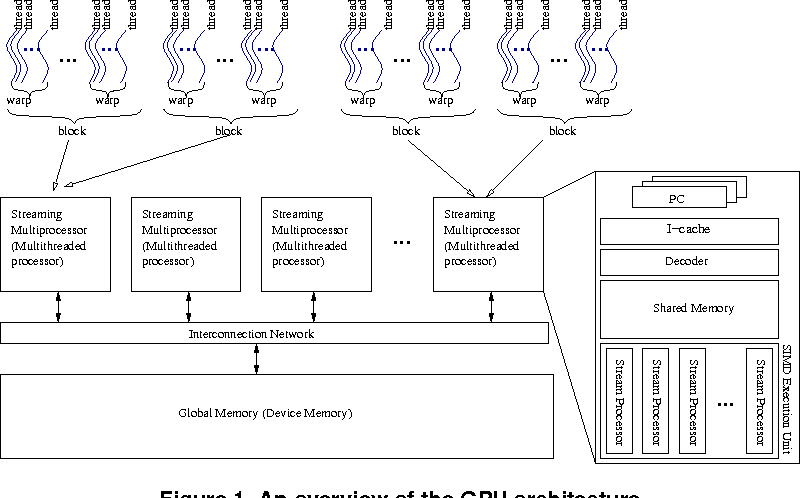

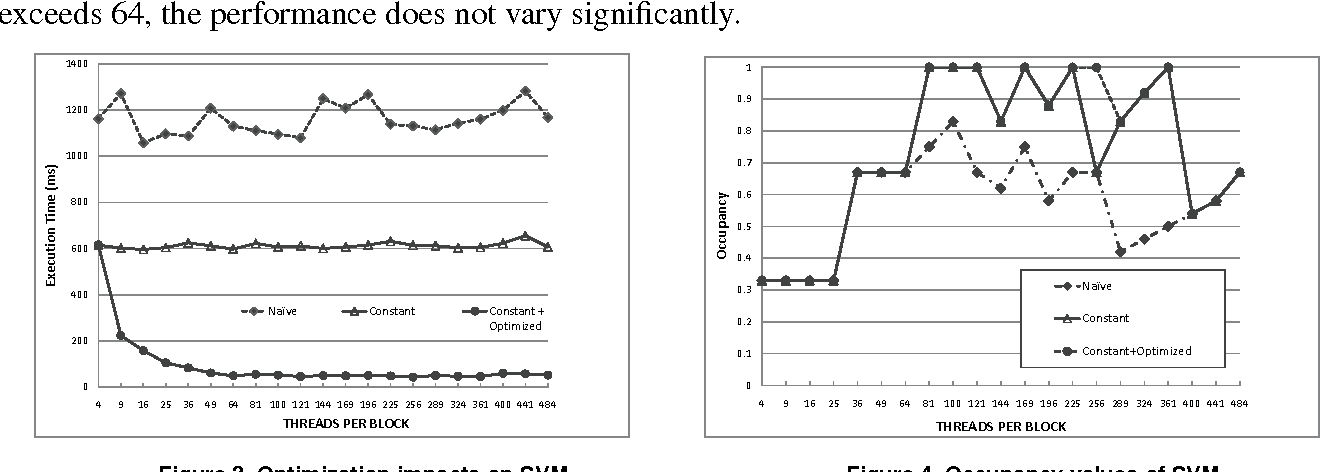

Figure 1 From Memory Level And Thread Level Parallelism Aware GPU

Programming thousands of massively parallel threads is a big challenge for software engineers, but understanding the performance bottlenecks of those parallel programs on GPU architectures to improve application performance is even more difficult. A related thesis presents a comprehensive analysis of memory access patterns that fully incorporates the influence of thread mapping, explains the memory behavior of kernels running on GPU hardware, and presents an algorithmic methodology to address memory inefficiency issues.
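To make the link between thread mapping and memory behavior concrete, here is a minimal sketch that counts how many memory segments one warp touches for a given access stride. It is not the methodology of the thesis or the paper's model. It assumes a 32-thread warp, 4-byte elements, 128-byte memory segments, and an aligned base address, and the function name `transactions_per_warp` is hypothetical.

```python
def transactions_per_warp(stride_elems, warp_size=32, elem_bytes=4, segment_bytes=128):
    """Count distinct memory segments touched by one warp.

    Assumption: thread t reads the element at index t * stride_elems,
    starting from a base address aligned to segment_bytes.
    """
    segments = {
        (t * stride_elems * elem_bytes) // segment_bytes
        for t in range(warp_size)
    }
    return len(segments)


if __name__ == "__main__":
    # Unit stride keeps the whole warp inside one segment; larger strides
    # spread the same 32 reads over more segments.
    for stride in (1, 2, 32):
        print(f"stride={stride}: {transactions_per_warp(stride)} segment(s)")
```

Under these assumptions, a unit stride needs one segment per warp while a stride of 32 elements needs 32, which is the kind of memory inefficiency that changing the thread-to-data mapping can reduce.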

