Figure 2 From Understanding How Memory Level Parallelism Affects The

It is shown that exploiting memory-level parallelism (MLP) is an effective approach for improving the performance of memory-bound commercial applications, and that microarchitecture has a profound impact on achievable MLP. As the gap between processor and memory performance increases, performance loss due to long-latency memory accesses becomes a primary problem.
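A common way to put a number on MLP is the average number of outstanding long-latency misses, counted only over the cycles in which at least one miss is outstanding. An MLP of 1 means misses are fully serialized. The sketch below computes that average from a list of miss intervals. It is a minimal illustration, assuming each miss is given as a half-open cycle interval `[start, end)`. The function name `average_mlp` and this input format are hypothetical, not taken from any particular simulator.

```python
from typing import List, Tuple


def average_mlp(misses: List[Tuple[int, int]]) -> float:
    """Average number of outstanding misses over the cycles in which at
    least one miss is outstanding.

    Hypothetical helper: each miss is a half-open cycle interval [start, end).
    """
    if not misses:
        return 0.0
    # Sweep line over events: +1 when a miss is issued, -1 when it completes.
    events = []
    for start, end in misses:
        if end <= start:
            raise ValueError("each miss must satisfy start < end")
        events.append((start, 1))
        events.append((end, -1))
    events.sort()

    outstanding = 0
    busy_cycles = 0   # cycles with at least one outstanding miss
    miss_cycles = 0   # sum over those cycles of the outstanding-miss count
    prev_time = events[0][0]
    for time, delta in events:
        span = time - prev_time
        if outstanding > 0:
            busy_cycles += span
            miss_cycles += outstanding * span
        outstanding += delta
        prev_time = time
    return miss_cycles / busy_cycles
```

For example, two misses that overlap completely give `average_mlp([(0, 100), (0, 100)]) == 2.0`, while two back-to-back misses, `[(0, 100), (100, 200)]`, give `1.0`.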

In the context of parallel computing, parallelism is frequently associated with loop-level parallelism, where iterations of a loop are distributed across multiple processors so that several iterations execute simultaneously. Within a single core, MLP is bounded by the miss status holding registers (MSHRs) that track outstanding cache misses. The first few memory accesses that miss in the L1 cache all fit in the MSHR table and are hence considered to execute in parallel. All subsequent main-memory accesses that would overflow the MSHR table have to wait until one of the outstanding accesses is resolved.
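The MSHR limit described above can be modeled with a small simulation. This is a sketch under simplifying assumptions: every miss has the same fixed latency, misses claim MSHR entries in issue order, and `issue_cycles` is non-decreasing. The function `miss_completion_times` and its parameters are hypothetical names chosen for illustration.

```python
import heapq
from typing import List


def miss_completion_times(issue_cycles: List[int], latency: int, num_mshrs: int) -> List[int]:
    """Return the completion cycle of each miss, in issue order.

    A miss can start only when an MSHR entry is free; otherwise it waits
    until the earliest outstanding miss completes and releases its entry.
    """
    if num_mshrs < 1 or latency < 1:
        raise ValueError("num_mshrs and latency must be positive")
    busy_until: List[int] = []  # min-heap of completion cycles of occupied MSHRs
    completions: List[int] = []
    for issue in issue_cycles:
        # Free every MSHR whose miss has completed by the time this miss is ready.
        while busy_until and busy_until[0] <= issue:
            heapq.heappop(busy_until)
        start = issue
        if len(busy_until) == num_mshrs:
            # Table is full: wait for the earliest outstanding miss to resolve.
            start = heapq.heappop(busy_until)
        done = start + latency
        heapq.heappush(busy_until, done)
        completions.append(done)
    return completions
```

With four MSHRs, eight misses issued in cycle 0 with a 100-cycle latency complete in two waves, at cycles 100 and 200, instead of one after another up to cycle 800.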

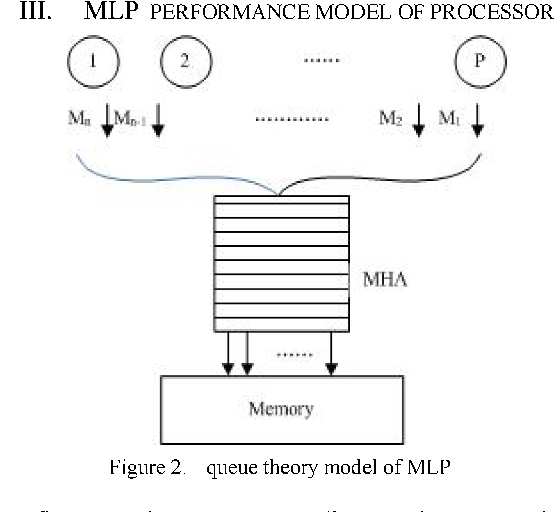

In this paper, we propose compiler support that optimizes both the latencies of last-level cache (LLC) hits and the latencies of LLC misses. Our approach tries to achieve this goal by improving the parallelism exhibited by LLC hits and LLC misses.

In computer architecture, memory-level parallelism (MLP) is the ability to have multiple memory operations pending at the same time, in particular cache misses or translation lookaside buffer (TLB) misses. In a single processor, MLP may be considered a form of instruction-level parallelism (ILP).

Microarchitectures that exploit MLP generate a large number of concurrent memory accesses. These accesses need support at two different levels: at the load-store queue (LSQ) and at the cache hierarchy. At the LSQ level, they need an LSQ that provides efficient address disambiguation and store-to-load forwarding.

We construct MLP stacks from a rigorous analysis of parallelism in memory requests across the memory hierarchy, and connect this analysis to the program's CPI (cycles per instruction) and run time.
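MLP requires that misses be independent: a miss whose address depends on the data returned by another miss (pointer chasing in a linked list, for instance) cannot be issued until that miss resolves. The toy model below is an illustrative assumption, not the compiler support described above. It computes when the last miss completes given each miss's dependence, assuming unlimited MSHRs and a fixed miss latency. `finish_time` and its `deps` encoding are hypothetical.

```python
from typing import List, Optional


def finish_time(deps: List[Optional[int]], latency: int) -> int:
    """Cycle at which the last miss completes.

    deps[i] is the index of an earlier miss whose data supplies miss i's
    address (pointer chasing), or None if miss i's address is known up front.
    Assumes unlimited MSHRs and that independent misses all issue at cycle 0.
    """
    done: List[int] = []
    for i, dep in enumerate(deps):
        if dep is not None and not 0 <= dep < i:
            raise ValueError("a miss can only depend on an earlier miss")
        # A dependent miss can start only once the miss it depends on returns.
        ready = 0 if dep is None else done[dep]
        done.append(ready + latency)
    return max(done, default=0)
```

Under this model, walking a 4-node linked list with 100-cycle misses (`[None, 0, 1, 2]`) takes 400 cycles, four independent array loads (`[None] * 4`) take 100 cycles, and interleaving two independent 2-node lists (`[None, None, 0, 1]`) takes 200 cycles. Interleaving independent traversals is one generic way to expose more MLP; it is shown here only as an example.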

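The address disambiguation and forwarding that an LSQ performs can be sketched as a lookup over the stores that are older than a given load. This is a simplified, conservative model. It assumes word-sized, aligned accesses, so two accesses alias only if their addresses are equal, and it assumes the LSQ stalls a load whenever an older store's address is still unknown (real designs may speculate instead). `StoreEntry` and `resolve_load` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class StoreEntry:
    address: Optional[int]  # None while the store's address is still being computed
    value: int


def resolve_load(older_stores: List[StoreEntry], load_address: int) -> Tuple[str, Optional[int]]:
    """Decide what a load does, given the stores older than it (oldest first).

    Returns ("forward", value), ("stall", None) or ("cache", None).
    """
    # Scan from youngest to oldest: the youngest matching older store supplies the value.
    for store in reversed(older_stores):
        if store.address is None:
            # An unknown address might alias the load, so a conservative LSQ waits.
            return ("stall", None)
        if store.address == load_address:
            # Store-to-load forwarding: take the value straight from the queue.
            return ("forward", store.value)
    # No older store aliases the load: it can safely read from the cache.
    return ("cache", None)
```

Scanning from the youngest older store backward means a matching store that is younger than an unknown-address store can still forward its value, because it would overwrite whatever the unknown store writes.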

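To see how memory stalls feed into CPI and run time, a first-order model can split CPI into a base component and a memory component, approximating memory stall cycles as misses × latency / MLP. This is a deliberately simplified sketch, not the MLP-stack construction described above. It assumes a fixed miss latency, a single average MLP, and no overlap between misses and useful computation. `cpi_stack` and its parameters are hypothetical.

```python
from typing import Dict


def cpi_stack(instructions: int, base_cpi: float, llc_misses: int,
              miss_latency: float, mlp: float) -> Dict[str, float]:
    """First-order CPI breakdown into a base component and a memory component."""
    if instructions <= 0:
        raise ValueError("instructions must be positive")
    if mlp < 1:
        raise ValueError("MLP is at least 1 whenever a miss is outstanding")
    # Overlapping misses share their stall time, so divide total miss latency by MLP.
    memory_stall_cycles = llc_misses * miss_latency / mlp
    memory_cpi = memory_stall_cycles / instructions
    total_cpi = base_cpi + memory_cpi
    return {
        "base": base_cpi,
        "memory": memory_cpi,
        "total": total_cpi,
        # Run time in cycles = instruction count x CPI.
        "cycles": total_cpi * instructions,
    }
```

For 1,000,000 instructions with a base CPI of 1.0 and 10,000 LLC misses of 200 cycles each, the memory component is 2.0 CPI when misses are serialized (MLP = 1) and 0.5 CPI at an MLP of 4. That cuts the run time from 3,000,000 to 1,500,000 cycles.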
