Figure 2 From Large Language Models Help Humans Verify Truthfulness

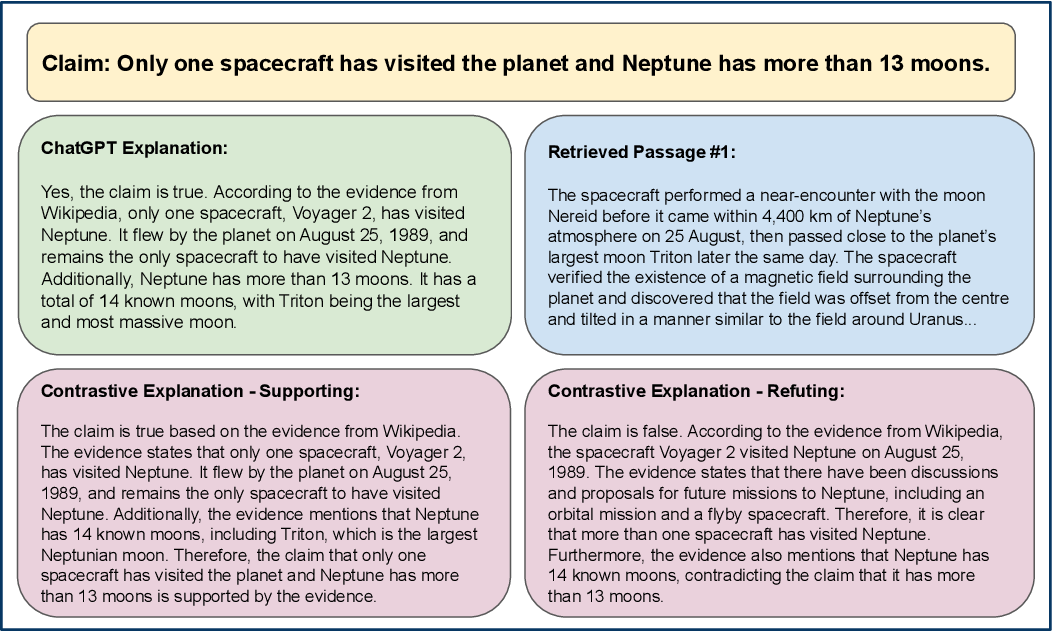

Figure 3 From Large Language Models Help Humans Verify Truthfulness

Large language models (LLMs) are increasingly used for accessing information on the web. Their truthfulness and factuality are thus of great interest. To help users make the right decisions about the information they get, LLMs should not only provide information but also help users fact-check it. We conduct human experiments with 80 crowdworkers to compare language models with search engines (information retrieval systems) at facilitating fact-checking. We prompt LLMs to validate a given claim and provide corresponding explanations.

A Survey Of Safety And Trustworthiness Of Large Language Models Through

Large language models (LLMs) can produce erroneous responses that sound fluent and convincing, raising the risk that users will rely on these responses as if they were correct. In this paper, we conduct experiments with 80 crowdworkers in total to compare language models with search engines (information retrieval systems) at facilitating fact-checking by human users. This study highlights that natural language explanations by LLMs may not be a reliable replacement for reading the retrieved passages, especially in high-stakes settings where over-relying on wrong AI explanations could lead to critical consequences.

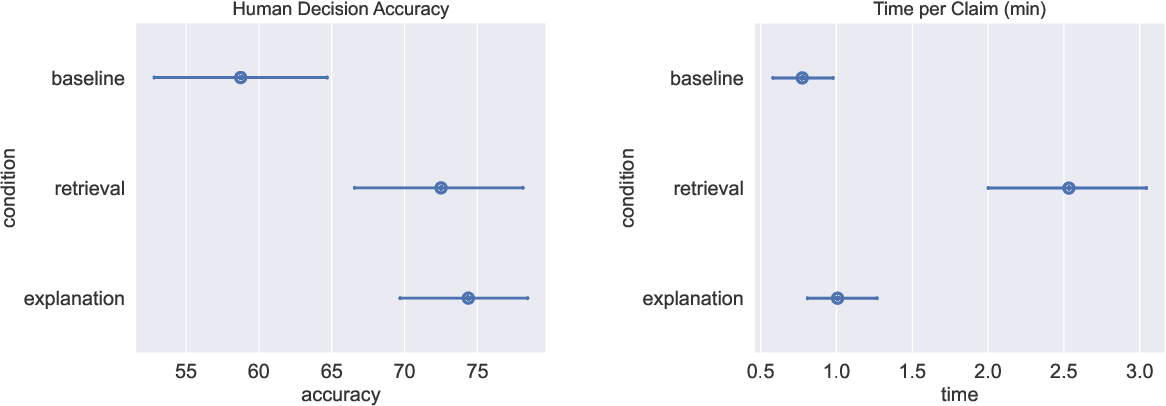

Figure 1 From Large Language Models Help Humans Verify Truthfulness

Large language models (LLMs) effectively assist users in fact-checking via explanations, but users over-rely on them when explanations are incorrect. Providing contrastive information partially reduces this over-reliance but does not outperform search engines.

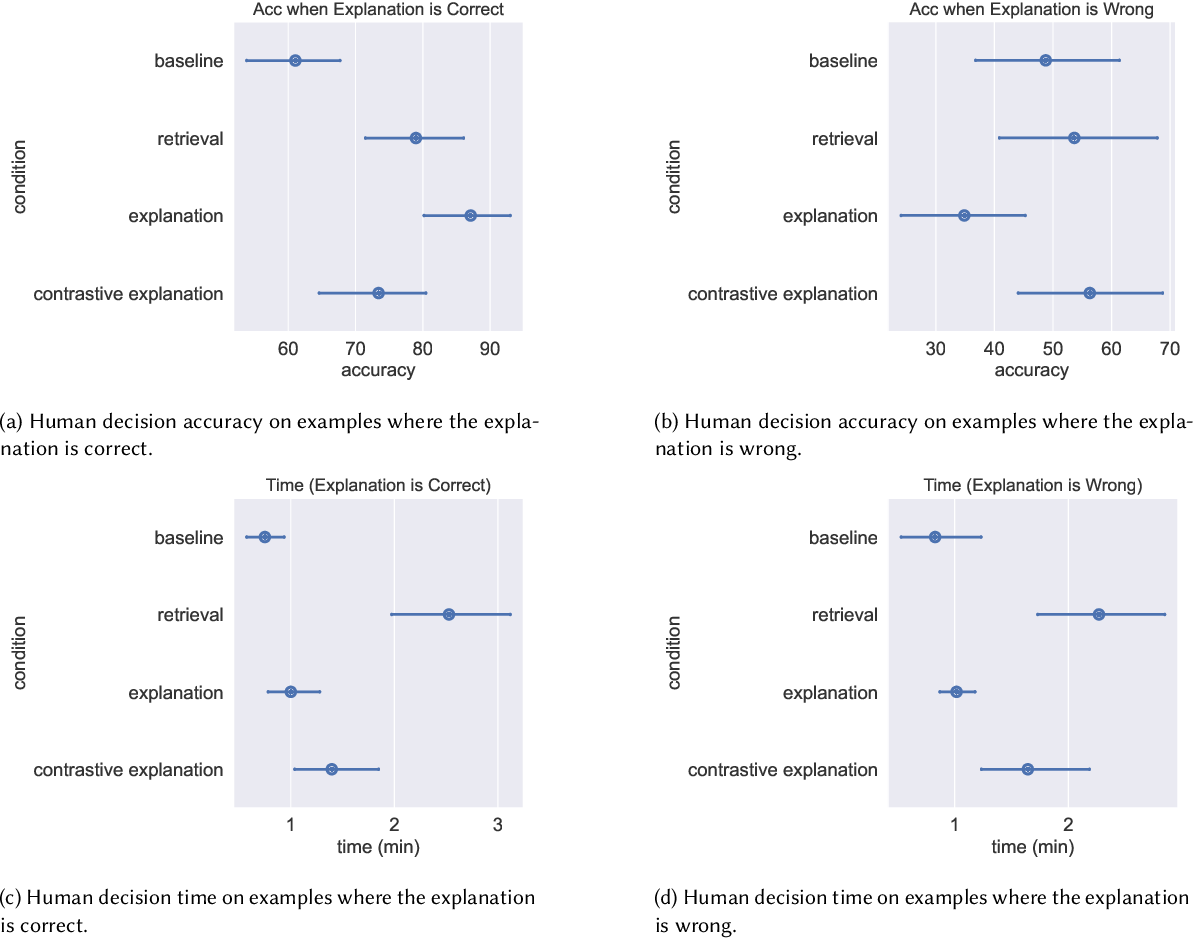

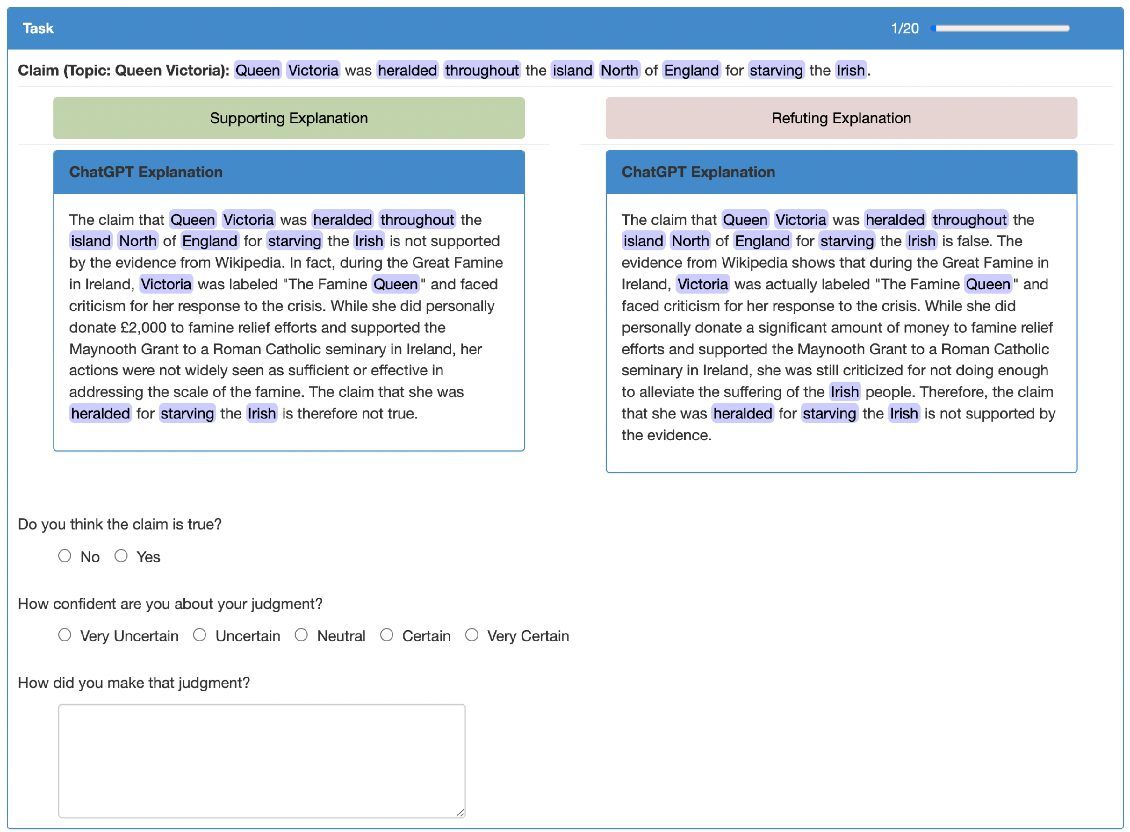

Figure 5 From Large Language Models Help Humans Verify Truthfulness
