Figure 1 From Reverse Engineering Self-Supervised Learning

Self-Supervised Learning: Generative or Contrastive

This work scrutinizes various self-supervised learning approaches from an information-theoretic perspective. It introduces a unified framework that encapsulates the self-supervised information-theoretic learning problem, with the aim of better understanding these approaches through this unified view. Remarkably, we show that learned representations align with semantic classes across various hierarchical levels, and that this alignment increases during training and when moving deeper into the network.

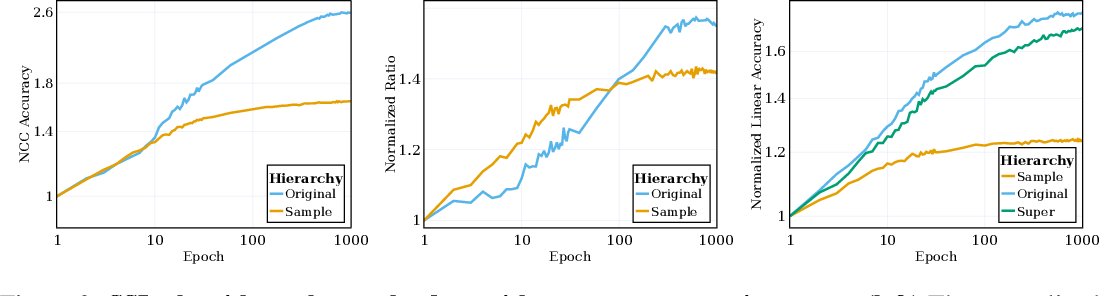

Figure 1: VICReg clusters the data with respect to semantic targets. NCC (nearest class-center) train accuracy of an SSL-trained RES-10-250 on Tiny-ImageNet, measured at the sample level and for the original classes (both unnormalized and normalized).

Self-Supervised Representation Learning: Introduction, Advances and Challenges

Our study reveals an intriguing aspect of the SSL training process: it inherently clusters samples with respect to their semantic labels, and, surprisingly, this clustering is driven by the regularization term of the SSL objective. In essence, our findings suggest that while self-supervised learning directly targets sample-level clustering, most of the training time is spent organizing the data into clusters that follow semantic classes across various hierarchies.
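The NCC (nearest class-center) accuracy reported in Figure 1 can be read as follows: compute the mean embedding (center) of each class, assign every sample to its nearest center, and count how often that center belongs to the sample's own class. Below is a minimal pure-Python sketch of that idea, assuming Euclidean distance and embeddings given as lists of floats. `class_centers` and `ncc_accuracy` are hypothetical helpers, not the paper's code, and the normalized variant shown in the figure is not reproduced here.

```python
import math
from collections import defaultdict


def class_centers(embeddings, labels):
    """Return {label: mean embedding} for every label (hypothetical helper)."""
    sums = {}
    counts = defaultdict(int)
    for x, y in zip(embeddings, labels):
        if y not in sums:
            sums[y] = [0.0] * len(x)
        # Accumulate the coordinates of every sample carrying label y.
        sums[y] = [s + v for s, v in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def ncc_accuracy(embeddings, labels):
    """Fraction of samples whose nearest class center (Euclidean) is their own class's center."""
    centers = class_centers(embeddings, labels)
    correct = 0
    for x, y in zip(embeddings, labels):
        # Assumption: plain Euclidean distance on the raw (unnormalized) embeddings.
        nearest = min(centers, key=lambda c: math.dist(x, centers[c]))
        correct += int(nearest == y)
    return correct / len(labels)


if __name__ == "__main__":
    emb = [[0.0, 0.1], [0.2, 0.0], [5.0, 5.1], [4.9, 5.0]]
    print(ncc_accuracy(emb, [0, 0, 1, 1]))  # 1.0: both groups are tight and well separated
```

For a sample-level measure, one could label each augmented view with the index of the image it came from; for the class-level measure, the semantic labels are used instead. The same function then answers both questions, which is what makes the two curves in the figure comparable.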

Reverse Engineering Self-Supervised Learning

Self-supervised learning (SSL) is a powerful tool in machine learning, but understanding the learned representations and their underlying mechanisms remains a challenge. This paper analyzes SSL representations, revealing that SSL training inherently clusters samples according to semantic labels and that this clustering is driven by the regularization term of the SSL objective. Unlike supervised learning, which relies on labeled data, SSL uses self-defined signals to establish a proxy objective: the model is pre-trained on this proxy objective and then fine-tuned on the supervised downstream task. We compare our Semantic Genesis against all publicly available pre-trained models, whether trained by self-supervision or full supervision, on six distinct target tasks covering both classification and segmentation in various medical modalities (i.e., CT, MRI, and X-ray).
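To make the split between the invariance term and the regularization term concrete for VICReg, the method named in Figure 1, here is a minimal pure-Python sketch of the VICReg objective as published by Bardes, Ponce and LeCun: a mean-squared-error invariance term between the two views' embeddings, plus variance and covariance terms that regularize each branch. The coefficients (25, 25, 1) are the defaults reported for VICReg to the best of our knowledge; the function names are hypothetical, and reading the variance and covariance parts as the "regularization term" discussed in the text is our assumption.

```python
import math


def _column_means(z):
    n = len(z)
    return [sum(row[j] for row in z) / n for j in range(len(z[0]))]


def covariance_matrix(z):
    """Unbiased (n - 1) covariance of the embedding dimensions of a batch z (n x d)."""
    n, d = len(z), len(z[0])
    mu = _column_means(z)
    centered = [[row[j] - mu[j] for j in range(d)] for row in z]
    return [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)] for i in range(d)]


def invariance_term(z_a, z_b):
    """Mean squared error between paired embeddings of the two views."""
    n, d = len(z_a), len(z_a[0])
    return sum((a - b) ** 2 for ra, rb in zip(z_a, z_b) for a, b in zip(ra, rb)) / (n * d)


def variance_term(z, gamma=1.0, eps=1e-4):
    """Hinge on each dimension's std: penalizes dimensions whose std falls below gamma."""
    cov = covariance_matrix(z)
    d = len(cov)
    return sum(max(0.0, gamma - math.sqrt(cov[j][j] + eps)) for j in range(d)) / d


def covariance_term(z):
    """Sum of squared off-diagonal covariances divided by d (decorrelates dimensions)."""
    cov = covariance_matrix(z)
    d = len(cov)
    return sum(cov[i][j] ** 2 for i in range(d) for j in range(d) if i != j) / d


def vicreg_loss(z_a, z_b, lam=25.0, mu=25.0, nu=1.0):
    """Return (total, invariance, regularization); coefficient defaults are an assumption."""
    inv = invariance_term(z_a, z_b)
    reg = mu * (variance_term(z_a) + variance_term(z_b)) + nu * (
        covariance_term(z_a) + covariance_term(z_b)
    )
    return lam * inv + reg, inv, reg


if __name__ == "__main__":
    collapsed = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
    total, inv, reg = vicreg_loss(collapsed, collapsed)
    print(inv, reg)  # inv is 0.0; reg is about 49.5, all from the variance hinge
```

In the collapsed toy batch the two views agree perfectly, so the invariance term is zero and only the regularization part penalizes the solution. This illustrates why the variance and covariance terms, rather than the invariance term, are what spread embeddings apart.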