Figure 1 From Extracting Memory Level Parallelism Through

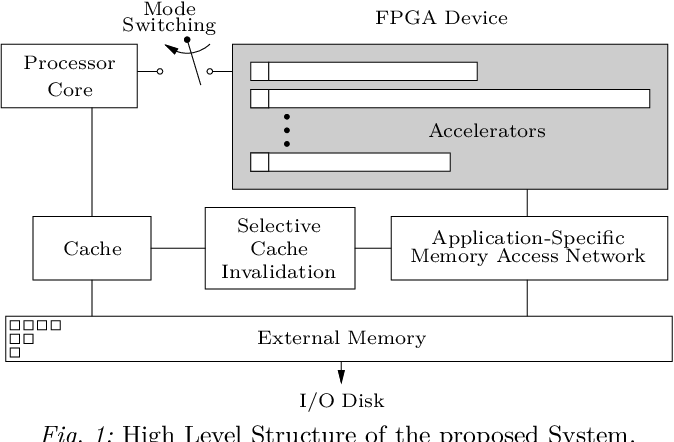

This paper proposes a new FPGA-based embedded computer architecture that focuses on how to construct an application-specific memory access network capable of extracting the maximum amount of memory-level parallelism on a per-application basis.

Memory Level Parallelism Semantic Scholar

To study parallel numerical algorithms, we will first aim to establish a basic understanding of parallel computers and formulate a theoretical model for the scalability of parallel algorithms.

Thread-level parallelism raises several problems when instructions from multiple threads execute at the same time. The instructions in each thread might use the same register names, and each thread has its own program counter. Virtual memory management allows multiple threads to execute and share main memory. A further question is when to switch between different threads.

In this paper, we show that exploiting memory-level parallelism (MLP) is an effective approach for improving the performance of these applications and that microarchitecture has a profound impact on achievable MLP.

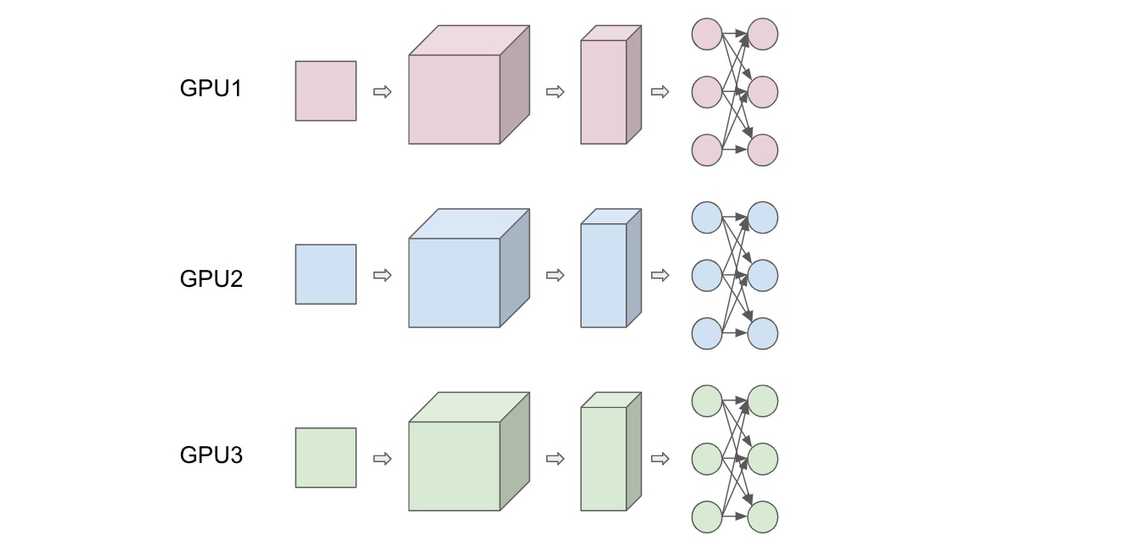

The Combination Of Thread Level Parallelism And Data Level Parallelism

In computer architecture, memory-level parallelism (MLP) is the ability to have multiple memory operations pending at the same time, in particular cache misses or translation lookaside buffer (TLB) misses. In a single processor, MLP may be considered a form of instruction-level parallelism (ILP).

This collaboration is enabled by a shared memory model in which the same address in memory points to the same data on all the cores on a chip. This lets the cores reference, read, and write the same data just as a single-core CPU would.

Abstract: This paper presents a comprehensive survey of techniques for modeling and improving memory-level parallelism (MLP), an increasingly important factor in enhancing modern processor performance.

Modern out-of-order processors have increased capacity to exploit instruction-level parallelism (ILP) and memory-level parallelism (MLP), e.g., by using wide superscalar pipelines and vector execution units, as well as deep buffers for in-flight memory requests.
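To make the definition of MLP as "multiple pending memory operations" concrete, the following is a minimal sketch of one common way to quantify it: the average number of misses outstanding over the cycles in which at least one miss is outstanding. This metric is an assumption brought in for illustration and is not stated on this page. The function name `average_mlp`, the issue cycles, and the 100-cycle miss latency are all hypothetical.

```python
def average_mlp(misses):
    """Average number of outstanding misses while at least one is outstanding.

    `misses` is a list of (issue_cycle, latency) pairs. This is a toy model
    (hypothetical, for illustration), not a measurement of real hardware.
    """
    events = []
    for issue, latency in misses:
        events.append((issue, +1))            # miss becomes outstanding
        events.append((issue + latency, -1))  # miss data returns
    events.sort()

    outstanding = 0   # misses in flight right now
    busy_cycles = 0   # cycles with at least one miss in flight
    miss_cycles = 0   # sum over busy cycles of misses in flight
    prev = None
    for cycle, delta in events:
        if prev is not None and outstanding > 0:
            span = cycle - prev
            busy_cycles += span
            miss_cycles += span * outstanding
        outstanding += delta
        prev = cycle
    return miss_cycles / busy_cycles if busy_cycles else 0.0


# Dependent misses (e.g. pointer chasing): each waits for the previous one.
serial = [(0, 100), (100, 100), (200, 100)]
# Independent misses issued together: all four overlap completely.
overlapped = [(0, 100)] * 4

print(average_mlp(serial))      # 1.0
print(average_mlp(overlapped))  # 4.0
```

In this toy model the serial case takes 300 cycles to service three misses, while the overlapped case services four misses in 100 cycles, which is why deeper buffers for in-flight requests can matter for memory-bound code.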
