Feature Request: Implement Stable Video Diffusion Model (SVD)

How to Generate SVD Video with SD Forge (Stable Diffusion Art)

Yes, this is one of the most requested features right now. It has been one month, and I see no progress. We have the right to show interest, but not the right to demand that the developers do it.

In this paper, we identify and evaluate three different stages for successful training of video LDMs: text-to-image pretraining, video pretraining, and high-quality video finetuning.

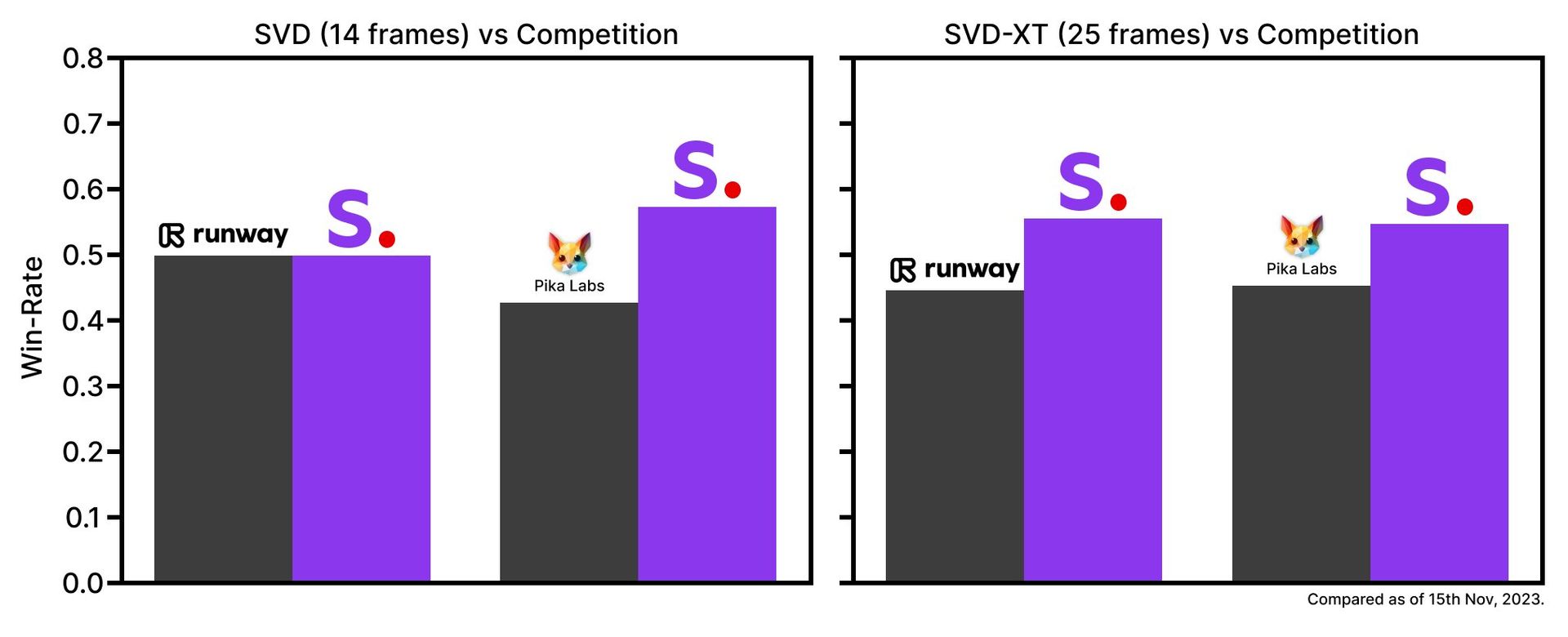

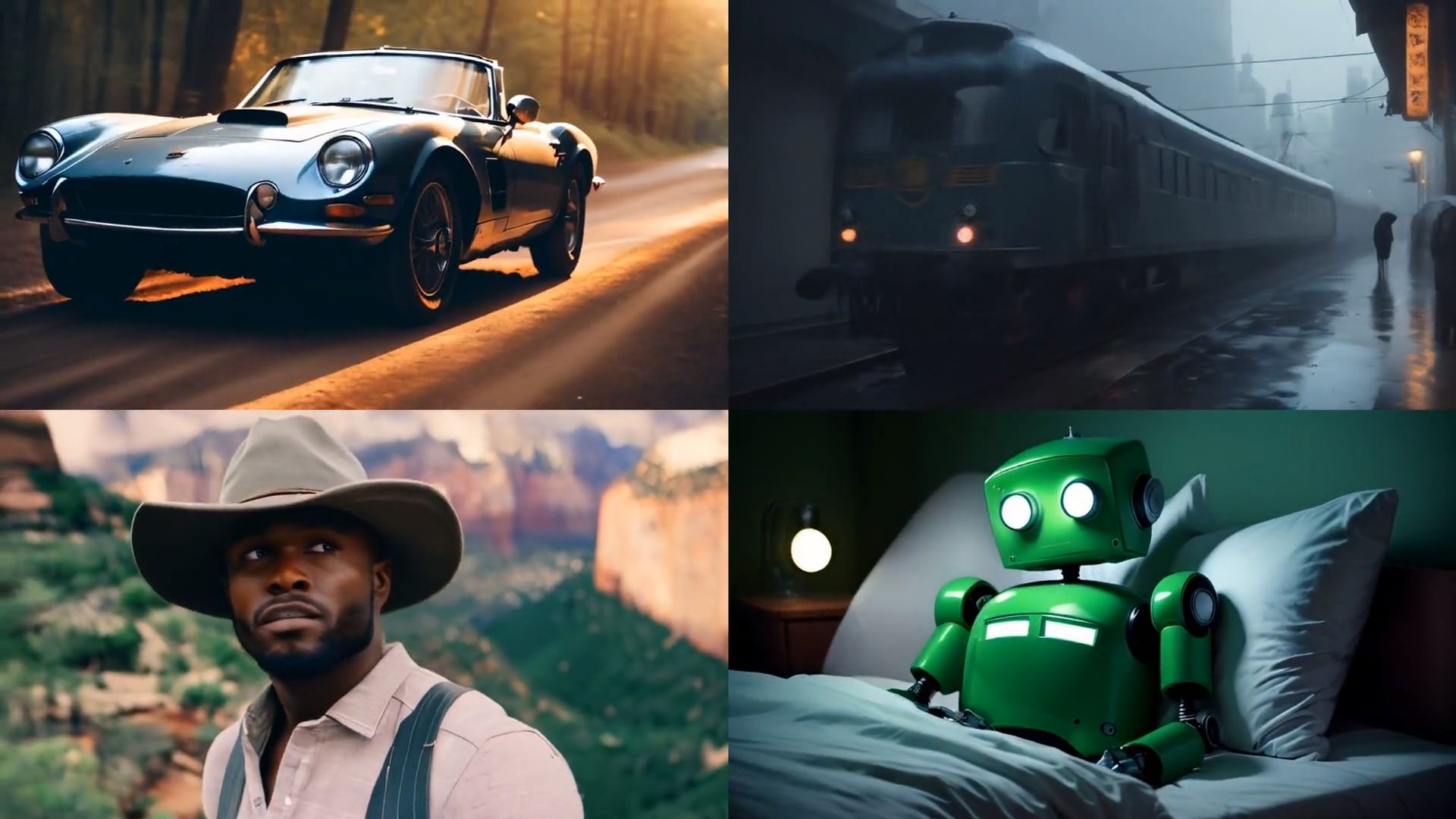

Stable Video Diffusion (SVD) Transforms Static Images to Dynamic Shorts

Stable Video Diffusion (SVD) is a generative diffusion model that uses a single image as a conditioning frame to synthesize a video sequence. The extended SVD-XT checkpoint was trained to generate 25 frames at a resolution of 576x1024 given a context frame of the same size, and was fine-tuned from the 14-frame SVD image-to-video model. Complete API documentation for Stable Video Diffusion, with code examples in Python, JavaScript, and cURL, is available for integrating the model into applications through Segmind's serverless API.

Our video model can be easily adapted to various downstream tasks, including multi-view synthesis from a single image with finetuning on multi-view datasets. We are planning a variety of models that build on and extend this base, similar to the ecosystem that has built up around Stable Diffusion.
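Because SVD conditions on a context frame of the same size as its output, an arbitrary input image first has to be brought to 1024x576. The sketch below is a minimal illustration, assuming that a center crop to the target aspect ratio followed by a resize is acceptable for your image. `SVDVariant`, `VARIANTS`, and `center_crop_box` are hypothetical names, not part of any SVD release; the frame counts are the ones stated in the text (14 for SVD, 25 for the SVD-XT checkpoint).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SVDVariant:
    """Frame count and output resolution stated for a released SVD checkpoint."""
    name: str
    num_frames: int
    height: int = 576
    width: int = 1024


# Hypothetical lookup table; values taken from the text, not from a library.
VARIANTS = {
    "svd": SVDVariant("svd", num_frames=14),
    "svd-xt": SVDVariant("svd-xt", num_frames=25),
}


def center_crop_box(src_w: int, src_h: int, dst_w: int, dst_h: int) -> tuple[int, int, int, int]:
    """Largest centered box in the source image that has the target aspect ratio.

    Returns (left, top, right, bottom) in source pixels.
    """
    target_ratio = dst_w / dst_h
    if src_w / src_h > target_ratio:
        # Source is too wide: keep the full height and trim the sides.
        crop_w = round(src_h * target_ratio)
        left = (src_w - crop_w) // 2
        return (left, 0, left + crop_w, src_h)
    # Source is too tall (or already matches): keep the full width, trim top and bottom.
    crop_h = round(src_w / target_ratio)
    top = (src_h - crop_h) // 2
    return (0, top, src_w, top + crop_h)


variant = VARIANTS["svd-xt"]
print(center_crop_box(1024, 1024, variant.width, variant.height))
```

With Pillow, the returned box can be passed directly to `Image.crop`, and the result resized with `Image.resize((1024, 576))` before it is used as the conditioning frame.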

Recently, latent diffusion models trained for 2D image synthesis have been turned into generative video models by inserting temporal layers and finetuning them on small, high-quality video datasets. We are releasing Stable Video Diffusion, an image-to-video model, for research purposes. SVD: this model was trained to generate 14 frames at a resolution of 576x1024 given a context frame of the same size.

I have actually tested using the special VAE decoder from the SVD model with AnimateDiff outputs, and it improves output quality quite a bit, removing a lot of noise.
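Inserting temporal layers into an image model works because the same latent tensor can be viewed two ways: as a batch of independent frames for the pretrained spatial layers, and as per-pixel sequences over time for the new temporal layers. The NumPy sketch below shows only that reshaping. It assumes a `(batch, frames, channels, height, width)` layout and a scalar blend `alpha` between the spatial and temporal paths, an assumption modeled on Video LDM-style mixing. The three-frame moving average in the hypothetical `temporal_mix` function is a stand-in for a trained temporal convolution or attention layer, not SVD's actual layer.

```python
import numpy as np


def spatial_view(x: np.ndarray) -> np.ndarray:
    """(B, T, C, H, W) -> (B*T, C, H, W): every frame is treated as a separate image."""
    b, t, c, h, w = x.shape
    return x.reshape(b * t, c, h, w)


def temporal_view(x: np.ndarray) -> np.ndarray:
    """(B, T, C, H, W) -> (B*H*W, T, C): every pixel location becomes a sequence over time."""
    b, t, c, h, w = x.shape
    return x.transpose(0, 3, 4, 1, 2).reshape(b * h * w, t, c)


def from_temporal_view(y: np.ndarray, b: int, h: int, w: int) -> np.ndarray:
    """Inverse of temporal_view: (B*H*W, T, C) -> (B, T, C, H, W)."""
    _, t, c = y.shape
    return y.reshape(b, h, w, t, c).transpose(0, 3, 4, 1, 2)


def temporal_mix(x: np.ndarray, alpha: float) -> np.ndarray:
    """Blend the input with a toy temporal layer (hypothetical 3-frame moving average)."""
    b, _, _, h, w = x.shape
    seq = temporal_view(x)
    # Replicate the first and last frames so every frame has two neighbours.
    padded = np.concatenate([seq[:, :1], seq, seq[:, -1:]], axis=1)
    smoothed = (padded[:, :-2] + padded[:, 1:-1] + padded[:, 2:]) / 3.0
    z_temporal = from_temporal_view(smoothed, b, h, w)
    # alpha = 1.0 keeps the purely per-frame (spatial) result.
    return alpha * x + (1.0 - alpha) * z_temporal


latents = np.random.default_rng(0).normal(size=(1, 14, 4, 8, 8))
print(spatial_view(latents).shape, temporal_view(latents).shape)
```

With `alpha = 1.0` the temporal path contributes nothing and each frame passes through unchanged, which matches the per-frame behaviour of the original image model.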

Stable Video Diffusion Tutorial: Mastering SVD in Forge UI
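Outside Forge, the released checkpoints can also be run from Python. The sketch below assumes the Hugging Face `diffusers` library with its `StableVideoDiffusionPipeline`, the `stabilityai/stable-video-diffusion-img2vid-xt` checkpoint, and a CUDA-capable GPU. The function names `generate_svd_xt` and `decode_chunks` are hypothetical. The heavy imports sit inside the function so the file loads without torch installed. `decode_chunks` illustrates how a `decode_chunk_size` setting splits the frames into separate VAE decoding passes.

```python
def decode_chunks(num_frames: int, chunk_size: int) -> list[tuple[int, int]]:
    """Frame ranges [start, end) handled per VAE decoding pass for a given chunk size."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be >= 1")
    return [(s, min(s + chunk_size, num_frames)) for s in range(0, num_frames, chunk_size)]


def generate_svd_xt(image_path: str, out_path: str = "generated.mp4", seed: int = 42) -> None:
    """Image-to-video with the SVD-XT checkpoint via diffusers (assumed setup, see lead-in)."""
    import torch
    from diffusers import StableVideoDiffusionPipeline
    from diffusers.utils import export_to_video, load_image

    pipe = StableVideoDiffusionPipeline.from_pretrained(
        "stabilityai/stable-video-diffusion-img2vid-xt",
        torch_dtype=torch.float16,
        variant="fp16",
    )
    pipe.enable_model_cpu_offload()  # lowers VRAM use by offloading idle submodules

    # SVD expects a 1024x576 context frame, matching its output size.
    image = load_image(image_path).resize((1024, 576))
    generator = torch.manual_seed(seed)
    # decode_chunk_size sets how many frames the VAE decodes at once.
    frames = pipe(image, decode_chunk_size=8, generator=generator).frames[0]
    export_to_video(frames, out_path, fps=7)


print(decode_chunks(25, 8))
```

Smaller `decode_chunk_size` values lower peak memory during decoding; the diffusers documentation notes that larger values give better temporal consistency between frames.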
