Fantastic KL Divergence and How to Actually Compute It

KL Divergence Made Easy

Kullback–Leibler (KL) divergence measures how one probability distribution differs from a second, reference distribution. But where does that come from? In this video, we provide an overview of KL divergence.
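To make the definition concrete: for discrete distributions P and Q over the same outcomes, KL(P ‖ Q) = Σ_x P(x) log(P(x) / Q(x)). With the natural log the result is in nats. It is zero exactly when P and Q are identical, and it becomes infinite if Q assigns zero probability to an outcome that P can produce. Here is a minimal plain-Python sketch, assuming both distributions arrive as equal-length lists of probabilities; `kl_divergence` is our own illustrative helper, not a library function.

```python
import math

def kl_divergence(p, q):
    """Discrete KL(P || Q) in nats.

    p, q: equal-length sequences of probabilities, each summing to 1.
    Terms with p[i] == 0 contribute 0 (by the convention 0 * log 0 = 0).
    If q[i] == 0 while p[i] > 0, the divergence is infinite.
    """
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # 0 * log(0 / q) is taken to be 0
        if qi == 0.0:
            return math.inf  # P puts mass where Q puts none
        total += pi * math.log(pi / qi)
    return total

p = [0.5, 0.5]
q = [0.9, 0.1]
print(kl_divergence(p, p))  # 0.0: identical distributions
print(kl_divergence(p, q))  # ≈ 0.5108, i.e. 0.5 * ln(25 / 9)
```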

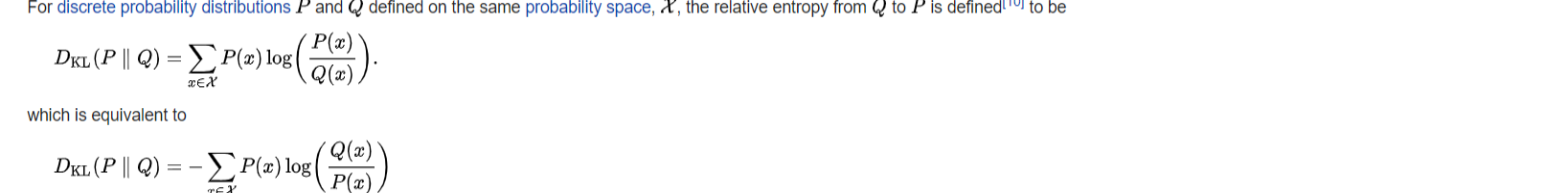

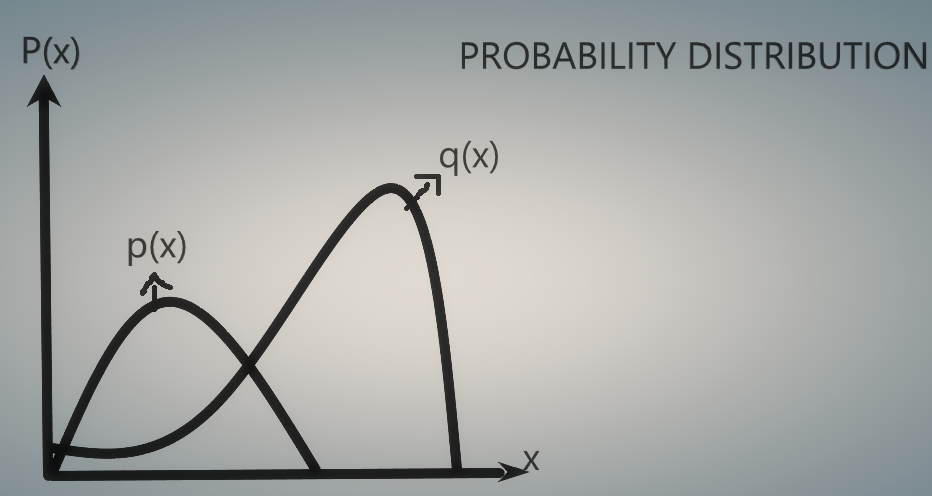

KL Divergence

KL divergence is one of the most common and essential tools in machine learning. PyTorch offers robust tools for computing it, which makes it accessible for a wide range of applications in deep learning and beyond. By understanding the different methods PyTorch provides and when each is appropriate, practitioners can use KL divergence effectively in their models.

KL divergence is not symmetric: in general, KL(P ‖ Q) ≠ KL(Q ‖ P). For this reason it is sometimes described as the divergence of P from Q, or as the divergence from Q to P. This reflects the asymmetry in Bayesian inference, which starts from a prior distribution Q and updates to the posterior P.

We have covered the fundamental concepts of KL divergence, how to compute it with PyTorch's built-in functions, common applications such as variational autoencoders (VAEs) and model evaluation, and best practices for handling numerical stability and choosing the right reduction method.
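A common stumbling block with PyTorch's built-in `torch.nn.functional.kl_div` is its argument convention: the first argument (`input`) must be the model's log-probabilities log Q, the second (`target`) holds the probabilities P (or log-probabilities if `log_target=True`), and the result is KL(P ‖ Q). The `reduction` argument matters too: `'batchmean'` divides the summed divergence by the batch size, which gives the mathematically correct per-sample KL, whereas the default `'mean'` divides by the total number of elements. So that the example runs without PyTorch installed, the sketch below re-implements that convention in plain Python; `kl_div_like_torch` and `log_softmax` are hypothetical helpers written for illustration, not PyTorch code. The log-softmax uses the log-sum-exp trick, one standard way to keep log-probabilities numerically stable.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax using the log-sum-exp trick."""
    m = max(logits)  # shifting by the max keeps exp() from overflowing
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum_exp for x in logits]

def kl_div_like_torch(log_q_batch, p_batch, reduction="batchmean"):
    """Mirror torch.nn.functional.kl_div(input=log Q, target=P) with log_target=False.

    Pointwise term: p * (log p - log q); entries with p == 0 contribute 0.
    """
    pointwise = [
        [p * (math.log(p) - lq) if p > 0 else 0.0 for p, lq in zip(ps, lqs)]
        for ps, lqs in zip(p_batch, log_q_batch)
    ]
    total = sum(sum(row) for row in pointwise)
    if reduction == "sum":
        return total
    if reduction == "batchmean":
        return total / len(pointwise)  # per-sample KL, the mathematically correct value
    if reduction == "mean":
        n_elements = sum(len(row) for row in pointwise)
        return total / n_elements  # averages over every element, not the true KL
    raise ValueError(f"unsupported reduction: {reduction!r}")

# A batch of two samples over three classes (made-up numbers).
logits = [[2.0, 1.0, 0.1], [0.5, 0.5, 3.0]]
targets = [[0.7, 0.2, 0.1], [0.1, 0.1, 0.8]]
log_q = [log_softmax(row) for row in logits]
print(kl_div_like_torch(log_q, targets, "batchmean"))
print(kl_div_like_torch(log_q, targets, "mean"))  # one third of batchmean here (3 classes)
```

With PyTorch available, the corresponding call would be `F.kl_div(F.log_softmax(logits, dim=-1), targets, reduction='batchmean')`, where `F` is `torch.nn.functional` and `logits` and `targets` are tensors.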

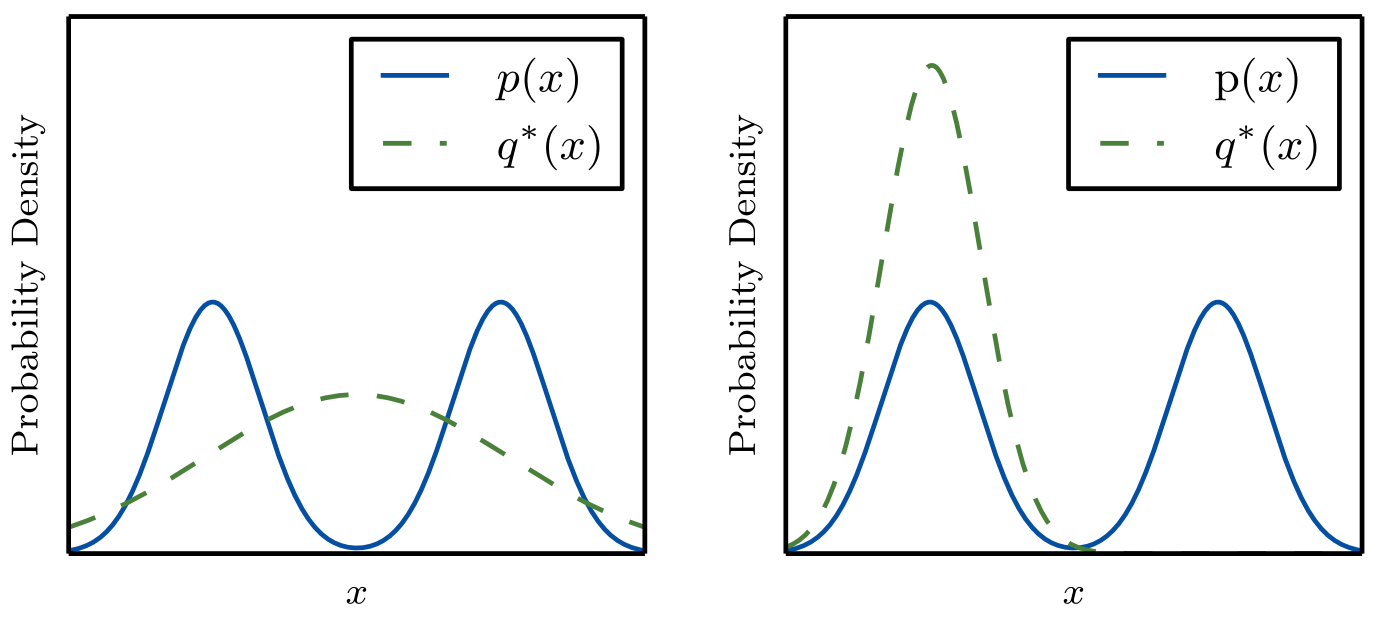

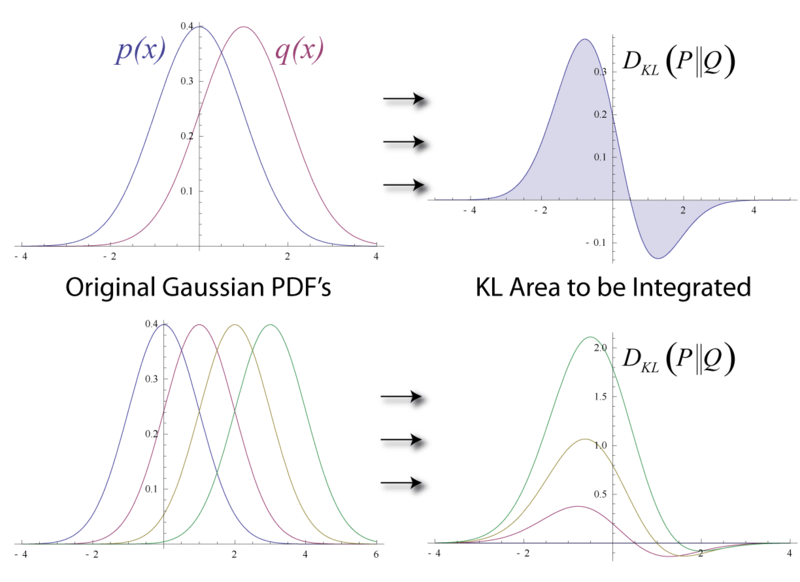

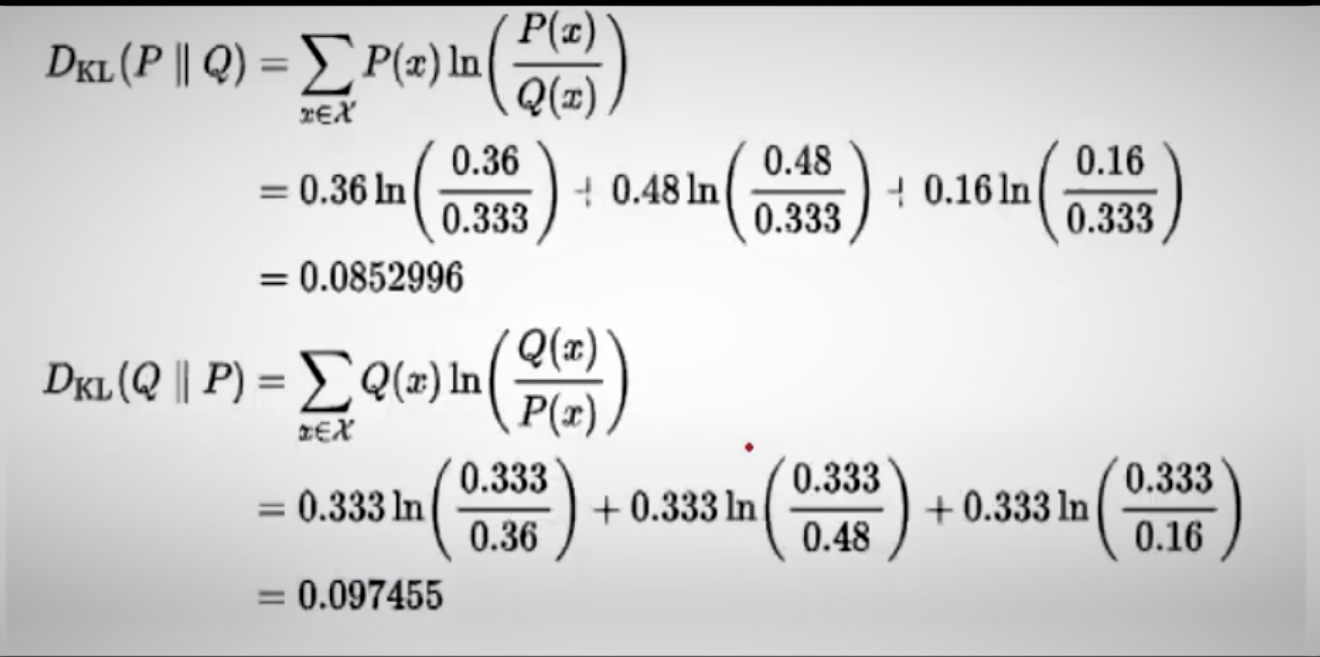
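For VAEs, the KL term between the encoder's diagonal Gaussian N(μ, diag(σ²)) and a standard normal prior N(0, I) has a closed form: KL = ½ Σ_j (σ_j² + μ_j² − 1 − log σ_j²). The sketch below assumes the encoder outputs a log-variance `log_var` rather than σ directly, a common parameterization that keeps the variance positive, and it cross-checks the closed form against a Monte Carlo estimate of E_q[log q(z) − log p(z)]. The function names are illustrative, not part of any library.

```python
import math
import random

def gaussian_kl_to_standard_normal(mu, log_var):
    """Closed-form KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over dimensions."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, log_var))

def monte_carlo_kl(mu, log_var, n_samples=100_000, seed=0):
    """Estimate the same KL as the average of log q(z) - log p(z) over samples z ~ q."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        for m, lv in zip(mu, log_var):
            sigma = math.exp(0.5 * lv)
            z = rng.gauss(m, sigma)
            log_q = -0.5 * (math.log(2 * math.pi) + lv + ((z - m) / sigma) ** 2)
            log_p = -0.5 * (math.log(2 * math.pi) + z * z)
            total += log_q - log_p
    return total / n_samples

mu = [0.5, -1.0]
log_var = [0.0, math.log(0.25)]  # variances 1.0 and 0.25
print(gaussian_kl_to_standard_normal(mu, log_var))  # ≈ 0.9431
print(monte_carlo_kl(mu, log_var))  # should land close to the closed form
```

In a real VAE the closed form is preferred because it is exact and differentiable; the sampling estimate is included only as a sanity check.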

KL Divergence (Relative Entropy)

This guide goes straight to the heart of applying KL divergence in PyTorch with practical, hands-on code. We defined KL divergence and showed that maximum likelihood estimation (MLE) implicitly minimizes the forward KL divergence from the data distribution to the model, and we saw that the two directions of KL (forward and reverse) produce fundamentally different behavior. This blog also covers how to calculate KL divergence, how it works in practice, and when it should and should not be used to monitor for drift.

We establish a rigorous upper bound on the Kullback–Leibler (KL) divergence between the true data distribution and the distribution estimated by flow matching, expressed in terms of the L2 flow matching training loss.
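The MLE connection rests on one identity: the cross-entropy H(P, Q) = −Σ_x P(x) log Q(x) equals H(P) + KL(P ‖ Q). When P is the data distribution, H(P) does not depend on the model, so minimizing the average negative log-likelihood (the cross-entropy against the empirical distribution) is the same as minimizing the forward KL(P ‖ Q). The sketch below checks that identity numerically and then uses a toy bimodal P to show why the two directions behave differently; all distributions here are made-up illustrations.

```python
import math

def cross_entropy(p, q):
    """H(P, Q) = -sum_x P(x) log Q(x), in nats."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf  # Q rules out an outcome that P can produce
            total -= pi * math.log(qi)
    return total

def entropy(p):
    """H(P) is the cross-entropy of P with itself."""
    return cross_entropy(p, p)

def kl(p, q):
    """KL(P || Q) = sum_x P(x) log(P(x) / Q(x)), computed directly (not via the identity)."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf
            total += pi * math.log(pi / qi)
    return total

# 1) MLE view: P is fixed by the data, so H(P) is the same for every model Q,
#    and cross-entropy and KL(P || Q) differ only by that constant.
p_data = [0.5, 0.3, 0.2]
models = {"q_a": [0.4, 0.4, 0.2], "q_b": [0.6, 0.2, 0.2]}
for name, q in models.items():
    ce = cross_entropy(p_data, q)
    print(name, "H(P,Q) =", round(ce, 4), "H(P) + KL(P||Q) =", round(entropy(p_data) + kl(p_data, q), 4))

# 2) Asymmetry: P has two modes with an empty gap between them.
p = [0.5, 0.0, 0.5]
q_cover = [0.4, 0.2, 0.4]  # covers both modes but leaks mass into the gap
q_mode = [1.0, 0.0, 0.0]   # collapses onto a single mode
print("forward KL(P||Q):", kl(p, q_cover), kl(p, q_mode))  # finite, inf
print("reverse KL(Q||P):", kl(q_cover, p), kl(q_mode, p))  # inf, finite
```

The toy case shows the classic pattern: forward KL(P ‖ Q) is infinite for any Q that misses a mode of P, so it favors mass-covering fits, while reverse KL(Q ‖ P) is infinite for any Q that puts mass where P has none, so it favors locking onto a single mode.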

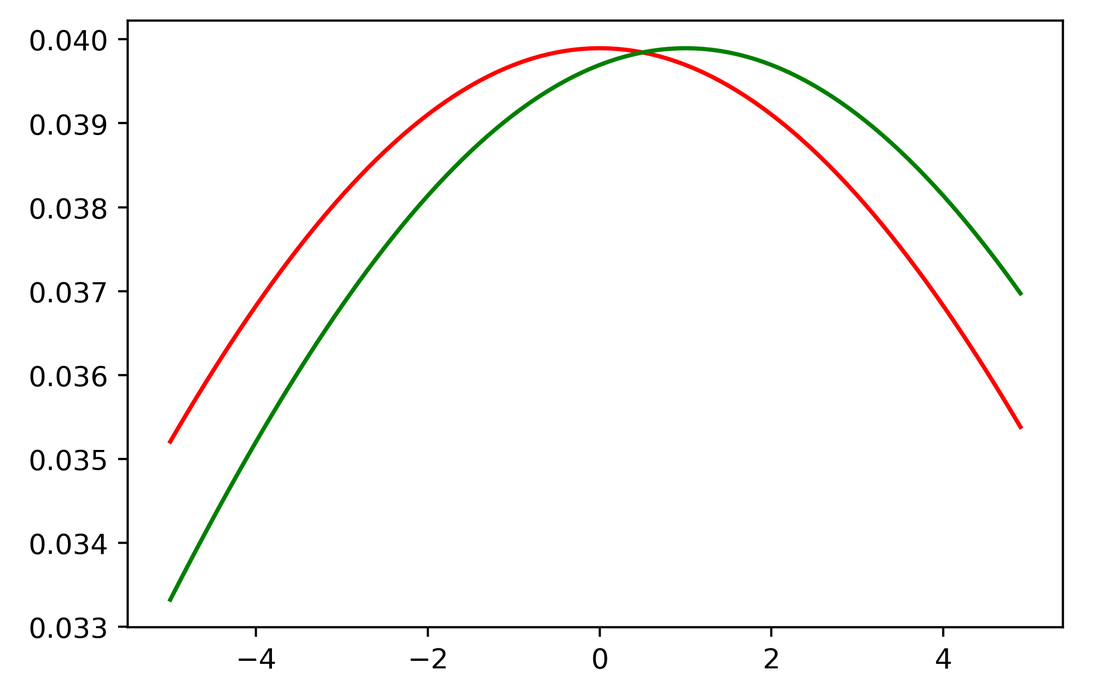
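For drift monitoring, one common recipe is to bin a reference sample (for example, training data) and a production sample into the same histogram bins and compute KL between the two binned distributions. Empty bins would make the divergence infinite, so the sketch below adds a pseudo-count to every bin. The bin edges, the pseudo-count of 0.5, the direction KL(production ‖ reference), and the sample values are all assumptions chosen for illustration, and the helper names are hypothetical. The result is sensitive to each of these choices, so fix them once and compare values over time rather than reading a single number in isolation.

```python
import bisect
import math

def histogram(values, edges):
    """Count values into bins [edges[i], edges[i+1]); the last bin also includes edges[-1]."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        if v < edges[0] or v > edges[-1]:
            continue  # this sketch simply drops out-of-range values
        i = min(bisect.bisect_right(edges, v) - 1, len(counts) - 1)
        counts[i] += 1
    return counts

def smoothed_distribution(counts, pseudo_count=0.5):
    """Normalize counts to probabilities after adding pseudo_count to every bin."""
    total = sum(counts) + pseudo_count * len(counts)
    return [(c + pseudo_count) / total for c in counts]

def kl(p, q):
    """KL(P || Q); every bin is positive after smoothing, so the log is always defined."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Synthetic example data, made up for illustration.
edges = [0, 1, 2, 3, 4, 5]
reference = [0.5, 1.2, 1.7, 2.1, 2.4, 2.9, 3.3, 1.5, 2.6, 0.8]
production = [2.2, 3.1, 3.4, 3.9, 4.2, 4.5, 2.8, 3.6, 4.8, 3.3]

p_ref = smoothed_distribution(histogram(reference, edges))
q_prod = smoothed_distribution(histogram(production, edges))
print("KL(production || reference):", kl(q_prod, p_ref))
print("KL(reference || reference):", kl(p_ref, p_ref))  # 0.0: no drift against itself
```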
