Exponential Linear Unit (ELU)

The exponential linear unit (ELU) is an activation function applied element-wise, following the method described in the paper "Fast and Accurate Deep Network Learning by Exponential Linear Units (ELUs)." It modifies the negative part of ReLU by applying an exponential curve. Instead of clamping negative inputs to zero, it allows small negative outputs, which improves learning dynamics.
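A minimal sketch of the element-wise definition, assuming the standard form from the ELU paper: ELU(x) = x for x > 0 and α(eˣ − 1) for x ≤ 0, with α = 1.0 as the default (the same default PyTorch's `torch.nn.ELU` uses). The helper names `elu` and `elu_elementwise` are illustrative and not taken from any library.

```python
import math
from typing import Iterable, List


def elu(x: float, alpha: float = 1.0) -> float:
    """ELU for a single value: x if x > 0, else alpha * (exp(x) - 1)."""
    if x > 0:
        return x
    # math.expm1 computes exp(x) - 1 accurately for x close to zero
    return alpha * math.expm1(x)


def elu_elementwise(values: Iterable[float], alpha: float = 1.0) -> List[float]:
    """Apply ELU independently to every element, as an activation layer would."""
    return [elu(v, alpha) for v in values]


if __name__ == "__main__":
    print(elu_elementwise([-3.0, -1.0, 0.0, 0.5, 2.0]))
```

Positive inputs pass through unchanged, while negative inputs map to values between −α and 0 rather than to exactly zero as in ReLU.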

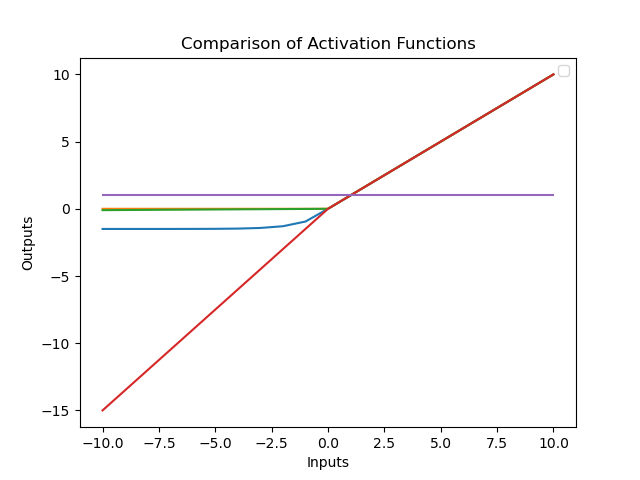

Like rectified linear units (ReLUs), leaky ReLUs (LReLUs) and parametrized ReLUs (PReLUs), ELUs alleviate the vanishing gradient problem via the identity for positive values. However, ELUs have improved learning characteristics compared to units with these other activation functions. ELUs have negative values, which push the mean of the activations closer to zero. Mean activations closer to zero enable faster learning because they bring the gradient closer to the natural gradient. ELUs saturate to a negative value (−α) as the argument becomes more negative.

In this article, we cover the internal workings of ReLU (rectified linear unit) and ELU (exponential linear unit), their strengths and weaknesses compared to each other, their implementation in major frameworks, and some guidelines for when to use each. ELU was designed as an improvement on ReLU, and we explore it in depth along with pseudocode.
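To make the mean-shift and saturation behavior described above concrete, here is a small sketch on a hypothetical set of pre-activations symmetric around zero. It only illustrates the shape of the outputs; it does not demonstrate the faster learning claimed in the paper. The input values and helper names are assumptions chosen for illustration.

```python
import math
from statistics import mean


def relu(x: float) -> float:
    # Negative inputs are clamped to zero
    return max(0.0, x)


def elu(x: float, alpha: float = 1.0) -> float:
    # Identity for positive inputs; exponential curve saturating at -alpha otherwise
    return x if x > 0 else alpha * math.expm1(x)


# Hypothetical pre-activations, symmetric around zero
inputs = [-2.0, -1.0, 0.0, 1.0, 2.0]

relu_mean = mean(relu(x) for x in inputs)
elu_mean = mean(elu(x) for x in inputs)

print(f"ReLU mean activation: {relu_mean:.4f}")
print(f"ELU mean activation:  {elu_mean:.4f}")

# For very negative inputs ELU approaches -alpha instead of growing without bound
for x in [-1.0, -5.0, -20.0]:
    print(x, elu(x))
```

On these inputs the ReLU mean is 0.6 and the ELU mean is about 0.30: the negative outputs pull the average toward zero, and the last loop shows ELU levelling off just above −1 (that is, −α).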

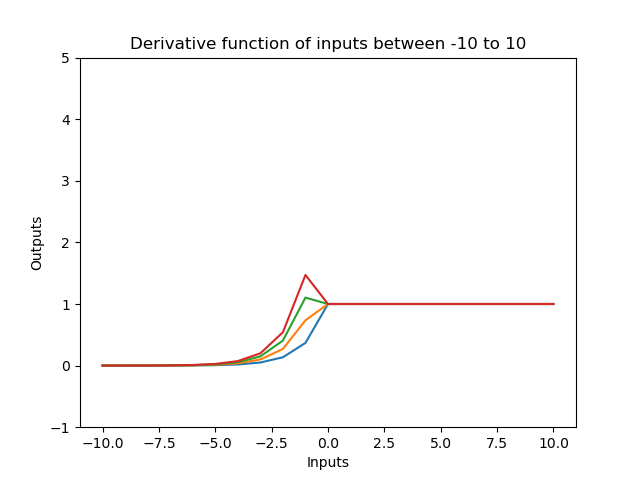

The paper's abstract introduces the ELU as a unit that "speeds up learning in deep neural networks and leads to higher classification accuracies." ELU is commonly used in deep neural networks; it was introduced as an alternative to the rectified linear unit (ReLU) and addresses some of its limitations.

ELU introduces a smooth exponential curve in the negative region, creating a gradual transition at zero. Instead of an abrupt change in slope, the function bends smoothly into negative values. With the default α = 1 its first derivative is continuous at zero, which gives more stable gradient flow.

ELU is available in PyTorch as `torch.nn.ELU` (functional form `torch.nn.functional.elu`). Like ReLU, it mitigates the vanishing gradient problem through the identity for positive inputs, and unlike ReLU it keeps a nonzero gradient for negative inputs.
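The smooth-transition claim can be checked by comparing slopes just left and right of zero. The sketch below reimplements the formula in plain Python (rather than calling PyTorch) so it runs without extra dependencies; `elu_grad` and `relu_grad` are hypothetical helpers, and ReLU's slope at exactly zero is taken as 0 here by convention.

```python
import math


def elu(x: float, alpha: float = 1.0) -> float:
    return x if x > 0 else alpha * math.expm1(x)


def elu_grad(x: float, alpha: float = 1.0) -> float:
    # d/dx ELU: 1 for x > 0, alpha * exp(x) (equal to ELU(x) + alpha) for x <= 0
    return 1.0 if x > 0 else alpha * math.exp(x)


def relu_grad(x: float) -> float:
    # ReLU's slope jumps from 0 to 1 at zero
    return 1.0 if x > 0 else 0.0


eps = 1e-9
for alpha in (1.0, 0.5):
    left, right = elu_grad(-eps, alpha), elu_grad(eps, alpha)
    print(f"ELU alpha={alpha}: slope left of 0 = {left:.6f}, right of 0 = {right:.6f}")

print(f"ReLU: slope left of 0 = {relu_grad(-eps)}, right of 0 = {relu_grad(eps)}")

# ELU keeps a nonzero gradient for negative inputs; ReLU does not
print(elu_grad(-3.0), relu_grad(-3.0))
```

With α = 1 both one-sided slopes are 1, so the derivative is continuous at zero; with α = 0.5 the left slope is 0.5, so the kink reappears. ReLU's slope jumps from 0 to 1 and stays at 0 for all negative inputs.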
