Exploring Neural Radiance Fields For 3D Scene Synthesis

NeRF: Representing Scenes As Neural Radiance Fields For View Synthesis

Neural radiance fields (NeRFs) are a deep learning technique that represents a 3D scene as a neural network mapping a 3D position and viewing direction to a volume density and an emitted color; volume rendering then turns that field into photorealistic images from new camera viewpoints. Discover the core concepts behind NeRF novel view synthesis, learn about cutting-edge variations, and explore their applications along with a code example. One recent survey systematically analyzes over 200 papers on dynamic scene representation with radiance fields, spanning the spectrum from implicit neural representations to explicit Gaussian primitives.
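
The core of NeRF rendering is a numerical approximation of the volume rendering integral along each camera ray: the pixel color is C = sum_i T_i * (1 - exp(-sigma_i * delta_i)) * c_i, where T_i = exp(-sum_{j<i} sigma_j * delta_j) is the transmittance (how much light survives to sample i). The minimal NumPy sketch below assumes a hypothetical `toy_field` function (a solid red sphere) in place of a trained network; `render_ray` and its parameters are illustrative names, not part of any NeRF library.

```python
import numpy as np


def toy_field(points, view_dir):
    """Hypothetical stand-in for a trained NeRF network.

    Returns a volume density sigma and an RGB color for each 3D point. Here the
    "scene" is a solid red sphere of radius 0.5 at the origin, and the viewing
    direction is ignored; a real NeRF would query an MLP with both inputs.
    """
    dist = np.linalg.norm(points, axis=-1)
    sigma = np.where(dist < 0.5, 10.0, 0.0)                # density inside the sphere
    rgb = np.tile(np.array([1.0, 0.0, 0.0]), (points.shape[0], 1))
    return sigma, rgb


def render_ray(origin, direction, near, far, n_samples=64, field=toy_field):
    """Approximate the volume rendering integral along one ray by quadrature.

    `direction` is assumed to be a unit vector, so t is distance along the ray.
    """
    t = np.linspace(near, far, n_samples)                  # sample depths along the ray
    points = origin + t[:, None] * direction               # (n_samples, 3) positions
    sigma, rgb = field(points, direction)
    delta = np.append(np.diff(t), 1e10)                    # segment lengths; last one is "infinite"
    alpha = 1.0 - np.exp(-sigma * delta)                   # opacity of each segment
    # T_i = prod_{j<i} (1 - alpha_j) = exp(-sum_{j<i} sigma_j * delta_j)
    trans = np.cumprod(np.append(1.0, 1.0 - alpha[:-1]))
    weights = trans * alpha                                # contribution of each sample
    color = (weights[:, None] * rgb).sum(axis=0)
    depth = (weights * t).sum()                            # expected termination depth
    return color, depth, weights


# A ray from z = -2 looking down +z hits the sphere, so the pixel comes out red:
# color is close to [1, 0, 0] and depth lands just past the sphere surface at t = 1.5.
color, depth, _ = render_ray(np.array([0.0, 0.0, -2.0]), np.array([0.0, 0.0, 1.0]), 0.0, 4.0)
print(color, depth)
```

Practical NeRF implementations also apply a positional encoding to the network inputs and use stratified and hierarchical sampling instead of evenly spaced samples; both are left out here to keep the rendering step visible.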

NeRF-Art: Text-Driven Neural Radiance Fields Stylization

Reconstructing 3D scenes from blurred images is challenging, especially under fast motion. Event cameras complement standard RGB frames with microsecond-level temporal resolution and asynchronous outputs; to harness these advantages, one recent work introduces sk2 enerf. However, there is still room for improvement in non-rigid motion scenarios. This research offers a new approach to dynamic scene view synthesis and a promising direction for future studies. Its quantitative analysis was complemented by a qualitative evaluation of the neural radiance field mapping, focused on the fidelity and detail of the reconstructed 3D scenes.

Recent work on 3D reconstruction and rendering spotlights neural radiance fields (NeRF) and 3D Gaussian splatting as transformative methods: NeRF uses a neural network for high-fidelity scene reconstruction, while 3D Gaussian splatting represents the scene explicitly as a set of Gaussian primitives.
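
To make the contrast with NeRF concrete, here is a minimal sketch of how a 3D Gaussian splatting renderer might shade a single pixel: Gaussians that have already been projected to 2D are sorted by depth and alpha-blended front to back, so no network is queried along the ray. The function name, the 0.99 alpha clamp, and the early-termination threshold are illustrative assumptions; projecting the 3D Gaussians to screen space and view-dependent color are omitted.

```python
import numpy as np


def composite_gaussians(pixel, means2d, covs2d, opacities, colors, depths,
                        t_min=1e-4):
    """Shade one pixel by alpha-blending projected 2D Gaussians front to back.

    means2d: (N, 2) screen-space centers, covs2d: (N, 2, 2) screen-space
    covariances, opacities: (N,), colors: (N, 3), depths: (N,) camera depths.
    """
    color = np.zeros(3)
    transmittance = 1.0
    for i in np.argsort(depths):                           # nearest Gaussian first
        d = pixel - means2d[i]
        falloff = np.exp(-0.5 * d @ np.linalg.inv(covs2d[i]) @ d)
        alpha = min(0.99, opacities[i] * falloff)          # clamp (illustrative choice)
        color += transmittance * alpha * colors[i]
        transmittance *= 1.0 - alpha
        if transmittance < t_min:                          # pixel is effectively opaque
            break
    return color, transmittance


# Two half-transparent Gaussians centered on the pixel: red in front, blue behind.
pixel = np.array([10.0, 10.0])
means = np.array([[10.0, 10.0], [10.0, 10.0]])
covs = np.array([np.eye(2) * 4.0, np.eye(2) * 4.0])
color, t_left = composite_gaussians(pixel, means, covs,
                                    opacities=np.array([0.5, 0.5]),
                                    colors=np.array([[0.0, 0.0, 1.0], [1.0, 0.0, 0.0]]),
                                    depths=np.array([2.0, 1.0]))
# Red contributes 0.5, blue contributes 0.5 * 0.5 = 0.25, and 0.25 of the light remains.
print(color, t_left)
```

Because the primitives are explicit and sorted rather than sampled through a network, this blending step is cheap, which is a large part of why Gaussian splatting renders in real time.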

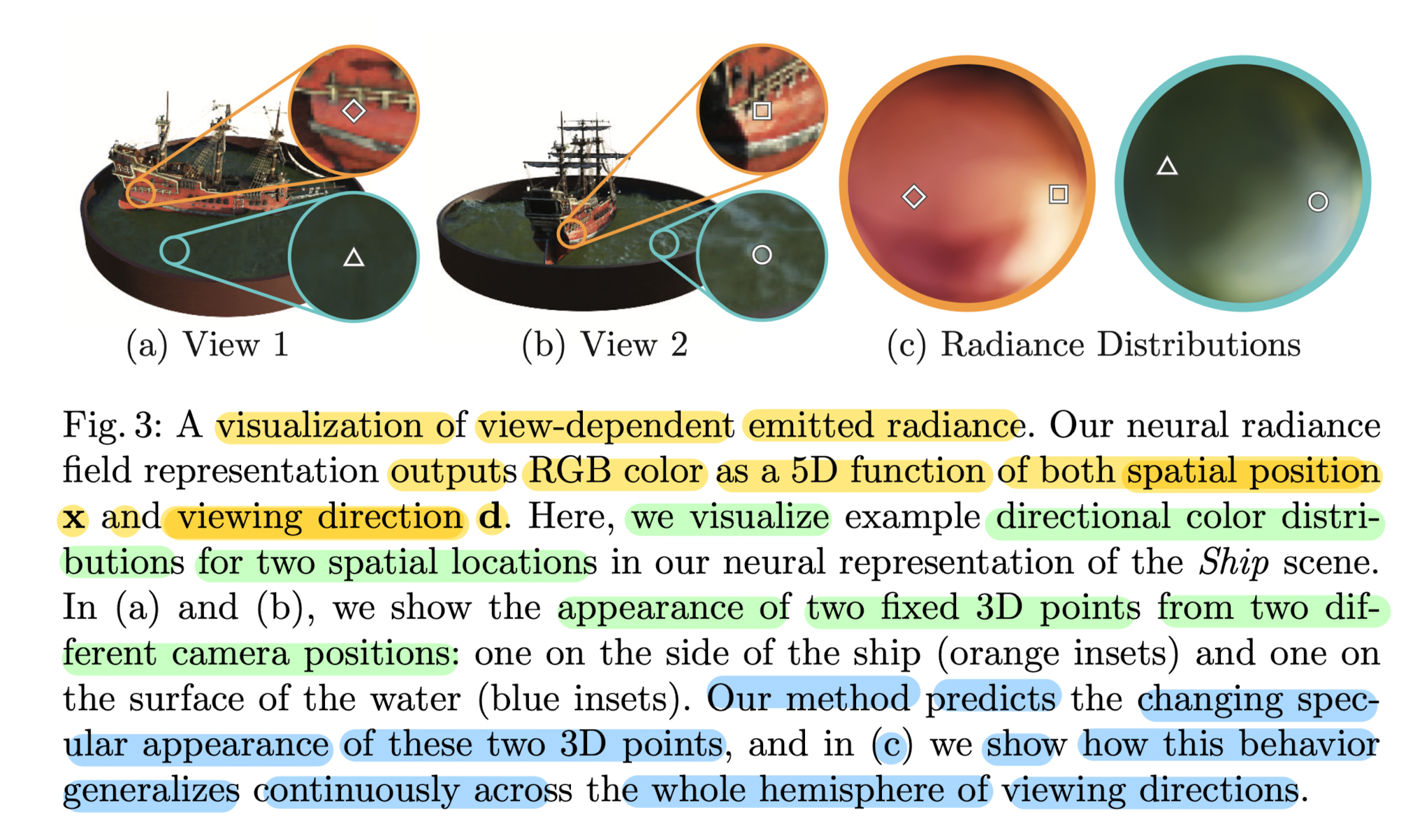

Multi-Plane Neural Radiance Fields For Novel View Synthesis

Text2NeRF: turn a few words into lifelike 3D scenes. A new tool called Text2NeRF lets you type a short description and get back a realistic 3D scene. Type a line about a cozy cafe or a misty forest, and it imagines the look and builds a scene you can spin around. This text-driven approach creates rich, often surprisingly lifelike 3D scenes with realistic textures, not just dreamy sketches.

Other work uses transient neural radiance fields to render intensity, depth, and time-resolved lidar measurements from novel views, evaluating the method on simulated and captured datasets.

Neural radiance fields have emerged as a powerful new paradigm for 3D representation and novel view synthesis. One literature review gives an overview of both classical 3D reconstruction methods and modern neural rendering approaches, with equal emphasis on each. The original NeRF paper presents a method for synthesizing photorealistic views of complex scenes from a continuous neural radiance field representation, outperforming previous approaches.
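
As a rough illustration of how a radiance field can produce lidar-style outputs, the sketch below reuses the standard volume rendering weights along a ray to compute an expected depth and a time-resolved histogram, placing each sample's weight in the bin of its round-trip time of flight 2t/c. This is a simplified assumption, not the transient NeRF model: it ignores the laser pulse shape, round-trip attenuation, and radiometric falloff, and `lidar_returns` and its parameters are hypothetical names.

```python
import numpy as np

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def ray_weights(t, sigma):
    """Volume rendering weights w_i = T_i * (1 - exp(-sigma_i * delta_i))."""
    delta = np.append(np.diff(t), 1e10)
    alpha = 1.0 - np.exp(-sigma * delta)
    trans = np.cumprod(np.append(1.0, 1.0 - alpha[:-1]))
    return trans * alpha


def lidar_returns(t, sigma, bin_width_s, n_bins):
    """Hypothetical helper: expected depth plus a simplified transient histogram.

    Each sample's weight is deposited in the time bin of its round-trip time
    of flight 2 * t / c. Pulse shape, attenuation, and falloff are ignored.
    """
    w = ray_weights(t, sigma)
    expected_depth = (w * t).sum() / max(w.sum(), 1e-8)
    tof = 2.0 * t / SPEED_OF_LIGHT                          # round-trip time, seconds
    bins = np.floor(tof / bin_width_s).astype(int)
    hist = np.zeros(n_bins)
    keep = bins < n_bins                                    # drop returns past the last bin
    np.add.at(hist, bins[keep], w[keep])                    # accumulates repeated bin indices
    return expected_depth, hist


# A ray through empty space that hits an opaque wall about 15 m away.
t = np.linspace(0.0, 30.0, 301)                             # 0.1 m sample spacing
sigma = np.where(t >= 15.0, 50.0, 0.0)
depth, hist = lidar_returns(t, sigma, bin_width_s=1e-9, n_bins=200)
# depth is close to 15 m, and the histogram peaks in the bin covering ~100 ns.
print(depth, hist.argmax())
```

Using `np.add.at` rather than `hist[bins] += w` matters here: several samples can fall into the same time bin, and plain fancy-index assignment would keep only one of them.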
