Exploring Data Ingestion With Python On Azure Databricks S3 Bucket

In this guide, we explore three distinct approaches for connecting an AWS S3 bucket with Azure Databricks, all of which facilitate data integration. This article also describes how to load data into Azure Databricks with Lakeflow Spark Declarative Pipelines.
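As a rough illustration of one way such a connection can look, the sketch below reads CSV files from S3 through an `s3a://` URI using access keys stored in a Databricks secret scope. It is not necessarily one of the three approaches referred to above. The scope name, key names, bucket, and prefix are hypothetical placeholders, and `spark` and `dbutils` are assumed to be the objects a Databricks notebook provides. Setting keys on the Hadoop configuration may not be available on every cluster access mode.

```python
def s3a_uri(bucket: str, prefix: str = "") -> str:
    """Build an s3a:// URI from a bucket name and an optional key prefix."""
    prefix = prefix.strip("/")
    return f"s3a://{bucket}/{prefix}" if prefix else f"s3a://{bucket}/"


def read_s3_csv(spark, dbutils, bucket: str, prefix: str,
                scope: str = "aws-creds",            # hypothetical secret scope
                access_key_name: str = "access-key",  # hypothetical key name
                secret_key_name: str = "secret-key"): # hypothetical key name
    """Read CSV files from S3 with credentials pulled from a secret scope.

    `spark` and `dbutils` are passed in explicitly so the function can be
    imported outside a notebook without failing.
    """
    access_key = dbutils.secrets.get(scope=scope, key=access_key_name)
    secret_key = dbutils.secrets.get(scope=scope, key=secret_key_name)

    # Make the keys visible to the S3A filesystem connector.
    hadoop_conf = spark.sparkContext._jsc.hadoopConfiguration()
    hadoop_conf.set("fs.s3a.access.key", access_key)
    hadoop_conf.set("fs.s3a.secret.key", secret_key)

    return spark.read.option("header", "true").csv(s3a_uri(bucket, prefix))
```

In a notebook this would be called as `read_s3_csv(spark, dbutils, "my-bucket", "raw/events")`, where the bucket and prefix are again placeholders.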

Loading data from Azure cloud storage to Databricks is simplified with the dlt library. This verified source streams CSV, Parquet, and JSONL files from Azure cloud storage using the reader source.

A common pattern is to create a Delta table and use the `COPY INTO` SQL command to load sample data from Databricks datasets into that table. The code can be run in Python, R, Scala, or SQL from a notebook attached to an Azure Databricks cluster.

With the Databricks Data Intelligence Platform, you can ingest data from virtually any source and unify your data estate in a single foundation. Before you start exchanging data between Databricks and S3, you need to have the necessary permissions in place. We have a separate article that takes you through configuring S3 permissions for Databricks access.
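The `COPY INTO` pattern mentioned above can be sketched in Python as follows. This is a minimal sketch rather than the original example: the helper names `build_copy_into` and `load_with_copy_into`, the table name, and the source path are all hypothetical, and `spark` is assumed to be the session a Databricks notebook provides. The statement is built as a string first so it can be inspected before it runs.

```python
from typing import Dict, Optional


def _render_options(options: Dict[str, str]) -> str:
    """Render {'header': 'true'} as "'header' = 'true'"."""
    return ", ".join(f"'{key}' = '{value}'" for key, value in options.items())


def build_copy_into(table: str, source_path: str, file_format: str = "CSV",
                    format_options: Optional[Dict[str, str]] = None,
                    copy_options: Optional[Dict[str, str]] = None) -> str:
    """Build a COPY INTO statement for the given target table and source path."""
    parts = [
        f"COPY INTO {table}",
        f"FROM '{source_path}'",
        f"FILEFORMAT = {file_format}",
    ]
    if format_options:
        parts.append(f"FORMAT_OPTIONS ({_render_options(format_options)})")
    if copy_options:
        parts.append(f"COPY_OPTIONS ({_render_options(copy_options)})")
    return "\n".join(parts)


def load_with_copy_into(spark, table: str, source_path: str) -> None:
    """Create an empty Delta table, then load files into it with COPY INTO."""
    # A schemaless placeholder table; the schema is filled in by
    # COPY INTO because mergeSchema is enabled below.
    spark.sql(f"CREATE TABLE IF NOT EXISTS {table}")
    spark.sql(build_copy_into(
        table,
        source_path,
        file_format="CSV",
        format_options={"header": "true", "inferSchema": "true"},
        copy_options={"mergeSchema": "true"},
    ))
```

Because `COPY INTO` skips files it has already loaded, running `load_with_copy_into` again on the same path should not duplicate rows.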

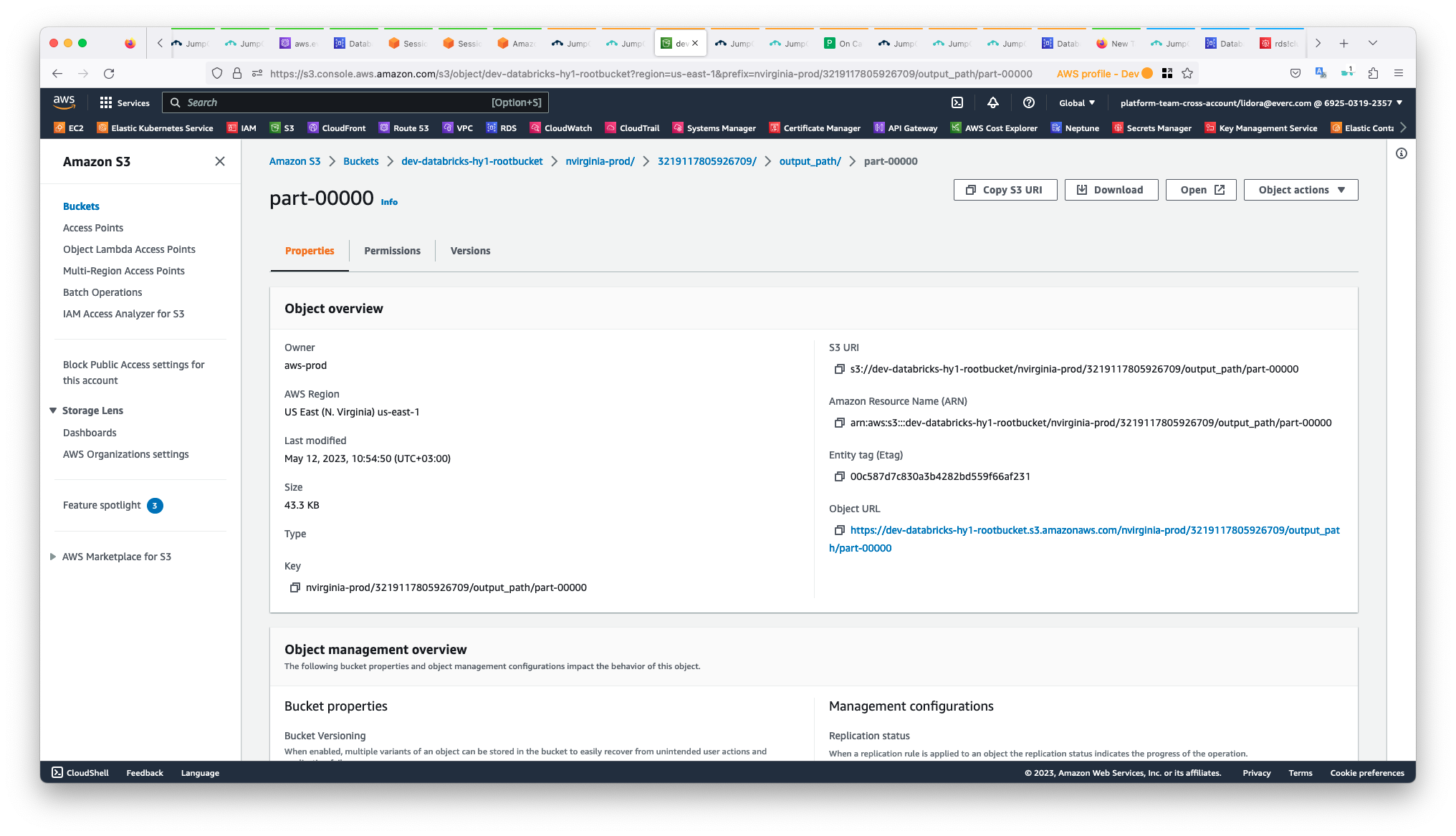

In this article, we'll explore how Alex can address the data ingestion challenge for each source, the best practices for a production parallel setup, and the role Lakeflow Connect can play.

With data present on Databricks, you can deploy your application on multiple platforms, including Azure, AWS, and GCP. This article will explore six popular methods to connect Amazon S3 to Databricks.

This project establishes an ETL (extract, transform, load) pipeline using Azure and Databricks. The pipeline fetches raw data from various sources into Azure Blob Storage, where it is then processed, cleaned, and transformed in Databricks using Python and SQL.

Databricks Auto Loader watches a directory in your cloud storage (S3, ADLS, GCS), detects new files automatically, and ingests them without you having to track file states manually.
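The directory-watching behavior described above matches Databricks Auto Loader, which is exposed as the `cloudFiles` streaming source. A minimal sketch follows, assuming that is the tool meant. The function names, paths, and table name are hypothetical, and `spark` is assumed to be the session a Databricks notebook provides.

```python
from typing import Dict


def autoloader_options(file_format: str, schema_location: str) -> Dict[str, str]:
    """Options for the cloudFiles source: input format and where to keep the inferred schema."""
    return {
        "cloudFiles.format": file_format,
        "cloudFiles.schemaLocation": schema_location,
    }


def ingest_new_files(spark, source_dir: str, target_table: str,
                     checkpoint_dir: str, file_format: str = "json"):
    """Incrementally load files that have not been ingested yet into a table."""
    reader = spark.readStream.format("cloudFiles")
    for key, value in autoloader_options(file_format, checkpoint_dir).items():
        reader = reader.option(key, value)
    stream = reader.load(source_dir)

    # The checkpoint records which files were already processed, so file
    # state does not need to be tracked by hand.
    return (
        stream.writeStream
        .option("checkpointLocation", checkpoint_dir)
        .trigger(availableNow=True)  # process the current backlog, then stop
        .toTable(target_table)
    )
```

With `availableNow=True` the stream behaves like a scheduled batch job. Removing the trigger would keep it running continuously instead.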

