Exploit Your Hyperparameters Batch Size And Learning Rate As

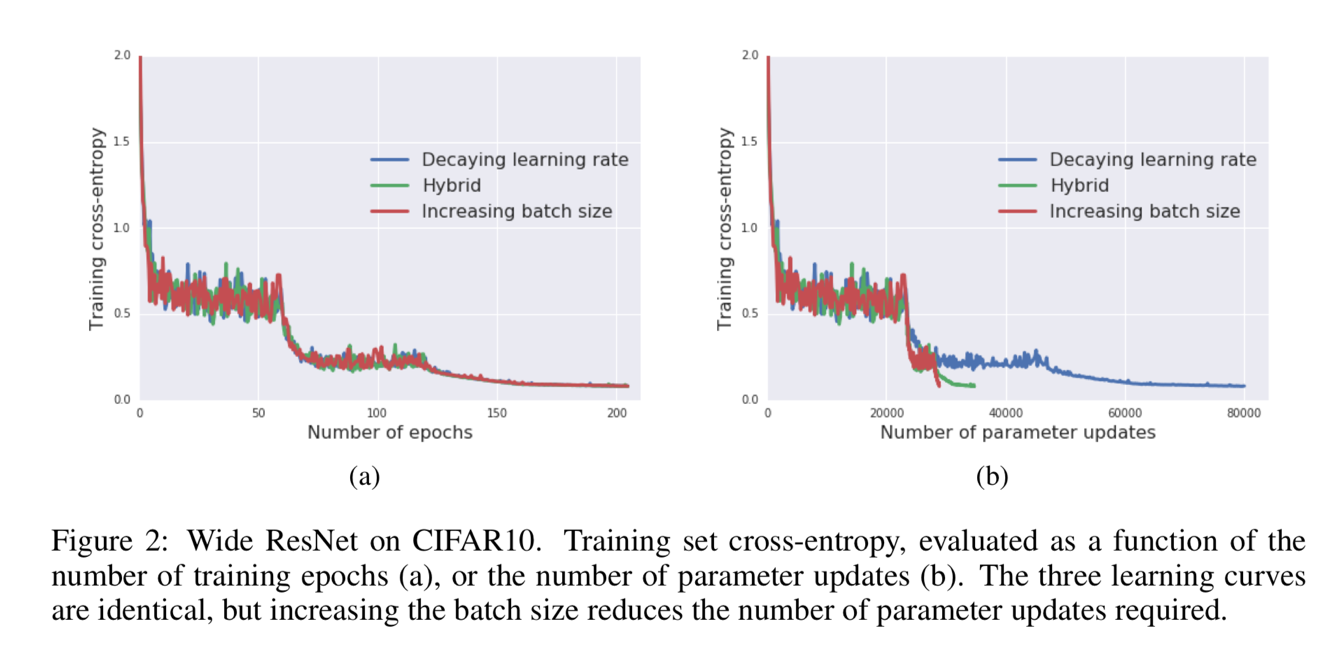

Don't Decay the Learning Rate, Increase the Batch Size

Higher learning rates and smaller batch sizes can keep a model from getting stuck in deep, narrow minima. As a result, the model tends to be more robust to differences between the training data and real-world data, and it performs better where it matters. Before reaching for other regularization techniques, it is worth rethinking the role of learning rate and batch size: choosing them well can reduce overfitting and yield better, simpler models.
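The idea in the title above can be sketched as two schedules that are compared stage by stage. This is only an illustration under a stated assumption: the ratio of learning rate to batch size is used as a rough proxy for the amount of noise in the SGD updates, a common heuristic that the text itself does not spell out. The function names and the numbers (0.1, 128, factor 5) are hypothetical.

```python
import math

def decay_lr_schedule(base_lr, base_batch, factor, num_stages):
    """Conventional schedule: divide the learning rate by `factor` at each stage."""
    return [(base_lr / factor**s, base_batch) for s in range(num_stages)]

def grow_batch_schedule(base_lr, base_batch, factor, num_stages):
    """Alternative: keep the learning rate fixed, multiply the batch size by `factor`."""
    return [(base_lr, base_batch * factor**s) for s in range(num_stages)]

def noise_proxy(lr, batch_size):
    """ASSUMPTION: lr / batch_size as a rough proxy for SGD update noise."""
    return lr / batch_size

# Hypothetical starting point: lr=0.1, batch size 128, three stages, factor 5.
decay = decay_lr_schedule(0.1, 128, 5, 3)
grow = grow_batch_schedule(0.1, 128, 5, 3)

for stage, ((lr_a, b_a), (lr_b, b_b)) in enumerate(zip(decay, grow)):
    print(
        f"stage {stage}: decay lr -> lr={lr_a:.4f}, batch={b_a} | "
        f"grow batch -> lr={lr_b:.4f}, batch={b_b} | "
        f"proxies {noise_proxy(lr_a, b_a):.2e} vs {noise_proxy(lr_b, b_b):.2e}"
    )
```

Under this proxy both schedules lower the noise by the same factor at each stage, but the second one does so with fewer, larger parameter updates per epoch. Whether that trade-off helps in practice depends on the model and hardware.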

Tweaking Hyperparameters To Stabilize Learning Rate, Iulian Serban

The learning rate and batch size are interdependent hyperparameters that strongly influence the training dynamics and final performance of neural networks, so their relationship matters for both training efficiency and model accuracy. When training with gradient descent, three hyperparameters deserve the most attention: learning rate, batch size, and number of epochs. In fine-tuning, these are the knobs that set the balance between "slow learning" and "catastrophic forgetting". This post explores these and other important hyperparameters, along with tips on how to set them effectively.
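To make the three knobs concrete, here is a minimal sketch of mini-batch gradient descent on a toy one-dimensional linear regression, written in plain Python. The function `train_sgd`, the synthetic data, and the chosen values are all illustrative assumptions, not taken from any of the posts summarized here.

```python
import random

def train_sgd(xs, ys, lr, batch_size, epochs, seed=0):
    """Fit y = w*x + b by mini-batch gradient descent on mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):                      # epochs: full passes over the data
        rng.shuffle(indices)
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            grad_w = grad_b = 0.0
            for i in batch:
                err = (w * xs[i] + b) - ys[i]
                grad_w += 2 * err * xs[i]
                grad_b += 2 * err
            # lr scales the step; batch_size sets how many samples are averaged
            w -= lr * grad_w / len(batch)
            b -= lr * grad_b / len(batch)
    return w, b

# Toy, noise-free data generated from y = 3x + 1 (hypothetical example).
xs = [i / 10 for i in range(-20, 21)]
ys = [3.0 * x + 1.0 for x in xs]

w, b = train_sgd(xs, ys, lr=0.05, batch_size=8, epochs=50)
print(f"w={w:.3f}, b={b:.3f}")
```

Changing one argument at a time is a quick way to see the interdependence: a smaller `batch_size` means more updates per epoch, so the same `lr` moves the parameters further in the same number of epochs.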

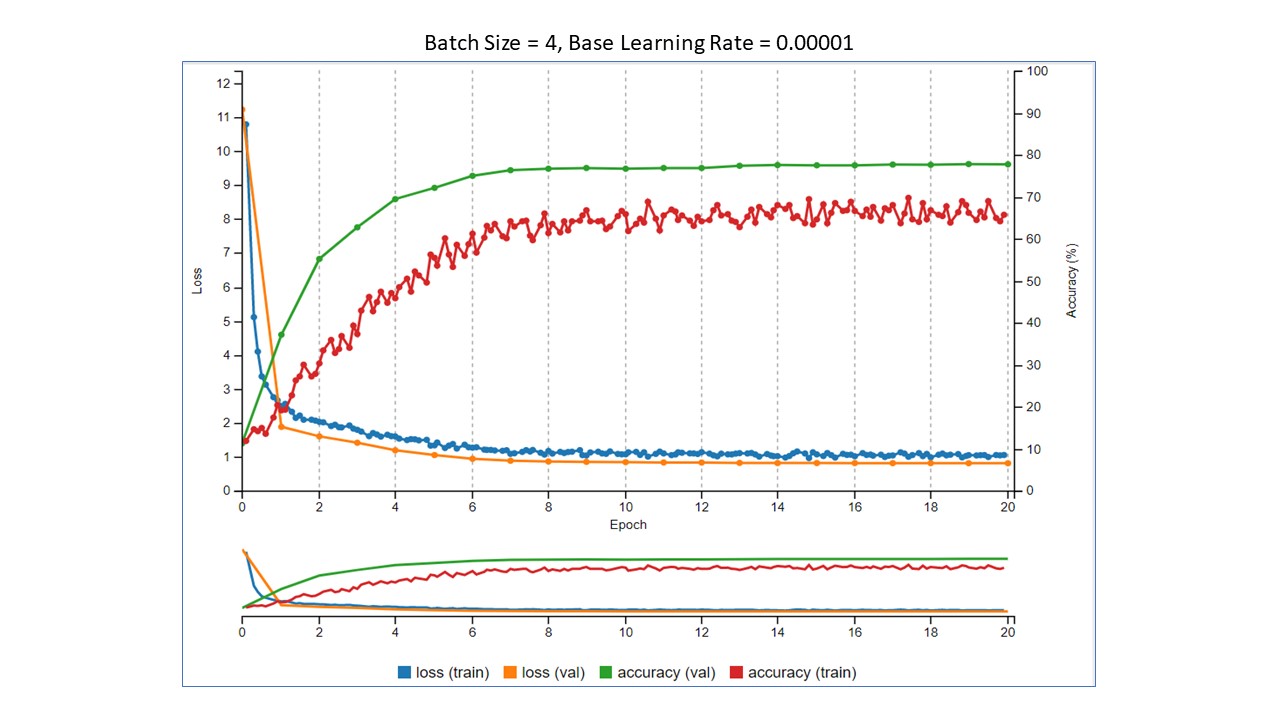

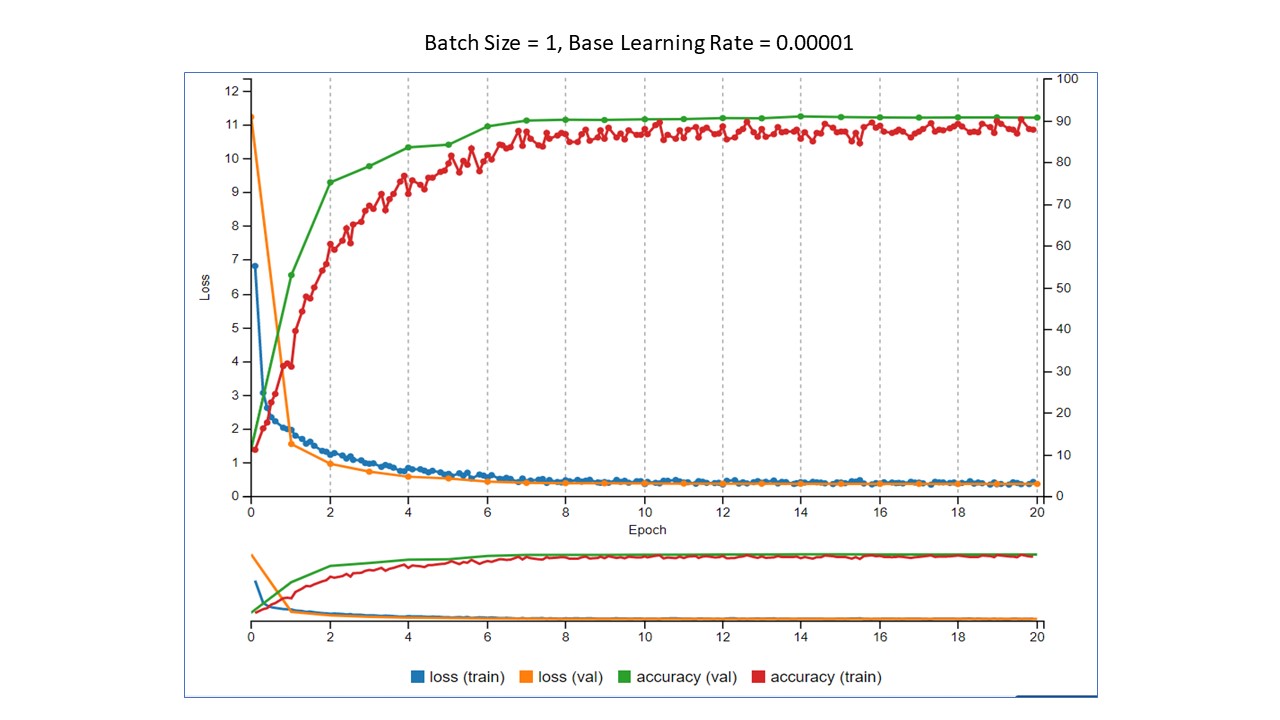

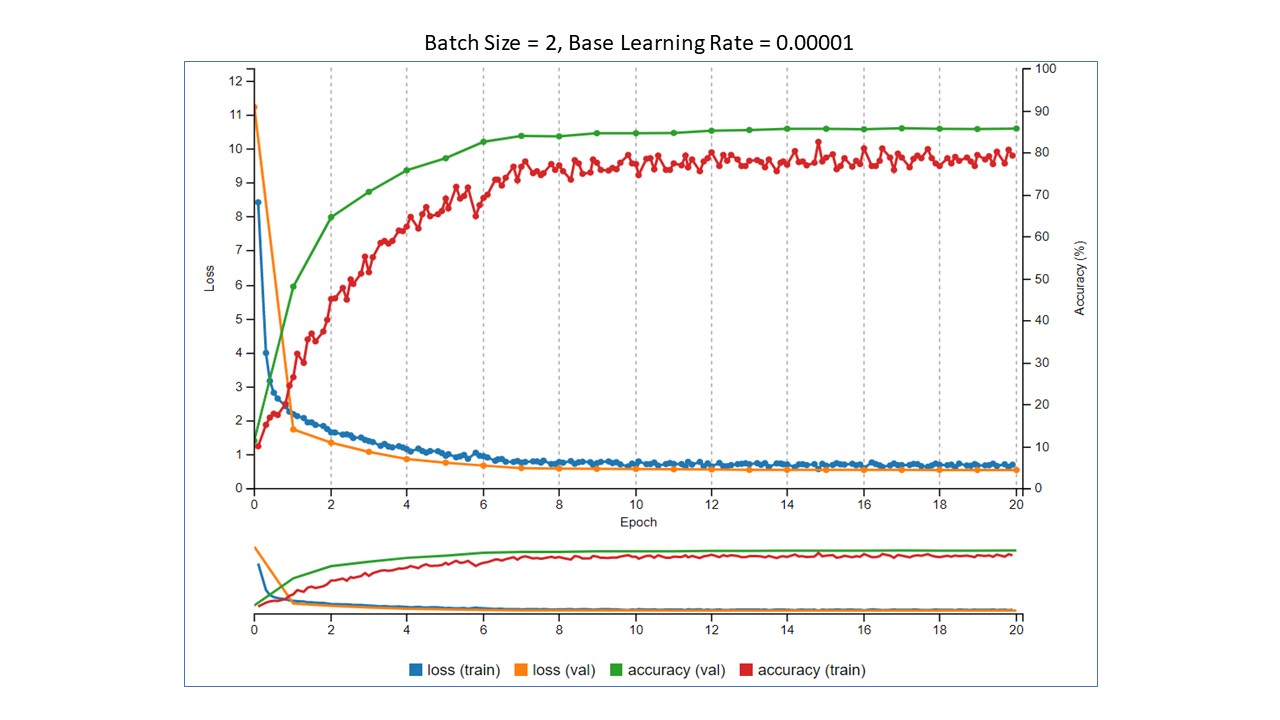

Hyperparameters For Classifying Images With Convolutional Neural

Hyperparameters are variables that are set before the training process begins and control aspects such as the learning rate, the batch size, and the number of hidden layers in a neural network. Hyperparameter tuning adjusts them to find the combination that minimizes overfitting and maximizes the model's performance on unseen data. Unlike standard machine learning models, deep neural networks have numerous hyperparameters (learning rate, batch size, optimizer settings, and more) that directly influence training efficiency and model accuracy. Learning rate and batch size are two that must be fixed before model training; this tutorial introduces both and then looks at how to tune them.
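One simple way to search for such a combination is an exhaustive grid search scored on held-out data. The sketch below is only an assumption about how this might look on a toy problem: `fit_line`, `val_mse`, the synthetic data, and the grid values are hypothetical, and real projects often use random or Bayesian search instead.

```python
import itertools
import random

def fit_line(train, lr, batch_size, epochs, seed=0):
    """Mini-batch gradient descent for y = w*x + b on (x, y) pairs."""
    rng = random.Random(seed)
    w = b = 0.0
    data = list(train)
    for _ in range(epochs):
        rng.shuffle(data)
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            gw = sum(2 * (w * x + b - y) * x for x, y in batch) / len(batch)
            gb = sum(2 * (w * x + b - y) for x, y in batch) / len(batch)
            w -= lr * gw
            b -= lr * gb
    return w, b

def val_mse(data, w, b):
    """Mean squared error on held-out (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

# Synthetic noisy data from y = 2x - 0.5 (hypothetical example).
rng = random.Random(42)
xs = [rng.uniform(-1, 1) for _ in range(200)]
points = [(x, 2.0 * x - 0.5 + rng.gauss(0, 0.1)) for x in xs]
train, val = points[:160], points[160:]   # hold out 40 points for validation

grid = {"lr": [0.001, 0.01, 0.1], "batch_size": [4, 16], "epochs": [5, 20]}
results = {}
for lr, bs, ep in itertools.product(grid["lr"], grid["batch_size"], grid["epochs"]):
    w, b = fit_line(train, lr, bs, ep)
    results[(lr, bs, ep)] = val_mse(val, w, b)   # score on unseen data only

best_cfg = min(results, key=results.get)
print("best (lr, batch_size, epochs):", best_cfg, "val MSE:", results[best_cfg])
```

Scoring on the validation split rather than the training split is what ties the search to performance on unseen data; the grid itself grows multiplicatively with each added hyperparameter, which is why it is kept small here.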

