Explain Cache Mapping Techniques Design Talk

Because cache is limited in size, the system needs a smart way to decide where to place data from main memory, and that's where cache mapping comes in. Cache mapping is a technique that determines where a particular block of main memory will be stored in the cache. Here, we will study the different cache mapping techniques used in computer architecture: direct mapping, set-associative mapping, and fully associative mapping.
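To make "where a block will be stored" concrete, here is a minimal Python sketch. It assumes a cache of `num_lines` lines grouped into sets of `ways` lines, with the lines of each set numbered contiguously, and a placement rule of block number modulo the number of sets; the helper name `candidate_lines` is hypothetical. Setting `ways` to 1 gives direct mapping and setting it to `num_lines` gives fully associative mapping, so one function covers all three techniques.

```python
def candidate_lines(block: int, num_lines: int, ways: int) -> list[int]:
    """Return the cache line indices where a main-memory block may be placed.

    Hypothetical helper. Assumes lines are grouped into num_lines // ways sets
    and that the lines of set s are numbered s*ways .. s*ways + ways - 1.
      ways == 1          -> direct mapping (exactly one candidate line)
      ways == num_lines  -> fully associative mapping (every line is a candidate)
      otherwise          -> ways-way set-associative mapping
    """
    if ways < 1 or num_lines % ways != 0:
        raise ValueError("num_lines must be a positive multiple of ways")
    num_sets = num_lines // ways
    s = block % num_sets  # the set is chosen by block number modulo the set count
    return list(range(s * ways, (s + 1) * ways))


# An 8-line cache and main-memory block 13:
print(candidate_lines(13, 8, 1))  # direct: [5]
print(candidate_lines(13, 8, 2))  # 2-way: set 13 % 4 = 1 -> [2, 3]
print(candidate_lines(13, 8, 8))  # fully associative: [0, 1, 2, 3, 4, 5, 6, 7]
```

With direct mapping a block has no choice of line, so two blocks that share a line keep evicting each other; more ways give a block more freedom, at the cost of checking more lines on every lookup.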

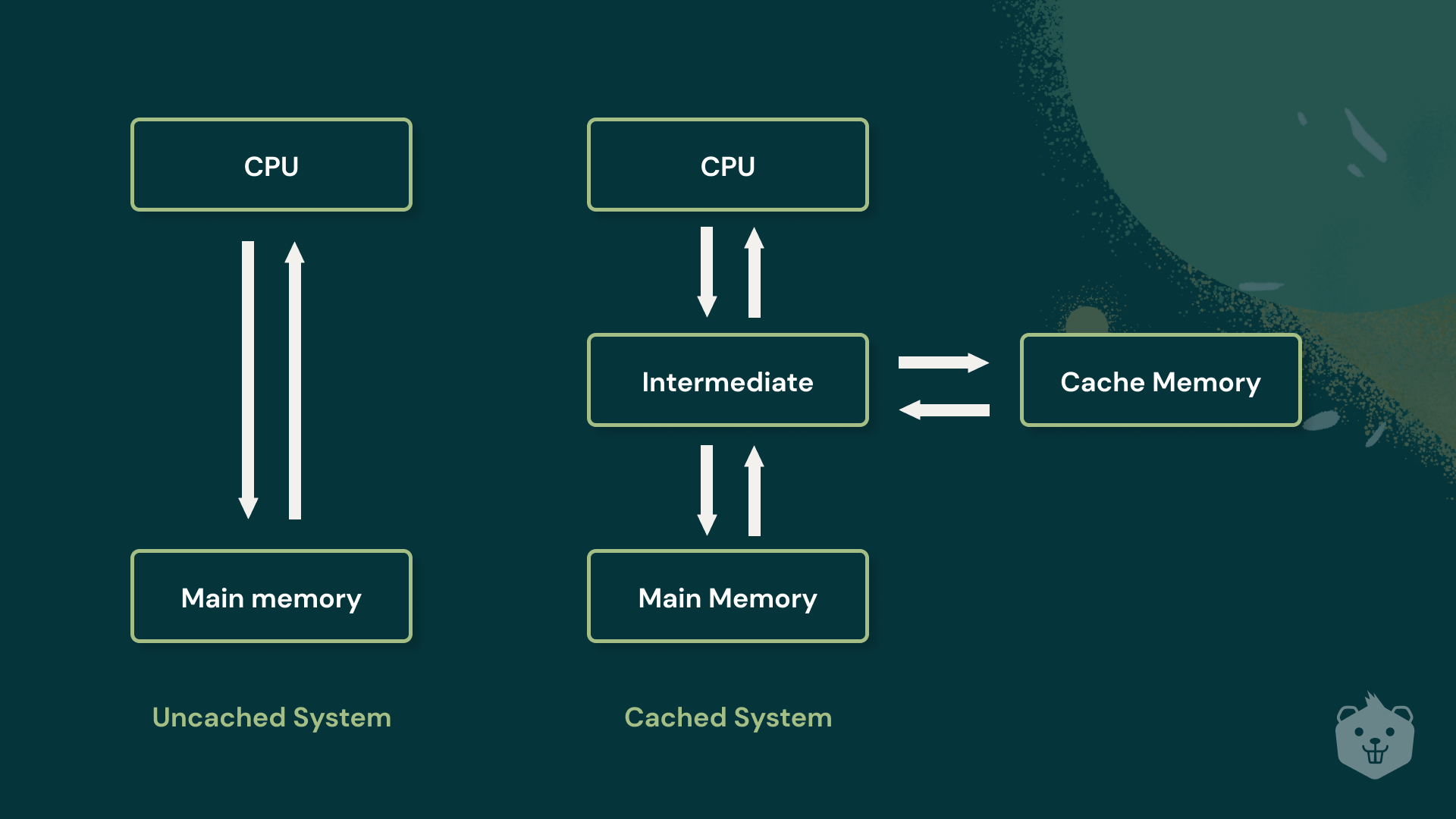

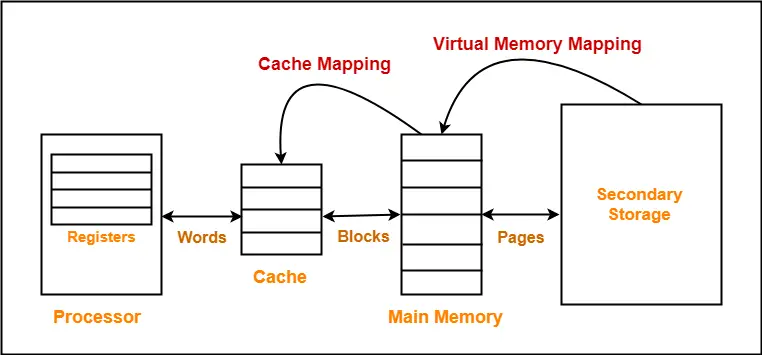

Cache memory sits between the processor and main memory, giving the CPU fast access to the data it needs most. Without it, the processor would constantly wait on slower main memory, wasting cycles. Cache mapping techniques are therefore a crucial aspect of computer architecture: they determine how blocks of main memory are mapped to cache blocks, which directly affects how quickly data can be retrieved.

Three mapping techniques are commonly used: direct mapping, associative mapping, and set-associative mapping. Each has its own advantages and disadvantages that affect performance and the efficiency of data retrieval. This guide covers how caches are designed, how data gets mapped into them, and how the system decides what to evict when the cache is full, along with the typical use cases of each technique.
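The trade-offs between the three techniques show up when the same sequence of block accesses is replayed against each of them. The sketch below is a hypothetical hit/miss counter, not a model of any particular processor; it assumes LRU (least recently used) replacement within a set, which is one common eviction policy but not the only one. Blocks 0 and 8 (and likewise 1 and 9) land on the same line of an 8-line direct-mapped cache, so they keep evicting each other, while the associative variants can hold both.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Hypothetical hit/miss counter for a cache of num_lines lines in sets of `ways`.

    ways=1 gives direct mapping; ways=num_lines gives fully associative mapping.
    Replacement inside a set is LRU (an assumption made for this sketch).
    """

    def __init__(self, num_lines: int, ways: int) -> None:
        if ways < 1 or num_lines % ways != 0:
            raise ValueError("num_lines must be a positive multiple of ways")
        self.ways = ways
        self.num_sets = num_lines // ways
        # One OrderedDict per set: keys are tags, ordered from least to most recently used.
        self.sets = [OrderedDict() for _ in range(self.num_sets)]
        self.hits = 0
        self.misses = 0

    def access(self, block: int) -> bool:
        """Look up a main-memory block number; return True on a hit."""
        index = block % self.num_sets  # which set the block maps to
        tag = block // self.num_sets   # identifies the block within that set
        lines = self.sets[index]
        if tag in lines:
            lines.move_to_end(tag)     # hit: mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        if len(lines) == self.ways:
            lines.popitem(last=False)  # set is full: evict the least recently used tag
        lines[tag] = True              # bring the block into the cache
        return False


trace = [0, 8, 0, 8, 1, 9, 1, 9]
for name, ways in [("direct", 1), ("2-way set-associative", 2), ("fully associative", 8)]:
    cache = SetAssociativeCache(num_lines=8, ways=ways)
    for block in trace:
        cache.access(block)
    print(f"{name}: {cache.hits} hits, {cache.misses} misses")
# direct: 0 hits, 8 misses
# 2-way set-associative: 4 hits, 4 misses
# fully associative: 4 hits, 4 misses
```

The direct-mapped cache misses on every access because of conflicts between blocks that share a line, even though the cache is mostly empty; adding ways removes those conflict misses for this trace.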

In this article, I will try to explain the cache mapping techniques through their hardware implementation. Seeing how each one is built makes the differences between them easier to visualize, so you can make an informed decision about which technique suits your requirements.

Cache mapping defines how a block from main memory is placed into the cache on a cache miss; in other words, it is the technique by which the contents of main memory are brought into the cache memory. Recall that a cache is a smaller, faster storage device that keeps copies of a subset of the data held in a larger, slower device. If the data we access is already in the cache, we win: the access is a hit and the slower device is never touched. Along the way, we will cover the mapping process for each type and weigh its advantages and disadvantages.
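In hardware, a cache does not look up block numbers directly: it splits each address into a tag, an index that selects a line or set, and an offset within the block, and a hit means a valid line in the selected set holds a matching tag. Below is a minimal sketch of that split, assuming byte addresses and a power-of-two block size and set count (as in typical hardware caches); `split_address` is a hypothetical name. For direct mapping `num_sets` equals the number of lines, and for fully associative mapping it is 1, so there are no index bits and the whole block number becomes the tag.

```python
def split_address(addr: int, block_size: int, num_sets: int) -> tuple[int, int, int]:
    """Split a byte address into (tag, set_index, offset).

    Hypothetical helper. block_size (bytes per block) and num_sets must be
    powers of two so that each field is a contiguous run of address bits.
    """
    for name, value in (("block_size", block_size), ("num_sets", num_sets)):
        if value < 1 or value & (value - 1):
            raise ValueError(f"{name} must be a power of two")
    offset_bits = block_size.bit_length() - 1  # log2(block_size)
    index_bits = num_sets.bit_length() - 1     # log2(num_sets); 0 when fully associative
    offset = addr & (block_size - 1)                # low bits: byte within the block
    index = (addr >> offset_bits) & (num_sets - 1)  # middle bits: which set or line
    tag = addr >> (offset_bits + index_bits)        # high bits: stored and compared on lookup
    return tag, index, offset


# 16-byte blocks, 64 sets (e.g. a 64-line direct-mapped cache):
print(split_address(0x1234, block_size=16, num_sets=64))  # (4, 35, 4)
# The same address in a fully associative cache (a single set):
print(split_address(0x1234, block_size=16, num_sets=1))   # (291, 0, 4)
```

The tag width hints at the hardware cost: a fully associative cache stores the longest tags and must compare them against every line at once, whereas a direct-mapped cache compares a single, shorter tag.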