Experiment Workflow: (A) Data Processing, (B) Model Evaluation

We defined five data quality evaluation dimensions (comprehensiveness, correctness, variety, class imbalance, and duplication) and conducted a quantitative evaluation along each of them. Data science projects involve testing many models and settings, which can get messy without proper tracking. Keeping a log of experiments in your data science workflow helps you compare results, choose the best model, and avoid repeating mistakes.
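As a rough illustration of what a quantitative score on these five dimensions could look like, here is a minimal sketch over a list of dictionary records. The metric definitions are our own assumptions for illustration, not the definitions used in the evaluation above, and `quality_report`, `label_key`, and `is_valid` are hypothetical names.

```python
from collections import Counter
from typing import Any, Callable


def quality_report(
    records: list[dict[str, Any]],
    label_key: str,
    is_valid: Callable[[dict[str, Any]], bool],
) -> dict[str, float]:
    """Score records on five illustrative data-quality dimensions (definitions are assumptions)."""
    n = len(records)
    if n == 0:
        raise ValueError("need at least one record")
    fields = sorted({key for record in records for key in record})

    # Comprehensiveness: share of (record, field) cells that are present and not None.
    filled = sum(1 for r in records for f in fields if r.get(f) is not None)
    comprehensiveness = filled / (n * len(fields))

    # Correctness: share of records that pass a caller-supplied validation rule.
    correctness = sum(1 for r in records if is_valid(r)) / n

    # Variety: for each non-label field, distinct non-missing values divided by
    # non-missing values, averaged over the fields that have any values.
    ratios = []
    for f in fields:
        if f == label_key:
            continue
        values = [r[f] for r in records if r.get(f) is not None]
        if values:
            ratios.append(len(set(values)) / len(values))
    variety = sum(ratios) / len(ratios) if ratios else 0.0

    # Class imbalance: majority-class count divided by minority-class count (1.0 = balanced).
    counts = Counter(r[label_key] for r in records if r.get(label_key) is not None)
    imbalance = max(counts.values()) / min(counts.values()) if counts else float("nan")

    # Duplication: share of records that exactly repeat an earlier record.
    signatures = [tuple(r.get(f) for f in fields) for r in records]
    duplication = (n - len(set(signatures))) / n

    return {
        "comprehensiveness": comprehensiveness,
        "correctness": correctness,
        "variety": variety,
        "class_imbalance": imbalance,
        "duplication": duplication,
    }


# Example: four records, one exact duplicate, one missing value, one invalid (negative) x.
data = [
    {"x": 1, "y": "a", "label": "pos"},
    {"x": 1, "y": "a", "label": "pos"},
    {"x": 2, "y": None, "label": "neg"},
    {"x": -5, "y": "b", "label": "pos"},
]
report = quality_report(data, "label", lambda r: r.get("x") is not None and r["x"] >= 0)
print(report)
```

The validation rule is passed in as a function because what counts as "correct" is domain-specific; the other four scores need only the records and the name of the label field.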

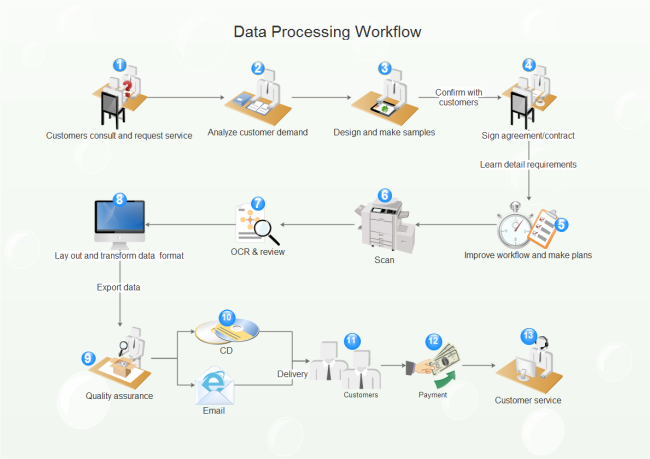

When working on a data science project, we often need to explore different approaches to solve a problem: hyperparameter tuning, model architecture, data processing methods, and so on. Each approach may differ from the others, or even be orthogonal to them. A design of experiments (DoE) workflow gives this optimization a structure. Figure 1 provides a visual representation of the workflow, alongside a description of each step. Optimization usually involves multiple factors, and these multiple aspects can make the process time-consuming. The DoE workflow consists of six steps: define, model, design, data entry, analyze, and predict. The framework for designing an experiment remains the same regardless of which method of experimental design you choose. Training a model is not a one-and-done process; it involves an iterative cycle of hypothesis testing, parameter tuning, and evaluation to optimize performance. Data scientists experiment with hyperparameters, datasets, preprocessing techniques, and algorithms to achieve the best outcomes.
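The design, data entry, and analyze steps can be made concrete with a small sketch. It assumes a full factorial design (only one of several possible experimental design methods) and uses a hypothetical `toy_score` function as a stand-in for training and evaluating a model; `full_factorial` and `ExperimentLog` are illustrative names, not part of any library. Every combination of factor levels is run once, each run is recorded in a log with its settings and score, and the best run is read back from the log.

```python
import itertools
import json
from dataclasses import dataclass, field
from typing import Any


def full_factorial(factors: dict[str, list[Any]]) -> list[dict[str, Any]]:
    """Design step (assumed full factorial): every combination of factor levels."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in itertools.product(*(factors[n] for n in names))]


@dataclass
class ExperimentLog:
    """Hypothetical minimal experiment log: one entry per run with its settings and score."""
    runs: list[dict[str, Any]] = field(default_factory=list)

    def record(self, settings: dict[str, Any], score: float) -> None:
        self.runs.append({"run": len(self.runs), "settings": dict(settings), "score": score})

    def best(self) -> dict[str, Any]:
        # Assumes a higher score is better.
        return max(self.runs, key=lambda run: run["score"])

    def to_json(self) -> str:
        # Serialize the log so results can be compared across sessions.
        return json.dumps(self.runs, indent=2)


def toy_score(settings: dict[str, Any]) -> float:
    # Hypothetical stand-in for "train and evaluate a model"; peaks at lr=0.01, depth=4.
    return -abs(settings["lr"] - 0.01) * 100 - abs(settings["depth"] - 4)


factors = {"lr": [0.001, 0.01, 0.1], "depth": [2, 4, 8]}
log = ExperimentLog()
for settings in full_factorial(factors):
    log.record(settings, toy_score(settings))
print(log.best()["settings"])  # {'lr': 0.01, 'depth': 4}
```

A full factorial design grows multiplicatively with the number of factors and levels (here 3 × 3 = 9 runs), which is one reason fractional or adaptive designs are often chosen instead; the logging part stays the same whichever design generates the runs.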

Data Processing Workflow

We show that Python is well suited to performing the computational analyses required for experimental data processing, fitting of enzyme kinetic parameters, construction of kinetic models, and model validation and further analysis. In this paper we present the design of a data processing workflow for scientific experiments that require a complicated multi-step analysis procedure, and we test it on datasets from single-particle imaging (SPI) experiments. An AutoML-based workflow has also been proposed for design of experiments (DoE) selection and for benchmarking data acquisition strategies with simulation models.
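To illustrate what fitting enzyme kinetic parameters in Python can involve, here is a minimal sketch that estimates the Michaelis-Menten parameters $V_{max}$ and $K_m$ using a Lineweaver-Burk (double-reciprocal) linear fit in plain Python. This is a swapped-in textbook technique chosen so the example needs only the standard library; it is not the method of the work described above, and `fit_michaelis_menten` is a hypothetical name. The data are synthetic and noise-free.

```python
def fit_michaelis_menten(substrate: list[float], rates: list[float]) -> tuple[float, float]:
    """Estimate (Vmax, Km) from a Lineweaver-Burk (double-reciprocal) linear fit.

    Model: v = Vmax * S / (Km + S), so 1/v = (Km / Vmax) * (1/S) + 1/Vmax.
    """
    if len(substrate) != len(rates) or len(substrate) < 2:
        raise ValueError("need at least two (S, v) pairs of equal length")
    xs = [1.0 / s for s in substrate]  # 1/S
    ys = [1.0 / v for v in rates]      # 1/v
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0:
        raise ValueError("substrate concentrations must not all be equal")
    slope = sxy / sxx                    # ordinary least squares slope = Km / Vmax
    intercept = mean_y - slope * mean_x  # intercept = 1 / Vmax
    if intercept <= 0:
        raise ValueError("non-positive intercept; data do not fit the model")
    vmax = 1.0 / intercept
    return vmax, slope * vmax


# Synthetic, noise-free data generated with Vmax = 10 and Km = 2.
S = [0.5, 1.0, 2.0, 4.0, 8.0, 16.0]
v = [10 * s / (2 + s) for s in S]
vmax, km = fit_michaelis_menten(S, v)
print(f"Vmax ~ {vmax:.3f}, Km ~ {km:.3f}")  # Vmax ~ 10.000, Km ~ 2.000
```

The double-reciprocal transform amplifies measurement error at low substrate concentrations, so for real, noisy data a nonlinear least-squares fit of the original equation (for example with `scipy.optimize.curve_fit`) is generally the better choice.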
