Expectation Maximization Explained

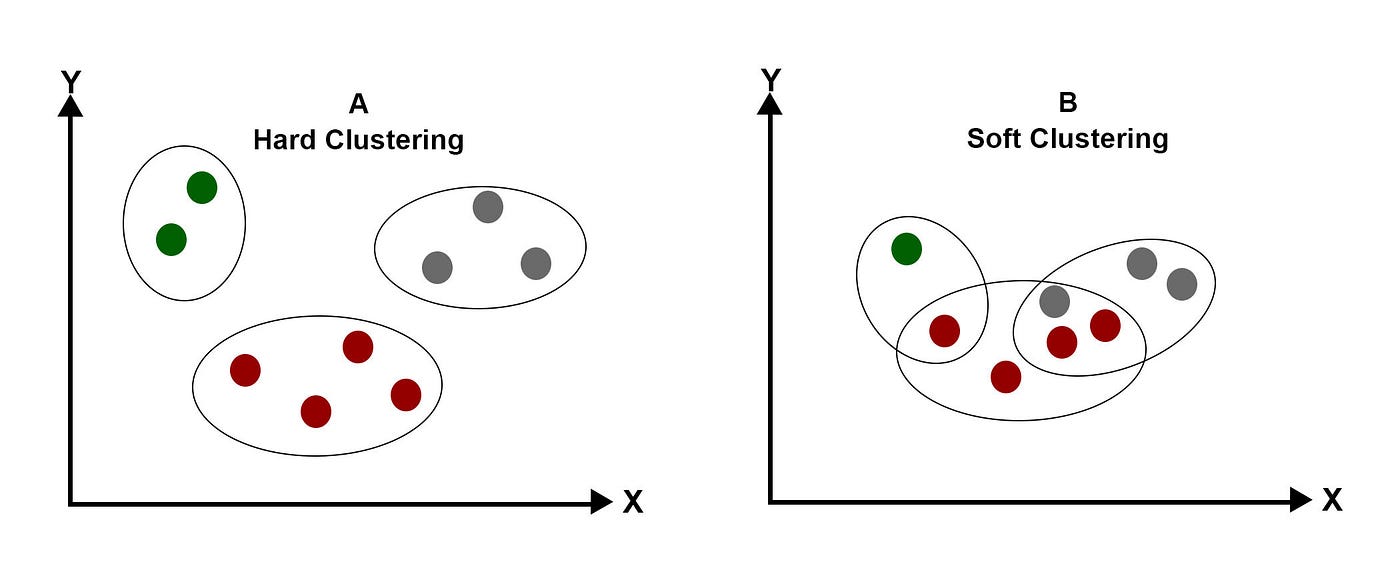

The expectation maximization (EM) algorithm is an iterative optimization technique used to estimate unknown parameters in probabilistic models, particularly when the data is incomplete, noisy, or contains hidden (latent) variables. In statistics, an expectation–maximization (EM) algorithm is an iterative method for finding (local) maximum likelihood or maximum a posteriori (MAP) estimates of parameters in statistical models that depend on unobserved latent variables [1].
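The definition above can be written compactly. The notation here is an assumption made for illustration and does not come from the source: $X$ is the observed data, $Z$ the latent variables, $\theta$ the parameters, and $\theta^{(t)}$ the estimate after iteration $t$. In the standard formulation, each iteration consists of:

$$
\begin{aligned}
\text{E step:}\quad & Q\bigl(\theta \mid \theta^{(t)}\bigr) = \mathbb{E}_{Z \sim p(Z \mid X,\, \theta^{(t)})}\bigl[\log p(X, Z \mid \theta)\bigr] \\
\text{M step:}\quad & \theta^{(t+1)} = \arg\max_{\theta}\; Q\bigl(\theta \mid \theta^{(t)}\bigr)
\end{aligned}
$$

For a MAP estimate, the M step maximizes $Q(\theta \mid \theta^{(t)}) + \log p(\theta)$ instead. Each iteration never decreases the observed-data log-likelihood, $\log p(X \mid \theta^{(t+1)}) \ge \log p(X \mid \theta^{(t)})$, which is why the method reaches a local rather than a guaranteed global optimum.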

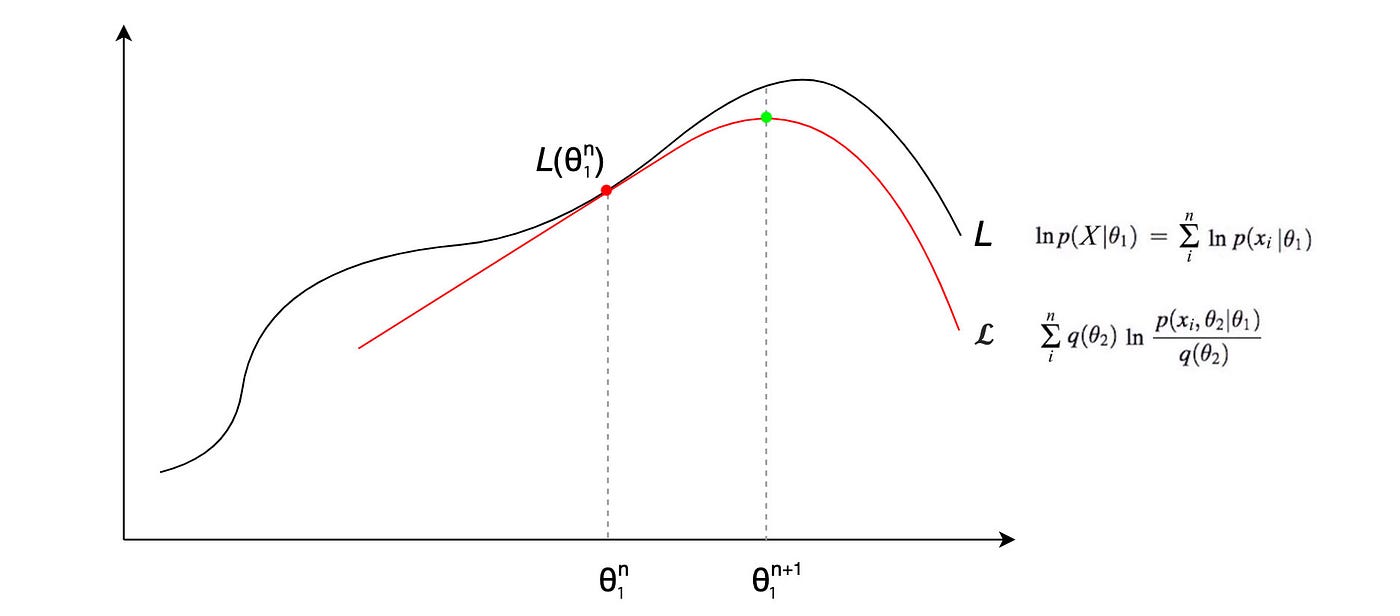

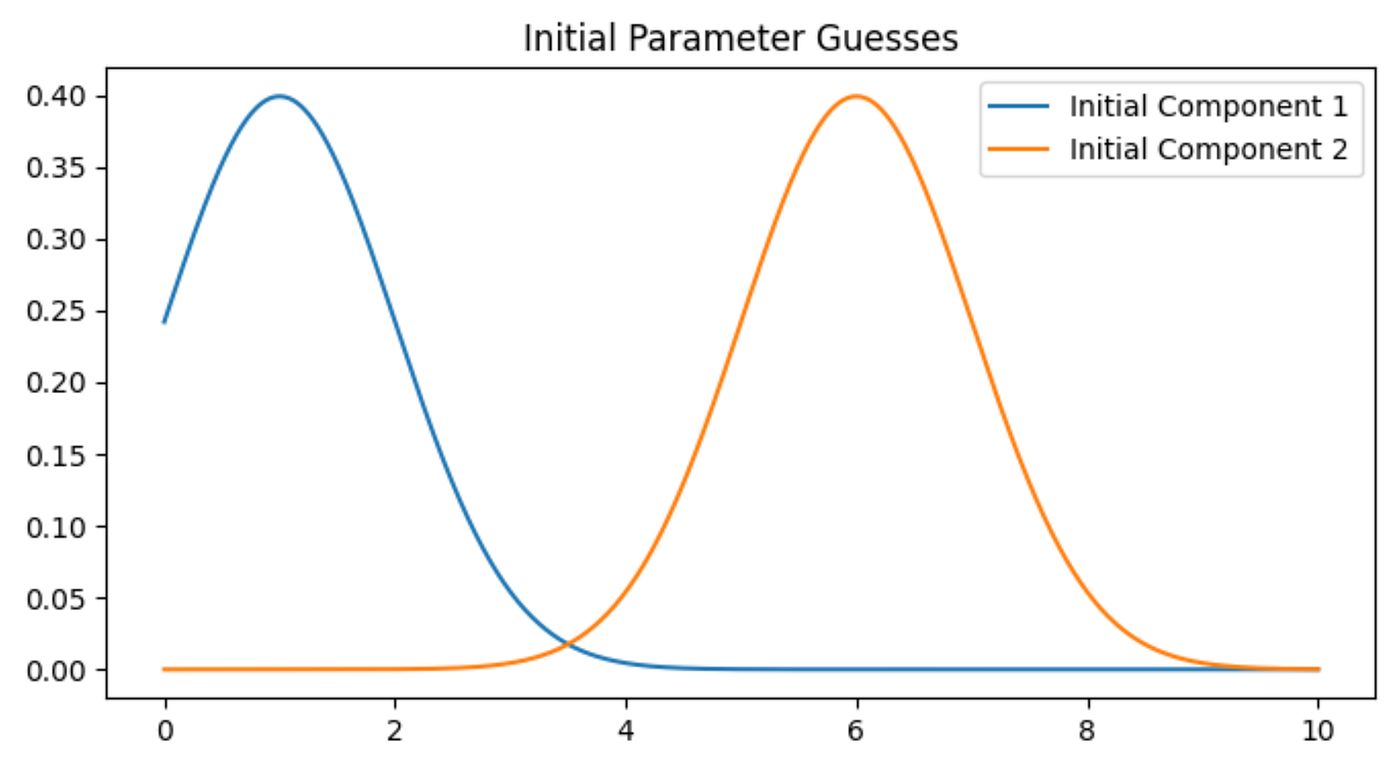

Let's talk about the expectation maximization algorithm (EM, for short). If you are in the data science "bubble", you've probably come across EM at some point and wondered: what is EM, and do I need to know it?

The expectation maximization algorithm, formalized in a seminal 1977 paper by Arthur Dempster, Nan Laird, and Donald Rubin, is an elegant iterative optimization technique designed to sidestep the often intractable marginalization over latent variables.

Now we begin the E-M cycle with the E step (expectation): calculating the "soft" contributions. The goal is to calculate, for each round of data, how much "credit" or "responsibility" to assign to coin A vs. coin B. This creates a fractional, weighted dataset that we can work with. Take round 1 (5 heads, 5 tails) as the example.
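The E step for round 1 can be sketched in a few lines of Python. The source does not give the current parameter guesses, so the starting biases `theta_a = 0.6` and `theta_b = 0.5` are assumptions chosen for illustration, as is the equal prior probability of picking either coin. The function name `e_step_round` is hypothetical.

```python
from math import comb


def e_step_round(heads, tails, theta_a, theta_b):
    """Return the responsibilities (coin A, coin B) for one round of flips.

    Assumes both coins are equally likely to have been picked a priori.
    """
    n = heads + tails
    # Binomial likelihood of the observed round under each coin's current bias.
    like_a = comb(n, heads) * theta_a**heads * (1 - theta_a) ** tails
    like_b = comb(n, heads) * theta_b**heads * (1 - theta_b) ** tails
    # Normalize so the two responsibilities sum to 1.
    resp_a = like_a / (like_a + like_b)
    return resp_a, 1.0 - resp_a


# Assumed current guesses for the two biases (not given in the text).
theta_a, theta_b = 0.6, 0.5

# Round 1 from the text: 5 heads, 5 tails.
heads, tails = 5, 5
resp_a, resp_b = e_step_round(heads, tails, theta_a, theta_b)

# Fractional ("soft") head and tail counts credited to each coin.
weighted_a = (resp_a * heads, resp_a * tails)
weighted_b = (resp_b * heads, resp_b * tails)

print(f"responsibility A = {resp_a:.3f}, B = {resp_b:.3f}")
print(f"weighted counts A = {weighted_a}, B = {weighted_b}")
```

Under these assumed starting values, coin A receives a little under half of the credit for round 1, because a 5/5 split is slightly more plausible under a fair coin than under a coin biased toward heads.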

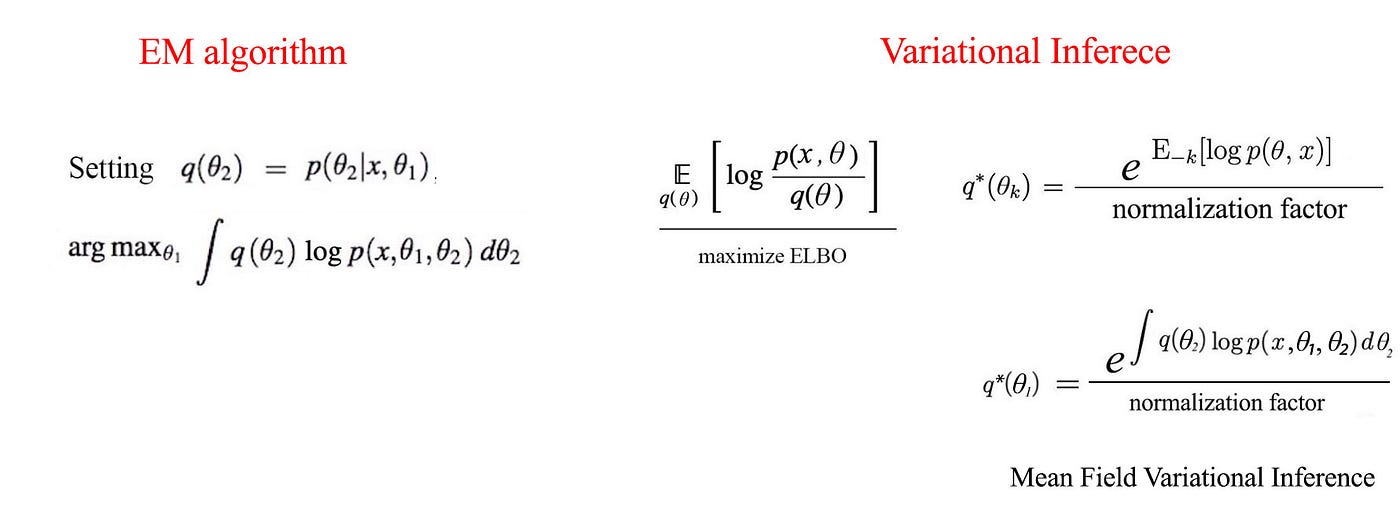

In this tutorial, we're going to explore expectation maximization (EM), a very popular technique for estimating the parameters of probabilistic models and the workhorse behind parameter estimation in popular models such as hidden Markov models, Gaussian mixtures, Kalman filters, and others. Discover how the expectation maximization algorithm works and how it is applied, and learn about its convergence properties.

The expectation maximization algorithm is an approach for performing maximum likelihood estimation in the presence of latent variables. It does this by first estimating the values of the latent variables, then optimizing the model, and then repeating these two steps until convergence. EM is a refinement on this basic idea: rather than picking the single most likely completion of the missing coin assignments on each iteration, it computes probabilities for each possible completion of the missing data.

You've now walked through the expectation maximization algorithm from its conceptual roots to its practical application in Gaussian mixture models, and even explored several powerful variants.
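The loop described above (estimate the latent variables, optimize the model, repeat until convergence) can be sketched end to end for the two-coin example. This is a minimal sketch under stated assumptions, not a definitive implementation: only the first round (5 heads, 5 tails) comes from the text, while the remaining rounds, the starting biases, the equal prior on the coins, and the stopping tolerance are all illustrative choices. The function name `em_two_coins` is hypothetical.

```python
def em_two_coins(rounds, theta_a, theta_b, max_iter=100, tol=1e-6):
    """Estimate the biases of two coins when the coin used per round is hidden.

    rounds: list of (heads, tails) tuples, one per round.
    Assumes both coins are equally likely to be picked for any round.
    """
    for _ in range(max_iter):
        heads_a = tails_a = heads_b = tails_b = 0.0

        # E step: soft-assign each round to the coins via responsibilities.
        for heads, tails in rounds:
            # The binomial coefficient is the same for both coins and cancels.
            like_a = theta_a**heads * (1 - theta_a) ** tails
            like_b = theta_b**heads * (1 - theta_b) ** tails
            resp_a = like_a / (like_a + like_b)
            resp_b = 1.0 - resp_a
            heads_a += resp_a * heads
            tails_a += resp_a * tails
            heads_b += resp_b * heads
            tails_b += resp_b * tails

        # M step: maximum likelihood biases from the weighted counts.
        new_a = heads_a / (heads_a + tails_a)
        new_b = heads_b / (heads_b + tails_b)

        # Stop once the parameters no longer move appreciably.
        converged = max(abs(new_a - theta_a), abs(new_b - theta_b)) < tol
        theta_a, theta_b = new_a, new_b
        if converged:
            break

    return theta_a, theta_b


# Round 1 is from the text; the other four rounds are assumed for illustration.
rounds = [(5, 5), (9, 1), (8, 2), (4, 6), (7, 3)]

# Assumed starting guesses for the two biases.
est_a, est_b = em_two_coins(rounds, theta_a=0.6, theta_b=0.5)
print(f"estimated bias A = {est_a:.3f}, B = {est_b:.3f}")
```

Replacing `resp_a` with a hard 0 or 1 (whichever coin is more likely) would give the "single most likely completion" variant that EM refines. Because EM only finds a local optimum, different starting guesses can lead to different answers, and swapping the two starting values simply swaps the roles of the coins.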

