Expectation Maximization (EM) Algorithm

Figure: expectation maximization (EM) algorithm, from the publication "A Hybrid IDS for Detection and Mitigation of Sinkhole Attack in 6LoWPAN Networks".

Jensen's inequality: the EM algorithm is derived from Jensen's inequality, so we review it here. For a concave function $g$ (such as the logarithm) and a random variable $X$, $E[g(X)] \le g(E[X])$; the inequality reverses when $g$ is convex.
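Jensen's inequality can be checked numerically. The sketch below is only an illustration: the gamma distribution and sample size are arbitrary assumptions, and $g = \log$ is chosen because it is the concave function that appears in EM derivations.

```python
import numpy as np

rng = np.random.default_rng(0)

# Positive samples so that log is defined; the gamma distribution is an arbitrary choice.
x = rng.gamma(shape=2.0, scale=1.5, size=10_000)

lhs = np.mean(np.log(x))  # E[g(X)] with g = log (concave)
rhs = np.log(np.mean(x))  # g(E[X])

# For a concave g, E[g(X)] <= g(E[X]); this holds exactly for the empirical distribution.
jensen_holds = lhs <= rhs
print(f"E[log X] = {lhs:.4f}, log E[X] = {rhs:.4f}, holds: {jensen_holds}")
```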

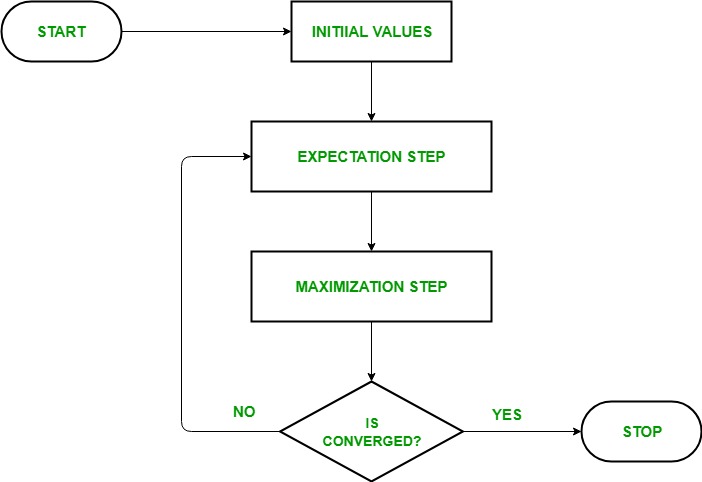

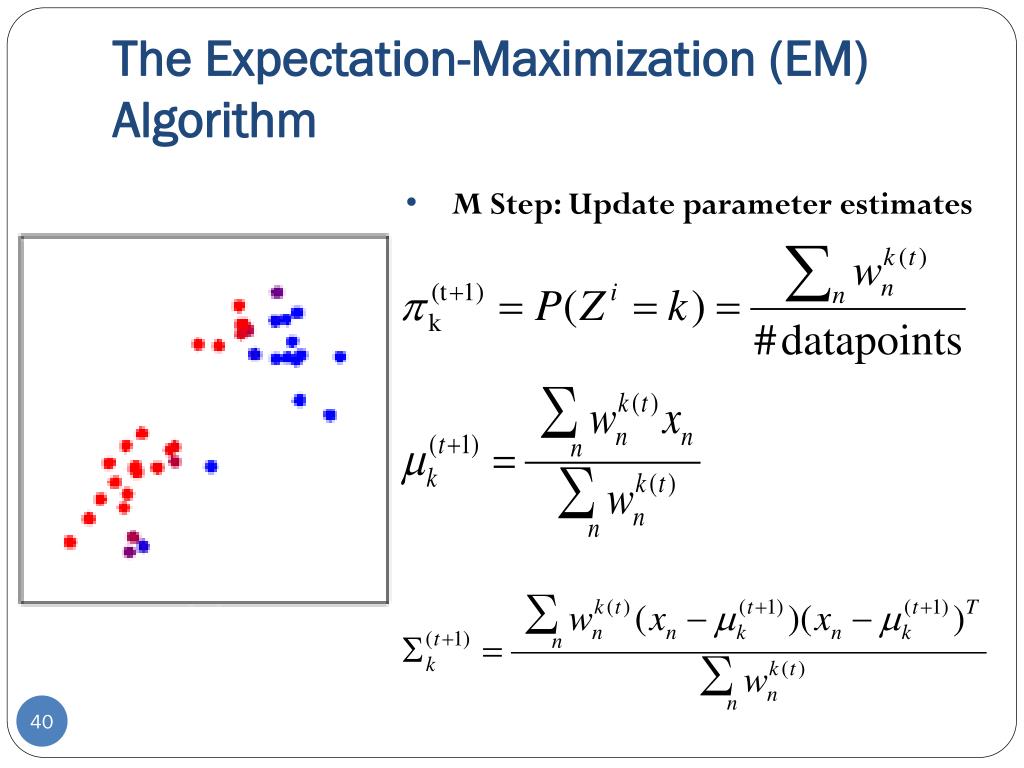

Once we have introduced the missing data, we can execute the EM algorithm. Starting from an initial estimate of $\theta$, $\hat{\theta}^{(0)}$, the EM algorithm iterates between the E step and the M step.

The likelihood, $p(y \mid \theta)$, is the probability of the visible variables given the parameters. The goal of the EM algorithm is to find parameters which maximize the likelihood. The EM algorithm is iterative and in general converges only to a local maximum of the likelihood. Throughout, $q(z)$ will be used to denote an arbitrary distribution of the latent variables, $z$.

The expectation maximization algorithm is an iterative method for finding the maximum likelihood estimate for a latent variable model. It consists of iterating between two steps ("expectation step" and "maximization step", or "E step" and "M step" for short) until convergence.

The expectation maximization algorithm refines the basic idea of completing the missing data and then re-estimating the parameters: rather than picking the single most likely completion of the missing coin assignments on each iteration, it computes probabilities for each possible completion of the missing data.
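The coin setting mentioned above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method of any particular source: the function name `em_two_coins`, the equal prior over the two coins, the starting values, and the head counts are all hypothetical choices made for the example.

```python
import numpy as np

def em_two_coins(heads, n_flips, theta_a, theta_b, n_iter=50, tol=1e-8):
    """EM for two coins with unknown biases; which coin produced each trial is latent.

    Assumes (for illustration) that each trial uses coin A or B with prior 0.5.
    """
    heads = np.asarray(heads, dtype=float)
    tails = n_flips - heads
    log_liks = []
    for _ in range(n_iter):
        # E step: posterior probability that each trial came from coin A.
        # The binomial coefficient is identical for both coins and cancels.
        lik_a = theta_a**heads * (1 - theta_a)**tails
        lik_b = theta_b**heads * (1 - theta_b)**tails
        resp_a = lik_a / (lik_a + lik_b)
        resp_b = 1.0 - resp_a
        # Observed-data log likelihood (up to a constant) at the current parameters.
        log_liks.append(np.sum(np.log(0.5 * lik_a + 0.5 * lik_b)))
        # M step: responsibility-weighted fraction of heads for each coin.
        new_a = np.sum(resp_a * heads) / np.sum(resp_a * n_flips)
        new_b = np.sum(resp_b * heads) / np.sum(resp_b * n_flips)
        converged = abs(new_a - theta_a) + abs(new_b - theta_b) < tol
        theta_a, theta_b = new_a, new_b
        if converged:
            break
    return theta_a, theta_b, log_liks

# Illustrative data: number of heads in five trials of 10 flips each.
heads = [5, 9, 8, 4, 7]
theta_a, theta_b, log_liks = em_two_coins(heads, n_flips=10, theta_a=0.6, theta_b=0.5)
print(f"theta_a = {theta_a:.3f}, theta_b = {theta_b:.3f}")
```

Recording the log likelihood at each iteration makes the monotone improvement visible; the result still depends on the starting values, since EM only finds a local maximum.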

In this section, we introduce an algorithm, called the expectation maximization (EM) algorithm, that is widely used to compute maximum likelihood estimates when some elements of the data set are missing, unobservable or incomplete. We simply assume that the latent data is missing and proceed to apply the EM algorithm. The EM algorithm has many applications throughout statistics. It is often used, for example, in machine learning and data mining applications, and in Bayesian statistics.

The EM algorithm can fail due to singularity of the log likelihood function. For example, when learning a Gaussian mixture model (GMM) with 10 components, the algorithm may decide that the most likely solution is for one of the Gaussians to have only one data point assigned to it. The variance of that component then shrinks toward zero and the likelihood grows without bound.

This repo implements and visualizes the expectation maximization algorithm for fitting Gaussian mixture models. We aim to visualize the different steps in the EM algorithm.
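A minimal sketch of EM for a one-dimensional Gaussian mixture follows. It is not the code of the repo mentioned above. The function name `em_gmm_1d`, the initialization, and the `var_floor` parameter are assumptions made for this example; flooring the variance is one common guard against the singularity described above, not the only one.

```python
import numpy as np

def em_gmm_1d(x, k, n_iter=200, var_floor=1e-3, seed=0):
    """Fit a 1-D Gaussian mixture with EM (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    # Hypothetical initialization: random data points as means, shared variance.
    means = rng.choice(x, size=k, replace=False)
    variances = np.full(k, max(np.var(x), var_floor))
    weights = np.full(k, 1.0 / k)
    for _ in range(n_iter):
        # E step: responsibilities, computed in log space for numerical stability.
        log_p = (np.log(weights)
                 - 0.5 * np.log(2 * np.pi * variances)
                 - 0.5 * (x[:, None] - means) ** 2 / variances)
        log_p -= log_p.max(axis=1, keepdims=True)
        resp = np.exp(log_p)
        resp /= resp.sum(axis=1, keepdims=True)
        # M step: responsibility-weighted updates of weights, means, variances.
        nk = resp.sum(axis=0) + 1e-12  # small constant avoids division by zero
        weights = nk / nk.sum()
        means = (resp * x[:, None]).sum(axis=0) / nk
        variances = (resp * (x[:, None] - means) ** 2).sum(axis=0) / nk
        # Assumed guard: keep a component from collapsing onto a single point.
        variances = np.maximum(variances, var_floor)
    return weights, means, variances

# Synthetic data from two well-separated Gaussians (illustrative only).
rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(-2.0, 0.5, 200), rng.normal(3.0, 1.0, 300)])
weights, means, variances = em_gmm_1d(x, k=2)
print(weights, means, variances)
```

Without the floor, a component that captures a single point drives its variance to zero and the log likelihood to infinity. Library implementations typically handle this with a similar regularization term added to the covariance.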

