Expectation Maximization (EM) Algorithm And Application To Belief

In this post, we will go over the expectation maximization (EM) algorithm in the context of performing maximum likelihood estimation (MLE) on a Bayesian belief network, work through the mathematics behind it, and draw analogies with MLE for probability distributions. The EM algorithm (and its faster variant, ordered subset expectation maximization) is also widely used in medical image reconstruction, especially in positron emission tomography, single-photon emission computed tomography, and X-ray computed tomography.
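To make the belief network setting concrete, here is a minimal sketch under assumptions that are not from the post itself: a network with one hidden binary root `Z` and observed binary children `X_1, ..., X_d`, each depending only on `Z`. The function name `em_hidden_root` and the parameter names `prior` and `theta` are made up for this example. The M step mirrors ordinary MLE by counting, except that the counts are expected counts weighted by the posterior over `Z`.

```python
import numpy as np

def em_hidden_root(X, n_iter=200, seed=0):
    """EM for a toy network Z -> X_1..X_d with hidden binary Z (illustrative only)."""
    X = np.asarray(X, dtype=float)
    n, d = X.shape
    rng = np.random.default_rng(seed)
    prior = np.array([0.5, 0.5])                 # prior[k] = p(Z = k)
    theta = rng.uniform(0.3, 0.7, size=(2, d))   # theta[k, j] = p(X_j = 1 | Z = k)
    log_liks = []
    for _ in range(n_iter):
        # E step: posterior p(Z = k | x_i) for every row, computed in log space.
        log_joint = (np.log(prior)
                     + X @ np.log(theta).T
                     + (1 - X) @ np.log(1 - theta).T)        # shape (n, 2)
        m = log_joint.max(axis=1, keepdims=True)
        log_evidence = m + np.log(np.exp(log_joint - m).sum(axis=1, keepdims=True))
        post = np.exp(log_joint - log_evidence)
        log_liks.append(float(log_evidence.sum()))
        # M step: re-estimate the tables from expected counts.
        nk = post.sum(axis=0)
        prior = nk / n
        theta = (post.T @ X) / nk[:, None]
        # Assumed safeguard: keep probabilities away from 0 and 1 so logs stay finite.
        theta = np.clip(theta, 1e-6, 1 - 1e-6)
    return prior, theta, log_liks
```

If `Z` were observed, the M step would reduce to plain frequency counts; the posterior weights in `post` are what stand in for the missing values. The recorded `log_liks` can be inspected to check that the log-likelihood does not decrease between iterations.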

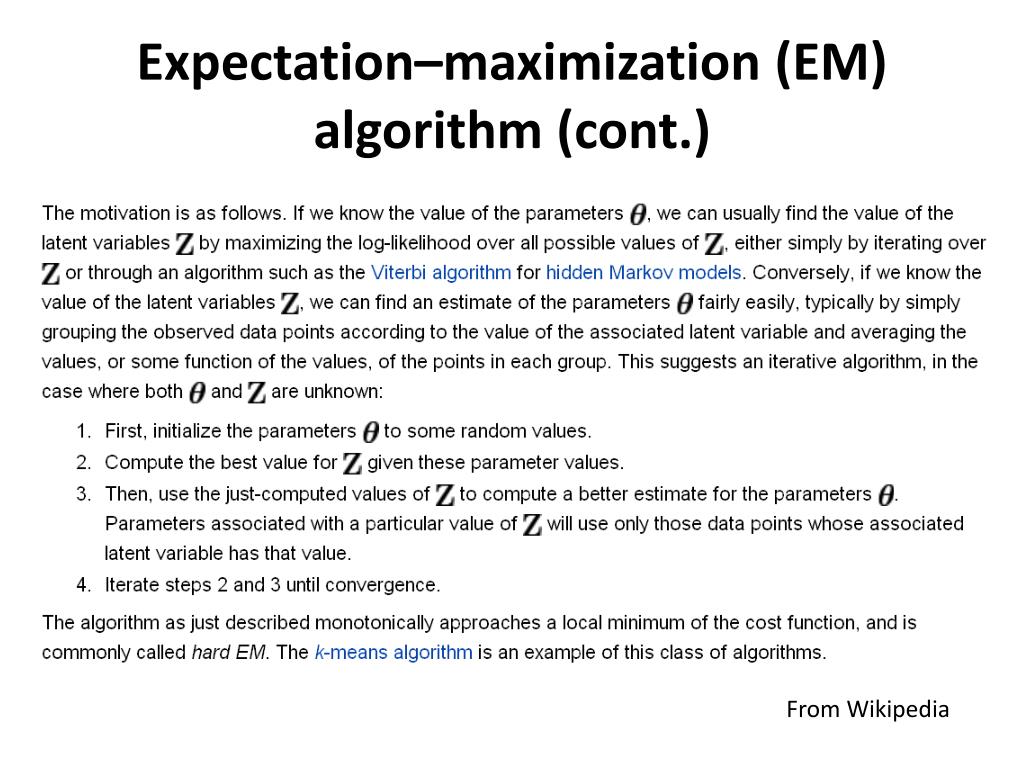

The expectation maximization (EM) algorithm is an iterative optimization technique for estimating unknown parameters in probabilistic models, particularly when the data is incomplete, noisy, or contains hidden (latent) variables. It is used to find maximum likelihood estimates in such models, and its applications in machine learning include clustering, missing data problems, and Gaussian mixture models.

The likelihood, $p(y \mid \theta)$, is the probability of the visible variables $y$ given the parameters $\theta$. The goal of the EM algorithm is to find parameters that maximize the likelihood. The EM algorithm is iterative and converges to a local maximum, which need not be the global one. Throughout, $q(z)$ will be used to denote an arbitrary distribution of the latent variables, $z$.
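Gaussian mixture models are a common place to see these ideas in code. The following is a minimal sketch, assuming one-dimensional data and exactly two components; the function name `em_gmm_1d`, the initialisation at the data extremes, and the variance floor are choices made for this illustration rather than anything prescribed by the algorithm.

```python
import numpy as np

def em_gmm_1d(x, n_iter=200, tol=1e-8):
    """Fit a two-component 1D Gaussian mixture with EM (illustrative sketch)."""
    x = np.asarray(x, dtype=float)
    # Assumed initialisation: equal weights, means at the data extremes.
    pi = np.array([0.5, 0.5])
    mu = np.array([x.min(), x.max()])
    var = np.array([x.var(), x.var()])
    log_liks = []
    for _ in range(n_iter):
        # E step: responsibilities r[i, k] = p(z_i = k | x_i, current parameters).
        dens = np.exp(-0.5 * (x[:, None] - mu) ** 2 / var) / np.sqrt(2 * np.pi * var)
        weighted = pi * dens
        total = weighted.sum(axis=1, keepdims=True)
        r = weighted / total
        log_liks.append(float(np.log(total).sum()))
        # M step: weighted maximum likelihood updates.
        nk = r.sum(axis=0)
        pi = nk / len(x)
        mu = (r * x[:, None]).sum(axis=0) / nk
        var = (r * (x[:, None] - mu) ** 2).sum(axis=0) / nk
        var = np.maximum(var, 1e-6)  # assumed floor to avoid a collapsing component
        # Stop once the log-likelihood has stopped improving.
        if len(log_liks) > 1 and log_liks[-1] - log_liks[-2] < tol:
            break
    return pi, mu, var, log_liks

# Example usage on synthetic data drawn from two Gaussians.
rng = np.random.default_rng(0)
x = np.concatenate([rng.normal(-2.0, 0.5, 300), rng.normal(3.0, 1.0, 200)])
pi, mu, var, log_liks = em_gmm_1d(x)
print("weights:", pi, "means:", mu, "variances:", var)
```

Because EM only reaches a local maximum, a different initialisation can give a different answer; running from several starting points and keeping the run with the highest final log-likelihood is a common workaround.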

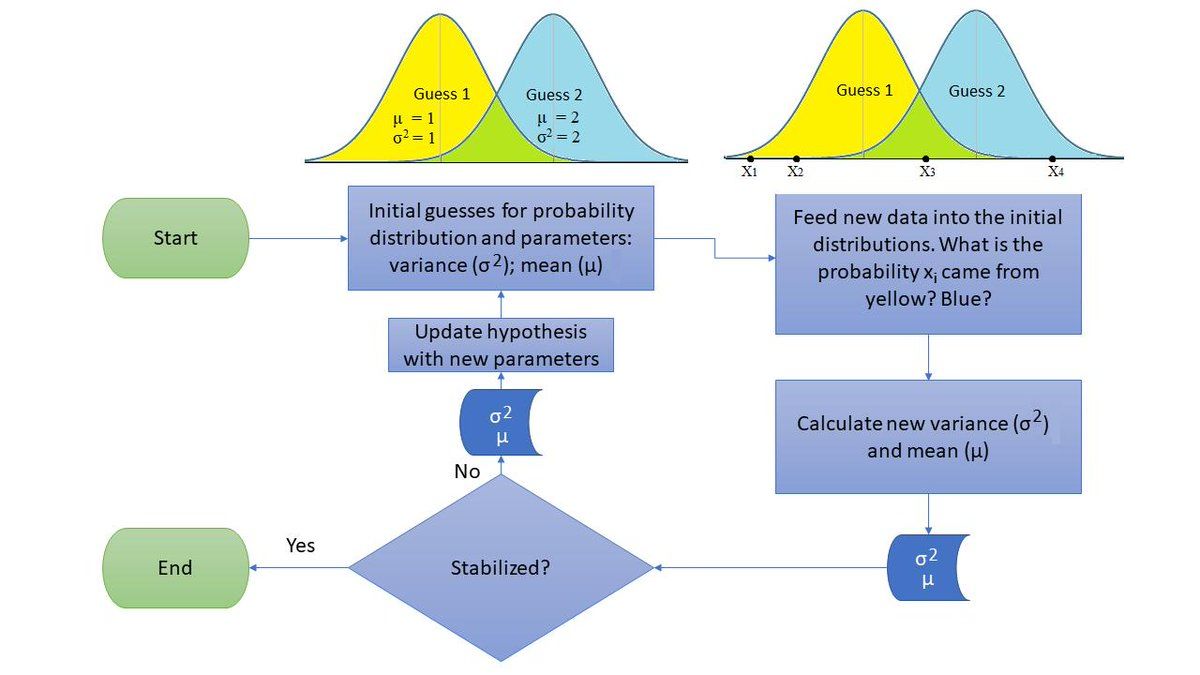

The EM algorithm is an elegant algorithmic tool for maximizing the likelihood function in problems with latent variables. We will state the problem in a general formulation and then apply it to different tasks, including regression. We also discuss the integration of the EM algorithm for maximum likelihood learning of Bayesian networks with belief propagation algorithms for approximate inference.

The EM algorithm is derived from Jensen's inequality, so we review it here: for a convex function $g$ and a random variable $X$, $E[g(X)] \ge g(E[X])$. The algorithm consists of iterating between two steps (the "expectation step" and the "maximization step", or "E step" and "M step" for short) until convergence. Both steps involve maximizing a lower bound on the log-likelihood.
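The lower bound mentioned above can be written out explicitly. The derivation below is a sketch that assumes discrete latent variables $z$, writes the parameters as $\theta$, and introduces the name $\mathcal{F}(q, \theta)$ for the bound; none of these symbols is fixed by the text. Since $\log$ is concave, Jensen's inequality applies in the direction $\log E[X] \ge E[\log X]$.

```latex
\begin{align*}
\log p(y \mid \theta)
  &= \log \sum_{z} q(z)\, \frac{p(y, z \mid \theta)}{q(z)} \\
  &\ge \sum_{z} q(z) \log \frac{p(y, z \mid \theta)}{q(z)}
  \;=:\; \mathcal{F}(q, \theta), \\
\log p(y \mid \theta) - \mathcal{F}(q, \theta)
  &= \mathrm{KL}\bigl(q(z) \,\|\, p(z \mid y, \theta)\bigr) \;\ge\; 0.
\end{align*}
```

Read this way, the E step maximizes $\mathcal{F}$ over $q$ with $\theta$ fixed, which is achieved by $q(z) = p(z \mid y, \theta)$ and makes the bound tight, while the M step maximizes $\mathcal{F}$ over $\theta$ with $q$ fixed. Each iteration therefore cannot decrease $\log p(y \mid \theta)$.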
