Expectation Maximization Algorithm In Machine Learning

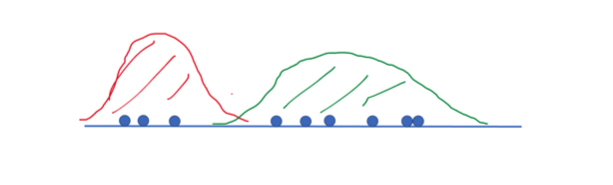

The expectation-maximization (EM) algorithm is an iterative optimization technique for estimating unknown parameters in probabilistic models, particularly when the data are incomplete, noisy, or contain hidden (latent) variables. In statistics, it is used to find (local) maximum likelihood or maximum a posteriori (MAP) estimates of parameters in models that depend on unobserved latent variables [1].
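The definition above can be written out in symbols. The notation here is an assumption for illustration and does not come from the source: $X$ is the observed data, $Z$ the latent variables, $\theta$ the parameters, and $\theta^{(t)}$ the estimate at iteration $t$. This is a sketch of the standard maximum likelihood formulation, not of any particular variant.

```latex
% Marginal log-likelihood: the sum over Z inside the log is what makes
% direct maximization hard (the sum becomes an integral for continuous Z).
\log p(X \mid \theta) = \log \sum_{Z} p(X, Z \mid \theta)

% E-step: expected complete-data log-likelihood under the current posterior of Z.
Q\bigl(\theta \mid \theta^{(t)}\bigr)
  = \mathbb{E}_{Z \sim p(Z \mid X, \theta^{(t)})}\bigl[\log p(X, Z \mid \theta)\bigr]

% M-step: choose the parameters that maximize that expectation.
\theta^{(t+1)} = \arg\max_{\theta} \; Q\bigl(\theta \mid \theta^{(t)}\bigr)
```

Each iteration cannot decrease $\log p(X \mid \theta)$, which is why the procedure converges to a local rather than a guaranteed global optimum.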

The EM methodology was first presented in a general form by Arthur Dempster, Nan Laird, and Donald Rubin in a seminal 1977 paper. They defined EM as an iterative estimation algorithm that can derive maximum likelihood (ML) estimates in the presence of missing or hidden data ("incomplete data"), sidestepping the often intractable marginalization over latent variables.

EM performs maximum likelihood estimation in the presence of latent variables by alternating two steps. It first estimates the values of the latent variables given the current parameters (the expectation step, or E-step), then optimizes the model parameters given those estimates (the maximization step, or M-step), and repeats both steps until convergence. The method is widely used in machine learning and statistics, with Gaussian mixture models as a standard application, and it handles missing data as part of parameter estimation.
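The two alternating steps can be sketched for a one-dimensional Gaussian mixture, where the latent variable is which component generated each point. This is a minimal illustration using NumPy only. The function name `em_gmm_1d`, its parameters, the initialization scheme, and the synthetic data are all assumptions made for this example, not something specified by the source.

```python
import numpy as np


def em_gmm_1d(x, n_components=2, n_iter=200, tol=1e-6, seed=0):
    """Fit a 1-D Gaussian mixture with EM (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    n = x.shape[0]

    # Assumed initialization: random data points as means,
    # the overall data variance for every component, equal weights.
    means = rng.choice(x, size=n_components, replace=False)
    variances = np.full(n_components, np.var(x))
    weights = np.full(n_components, 1.0 / n_components)

    log_likelihoods = []
    for _ in range(n_iter):
        # E-step: posterior probability (responsibility) of each
        # component for each point, computed in log space for stability.
        diff = x[:, None] - means[None, :]
        log_pdf = -0.5 * (np.log(2 * np.pi * variances) + diff**2 / variances)
        log_joint = np.log(weights) + log_pdf           # shape (n, k)
        log_norm = np.logaddexp.reduce(log_joint, axis=1)
        resp = np.exp(log_joint - log_norm[:, None])

        # Log-likelihood of the data under the current parameters.
        log_likelihoods.append(log_norm.sum())

        # M-step: re-estimate weights, means, and variances as
        # responsibility-weighted averages.
        nk = resp.sum(axis=0)
        weights = nk / n
        means = (resp * x[:, None]).sum(axis=0) / nk
        diff = x[:, None] - means[None, :]
        variances = (resp * diff**2).sum(axis=0) / nk + 1e-9  # small floor

        # Stop once the log-likelihood has stopped improving.
        if len(log_likelihoods) > 1:
            if log_likelihoods[-1] - log_likelihoods[-2] < tol:
                break

    return weights, means, variances, log_likelihoods


# Synthetic data from two Gaussians (made up for this example).
data_rng = np.random.default_rng(42)
x = np.concatenate([
    data_rng.normal(-2.0, 0.5, 300),
    data_rng.normal(3.0, 1.0, 700),
])

weights, means, variances, lls = em_gmm_1d(x)
print("weights:", weights)
print("means:", means)
print("variances:", variances)
print("iterations:", len(lls))
```

The recorded log-likelihood should not decrease from one iteration to the next, which makes it a convenient convergence check. Because EM finds only a local optimum, results can depend on the initialization, so running it from several seeds and keeping the best log-likelihood is a common precaution.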
