Existential Risk Researcher On Why We're Headed For Collapse And How To Stop It The Goose

60 Minutes Uses Failed Doomsday Biologist To Predict Mass Extinction

Luke Kemp discusses his new book, Goliath's Curse: The History and Future of Societal Collapse. 📖 Get your own copy here: penguinrandomhouse bo.

The risk posed by radioactive particles prompted a quick mobilization among scientists and intellectuals, famously exemplified by the Russell–Einstein Manifesto of 1955, which warned of the possibility of human extinction.

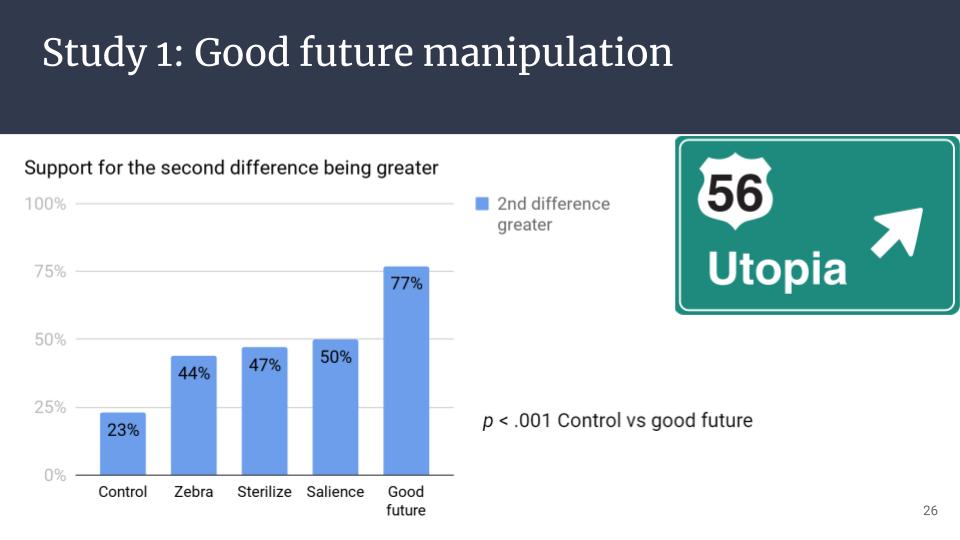

Psychology Of Existential Risk And Long Termism Effective Altruism

In this episode, Nate is joined by existential risk researcher Luke Kemp to explore the intricate history of societal collapse, connecting patterns of dominance hierarchies, resource control, and inequality that create the societies he calls Goliaths.

The breadth of perspectives presented reflects the belief that x-risk studies should not only focus on preventing human extinction but also foster a broader dialogue on what makes human existence meaningful and how to navigate risks while maintaining an open future.

These essays pair insights from decades of research and activism around global risk with the latest academic findings from the emerging field of existential risk studies.

This paper develops the outline of an accumulative perspective on AI x-risk by examining how multiple types of AI-induced risks could compound and cascade over time to gradually bring about an AI-generated existential catastrophe.

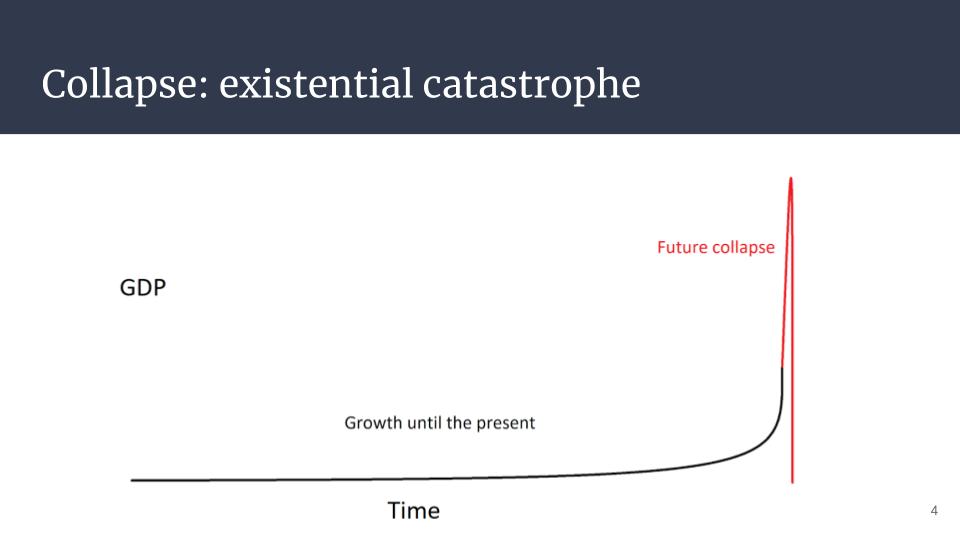

New research suggests a fifth of global ecosystems could collapse before 2100, and a recent Intergovernmental Panel on Climate Change report warns of “a rapidly closing window of opportunity” for action, with the impact of decisions made this decade reverberating “for thousands of years”.

Let me try to list where I think we stand in terms of “existential risks,” that is, civilization-destroying events. I classified them into three categories of risk: low, medium, and high, at least in my opinion.

Our work is focused on preventing events that could result in hundreds of millions of deaths or permanently curtail civilization’s long-term potential. A 10-week, independent research training program for undergraduate and graduate students, focused on topics in existential risk.

Toby Ord, senior researcher at Oxford University’s AI Governance Initiative and author of The Precipice, argues that the odds of a civilization-ending catastrophe this century are roughly one in six.
