Executable Code Actions Elicit Better LLM Agents

The encouraging performance of CodeAct motivated its authors to build an open-source LLM agent that interacts with environments by executing interpretable code and collaborates with users in natural language. Integrated with a Python interpreter, CodeAct can execute code actions and, over multi-turn interactions, dynamically revise prior actions or emit new ones in response to new observations (e.g., code execution results).

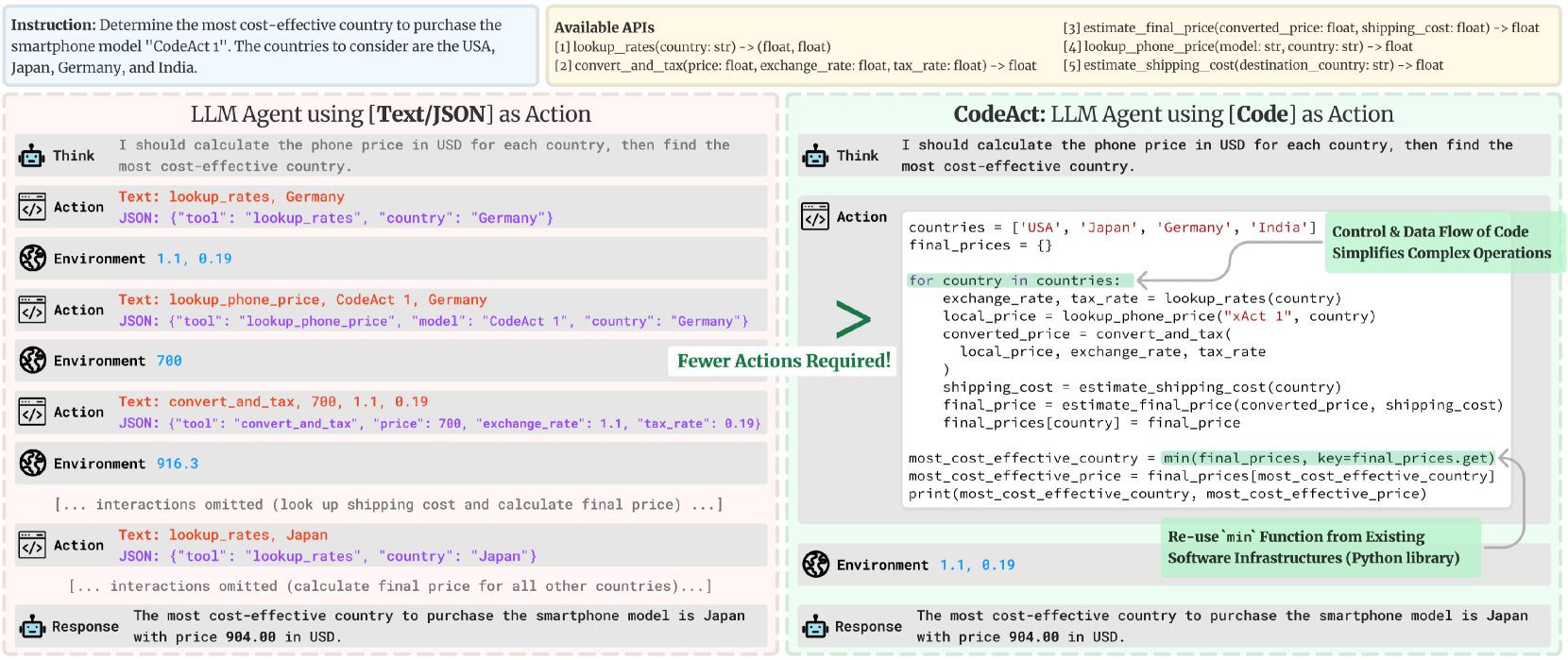

This work proposes using executable Python code to consolidate LLM agents' actions into a unified action space (CodeAct). An extensive analysis of 17 LLMs on API-Bank and a newly curated benchmark shows that CodeAct outperforms widely used alternatives, with up to a 20% higher success rate. Because actions are ordinary Python, the agent gains flexibility on real-world tasks: it can revise an earlier action or issue a new one after each observation.
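The act, observe, revise cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: `run_action`, `run_episode`, and `scripted_policy` are hypothetical names, the policy is a hard-coded stand-in for an LLM, and `exec` runs without any sandboxing, which a real agent would need.

```python
import contextlib
import io
import traceback


def run_action(code: str, namespace: dict) -> str:
    """Execute one code action and return the observation (stdout or error text)."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)  # assumption: no sandboxing in this sketch
    except Exception:
        # Return the final traceback line so the agent can see what went wrong.
        return buffer.getvalue() + traceback.format_exc().strip().splitlines()[-1]
    return buffer.getvalue()


def scripted_policy(history):
    """Hypothetical stand-in for an LLM: picks the next code action from history."""
    if not history:
        # First attempt uses variables that were never defined.
        return "print(total / count)"
    last_observation = history[-1][1]
    if "NameError" in last_observation:
        # Revise the prior action after observing the error.
        return "total, count = 10, 4\nprint(total / count)"
    return None  # the last action succeeded, so stop


def run_episode(policy, max_turns=5):
    """Multi-turn loop: emit an action, execute it, feed the observation back."""
    namespace = {}  # interpreter state persists across turns
    history = []
    for _ in range(max_turns):
        action = policy(history)
        if action is None:
            break
        observation = run_action(action, namespace)
        history.append((action, observation))
    return history


history = run_episode(scripted_policy)
for action, observation in history:
    print(repr(action), "->", repr(observation))
```

The first turn fails with a `NameError`, and the second turn succeeds after the policy revises its action. Keeping a single `namespace` across turns is the design choice that lets later actions build on variables defined by earlier ones.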

TaskWeaver is proposed as a code-first framework for building LLM-powered autonomous agents: it converts user requests into executable code and treats user-defined plugins as callable functions.
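The idea of treating user-defined plugins as callable functions can be illustrated as follows. This is a sketch of the general pattern only and does not use TaskWeaver's actual API: `convert_currency`, `PLUGINS`, and `execute_with_plugins` are hypothetical names, and `generated_code` stands in for code an LLM would produce from a user request.

```python
def convert_currency(amount: float, rate: float) -> float:
    """Hypothetical user-defined plugin: convert an amount at a given rate."""
    return round(amount * rate, 2)


# Registry of plugins exposed to generated code under their function names.
PLUGINS = {"convert_currency": convert_currency}


def execute_with_plugins(code: str, plugins: dict):
    """Run generated code with the plugins available as ordinary functions."""
    namespace = dict(plugins)  # copy so generated code cannot mutate the registry
    exec(code, namespace)      # assumption: no sandboxing in this sketch
    return namespace.get("result")


# Stand-in for code generated from a request such as
# "convert 10 and 20 at a rate of 0.5 and add them up".
generated_code = "result = sum(convert_currency(x, 0.5) for x in [10, 20])"

print(execute_with_plugins(generated_code, PLUGINS))
```

Because plugins are plain functions in the execution namespace, the generated code can compose them with loops, comprehensions, and built-ins rather than calling one tool at a time.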

