Evaluation Methods for LLMs

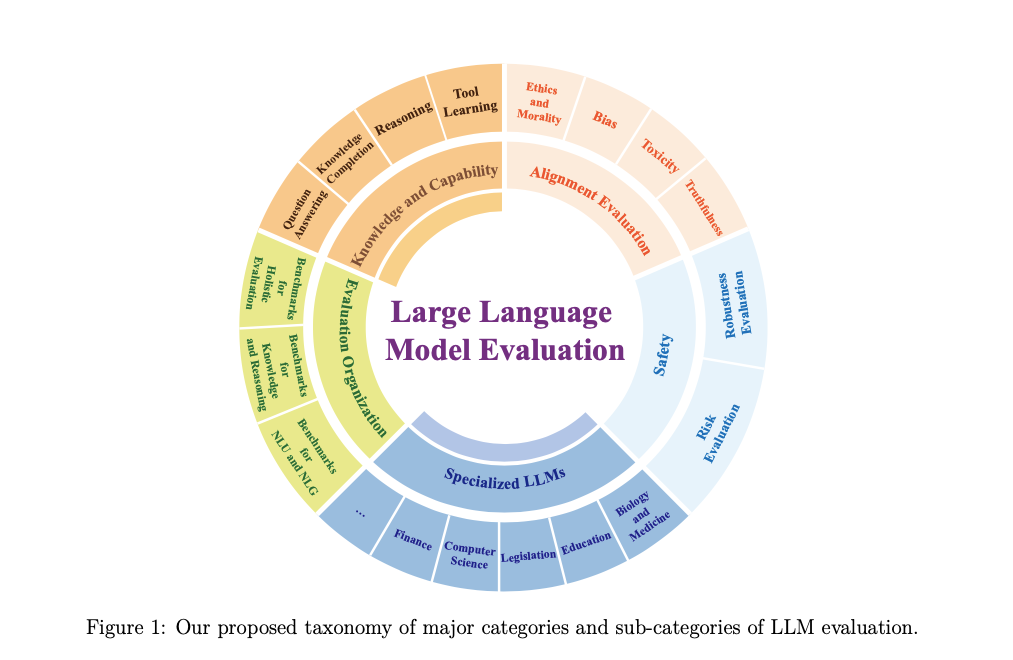

Learn the fundamentals of large language model (LLM) evaluation, including the key metrics and frameworks used to measure model performance, safety, and reliability. Explore practical evaluation techniques, such as automated tools, LLM judges, and human assessments tailored to domain-specific use cases. There are four common ways of evaluating trained LLMs in practice: multiple choice, verifiers, leaderboards, and LLM judges, as shown in Figure 1 below.
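Of the four methods, multiple choice is the simplest to score automatically: extract the answer letter from each model response and compare it with the gold label. The following is a minimal sketch, assuming four options labelled A to D and responses that either consist of a single letter or contain a phrase like "Answer: B". The function names `extract_choice` and `multiple_choice_accuracy` are hypothetical and not taken from any particular benchmark harness.

```python
import re
from typing import List, Optional


def extract_choice(response: str) -> Optional[str]:
    """Return the answer letter (A-D) found in a model response, or None.

    Assumption: the response is either a bare letter such as "B" or "(B)",
    or contains a phrase such as "Answer: B" or "The answer is (B)".
    """
    text = response.strip().upper()
    # Case 1: the whole response is a single letter, optionally wrapped.
    match = re.fullmatch(r"\(?([A-D])\)?\.?", text)
    if match:
        return match.group(1)
    # Case 2: the letter follows the word "answer".
    match = re.search(r"ANSWER\s*(?:IS|:)?\s*\(?([A-D])\b", text)
    return match.group(1) if match else None


def multiple_choice_accuracy(responses: List[str], gold: List[str]) -> float:
    """Fraction of responses whose extracted letter matches the gold letter."""
    if len(responses) != len(gold):
        raise ValueError("responses and gold must have the same length")
    if not gold:
        return 0.0
    correct = sum(extract_choice(r) == g for r, g in zip(responses, gold))
    return correct / len(gold)


if __name__ == "__main__":
    responses = ["B", "The answer is (c).", "Answer: D", "not sure"]
    gold = ["B", "C", "A", "D"]
    print(multiple_choice_accuracy(responses, gold))
```

Responses from which no letter can be extracted count as wrong here; a real harness might instead report them separately, since they often signal a formatting problem rather than a knowledge gap.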

A complete guide to LLM evaluation metrics, benchmarks, and best practices. Learn about BLEU, ROUGE, GLUE, SuperGLUE, and other evaluation frameworks. In this section, we discuss how to incorporate specific methodologies into the design and implementation of evaluation suites to address the real-world challenges inherent in LLM-reliant systems. While this article focuses on the evaluation of LLM systems, it is crucial to discern the difference between assessing a standalone large language model (LLM) and evaluating an LLM-based system. Learn how to evaluate LLMs using key metrics, methodologies, and best practices to make informed decisions.
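To make a reference-based metric such as ROUGE concrete, the sketch below computes a simplified ROUGE-1 score: unigram overlap between a candidate and a reference, reported as precision, recall, and F1. It assumes lowercased whitespace tokenization with no stemming or stopword handling, so its numbers will not exactly match those of a full ROUGE implementation. The function name `rouge1` is illustrative.

```python
from collections import Counter
from typing import Dict


def rouge1(candidate: str, reference: str) -> Dict[str, float]:
    """Simplified ROUGE-1: clipped unigram overlap between two texts.

    Assumption: tokens are produced by lowercasing and splitting on
    whitespace; full ROUGE implementations usually add stemming.
    """
    cand_tokens = candidate.lower().split()
    ref_tokens = reference.lower().split()
    # Counter intersection keeps the minimum count of each shared token,
    # so a repeated word is only credited as often as the reference has it.
    overlap = sum((Counter(cand_tokens) & Counter(ref_tokens)).values())
    precision = overlap / len(cand_tokens) if cand_tokens else 0.0
    recall = overlap / len(ref_tokens) if ref_tokens else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    scores = rouge1("the cat sat on the mat", "the cat lay on the mat")
    print(scores)
```

In the usage example, five of the six candidate tokens also appear in the reference, so precision, recall, and F1 all come out to 5/6. Overlap metrics like this reward surface similarity, which is why they are usually paired with judge-based or human evaluation for open-ended outputs.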

Frameworks for LLM-judge evaluation prompts include reason-then-score (RTS), multiple-choice question scoring (MCQ), head-to-head scoring (H2H), and G-Eval (see the page on evaluating the performance of LLM summarization prompts with G-Eval). This guide covers evaluation metrics for LLMs: what they measure, when to use them, and how to implement them systematically. We'll explore metrics for general LLM outputs, RAG applications, and specialized use cases, with practical implementation examples. It is intended as a comprehensive guide to LLM evaluation methods, designed to help identify the most suitable evaluation techniques for various use cases, promote the adoption of best practices in LLM assessment, and critically assess the effectiveness of these methods. It also offers a complete look into LLM evaluation: the metrics, methods, and workflows used to build safe, effective, and scalable AI applications.
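As an illustration of the reason-then-score idea, the sketch below asks a judge model to explain its reasoning first and then emit a score on a final line, which is parsed with a regular expression. Everything here is an assumption made for illustration: the prompt wording in `RTS_TEMPLATE`, the 1 to 5 scale, and the `judge` parameter, which stands in for whatever function sends a prompt to a judge model and returns its text. No specific vendor API is implied.

```python
import re
from typing import Callable, Optional

# Hypothetical prompt template: reasoning is requested before the score.
RTS_TEMPLATE = (
    "You are grading an answer to a question.\n"
    "Question: {question}\n"
    "Answer: {answer}\n"
    "First explain your reasoning in a few sentences.\n"
    "Then, on the last line, write 'Score: N' where N is an integer from 1 to 5."
)


def parse_score(judge_output: str, low: int = 1, high: int = 5) -> Optional[int]:
    """Extract the integer after 'Score:'; return None if missing or out of range."""
    match = re.search(r"SCORE:\s*(\d+)", judge_output, flags=re.IGNORECASE)
    if not match:
        return None
    score = int(match.group(1))
    return score if low <= score <= high else None


def reason_then_score(
    judge: Callable[[str], str], question: str, answer: str
) -> Optional[int]:
    """Build the RTS prompt, call the judge, and parse the resulting score."""
    prompt = RTS_TEMPLATE.format(question=question, answer=answer)
    return parse_score(judge(prompt))


if __name__ == "__main__":
    # Stub judge used only for demonstration; a real judge would call a model.
    def stub_judge(prompt: str) -> str:
        return "Reasoning: the answer is accurate but terse.\nScore: 4"

    print(reason_then_score(stub_judge, "What is 2 + 2?", "4"))
```

Returning `None` for unparseable or out-of-range output keeps malformed judge responses visible instead of silently coercing them into a score, which makes it easier to audit how often the judge fails to follow the format.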

