Evaluate LLMs Effectively Using DeepEval: A Practical Guide (DataCamp)

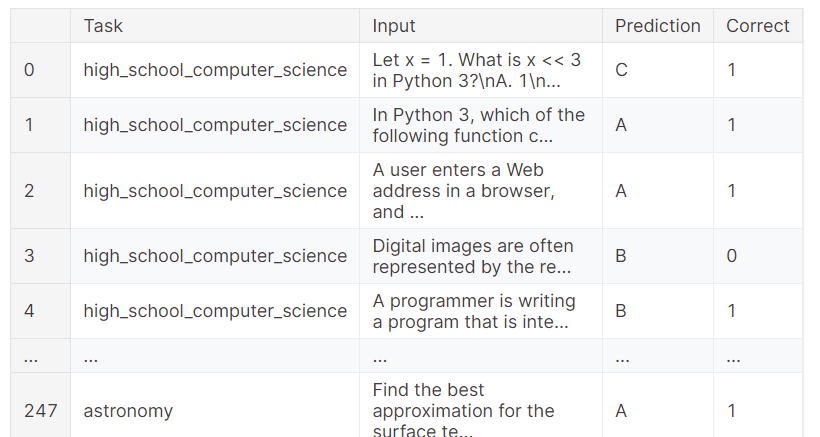

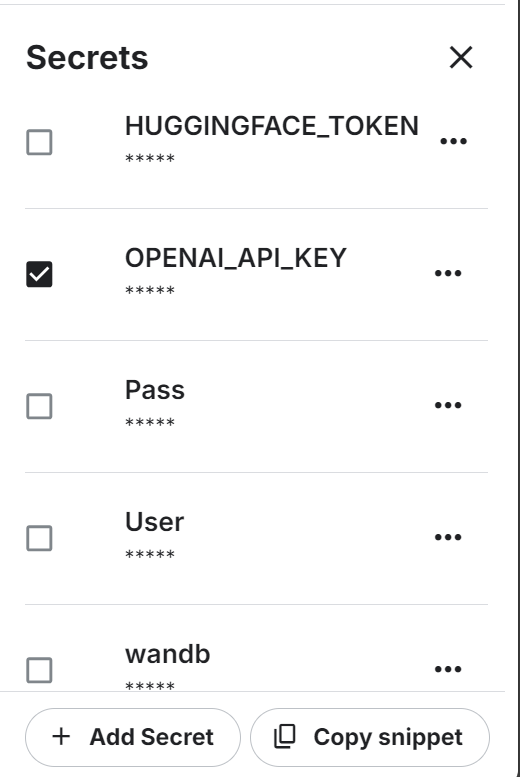

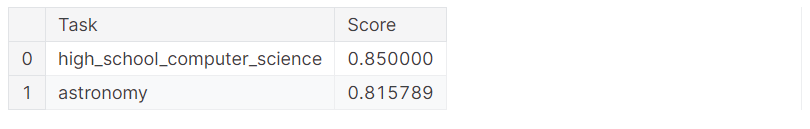

In this tutorial, you will learn how to set up DeepEval and create a relevance test similar to the pytest approach. Then, you will test the LLM outputs using the G-Eval metric and run MMLU benchmarking on the Qwen 2.5 model. DeepEval is an open-source evaluation framework designed specifically for large language models, enabling developers to efficiently build, improve, test, and monitor LLM-based applications.
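To make the benchmarking step concrete: MMLU is a multiple-choice benchmark, so a score boils down to the fraction of questions where the model's chosen letter matches the answer key. The sketch below shows only that scoring arithmetic in plain Python. It does not use DeepEval, and `Question`, `format_prompt`, `constant_model`, and the two sample questions are hypothetical stand-ins, not real MMLU items or a real Qwen 2.5 call.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Question:
    """One multiple-choice item (hypothetical structure, not a DeepEval type)."""
    prompt: str
    choices: tuple[str, str, str, str]  # options A through D
    answer: str  # the correct letter, e.g. "B"


def format_prompt(q: Question) -> str:
    """Render the question and its lettered options as a single prompt string."""
    lines = [q.prompt]
    for letter, choice in zip("ABCD", q.choices):
        lines.append(f"{letter}. {choice}")
    lines.append("Answer:")
    return "\n".join(lines)


def accuracy(model: Callable[[str], str], questions: Sequence[Question]) -> float:
    """Fraction of questions where the first character of the reply is the key letter."""
    if not questions:
        raise ValueError("need at least one question")
    correct = 0
    for q in questions:
        reply = model(format_prompt(q)).strip().upper()
        if reply[:1] == q.answer:
            correct += 1
    return correct / len(questions)


def constant_model(prompt: str) -> str:
    """Stand-in for an LLM call: always answers 'B' (assumption for illustration)."""
    return "B"


# Made-up sample items, used only to exercise the scoring function.
SAMPLE = [
    Question("What is 2 + 2?", ("3", "4", "5", "6"), "B"),
    Question("Which data structure is first-in, first-out?",
             ("Stack", "Tree", "Queue", "Heap"), "C"),
]

score = accuracy(constant_model, SAMPLE)
print(f"accuracy = {score:.2f}")
```

Swapping `constant_model` for a function that calls an actual model keeps the scoring logic unchanged, which is the main reason to pass the model in as a callable.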

In these tutorials, we'll show you how to use DeepEval to improve your LLM application one step at a time. They walk you through the process of evaluating and testing your LLM applications, from initial development to post-production. Learn how to use DeepEval in Python to evaluate large language models with metrics like correctness and relevance, following a step-by-step guide with code examples. As LLMs continue to evolve, robust evaluation methodologies, such as DeepEval, are crucial for maintaining their effectiveness and addressing challenges such as bias and safety. DeepEval is an open-source evaluation framework designed to assess large language model (LLM) performance. This document provides a comprehensive guide to enabling, using, configuring, and extending DeepEval within the Litmus framework for evaluating LLM responses. What is DeepEval? DeepEval is a Python library specifically designed for evaluating the quality of responses generated by LLMs.

To explore more about LLM evaluation, check out my LLM evaluation series, where I cover key metrics, best practices, and hands-on examples for effectively testing language models. DeepEval is a simple-to-use, open-source framework for evaluating large language model systems. It is similar to pytest but specialized for unit testing LLM apps. DataCamp wrote a great tutorial on evaluating your LLM application with DeepEval from Confident AI (YC W25). Check it out below! Learn DeepEval with an interactive LLM evaluation framework tutorial that includes hands-on examples, code snippets, practical applications, and step-by-step guidance.
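The pytest comparison can be illustrated with a toy sketch: a test case bundles an input with the model's actual output, a metric turns that pair into a score between 0 and 1, and the test passes only if the score clears a threshold. Everything below is a hypothetical stand-in written in plain Python (`ToyTestCase`, `keyword_relevance`, and `assert_relevant` are not DeepEval names), and the keyword-overlap score is a deliberately crude substitute for an LLM-judged relevance metric. In DeepEval itself, the analogous pieces are `LLMTestCase`, a metric such as `AnswerRelevancyMetric`, and `assert_test`.

```python
import re
from dataclasses import dataclass

# Small stopword list so that only content words count toward the overlap.
STOPWORDS = {"a", "an", "the", "is", "of", "what", "in", "to", "and"}


@dataclass(frozen=True)
class ToyTestCase:
    """Hypothetical stand-in for a test case: the prompt and the model's reply."""
    input: str
    actual_output: str


def _content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def keyword_relevance(case: ToyTestCase) -> float:
    """Share of the question's content words that also appear in the answer (0 to 1)."""
    question_words = _content_words(case.input)
    if not question_words:
        return 0.0
    answer_words = _content_words(case.actual_output)
    return len(question_words & answer_words) / len(question_words)


def assert_relevant(case: ToyTestCase, threshold: float = 0.5) -> None:
    """Fail, pytest-style, when the score falls below the threshold."""
    score = keyword_relevance(case)
    assert score >= threshold, f"relevance {score:.2f} is below threshold {threshold}"


def test_capital_question_is_answered():
    # In a real setup, actual_output would come from the LLM application under test.
    case = ToyTestCase(
        input="What is the capital of France?",
        actual_output="The capital of France is Paris.",
    )
    assert_relevant(case, threshold=0.5)
```

Saved in a file such as `test_relevance.py` (an assumed name), this runs under plain `pytest`, which discovers the `test_` function automatically. The threshold is the design choice worth noticing: it converts a continuous quality score into the pass/fail signal a test runner expects.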
